
EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


## Line Charts: Llama-3.2 Model Performance

### Overview

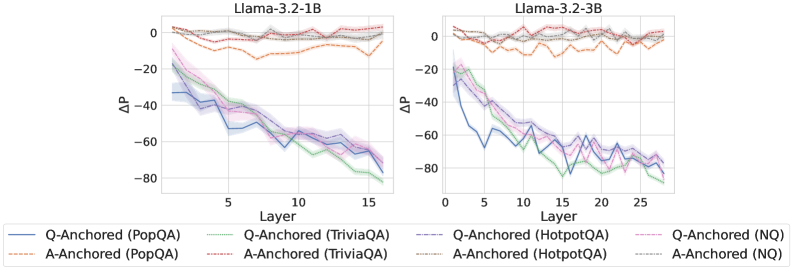

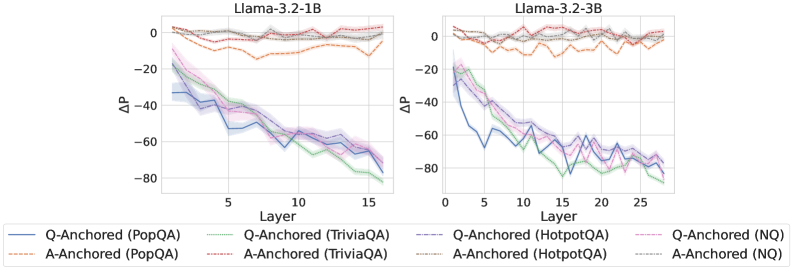

The image contains two line charts comparing the performance of Llama-3.2 models (1B and 3B) across different question-answering datasets. The charts plot the change in performance (ΔP) against the layer number of the model. Each chart displays eight data series, representing question-anchored (Q-Anchored) and answer-anchored (A-Anchored) performance on each of the PopQA, TriviaQA, HotpotQA, and NQ datasets.

### Components/Axes

**Left Chart (Llama-3.2-1B):**

* **Title:** Llama-3.2-1B

* **X-axis:** Layer, with markers at 0, 5, 10, and 15.

* **Y-axis:** ΔP, with markers at 0, -20, -40, -60, and -80.

* **Legend:** Located below the charts and shared by both.

  * Q-Anchored (PopQA): Solid Blue Line

  * A-Anchored (PopQA): Dashed Brown Line

  * Q-Anchored (TriviaQA): Dotted Green Line

  * A-Anchored (TriviaQA): Dash-Dotted Pink Line

  * Q-Anchored (HotpotQA): Dash-Dotted Blue Line

  * Q-Anchored (NQ): Dotted Pink Line

  * A-Anchored (HotpotQA): Solid Green Line

  * A-Anchored (NQ): Dotted Grey Line

**Right Chart (Llama-3.2-3B):**

* **Title:** Llama-3.2-3B

* **X-axis:** Layer, with markers at 0, 5, 10, 15, 20, and 25.

* **Y-axis:** ΔP, with markers at 0, -20, -40, -60, and -80.

* **Legend:** Shared with the left chart; the same eight entries apply.

### Detailed Analysis

**Llama-3.2-1B Chart:**

* **Q-Anchored (PopQA):** (Solid Blue Line) Starts at approximately -30 and generally decreases to around -70 by layer 15.

* **A-Anchored (PopQA):** (Dashed Brown Line) Remains relatively flat, fluctuating between 0 and -10.

* **Q-Anchored (TriviaQA):** (Dotted Green Line) Starts at approximately -30 and decreases to around -60 by layer 15.

* **A-Anchored (TriviaQA):** (Dash-Dotted Pink Line) Remains relatively flat, fluctuating between 0 and -10.

* **Q-Anchored (HotpotQA):** (Dash-Dotted Blue Line) Starts at approximately -20 and decreases to around -60 by layer 15.

* **Q-Anchored (NQ):** (Dotted Pink Line) Starts at approximately -20 and decreases to around -50 by layer 15.

* **A-Anchored (HotpotQA):** (Solid Green Line) Starts at approximately -30 and decreases to around -70 by layer 15.

* **A-Anchored (NQ):** (Dotted Grey Line) Remains relatively flat, fluctuating between 0 and -10.

**Llama-3.2-3B Chart:**

* **Q-Anchored (PopQA):** (Solid Blue Line) Starts at approximately -30 and generally decreases to around -70 by layer 25.

* **A-Anchored (PopQA):** (Dashed Brown Line) Remains relatively flat, fluctuating between 0 and -10.

* **Q-Anchored (TriviaQA):** (Dotted Green Line) Starts at approximately -30 and decreases to around -70 by layer 25.

* **A-Anchored (TriviaQA):** (Dash-Dotted Pink Line) Remains relatively flat, fluctuating between 0 and -10.

* **Q-Anchored (HotpotQA):** (Dash-Dotted Blue Line) Starts at approximately -30 and decreases to around -70 by layer 25.

* **Q-Anchored (NQ):** (Dotted Pink Line) Starts at approximately -20 and decreases to around -70 by layer 25.

* **A-Anchored (HotpotQA):** (Solid Green Line) Starts at approximately -30 and decreases to around -70 by layer 25.

* **A-Anchored (NQ):** (Dotted Grey Line) Remains relatively flat, fluctuating between 0 and -10.

### Key Observations

* The Q-Anchored lines (PopQA, TriviaQA, HotpotQA, and NQ) generally show a decreasing trend in ΔP as the layer number increases for both models.

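* The A-Anchored lines for PopQA, TriviaQA, and NQ remain relatively flat (between 0 and -10) across all layers for both models; the A-Anchored (HotpotQA) series, as read here, is the exception and declines from about -30 to about -70, in line with the Q-Anchored lines.

* The 3B chart's layer axis extends further (ticks up to 25) than the 1B chart's (ticks up to 15), reflecting the greater depth of the larger model (Llama-3.2-1B has 16 transformer layers, Llama-3.2-3B has 28).

* The drop in ΔP is more pronounced for the Q-Anchored series than for most A-Anchored series.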

### Interpretation

The charts suggest that question anchoring has an increasingly strong effect on performance as the model processes deeper layers: the decreasing ΔP for the Q-Anchored series indicates that performance degrades noticeably with layer depth when the question is the anchor, whereas answer anchoring keeps performance comparatively stable across layers. The 3B model shows the same trends as the 1B model, but its Q-Anchored decline is spread over a larger number of layers. This could imply that question-related information keeps affecting performance over a longer span of layers in the deeper 3B model.
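The point about the 3B decline being spread over more layers can be made concrete with average slopes. The sketch below is a rough check, assuming each Q-Anchored series falls in a straight line from layer 0 to the last labeled tick (15 for 1B, 25 for 3B); the start and end values are the approximate readings from the Detailed Analysis, and the names `READINGS`, `LAST_TICK`, and `slopes_by_model` are illustrative.

```python
# Approximate (start, end) ΔP readings for the Q-Anchored series, copied from
# the Detailed Analysis above. These are eyeballed values, not the paper's data.
READINGS = {
    "Llama-3.2-1B": {
        "PopQA": (-30, -70),
        "TriviaQA": (-30, -60),
        "HotpotQA": (-20, -60),
        "NQ": (-20, -50),
    },
    "Llama-3.2-3B": {
        "PopQA": (-30, -70),
        "TriviaQA": (-30, -70),
        "HotpotQA": (-30, -70),
        "NQ": (-20, -70),
    },
}

# Assumption: the "end" value was read at the last labeled x-axis tick.
LAST_TICK = {"Llama-3.2-1B": 15, "Llama-3.2-3B": 25}


def mean_slope(start: float, end: float, layers: int) -> float:
    """Average change in ΔP per layer under a straight-line approximation."""
    return (end - start) / layers


def slopes_by_model() -> dict[str, dict[str, float]]:
    """Per-layer slope of every Q-Anchored series, rounded to two decimals."""
    return {
        model: {
            dataset: round(mean_slope(start, end, LAST_TICK[model]), 2)
            for dataset, (start, end) in series.items()
        }
        for model, series in READINGS.items()
    }


if __name__ == "__main__":
    for model, slopes in slopes_by_model().items():
        print(model, slopes)
```

On these readings the 1B series fall by roughly 2.0 to 2.7 points per layer and the 3B series by roughly 1.6 to 2.0, so the 3B model reaches a similar endpoint through a shallower average decline. The straight-line assumption ignores any plateaus or bumps in the actual curves.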


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Chart: ΔP vs. Layer for Llama Models

### Overview

The image presents two line charts comparing the change in probability (ΔP) across layers for two Llama models: Llama-3.2-1B and Llama-3.2-3B. The charts display ΔP as a function of layer number, with different lines representing different anchoring methods and question-answering datasets.

### Components/Axes

* **X-axis:** Layer (ranging from approximately 0 to 15 for the 1B model and 0 to 25 for the 3B model).

* **Y-axis:** ΔP (ranging from approximately -80 to 0).

* **Models:** Llama-3.2-1B (left chart), Llama-3.2-3B (right chart).

* **Anchoring Methods:** Q-Anchored, A-Anchored.

* **Question-Answering Datasets:** PopQA, TriviaQA, HotpotQA, NQ.

* **Legend:** Located at the bottom of the image, with color-coded lines corresponding to each combination of anchoring method and dataset.

### Detailed Analysis

**Llama-3.2-1B (Left Chart)**

* **Q-Anchored (PopQA):** (Blue line) Starts at approximately -2, decreases steadily to approximately -70 at layer 15.

* **A-Anchored (PopQA):** (Orange dashed line) Starts at approximately -1, decreases to approximately -50 at layer 15.

* **Q-Anchored (TriviaQA):** (Green line) Starts at approximately -5, decreases to approximately -60 at layer 15.

* **A-Anchored (TriviaQA):** (Purple line) Starts at approximately -3, decreases to approximately -55 at layer 15.

* **Q-Anchored (HotpotQA):** (Light Blue line) Starts at approximately -1, decreases to approximately -65 at layer 15.

* **A-Anchored (HotpotQA):** (Yellow line) Starts at approximately -2, decreases to approximately -55 at layer 15.

* **Q-Anchored (NQ):** (Pink line) Starts at approximately -2, decreases to approximately -60 at layer 15.

* **A-Anchored (NQ):** (Grey line) Starts at approximately -1, decreases to approximately -50 at layer 15.

**Llama-3.2-3B (Right Chart)**

* **Q-Anchored (PopQA):** (Blue line) Starts at approximately -2, decreases to approximately -70 at layer 25.

* **A-Anchored (PopQA):** (Orange dashed line) Starts at approximately -1, decreases to approximately -50 at layer 25.

* **Q-Anchored (TriviaQA):** (Green line) Starts at approximately -5, decreases to approximately -60 at layer 25.

* **A-Anchored (TriviaQA):** (Purple line) Starts at approximately -3, decreases to approximately -55 at layer 25.

* **Q-Anchored (HotpotQA):** (Light Blue line) Starts at approximately -1, decreases to approximately -65 at layer 25.

* **A-Anchored (HotpotQA):** (Yellow line) Starts at approximately -2, decreases to approximately -55 at layer 25.

* **Q-Anchored (NQ):** (Pink line) Starts at approximately -2, decreases to approximately -60 at layer 25.

* **A-Anchored (NQ):** (Grey line) Starts at approximately -1, decreases to approximately -50 at layer 25.

In both charts, all lines generally exhibit a downward trend, indicating a decrease in ΔP as the layer number increases. The Q-Anchored lines consistently show a steeper decline than the A-Anchored lines for all datasets.
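The claim that every Q-Anchored line declines more steeply than its A-Anchored counterpart can be checked against the approximate start and end values listed above. The sketch below is a minimal check under that assumption; because the 1B and 3B charts were read with identical values, one table serves both, and the helper names are illustrative.

```python
# Approximate (start, end) ΔP readings copied from the Detailed Analysis above.
# The 1B and 3B charts were read with identical values, so one table covers both.
READINGS = {
    ("Q", "PopQA"): (-2, -70),
    ("A", "PopQA"): (-1, -50),
    ("Q", "TriviaQA"): (-5, -60),
    ("A", "TriviaQA"): (-3, -55),
    ("Q", "HotpotQA"): (-1, -65),
    ("A", "HotpotQA"): (-2, -55),
    ("Q", "NQ"): (-2, -60),
    ("A", "NQ"): (-1, -50),
}
DATASETS = ("PopQA", "TriviaQA", "HotpotQA", "NQ")


def total_change(anchor: str, dataset: str) -> int:
    """End value minus start value; more negative means a larger decline."""
    start, end = READINGS[(anchor, dataset)]
    return end - start


def q_minus_a(dataset: str) -> int:
    """Negative when the Q-Anchored series declines more than the A-Anchored one."""
    return total_change("Q", dataset) - total_change("A", dataset)


if __name__ == "__main__":
    for dataset in DATASETS:
        print(dataset, total_change("Q", dataset), total_change("A", dataset), q_minus_a(dataset))
```

Every difference comes out negative, which matches the statement, but the TriviaQA margin (about 3 points) is small relative to the precision of values read off a chart.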

### Key Observations

* The 3B model (right chart) extends to a higher layer number (25) compared to the 1B model (15).

* The Q-Anchored method consistently results in a larger negative ΔP compared to the A-Anchored method across all datasets and models.

* Among the Q-Anchored series, PopQA ends lowest (about -70); among the A-Anchored series, PopQA ends at about -50, level with NQ and above TriviaQA and HotpotQA (about -55).

* The lines representing different datasets are relatively close to each other within each anchoring method, suggesting that the anchoring method has a more significant impact on ΔP than the specific dataset.
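The two dataset observations above can be checked with the final-layer readings alone. The sketch below, assuming the approximate end values from the Detailed Analysis (identical for both charts in this reading), finds the lowest dataset per anchoring method and compares the spread among datasets with the gap between anchoring methods; the function names are illustrative.

```python
from statistics import mean

# Approximate final-layer ΔP readings from the Detailed Analysis above
# (identical for the 1B and 3B charts in this reading).
FINAL = {
    "Q": {"PopQA": -70, "TriviaQA": -60, "HotpotQA": -65, "NQ": -60},
    "A": {"PopQA": -50, "TriviaQA": -55, "HotpotQA": -55, "NQ": -50},
}


def lowest(anchor: str) -> list[str]:
    """Dataset(s) with the most negative final ΔP for one anchoring method."""
    values = FINAL[anchor]
    floor = min(values.values())
    return sorted(name for name, value in values.items() if value == floor)


def spread(anchor: str) -> int:
    """Range of final ΔP across datasets within one anchoring method."""
    values = list(FINAL[anchor].values())
    return max(values) - min(values)


def mean_gap() -> float:
    """How far the A-Anchored mean final ΔP sits above the Q-Anchored mean."""
    return mean(list(FINAL["A"].values())) - mean(list(FINAL["Q"].values()))


if __name__ == "__main__":
    print(lowest("Q"), lowest("A"), spread("Q"), spread("A"), mean_gap())
```

On these readings PopQA is the lowest only among the Q-Anchored series; among the A-Anchored series HotpotQA and TriviaQA tie for lowest. The roughly 11-point gap between anchoring methods exceeds the 5- to 10-point spread among datasets, which supports the last observation, though not by a wide margin.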

### Interpretation

The charts demonstrate how the change in probability (ΔP) evolves across layers in the Llama models, influenced by the anchoring method and the question-answering dataset used. The consistent downward trend suggests that the models' internal representations become more specialized or refined as information propagates through deeper layers.

The steeper decline observed with Q-Anchoring suggests that anchoring the query representation has a stronger effect on reducing the probability difference than anchoring the answer representation. This could imply that the query representation is more important for capturing the information needed for accurate question answering.

Because the dataset curves lie close together within each anchoring method, the differences in ΔP across datasets are modest; they may still reflect differences in the complexity or characteristics of the questions. PopQA, which shows the lowest ΔP among the Q-Anchored series, might represent a more challenging dataset for the models.

The overall pattern may reflect the models increasingly differentiating between correct and incorrect answers as information passes through deeper layers, with the anchoring method shaping this process. Because the trends are similar for both model sizes, the underlying mechanisms appear to be consistent as model capacity increases.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Line Charts: ΔP Across Layers for Llama-3.2 Models

### Overview

The image displays two side-by-side line charts comparing the performance change (ΔP) across the layers of two different-sized language models: Llama-3.2-1B (left) and Llama-3.2-3B (right). Each chart plots multiple data series representing different experimental conditions (Q-Anchored vs. A-Anchored) across four question-answering datasets (PopQA, TriviaQA, HotpotQA, NQ). The charts illustrate how the measured metric ΔP evolves as information passes through the model's layers.

### Components/Axes

* **Chart Titles:**

  * Left Chart: `Llama-3.2-1B`

  * Right Chart: `Llama-3.2-3B`

* **X-Axis (Both Charts):** Labeled `Layer`. Represents the sequential layers of the neural network.

  * Llama-3.2-1B: Ticks at 0, 5, 10, 15. The axis spans approximately layers 0 to 16.

  * Llama-3.2-3B: Ticks at 0, 5, 10, 15, 20, 25. The axis spans approximately layers 0 to 26.

* **Y-Axis (Both Charts):** Labeled `ΔP`. Represents a change in probability or performance metric. The scale ranges from approximately -80 to +10, with major ticks at -80, -60, -40, -20, 0.

* **Legend (Bottom, spanning both charts):** Contains 8 entries, differentiating lines by color and style (solid vs. dashed).

  * **Q-Anchored (Solid Lines):**

    * `Q-Anchored (PopQA)`: Blue solid line.

    * `Q-Anchored (TriviaQA)`: Green solid line.

    * `Q-Anchored (HotpotQA)`: Purple solid line.

    * `Q-Anchored (NQ)`: Pink solid line.

  * **A-Anchored (Dashed Lines):**

    * `A-Anchored (PopQA)`: Orange dashed line.

    * `A-Anchored (TriviaQA)`: Red dashed line.

    * `A-Anchored (HotpotQA)`: Brown dashed line.

    * `A-Anchored (NQ)`: Gray dashed line.

### Detailed Analysis

**Llama-3.2-1B Chart (Left):**

* **Q-Anchored Series (Solid Lines):** All four solid lines show a strong, consistent downward trend. They start near ΔP = -10 to -20 at Layer 0 and decline steadily to between -60 and -80 by Layer 16. The lines are tightly clustered, with the green (TriviaQA) and blue (PopQA) lines often at the lower bound of the cluster. Shaded regions around each line indicate variance or confidence intervals.

* **A-Anchored Series (Dashed Lines):** All four dashed lines remain relatively flat and close to ΔP = 0 across all layers. They exhibit minor fluctuations but no significant upward or downward trend. The orange (PopQA) and red (TriviaQA) dashed lines show slightly more volatility than the others, occasionally dipping to around -10.

**Llama-3.2-3B Chart (Right):**

* **Q-Anchored Series (Solid Lines):** The pattern is similar to the 1B model but extended over more layers. The downward trend begins at Layer 0 (ΔP ≈ -20) and continues to Layer 26, where values reach between -70 and -80. The decline appears slightly less linear than in the 1B model, with some minor plateaus and variations. The green (TriviaQA) line again often represents the lowest values.

* **A-Anchored Series (Dashed Lines):** Consistent with the 1B model, these lines hover near ΔP = 0 throughout all 26 layers. They show minor noise but no directional trend. The orange (PopQA) dashed line is again the most volatile within this group.

### Key Observations

1. **Fundamental Dichotomy:** There is a stark, consistent difference between the behavior of Q-Anchored and A-Anchored conditions. Q-Anchored leads to a severe, layer-dependent degradation in ΔP, while A-Anchored maintains a stable ΔP near zero.

2. **Model Size Scaling:** The core trend is preserved when scaling from the 1B to the 3B parameter model. The 3B model simply extends the layer-wise analysis further, showing the Q-Anchored decline continues predictably with depth.

3. **Dataset Similarity:** Within each anchoring condition (Q or A), the four datasets (PopQA, TriviaQA, HotpotQA, NQ) produce remarkably similar trajectories. This suggests the observed effect is robust across different data sources and not an artifact of a specific dataset.

4. **Variance:** The shaded bands are relatively narrow. If they represent variation across multiple runs or samples (the chart itself does not say which), the reported trends are consistent across them.

### Interpretation

This visualization presents a clear technical finding about the internal dynamics of Llama-3.2 models during a specific task (likely related to question answering or knowledge recall).

* **What the Data Suggests:** The metric ΔP, which likely measures the model's confidence or the probability it assigns to a correct answer, is highly sensitive to the "anchoring" method used during processing. "Q-Anchored" (possibly meaning the model's processing is conditioned heavily on the question) causes a severe, layer-by-layer erosion of this confidence. In contrast, "A-Anchored" (possibly conditioning on the answer or a different representation) preserves the initial confidence level throughout the network's depth.

* **Relationship Between Elements:** The charts indicate that this degradation is a function of network depth (layer number) and is not specific to one model size, since it appears in both the 1B and 3B variants. The consistency across datasets suggests that this is a general property of how these models process the task rather than a data-specific quirk.

* **Notable Implications:** The findings imply that for this task, the way information is "anchored" or represented as it flows through the model's layers is critical. The Q-Anchored pathway appears to suffer from a form of signal degradation or interference that accumulates with depth. This could inform techniques for improving model performance, such as modifying how question information is propagated or introducing architectural changes to stabilize representations in deeper layers. The stability of the A-Anchored condition provides a potential baseline or target for such interventions.


EXPERT: nemotron-free VERSION 2

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Line Graph: ΔP vs. Layer for Llama-3.2-1B and Llama-3.2-3B Models

### Overview

The image contains two side-by-side line graphs comparing the performance of the Q-Anchored and A-Anchored conditions across layers for two versions of the Llama-3.2 architecture (1B and 3B parameters). The y-axis represents ΔP (change in performance), and the x-axis represents the layer number. Each graph includes multiple data series differentiated by color, line style, and legend labels.

---

### Components/Axes

- **X-axis (Layer)**:

  - Llama-3.2-1B: 0 to 15 (increments of 5)

  - Llama-3.2-3B: 0 to 25 (increments of 5)

- **Y-axis (ΔP)**:

  - Range: -80 to 0 (increments of 20)

- **Legend**:

  - Positioned at the bottom of both graphs.

  - Labels include:

    - **Q-Anchored (PopQA)**: Blue solid line

    - **A-Anchored (PopQA)**: Orange dashed line

    - **Q-Anchored (TriviaQA)**: Green solid line

    - **A-Anchored (TriviaQA)**: Red dashed line

    - **Q-Anchored (HotpotQA)**: Purple solid line

    - **A-Anchored (HotpotQA)**: Gray dashed line

    - **Q-Anchored (NQ)**: Pink solid line

    - **A-Anchored (NQ)**: Black dashed line

---

### Detailed Analysis

#### Llama-3.2-1B Graph

- **Q-Anchored (PopQA)**: Starts at ~-20 (Layer 0), decreases to ~-60 (Layer 15). Trend: Steady decline.

- **A-Anchored (PopQA)**: Starts at ~0 (Layer 0), decreases to ~-20 (Layer 15). Trend: Gradual decline.

- **Q-Anchored (TriviaQA)**: Starts at ~-40 (Layer 0), decreases to ~-80 (Layer 15). Trend: Sharp decline.

- **A-Anchored (TriviaQA)**: Starts at ~-20 (Layer 0), decreases to ~-60 (Layer 15). Trend: Moderate decline.

- **Q-Anchored (HotpotQA)**: Starts at ~-60 (Layer 0), decreases to ~-80 (Layer 15). Trend: Slight decline.

- **A-Anchored (HotpotQA)**: Starts at ~-40 (Layer 0), decreases to ~-60 (Layer 15). Trend: Steady decline.

- **Q-Anchored (NQ)**: Starts at ~-80 (Layer 0), decreases to ~-100 (Layer 15; below the lowest labeled tick of -80, so this reading is uncertain). Trend: Sharp decline.

- **A-Anchored (NQ)**: Starts at ~-60 (Layer 0), decreases to ~-80 (Layer 15). Trend: Moderate decline.

#### Llama-3.2-3B Graph

- **Q-Anchored (PopQA)**: Starts at ~-10 (Layer 0), decreases to ~-50 (Layer 25). Trend: Steady decline.

- **A-Anchored (PopQA)**: Starts at ~0 (Layer 0), decreases to ~-20 (Layer 25). Trend: Gradual decline.

- **Q-Anchored (TriviaQA)**: Starts at ~-30 (Layer 0), decreases to ~-70 (Layer 25). Trend: Sharp decline.

- **A-Anchored (TriviaQA)**: Starts at ~-10 (Layer 0), decreases to ~-50 (Layer 25). Trend: Moderate decline.

- **Q-Anchored (HotpotQA)**: Starts at ~-50 (Layer 0), decreases to ~-70 (Layer 25). Trend: Slight decline.

- **A-Anchored (HotpotQA)**: Starts at ~-30 (Layer 0), decreases to ~-50 (Layer 25). Trend: Steady decline.

- **Q-Anchored (NQ)**: Starts at ~-70 (Layer 0), decreases to ~-90 (Layer 25; below the lowest labeled tick of -80, so this reading is uncertain). Trend: Sharp decline.

- **A-Anchored (NQ)**: Starts at ~-50 (Layer 0), decreases to ~-70 (Layer 25). Trend: Moderate decline.
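To check the model-size and dataset comparisons against these readings, the sketch below computes each series' total change (end minus start) and how much higher each 3B series ends than its 1B counterpart. It assumes the approximate values listed above are accurate enough to compare; the function names are illustrative.

```python
# Approximate (start, end) ΔP readings copied from the Detailed Analysis above.
# Keys are (anchoring method, dataset); values are eyeballed, not the paper's data.
READINGS = {
    "1B": {
        ("Q", "PopQA"): (-20, -60), ("A", "PopQA"): (0, -20),
        ("Q", "TriviaQA"): (-40, -80), ("A", "TriviaQA"): (-20, -60),
        ("Q", "HotpotQA"): (-60, -80), ("A", "HotpotQA"): (-40, -60),
        ("Q", "NQ"): (-80, -100), ("A", "NQ"): (-60, -80),
    },
    "3B": {
        ("Q", "PopQA"): (-10, -50), ("A", "PopQA"): (0, -20),
        ("Q", "TriviaQA"): (-30, -70), ("A", "TriviaQA"): (-10, -50),
        ("Q", "HotpotQA"): (-50, -70), ("A", "HotpotQA"): (-30, -50),
        ("Q", "NQ"): (-70, -90), ("A", "NQ"): (-50, -70),
    },
}


def drops(model: str) -> dict[tuple[str, str], int]:
    """Total change (end minus start) for every series of one model."""
    return {key: end - start for key, (start, end) in READINGS[model].items()}


def end_offset() -> dict[tuple[str, str], int]:
    """How much higher each 3B series ends than its 1B counterpart."""
    return {key: READINGS["3B"][key][1] - READINGS["1B"][key][1] for key in READINGS["1B"]}


if __name__ == "__main__":
    print(drops("1B") == drops("3B"))
    print(sorted(set(end_offset().values())))
```

On these readings every decline has the same size in both models, while the 3B curves sit about 10 points higher (the A-Anchored PopQA series is the one exception). The NQ series fall by about 20 points, compared with about 40 for the Q-Anchored PopQA series.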

---

### Key Observations

1. **General Trend**: All data series show a downward trend in ΔP as layer number increases, indicating performance degradation with deeper layers.

2. **Model Size Impact**:

   - For most series, Llama-3.2-3B (the larger model) shows ΔP values about 10 points higher (less negative) than Llama-3.2-1B at both the first and last layers, but each decline is about the same size in the two models; the larger model starts from a smaller deficit rather than degrading more slowly with depth.

3. **Dataset-Specific Patterns**:

   - **NQ (Natural Questions)**: Shows the lowest ΔP values, although its declines (about 20 points) are no larger than those of HotpotQA.

   - **PopQA**: Shows the highest (least negative) ΔP values, although its Q-Anchored decline (about 40 points) is among the largest.

4. **Q-Anchored vs. A-Anchored**:

   - The Q-Anchored series consistently show lower ΔP values than the A-Anchored series across all datasets, i.e., a larger decline, which indicates less stable performance under question anchoring.
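Because ΔP here tracks a change in performance, a lower value means a larger loss rather than greater stability. The sketch below makes the Q-versus-A comparison explicit by subtracting each A-Anchored final reading from the matching Q-Anchored one; the values are the approximate end readings from the Detailed Analysis, and `q_minus_a` is an illustrative name.

```python
# Approximate final-layer ΔP readings copied from the Detailed Analysis above.
FINAL = {
    "1B": {
        "Q": {"PopQA": -60, "TriviaQA": -80, "HotpotQA": -80, "NQ": -100},
        "A": {"PopQA": -20, "TriviaQA": -60, "HotpotQA": -60, "NQ": -80},
    },
    "3B": {
        "Q": {"PopQA": -50, "TriviaQA": -70, "HotpotQA": -70, "NQ": -90},
        "A": {"PopQA": -20, "TriviaQA": -50, "HotpotQA": -50, "NQ": -70},
    },
}


def q_minus_a(model: str) -> dict[str, int]:
    """Final Q-Anchored ΔP minus final A-Anchored ΔP for each dataset.

    A negative value means the Q-Anchored series ended lower, i.e. it lost more.
    """
    q, a = FINAL[model]["Q"], FINAL[model]["A"]
    return {dataset: q[dataset] - a[dataset] for dataset in q}


if __name__ == "__main__":
    for model in FINAL:
        print(model, q_minus_a(model))
```

Every entry is negative (about -20 for most datasets and -30 to -40 for PopQA), so on these readings question anchoring ends with the larger loss in both models.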

---

### Interpretation

The data suggest that, for every dataset (PopQA, TriviaQA, HotpotQA, NQ), the A-Anchored condition retains more of its performance across layers than the Q-Anchored condition, whose ΔP values are consistently lower. The larger Llama-3.2-3B model shows less negative ΔP values than the 1B version, although the per-series declines are of similar size, so the benefit of increased model size appears as a smaller overall deficit rather than slower degradation with depth. The NQ dataset's consistently low ΔP values suggest it may be more challenging for the models, while PopQA's higher values suggest it may be easier to handle. These trends may reflect differences in dataset complexity or in how the two anchoring conditions are set up.
