TECHNICAL ASSET FINGERPRINT

106aa70c7c286857c0b32b2a



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

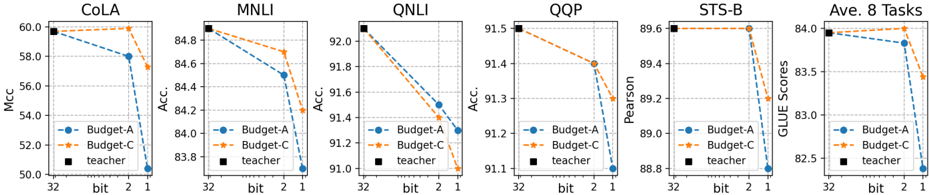

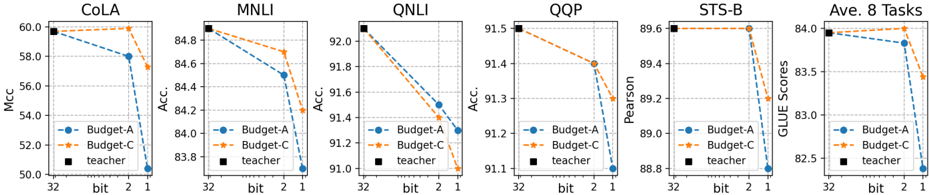

## Chart Type: Multiple Line Graphs Comparing Model Performance

### Overview

The image presents six line graphs arranged horizontally. Each compares two budget models (Budget-A and Budget-C) against a teacher model on one task or aggregate (CoLA, MNLI, QNLI, QQP, STS-B, and Average of 8 Tasks). The x-axis is the bit-width (32, 2, 1), and the y-axis is the metric specific to each task (Mcc, Accuracy, Pearson, GLUE Scores).

### Components/Axes

* **X-Axis:** "bit" with values 32, 2, and 1. The x-axis is consistent across all six graphs.

* **Y-Axis:** Varies depending on the task:

* **CoLA:** "Mcc" (Matthews correlation coefficient), scale from 50.0 to 60.0.

* **MNLI:** "Acc." (Accuracy), scale from 83.8 to 84.8.

* **QNLI:** "Acc." (Accuracy), scale from 91.0 to 92.0.

* **QQP:** "Acc." (Accuracy), scale from 91.1 to 91.5.

* **STS-B:** "Pearson", scale from 88.8 to 89.6.

* **Ave. 8 Tasks:** "GLUE Scores", scale from 82.5 to 84.0.

* **Legend:** Located below each graph, indicating:

* **Blue Line with Circle Markers:** "Budget-A"

* **Orange Dashed Line with Star Markers:** "Budget-C"

* **Black Square Marker:** "teacher"

### Detailed Analysis

**1. CoLA**

* **Budget-A (Blue):** Starts at approximately 60.0 at bit=32, decreases to approximately 58.0 at bit=2, and further decreases to approximately 50.5 at bit=1.

* **Budget-C (Orange):** Remains relatively constant at approximately 60.0 across all bit values.

* **Teacher (Black):** Constant at approximately 60.0.

**2. MNLI**

* **Budget-A (Blue):** Starts at approximately 84.8 at bit=32, decreases to approximately 84.5 at bit=2, and further decreases to approximately 83.7 at bit=1.

* **Budget-C (Orange):** Starts at approximately 84.8 at bit=32, decreases to approximately 84.7 at bit=2, and further decreases to approximately 84.2 at bit=1.

* **Teacher (Black):** Constant at approximately 84.8.

**3. QNLI**

* **Budget-A (Blue):** Starts at approximately 92.1 at bit=32, decreases to approximately 91.6 at bit=2, and further decreases to approximately 91.2 at bit=1.

* **Budget-C (Orange):** Starts at approximately 92.1 at bit=32, decreases to approximately 91.8 at bit=2, and further decreases to approximately 91.1 at bit=1.

* **Teacher (Black):** Constant at approximately 92.1.

**4. QQP**

* **Budget-A (Blue):** Starts at approximately 91.5 at bit=32, decreases to approximately 91.3 at bit=2, and further decreases to approximately 91.2 at bit=1.

* **Budget-C (Orange):** Starts at approximately 91.5 at bit=32, decreases to approximately 91.4 at bit=2, and further decreases to approximately 91.3 at bit=1.

* **Teacher (Black):** Constant at approximately 91.5.

**5. STS-B**

* **Budget-A (Blue):** Starts at approximately 89.6 at bit=32, decreases to approximately 89.3 at bit=2, and further decreases to approximately 89.1 at bit=1.

* **Budget-C (Orange):** Remains relatively constant at approximately 89.6 across all bit values.

* **Teacher (Black):** Constant at approximately 89.6.

**6. Ave. 8 Tasks**

* **Budget-A (Blue):** Starts at approximately 83.9 at bit=32, decreases to approximately 83.8 at bit=2, and further decreases to approximately 82.6 at bit=1.

* **Budget-C (Orange):** Starts at approximately 84.0 at bit=32, decreases to approximately 83.9 at bit=2, and further decreases to approximately 83.4 at bit=1.

* **Teacher (Black):** Constant at approximately 84.0.
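The readings above can be turned into per-task drops relative to the 32-bit point. The following is a minimal sketch that uses only the approximate values listed in this description; the names `readings` and `drops` are illustrative, not from the source.

```python
# Approximate readings from this description, as (32-bit, 2-bit, 1-bit).
readings = {
    "CoLA": {"Budget-A": (60.0, 58.0, 50.5), "Budget-C": (60.0, 60.0, 60.0)},
    "MNLI": {"Budget-A": (84.8, 84.5, 83.7), "Budget-C": (84.8, 84.7, 84.2)},
    "QNLI": {"Budget-A": (92.1, 91.6, 91.2), "Budget-C": (92.1, 91.8, 91.1)},
    "QQP": {"Budget-A": (91.5, 91.3, 91.2), "Budget-C": (91.5, 91.4, 91.3)},
    "STS-B": {"Budget-A": (89.6, 89.3, 89.1), "Budget-C": (89.6, 89.6, 89.6)},
    "Ave. 8 Tasks": {"Budget-A": (83.9, 83.8, 82.6), "Budget-C": (84.0, 83.9, 83.4)},
}


def drops(values):
    """Return (drop at 2 bits, drop at 1 bit) relative to the 32-bit reading."""
    full, two, one = values
    # Round to one decimal: the readings are only that precise.
    return round(full - two, 1), round(full - one, 1)


for task, models in readings.items():
    for model, values in models.items():
        d2, d1 = drops(values)
        print(f"{task:13s} {model}: drop of {d2} at 2 bits, {d1} at 1 bit")
```

Because the inputs are eyeballed from a chart, the differences are only meaningful to about one decimal place.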

### Key Observations

* The "teacher" model matches or exceeds both budget models on every task and at every bit size.

* Both "Budget-A" and "Budget-C" models generally show a decrease in performance as the bit size decreases from 32 to 1.

* The performance drop is more pronounced for "Budget-A" in some tasks (e.g., CoLA, Ave. 8 Tasks).

* For the CoLA and STS-B tasks, Budget-C maintains constant performance across all bit sizes, matching the teacher model.

### Interpretation

The graphs illustrate the impact of bit size reduction on the performance of different models across various natural language processing tasks. The "teacher" model serves as a benchmark for the achievable performance ceiling. The "Budget-A" and "Budget-C" models, presumably smaller or more efficient versions, lose performance as the bit size is reduced, indicating a trade-off between model size/efficiency and accuracy. The extent of this degradation varies with the task and the specific budget model. CoLA and STS-B are the exceptions: Budget-C holds its 32-bit performance on both, suggesting it may be more robust to bit size reduction on these tasks. Overall, the data suggests that reducing bit size can hurt model performance, and that the choice of budget model (Budget-A vs. Budget-C) influences how much.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Line Charts: Performance Comparison of Budget-A, Budget-C, and Teacher Models Across Various Tasks

### Overview

The image presents six line charts, each showing the performance of three models (Budget-A, Budget-C, and a "teacher" model) on natural language processing (NLP) tasks. The charts display how performance metrics change across bit precisions (32, 2, and 1 bit). Five charts cover individual tasks (CoLA, MNLI, QNLI, QQP, STS-B), and the sixth shows an average ("Ave. 8 Tasks").

### Components/Axes

Each chart shares the following components:

* **X-axis:** Bit precision (labeled as "bit" with markers at 32, 2, and 1).

* **Y-axis:** Performance metric (varying across charts):

* CoLA: Matthews Correlation Coefficient (Mcc)

* MNLI, QNLI, QQP: Accuracy (Acc.)

* STS-B: Pearson correlation

* Ave. 8 Tasks: GLUE Scores

* **Legend:** Located in the bottom-left corner of each chart, identifying the three models:

* Budget-A (Blue, dashed line)

* Budget-C (Orange, dashed-dotted line)

* Teacher (Black, solid line with square markers)

* **Title:** Each chart has a title indicating the specific task being evaluated (e.g., "COLA", "MNLI").
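For reference, two of the y-axis metrics named above can be computed as follows. This is a minimal pure-Python sketch of the standard definitions of the Matthews correlation coefficient and the Pearson correlation. The function names and sample inputs are illustrative and do not come from the source. Both metrics lie in [-1, 1], so the chart's axes (for example 50 to 60 for Mcc) appear to show the values multiplied by 100.

```python
import math


def matthews_corrcoef(y_true, y_pred):
    """Matthews correlation coefficient for binary labels in {0, 1}."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # By convention the coefficient is 0 when any marginal count is zero.
    return (tp * tn - fp * fn) / denom if denom else 0.0


def pearson(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Toy inputs, purely for illustration.
print(100 * matthews_corrcoef([1, 1, 0, 0], [1, 1, 0, 0]))
print(100 * pearson([1.0, 2.0, 3.0], [2.0, 4.0, 6.0]))
```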

### Detailed Analysis or Content Details

**1. CoLA:**

* The "teacher" model starts at approximately 59.5 and remains relatively flat across bit precisions.

* Budget-A starts at approximately 57.5 at 32 bit, decreases to around 56.5 at 2 bit, and further to approximately 55.5 at 1 bit.

* Budget-C starts at approximately 58.5 at 32 bit, decreases to around 57.5 at 2 bit, and further to approximately 56.5 at 1 bit.

**2. MNLI:**

* The "teacher" model starts at approximately 88.5 and remains relatively flat across bit precisions.

* Budget-A starts at approximately 84.5 at 32 bit, decreases to around 83.5 at 2 bit, and further to approximately 82.5 at 1 bit.

* Budget-C starts at approximately 85.5 at 32 bit, decreases to around 84.5 at 2 bit, and further to approximately 83.5 at 1 bit.

**3. QNLI:**

* The "teacher" model starts at approximately 92.5 and remains relatively flat across bit precisions.

* Budget-A starts at approximately 91.5 at 32 bit, decreases to around 91.0 at 2 bit, and further to approximately 90.5 at 1 bit.

* Budget-C starts at approximately 92.0 at 32 bit, decreases to around 91.5 at 2 bit, and further to approximately 91.0 at 1 bit.

**4. QQP:**

* The "teacher" model starts at approximately 95.0 and remains relatively flat across bit precisions.

* Budget-A starts at approximately 94.0 at 32 bit, decreases to around 93.5 at 2 bit, and further to approximately 93.0 at 1 bit.

* Budget-C starts at approximately 94.5 at 32 bit, decreases to around 94.0 at 2 bit, and further to approximately 93.5 at 1 bit.

**5. STS-B:**

* The "teacher" model starts at approximately 89.5 and remains relatively flat across bit precisions.

* Budget-A starts at approximately 89.0 at 32 bit, decreases to around 88.5 at 2 bit, and further to approximately 88.0 at 1 bit.

* Budget-C starts at approximately 90.0 at 32 bit, decreases to around 89.5 at 2 bit, and further to approximately 89.0 at 1 bit.

**6. Ave. 8 Tasks:**

* The "teacher" model starts at approximately 84.0 and remains relatively flat across bit precisions.

* Budget-A starts at approximately 83.0 at 32 bit, decreases to around 82.0 at 2 bit, and further to approximately 81.0 at 1 bit.

* Budget-C starts at approximately 83.5 at 32 bit, decreases to around 82.5 at 2 bit, and further to approximately 81.5 at 1 bit.

### Key Observations

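* Across nearly all tasks, the "teacher" model outperforms both Budget-A and Budget-C, and its performance is largely unaffected by changes in bit precision. The one exception in the readings above is STS-B, where Budget-C at 32 bit (≈90.0) sits slightly above the teacher (≈89.5).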

* Both Budget-A and Budget-C exhibit a decreasing trend in performance as bit precision decreases.

* Budget-C generally performs slightly better than Budget-A across all tasks and bit precisions.

* The performance drop from 32-bit to 2-bit and 1-bit is more pronounced for Budget-A and Budget-C than for the teacher model.

### Interpretation

The data suggests that reducing bit precision has a more detrimental effect on the performance of smaller models (Budget-A and Budget-C) compared to a larger, more robust model ("teacher"). This indicates that the smaller models are more sensitive to the loss of information caused by lower precision. The consistent outperformance of the teacher model highlights the benefits of model size and capacity. The relatively stable performance of the teacher model across different bit precisions suggests that it has learned more robust representations that are less susceptible to quantization errors. The slight advantage of Budget-C over Budget-A could be due to architectural differences or training procedures. Overall, the charts demonstrate a trade-off between model size/complexity and sensitivity to quantization, with larger models being more resilient to reduced precision.
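To make the "loss of information caused by lower precision" concrete, here is a minimal sketch of symmetric round-to-nearest weight quantization. It is an illustration of the general idea only: the source does not say which quantizer the Budget models use, and the function names and toy weights are hypothetical. The 32-bit point on the chart presumably denotes full floating-point precision rather than a quantizer of this kind.

```python
def quantize(weights, bits):
    """Round each weight to the nearest of 2**bits evenly spaced levels
    spanning [-max|w|, +max|w|]. Illustrative scheme, not the source's."""
    scale = max(abs(w) for w in weights)
    if scale == 0:
        return list(weights)
    levels = 2 ** bits                 # 1 bit -> 2 levels, 2 bits -> 4 levels
    step = 2 * scale / (levels - 1)    # spacing between adjacent levels
    quantized = []
    for w in weights:
        index = round((w + scale) / step)   # index of the nearest level
        quantized.append(-scale + index * step)
    return quantized


def mse(a, b):
    """Mean squared error between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


# Toy weights, purely for illustration.
weights = [-0.9, -0.4, -0.1, 0.05, 0.3, 0.8]
for bits in (8, 2, 1):
    print(bits, "bits -> reconstruction MSE", mse(weights, quantize(weights, bits)))
```

On these toy weights the reconstruction error grows as the number of levels shrinks, which is the mechanism the interpretation above appeals to.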


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED


## Multi-Chart Performance Comparison: Model Quantization Impact

### Overview

The image displays a series of six line charts arranged horizontally, comparing the performance of two model compression methods ("Budget-A" and "Budget-C") against a full-precision "teacher" model across different bit-widths (32, 2, and 1 bit). The charts track performance on five specific natural language understanding tasks (CoLA, MNLI, QNLI, QQP, STS-B) and one aggregate metric (Ave. 8 Tasks).

### Components/Axes

* **Titles (Top of each chart):** CoLA, MNLI, QNLI, QQP, STS-B, Ave. 8 Tasks.

* **X-Axis (All charts):** Labeled "bit". Markers at positions labeled "32", "2", and "1".

* **Y-Axes (Vary by chart):**

* CoLA: Labeled "Mcc". Scale from 50.0 to 60.0.

* MNLI: Labeled "Acc.". Scale from 83.8 to 84.8.

* QNLI: Labeled "Acc.". Scale from 91.0 to 92.0.

* QQP: Labeled "Acc.". Scale from 91.1 to 91.5.

* STS-B: Labeled "Pearson". Scale from 88.8 to 89.6.

* Ave. 8 Tasks: Labeled "GLUE Scores". Scale from 82.5 to 84.0.

* **Legend (Present in each chart, positioned in the lower-left or center-left):**

* `Budget-A`: Blue solid line with circle markers.

* `Budget-C`: Orange dashed line with triangle markers.

* `teacher`: Black square marker (appears only at the 32-bit position).

### Detailed Analysis

**1. CoLA (Matthews Correlation Coefficient - Mcc)**

* **Trend:** Budget-A declines sharply as bit-width decreases, while Budget-C holds near baseline at 2 bits. Both drop from 2 bits to 1 bit, and the drop is particularly severe for Budget-A.

* **Data Points (Approximate):**

* **32 bits:** Budget-A ≈ 59.8, Budget-C ≈ 59.8, teacher ≈ 59.8 (all converge).

* **2 bits:** Budget-A ≈ 58.0, Budget-C ≈ 59.8 (Budget-C maintains near-baseline performance).

* **1 bit:** Budget-A ≈ 50.5, Budget-C ≈ 57.5 (both drop significantly, Budget-C performs better).

**2. MNLI (Accuracy - Acc.)**

* **Trend:** A steady, near-linear decline for both methods from 32 to 2 bits, followed by a steeper drop to 1 bit. Budget-C consistently outperforms Budget-A at lower bit-widths.

* **Data Points (Approximate):**

* **32 bits:** Budget-A ≈ 84.8, Budget-C ≈ 84.8, teacher ≈ 84.8.

* **2 bits:** Budget-A ≈ 84.5, Budget-C ≈ 84.7.

* **1 bit:** Budget-A ≈ 83.9, Budget-C ≈ 84.2.

**3. QNLI (Accuracy - Acc.)**

* **Trend:** A consistent, steep downward slope for both methods across all bit reductions.

* **Data Points (Approximate):**

* **32 bits:** Budget-A ≈ 92.0, Budget-C ≈ 92.0, teacher ≈ 92.0.

* **2 bits:** Budget-A ≈ 91.5, Budget-C ≈ 91.6.

* **1 bit:** Budget-A ≈ 91.0, Budget-C ≈ 91.1.

**4. QQP (Accuracy - Acc.)**

* **Trend:** A moderate decline from 32 to 2 bits, followed by a sharper drop to 1 bit. The performance gap between Budget-C and Budget-A widens at lower bits.

* **Data Points (Approximate):**

* **32 bits:** Budget-A ≈ 91.5, Budget-C ≈ 91.5, teacher ≈ 91.5.

* **2 bits:** Budget-A ≈ 91.4, Budget-C ≈ 91.4.

* **1 bit:** Budget-A ≈ 91.1, Budget-C ≈ 91.3.

**5. STS-B (Pearson Correlation)**

* **Trend:** Performance is stable from 32 to 2 bits for both methods, then plummets dramatically at 1 bit.

* **Data Points (Approximate):**

* **32 bits:** Budget-A ≈ 89.6, Budget-C ≈ 89.6, teacher ≈ 89.6.

* **2 bits:** Budget-A ≈ 89.6, Budget-C ≈ 89.6.

* **1 bit:** Budget-A ≈ 88.8, Budget-C ≈ 89.0.

**6. Ave. 8 Tasks (GLUE Scores)**

* **Trend:** This aggregate view shows a gradual decline from 32 to 2 bits, followed by a very steep drop at 1 bit, summarizing the overall trend across tasks.

* **Data Points (Approximate):**

* **32 bits:** Budget-A ≈ 84.0, Budget-C ≈ 84.0, teacher ≈ 84.0.

* **2 bits:** Budget-A ≈ 83.8, Budget-C ≈ 84.0.

* **1 bit:** Budget-A ≈ 82.5, Budget-C ≈ 83.4.

### Key Observations

1. **Universal Degradation:** Performance for both compression methods decreases as model bit-width is reduced from 32 to 1 bit across all tasks.

2. **Critical Threshold:** The drop in performance from 2 bits to 1 bit is more severe than the drop from 32 bits to 2 bits in most cases, suggesting a critical loss of information or representational capacity at 1-bit quantization. In the readings above, the two steps are roughly equal only for Budget-A on QNLI and Budget-C on QQP.

3. **Method Superiority:** "Budget-C" (orange dashed line) consistently outperforms or matches "Budget-A" (blue solid line) at every reduced bit-width (2 and 1 bit) across all six charts. The gap is most pronounced at 1 bit.

4. **Task Sensitivity:** The impact varies by task. For example, STS-B shows almost no loss at 2 bits before crashing at 1 bit, while QNLI shows a steady decline. CoLA and the average score show the most dramatic relative drops at 1 bit.

5. **Baseline Convergence:** At 32 bits, all methods and the teacher model converge to the same performance point, establishing a common baseline.
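The threshold and method-comparison observations above can be checked against the approximate data points listed in this description. This is a small sketch, assuming those readings are taken at face value; `points`, `step_drops`, `not_steeper`, and `c_at_least_a` are illustrative names.

```python
# Approximate data points from this description, as (32-bit, 2-bit, 1-bit).
points = {
    "CoLA": {"Budget-A": (59.8, 58.0, 50.5), "Budget-C": (59.8, 59.8, 57.5)},
    "MNLI": {"Budget-A": (84.8, 84.5, 83.9), "Budget-C": (84.8, 84.7, 84.2)},
    "QNLI": {"Budget-A": (92.0, 91.5, 91.0), "Budget-C": (92.0, 91.6, 91.1)},
    "QQP": {"Budget-A": (91.5, 91.4, 91.1), "Budget-C": (91.5, 91.4, 91.3)},
    "STS-B": {"Budget-A": (89.6, 89.6, 88.8), "Budget-C": (89.6, 89.6, 89.0)},
    "Ave. 8 Tasks": {"Budget-A": (84.0, 83.8, 82.5), "Budget-C": (84.0, 84.0, 83.4)},
}


def step_drops(values):
    """Return (32 -> 2 bit drop, 2 -> 1 bit drop), rounded to one decimal."""
    full, two, one = values
    return round(full - two, 1), round(two - one, 1)


# Cases where the 2 -> 1 bit drop is NOT larger than the 32 -> 2 bit drop.
not_steeper = [
    (task, method)
    for task, methods in points.items()
    for method, values in methods.items()
    if step_drops(values)[1] <= step_drops(values)[0]
]

# Does Budget-C match or beat Budget-A at every reduced bit-width (2 and 1)?
c_at_least_a = all(
    c >= a
    for methods in points.values()
    for a, c in zip(methods["Budget-A"][1:], methods["Budget-C"][1:])
)

print("2->1 drop not steeper than 32->2:", not_steeper)
print("Budget-C >= Budget-A at 2 and 1 bits:", c_at_least_a)
```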

### Interpretation

This data demonstrates the trade-off between model compression (reducing bit-width) and task performance in natural language processing. The "teacher" model represents the uncompressed, full-precision baseline.

The key finding is that **moderate quantization (to 2 bits) is relatively robust**, causing only minor to moderate performance degradation (e.g., ~0.0-0.2 points on the GLUE average, and up to ~1.8 points on CoLA for Budget-A). However, **extreme quantization (to 1 bit) is considerably more detrimental**, causing larger losses (e.g., ~0.6 points on the GLUE average for Budget-C, ~1.5 for Budget-A). This suggests that 1-bit representations are insufficient to capture the necessary nuances for these language tasks.

The consistent superiority of "Budget-C" over "Budget-A" indicates that the compression algorithm or strategy used in Budget-C is more effective at preserving performance under constrained bit-widths. This could be due to better handling of weight distributions, more sophisticated quantization schemes, or superior training objectives during the compression process.

The variation in degradation slopes across tasks (e.g., the cliff-edge drop in STS-B vs. the steady decline in QNLI) implies that different linguistic tasks have different sensitivities to the precision of model weights. Tasks relying on fine-grained semantic similarity (like STS-B) may tolerate moderate precision loss until a breaking point, while tasks involving more complex inference (like QNLI) may degrade more linearly.

In summary, the charts argue for the feasibility of aggressive model compression (to 2 bits) with minimal performance loss, while cautioning against extreme 1-bit quantization. They also highlight that the choice of compression method (Budget-C vs. Budget-A) is a critical factor in maintaining performance.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Model Performance Across Tasks

### Overview

The image contains six line graphs comparing the performance of three models (Budget-A, Budget-C, and Teacher) on five natural language processing tasks (CoLA, MNLI, QNLI, QQP, STS-B) and one aggregate (Ave. 8 Tasks). Each graph plots accuracy (%) on the y-axis against model compression (bits: 32, 2, 1) on the x-axis. The graphs use dashed lines for Budget-A (blue) and Budget-C (orange) and a solid line for the Teacher model (black).

---

### Components/Axes

- **X-axis**: Labeled "bit" with discrete values: 32 (full precision), 2 (low precision), and 1 (extreme compression).

- **Y-axis**: Labeled "Acc." (Accuracy, %) with ranges varying by task:

- CoLA: 50–60%

- MNLI: 83–85%

- QNLI: 91–92%

- QQP: 91–92%

- STS-B: 88–89.6%

- Ave. 8 Tasks: 82.5–84%

- **Legend**: Positioned in the bottom-left corner of each graph, with:

- Blue dashed line: Budget-A

- Orange dashed line: Budget-C

- Black solid line: Teacher

---

### Detailed Analysis

#### CoLA

- **Budget-A**: Starts at 58% (32 bits), drops to 56% (2 bits), then 54% (1 bit).

- **Budget-C**: Starts at 59% (32 bits), drops to 57% (2 bits), then 55% (1 bit).

- **Teacher**: Flat at 58% across all bits.

#### MNLI

- **Budget-A**: Starts at 84.8% (32 bits), drops to 84.2% (2 bits), then 83.8% (1 bit).

- **Budget-C**: Starts at 84.6% (32 bits), drops to 84.0% (2 bits), then 83.6% (1 bit).

- **Teacher**: Flat at 84.8% across all bits.

#### QNLI

- **Budget-A**: Starts at 91.8% (32 bits), drops to 91.4% (2 bits), then 91.0% (1 bit).

- **Budget-C**: Starts at 91.6% (32 bits), drops to 91.2% (2 bits), then 90.8% (1 bit).

- **Teacher**: Flat at 91.8% across all bits.

#### QQP

- **Budget-A**: Starts at 91.5% (32 bits), drops to 91.3% (2 bits), then 91.1% (1 bit).

- **Budget-C**: Starts at 91.4% (32 bits), drops to 91.2% (2 bits), then 91.0% (1 bit).

- **Teacher**: Flat at 91.5% across all bits.

#### STS-B

- **Budget-A**: Starts at 89.6% (32 bits), drops to 89.4% (2 bits), then 89.2% (1 bit).

- **Budget-C**: Starts at 89.6% (32 bits), drops to 89.4% (2 bits), then 89.2% (1 bit).

- **Teacher**: Flat at 89.6% across all bits.

#### Ave. 8 Tasks

- **Budget-A**: Starts at 84% (32 bits), drops to 83.7% (2 bits), then 82.7% (1 bit).

- **Budget-C**: Starts at 84% (32 bits), drops to 83.7% (2 bits), then 83.3% (1 bit).

- **Teacher**: Flat at 84% across all bits.

---

### Key Observations

1. **Consistent Decline in Budget Models**: Both Budget-A and Budget-C show a downward trend in accuracy as model compression increases (32 → 2 → 1 bits).

2. **Teacher Model Robustness**: The Teacher model maintains stable accuracy across all tasks and compression levels, indicating superior robustness to quantization.

3. **Task-Specific Performance**:

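- QNLI and QQP show the highest accuracy (91–92%). From 32 bits to 1 bit, QNLI loses about 0.8 points and QQP about 0.4.

- STS-B and QQP exhibit the smallest accuracy drops (about 0.4 points), while CoLA shows the largest (about 4 points).

4. **Budget-C vs. Budget-A**: In the readings above, Budget-C leads on CoLA and on the 1-bit average (83.3% vs. 82.7%), Budget-A leads by about 0.2 points on MNLI, QNLI, and QQP, and the two are tied on STS-B. Both follow similar compression trends.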

---

### Interpretation

The data demonstrates that model compression (reducing bits) degrades performance for Budget-A and Budget-C. In the readings above, the steepest decline is on CoLA (about 4 points from 32 bits to 1 bit) and the smallest on QQP and STS-B (about 0.4 points). The Teacher model’s stability suggests it is less affected by quantization, likely due to its larger capacity or more robust training. This highlights a trade-off between model efficiency (via compression) and accuracy, with the Teacher model serving as a benchmark for minimal performance loss. The two budget models track each other closely on most tasks; Budget-C's clearest advantage is on the 1-bit average, which may reflect architectural or training differences that help at the most aggressive compression level.
