## Diagram: Simple Neural Network Computational Graph

### Overview

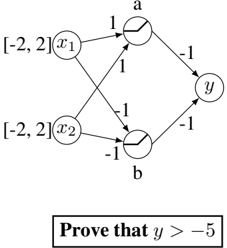

The image displays a directed graph representing a simple two-layer neural network or computational model. It consists of input nodes, intermediate (hidden) nodes, and a single output node, with weighted connections between them. A mathematical proof statement is presented below the diagram.

### Components/Axes

**Nodes (Circles):**

- **Input Layer (Left):**

  - Node labeled `x₁` (top-left)

  - Node labeled `x₂` (bottom-left)

- **Hidden Layer (Center):**

  - Node labeled `a` (top-center)

  - Node labeled `b` (bottom-center)

- **Output Layer (Right):**

  - Node labeled `y` (center-right)

**Connections (Arrows) and Weights:**

- Arrow from `x₁` to `a` with weight `1`

- Arrow from `x₂` to `a` with weight `1`

- Arrow from `x₁` to `b` with weight `-1`

- Arrow from `x₂` to `b` with weight `-1`

- Arrow from `a` to `y` with weight `-1`

- Arrow from `b` to `y` with weight `-1`

**Annotations:**

- To the left of `x₁`: Text `[-2, 2]`

- To the left of `x₂`: Text `[-2, 2]`

- Below the entire diagram: A rectangular box containing the text `Prove that y > -5`

### Detailed Analysis

**Network Computation Flow:**

1. **Node `a` Calculation:** `a = (1 * x₁) + (1 * x₂) = x₁ + x₂`

2. **Node `b` Calculation:** `b = (-1 * x₁) + (-1 * x₂) = -x₁ - x₂`

3. **Output `y` Calculation:** `y = (-1 * a) + (-1 * b) = -a - b`

**Substituting the expressions for `a` and `b`:**

`y = -(x₁ + x₂) - (-x₁ - x₂) = -x₁ - x₂ + x₁ + x₂ = 0`
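The substitution above can be checked numerically. The following is a minimal sketch, assuming the nodes are purely linear with no biases or activation functions (none are shown in the diagram); the function name `forward` and the sample grid are illustrative choices, not part of the original figure.

```python
def forward(x1: float, x2: float) -> float:
    """Evaluate the network as described: linear nodes, no bias, no activation (assumed)."""
    a = 1 * x1 + 1 * x2      # hidden node a = x1 + x2
    b = -1 * x1 + -1 * x2    # hidden node b = -x1 - x2
    y = -1 * a + -1 * b      # output node y = -a - b
    return y


# Sample a small grid over the input box [-2, 2] x [-2, 2] (illustrative points only).
grid = [-2.0, -1.0, 0.0, 1.0, 2.0]
outputs = [forward(x1, x2) for x1 in grid for x2 in grid]

# Every sampled output is zero, in line with the algebraic result.
assert all(y == 0 for y in outputs)
```

Sampling does not replace the algebraic argument; it only confirms that the arithmetic agrees with it at the chosen points.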

**Input Constraints:**

- The annotation `[-2, 2]` next to both `x₁` and `x₂` indicates that each input variable is constrained to the closed interval from -2 to 2. That is, `-2 ≤ x₁ ≤ 2` and `-2 ≤ x₂ ≤ 2`.
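For contrast with the exact result `y = 0`, the input intervals can be pushed through the network one node at a time using plain interval arithmetic. This technique is not part of the original diagram; it is shown here only as a hedged sketch, again assuming purely linear nodes. The helper names `scale` and `add` are hypothetical.

```python
from typing import Tuple

Interval = Tuple[float, float]  # (lower bound, upper bound)


def scale(iv: Interval, w: float) -> Interval:
    """Multiply an interval by a scalar weight; a negative weight swaps the bounds."""
    lo, hi = iv
    return (min(w * lo, w * hi), max(w * lo, w * hi))


def add(p: Interval, q: Interval) -> Interval:
    """Add two intervals, treating them as independent of each other."""
    return (p[0] + q[0], p[1] + q[1])


x1 = x2 = (-2.0, 2.0)                     # input constraints from the diagram

a = add(scale(x1, 1), scale(x2, 1))       # bounds on a = x1 + x2
b = add(scale(x1, -1), scale(x2, -1))     # bounds on b = -x1 - x2
y = add(scale(a, -1), scale(b, -1))       # bounds on y = -a - b

print(a, b, y)
```

Under these assumptions the node-by-node bounds come out as `a ∈ [-4, 4]`, `b ∈ [-4, 4]`, and `y ∈ [-8, 8]`. The lower bound `-8` is not enough to conclude `y > -5`, because treating `a` and `b` as independent discards the fact that `b = -a`. The algebraic simplification keeps that dependency and gives the exact value.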

### Key Observations

1. **Simplification to Zero:** With the weights shown, and assuming the nodes are purely linear (no biases or activation functions appear in the diagram), the output `y` simplifies algebraically to exactly `0` for all values of `x₁` and `x₂`. The terms cancel completely.

2. **Trivially True Proof Statement:** The text box states "Prove that y > -5". Since the network always outputs `y = 0` and `0 > -5`, the statement holds. The proof is trivial because `y` is constant: it does not depend on the inputs at all, whether inside or outside their given ranges.

3. **Antisymmetry:** The two hidden nodes are exact negations of each other: node `a` computes the sum of the inputs and node `b` computes the negated sum, so `b = -a`. Because both feed the output with the same weight `-1`, their contributions `-a` and `-b = a` cancel at `y`.
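The cancellation noted in these observations can also be read directly off the weights. As a minimal sketch, assuming the network is linear so that its two layers compose into a single matrix product, the names `W1`, `W2`, and `matmul` below are illustrative and not taken from the diagram.

```python
# Layer weights as nested lists (rows = destination nodes, columns = source nodes).
W1 = [[1, 1],      # a  <- x1, x2
      [-1, -1]]    # b  <- x1, x2
W2 = [[-1, -1]]    # y  <- a, b


def matmul(A, B):
    """Plain-Python matrix product of two nested lists."""
    return [
        [sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
        for i in range(len(A))
    ]


# Effective input-to-output weights of the whole network.
W = matmul(W2, W1)
assert W == [[0, 0]]
```

Both effective weights are zero, so neither `x₁` nor `x₂` influences `y` in this linear reading of the network.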

### Interpretation

This diagram likely serves as an educational example or a puzzle in a technical context (e.g., a textbook on neural networks, linear algebra, or logic). Its primary purpose is to demonstrate how a network's architecture and weight assignments determine its function.

The core insight is that although the inputs `x₁` and `x₂` vary over `[-2, 2]`, the weight configuration (`1, 1` into `a`; `-1, -1` into `b`; `-1, -1` into `y`) makes the output invariant: it is always `0`. The statement `y > -5` is therefore true for every admissible input, but trivially so.

This suggests a possible pedagogical point: a student might try to find the minimum of `y` by evaluating the corners of the input space (e.g., `x₁ = -2, x₂ = -2`), but the algebraic simplification makes that unnecessary. The diagram illustrates the value of analyzing a model's underlying equations before resorting to numerical exploration.