## Line Graph: Accuracy vs. Number of Operations for Different 'n' Values

### Overview

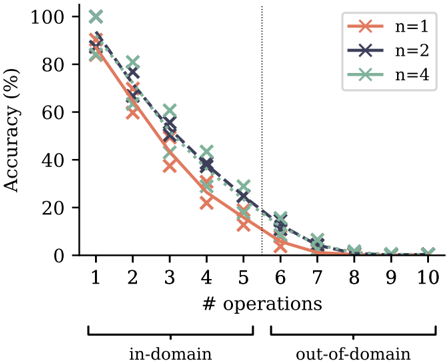

The image is a line graph plotting model accuracy (as a percentage) against the number of sequential operations performed. It compares three different model configurations, labeled by the parameter `n` (n=1, n=2, n=4). The graph is divided into two distinct domains: "in-domain" and "out-of-domain," separated by a vertical dotted line. The overall trend shows a sharp, consistent decline in accuracy as the number of operations increases for all configurations.

### Components/Axes

* **Y-Axis:** Labeled "Accuracy (%)". Scale runs from 0 to 100 in increments of 20 (0, 20, 40, 60, 80, 100).

* **X-Axis:** Labeled "# operations". Discrete integer markers from 1 to 10.

* **Domain Segmentation:** A vertical dotted line is positioned between x=5 and x=6. A bracket below the x-axis labels the region from 1 to 5 as "in-domain" and the region from 6 to 10 as "out-of-domain".

* **Legend:** Located in the top-right corner of the plot area. It defines three data series:

    * `n=1`: Represented by an orange line with 'x' markers.

    * `n=2`: Represented by a dark blue (navy) line with 'x' markers.

    * `n=4`: Represented by a green line with 'x' markers.

* **Data Series:** Three lines, each connecting 'x' markers at integer x-values from 1 to 10.

### Detailed Analysis

**Trend Verification:** All three lines decline monotonically (they never rise from one operation to the next). The slope is steepest in the "in-domain" region (operations 1-5) and flattens in the "out-of-domain" region (operations 6-10), where accuracy reaches roughly zero by operation 8 and stays there.

**Data Point Extraction (Approximate Values):**

* **n=1 (Orange):**

    * In-domain: Starts at ~90% (op 1), drops to ~60% (op 2), ~40% (op 3), ~25% (op 4), ~15% (op 5).

    * Out-of-domain: ~5% (op 6), ~2% (op 7), ~0% (op 8), ~0% (op 9), ~0% (op 10).

* **n=2 (Dark Blue):**

    * In-domain: Starts at ~95% (op 1), drops to ~70% (op 2), ~50% (op 3), ~35% (op 4), ~20% (op 5).

    * Out-of-domain: ~10% (op 6), ~5% (op 7), ~0% (op 8), ~0% (op 9), ~0% (op 10).

* **n=4 (Green):**

    * In-domain: Starts at ~100% (op 1), drops to ~80% (op 2), ~60% (op 3), ~40% (op 4), ~25% (op 5).

    * Out-of-domain: ~15% (op 6), ~5% (op 7), ~0% (op 8), ~0% (op 9), ~0% (op 10).

**Cross-Reference & Spatial Grounding:** The legend is positioned in the top-right, clear of the data lines. The color and marker for each series are consistent throughout the plot. From operation 1 through operation 6, the vertical ordering of the points is the same at every x-value: the green line (`n=4`) is highest, followed by the dark blue line (`n=2`), and then the orange line (`n=1`). At operation 7 the `n=4` and `n=2` points roughly coincide (~5%) with `n=1` slightly below (~2%), and from operation 8 onward all three converge at ~0%.
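The trend and ordering checks above can be reproduced from the approximate readings. The following is a minimal sketch: the values are the eyeballed percentages from the list above (not exact data), and the names `ACCURACY`, `is_non_increasing`, and `strictly_ordered_ops` are hypothetical helpers introduced only for illustration.

```python
# Approximate readings transcribed from the "Data Point Extraction" list.
# Index 0 is operation 1, index 9 is operation 10. These are eyeballed
# percentages, not exact data.
ACCURACY = {
    1: [90, 60, 40, 25, 15, 5, 2, 0, 0, 0],
    2: [95, 70, 50, 35, 20, 10, 5, 0, 0, 0],
    4: [100, 80, 60, 40, 25, 15, 5, 0, 0, 0],
}


def is_non_increasing(values):
    """Return True if no reading is larger than the one before it."""
    return all(later <= earlier for earlier, later in zip(values, values[1:]))


def strictly_ordered_ops(acc):
    """Return the operation counts where n=4 > n=2 > n=1 holds strictly."""
    return [
        op
        for op, (a1, a2, a4) in enumerate(zip(acc[1], acc[2], acc[4]), start=1)
        if a4 > a2 > a1
    ]


if __name__ == "__main__":
    for n, values in ACCURACY.items():
        print(f"n={n}: non-increasing = {is_non_increasing(values)}")
    print("strict n=4 > n=2 > n=1 at ops:", strictly_ordered_ops(ACCURACY))
```

With these readings, every series is non-increasing and the strict ordering holds at operations 1 through 6. Because the inputs are visual estimates, ties such as the one at operation 7 should be read as "indistinguishable on the plot" rather than exactly equal.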

### Key Observations

1. **Universal Performance Degradation:** Accuracy for all configurations decays rapidly with an increasing number of operations. No configuration stays above ~50% past operation 3, and by operation 5 all are at or below ~25%.

2. **Domain Shift Impact:** By the last in-domain point (operation 5), all configurations have already fallen to ~15-25%, and at the first out-of-domain point (operation 6) they sit at ~5-15%. The most significant performance loss (about 75 percentage points for each configuration) occurs *within* the in-domain region.

3. **Parameter `n` Effect:** Higher `n` values provide a consistent accuracy advantage over lower values through operation 6. The gap between n=4 and n=1 is ~10 percentage points at op 1, widens to ~20 points at ops 2-3, narrows to ~10 points at ops 5-6, and vanishes once all series approach zero.

4. **Convergence to Zero:** By operation 8, all models have effectively reached 0% accuracy, and this persists through operation 10.
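The figures behind these observations can be derived from the approximate readings in the Detailed Analysis section. This is a small sketch under that assumption: the inputs are visual estimates, and `gaps`, `in_domain_drop`, and `first_all_zero_op` are hypothetical helper names, not part of any source material.

```python
# Approximate readings (percent) for operations 1..10, eyeballed from the plot.
ACCURACY = {
    1: [90, 60, 40, 25, 15, 5, 2, 0, 0, 0],
    2: [95, 70, 50, 35, 20, 10, 5, 0, 0, 0],
    4: [100, 80, 60, 40, 25, 15, 5, 0, 0, 0],
}
IN_DOMAIN_OPS = 5  # operations 1-5 are labeled "in-domain" on the x-axis


def gaps(acc, high=4, low=1):
    """Per-operation accuracy gap, in percentage points, between two n values."""
    return [h - l for h, l in zip(acc[high], acc[low])]


def in_domain_drop(values, boundary=IN_DOMAIN_OPS):
    """Accuracy lost between operation 1 and the last in-domain operation."""
    return values[0] - values[boundary - 1]


def first_all_zero_op(acc):
    """First operation count at which every series reads 0, or None."""
    n_ops = len(next(iter(acc.values())))
    for i in range(n_ops):
        if all(series[i] == 0 for series in acc.values()):
            return i + 1
    return None


if __name__ == "__main__":
    print("n=4 minus n=1 gap per op:", gaps(ACCURACY))
    for n, values in ACCURACY.items():
        print(f"n={n}: in-domain drop = {in_domain_drop(values)} points")
    print("all series at 0 from op:", first_all_zero_op(ACCURACY))
```

On these readings the n=4 versus n=1 gap per operation is 10, 20, 20, 15, 10, 10, 3 points and then 0, each series loses 75 points between operations 1 and 5, and all three read 0 from operation 8.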

### Interpretation

This graph demonstrates a fundamental limitation in the evaluated system's ability to maintain performance through sequential reasoning or multi-step tasks. The steep decline, which flattens only as accuracy nears zero, is consistent with an error accumulation or compounding effect where each additional operation significantly reduces the probability of a correct final outcome.
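The compounding reading can be made concrete with a toy model. The sketch below assumes, purely for illustration, that each operation succeeds independently with the same probability `p`, so accuracy after `k` operations is `p ** k`; the graph itself does not state this, and `compounded_accuracy` and `step_ratios` are hypothetical names. The second helper computes the ratio between consecutive readings, which would be constant under pure geometric decay.

```python
# Approximate in-domain readings (percent) for n=1, operations 1..5,
# eyeballed from the plot rather than taken from exact data.
N1_IN_DOMAIN = [90, 60, 40, 25, 15]


def compounded_accuracy(p, k):
    """Accuracy (%) after k operations if each one succeeds independently
    with probability p. This independence is an assumption of the sketch."""
    return 100.0 * p ** k


def step_ratios(values):
    """Ratio of each reading to the previous one, skipping zero readings."""
    return [
        later / earlier
        for earlier, later in zip(values, values[1:])
        if earlier > 0
    ]


if __name__ == "__main__":
    # Toy model: even a 90% per-operation success rate compounds quickly.
    for k in (1, 5, 10):
        print(f"p=0.9, k={k}: {compounded_accuracy(0.9, k):.1f}%")
    # Observed per-step retention for the n=1 series.
    print([round(r, 2) for r in step_ratios(N1_IN_DOMAIN)])
```

For the n=1 in-domain readings the step ratios fall between 0.60 and 0.67, so each added operation retains roughly the same fraction of the previous accuracy. The readings are too coarse to fit a model with any confidence, and the other series can be checked the same way.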

The parameter `n` likely represents a model capacity or ensemble size factor. While increasing `n` improves baseline accuracy and slows the rate of decay slightly, it does not change the fundamental trajectory toward zero. This implies that simply scaling this parameter is insufficient to solve the core problem of robust multi-step inference.

The "in-domain" vs. "out-of-domain" split is somewhat misleading in its visual emphasis, as the catastrophic failure is already well underway before the domain shift occurs. The primary takeaway is not the difference between domains, but the universal and severe degradation with task complexity (number of operations). This pattern is characteristic of systems lacking robust compositional generalization or those prone to cascading errors.