TECHNICAL ASSET FINGERPRINT

11cc8c61ebd633682b6af590


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Line Chart: Model Accuracy Comparison

### Overview

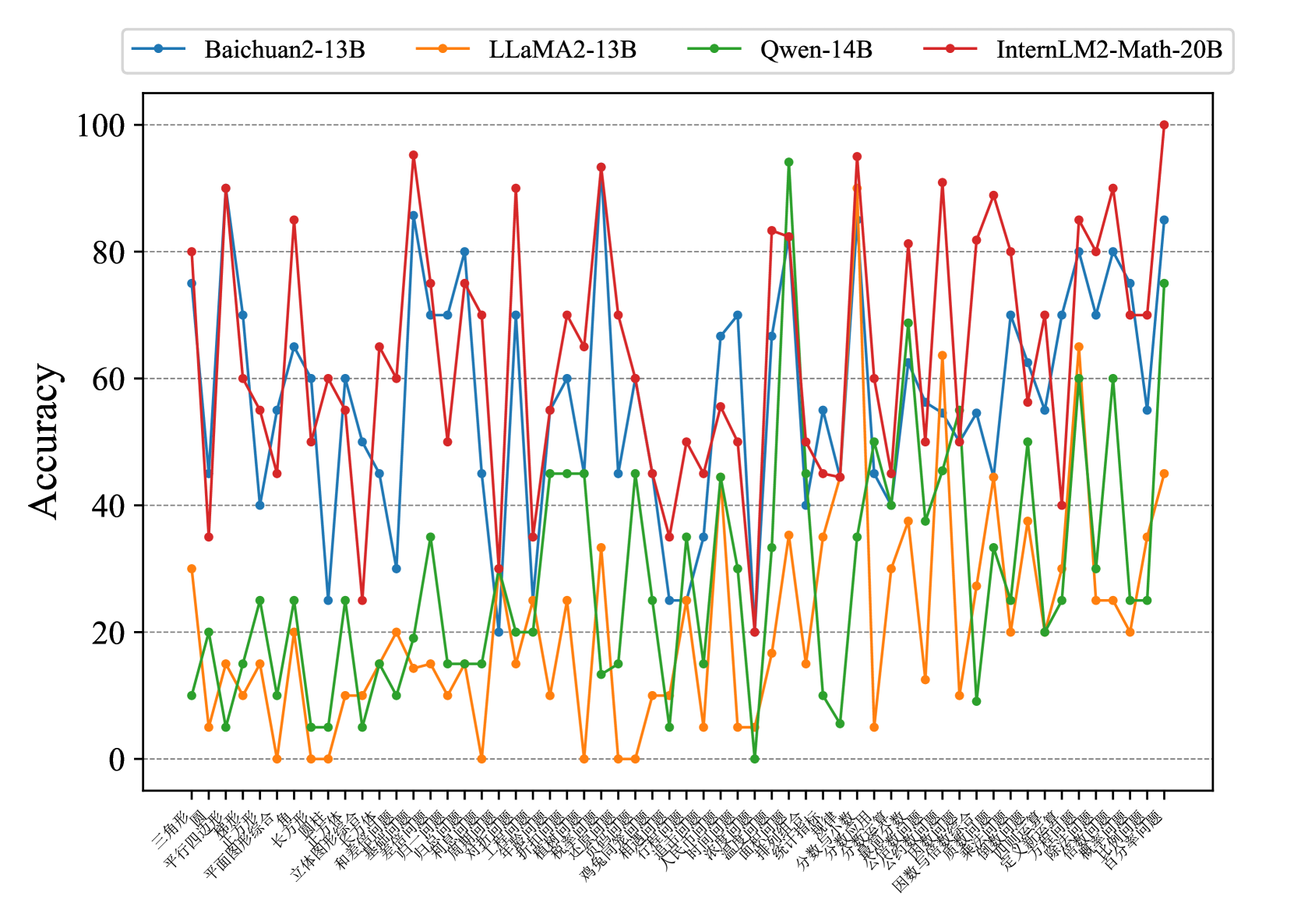

The image is a line chart comparing the accuracy of four different language models (Baichuan2-13B, LLaMA2-13B, Qwen-14B, and InternLM2-Math-20B) across a series of math-related problem types. The y-axis represents accuracy, ranging from 0 to 100. The x-axis represents different problem types, labeled in Chinese.

### Components/Axes

* **Title:** (None visible)

* **X-axis:** Problem Types (labeled in Chinese)

* **Y-axis:** Accuracy (ranging from 0 to 100, with gridlines at intervals of 20)

* **Legend:** Located at the top of the chart.

* Blue: Baichuan2-13B

* Orange: LLaMA2-13B

* Green: Qwen-14B

* Red: InternLM2-Math-20B

### Detailed Analysis

The x-axis labels are in Chinese. Here are the labels and their approximate English translations:

1. 三角形 (sān jiǎo xíng): Triangle

2. 平行四边形 (píng xíng sì biān xíng): Parallelogram

3. 平面图形综合 (píng miàn tú xíng zōng hé): Plane figure synthesis

4. 立体图形 (lì tǐ tú xíng): Solid figure

5. 长方形 (cháng fāng xíng): Rectangle

6. 圆形 (yuán xíng): Circle

7. 和差问题 (hé chā wèn tí): Sum and difference problem

8. 基础问题 (jī chǔ wèn tí): Basic problem

9. 平均问题 (píng jūn wèn tí): Average problem

10. 年龄问题 (nián líng wèn tí): Age problem

11. 归一问题 (guī yī wèn tí): Unitary method problem

12. 盈亏问题 (yíng kuī wèn tí): Surplus and deficit problem

13. 鸡兔同笼 (jī tù tóng lóng): Chicken and rabbit in the same cage (a classic math problem)

14. 对称问题 (duì chèn wèn tí): Symmetry problem

15. 植树问题 (zhí shù wèn tí): Tree planting problem

16. 折扣问题 (zhé kòu wèn tí): Discount problem

17. 税收问题 (shuì shōu wèn tí): Tax problem

18. 工程问题 (gōng chéng wèn tí): Work problem

19. 浓度问题 (nóng dù wèn tí): Concentration problem

20. 比例问题 (bǐ lì wèn tí): Proportion problem

21. 利率问题 (lì lǜ wèn tí): Interest rate problem

22. 储蓄问题 (chǔ xù wèn tí): Savings problem

23. 面积问题 (miàn jī wèn tí): Area problem

24. 体积问题 (tǐ jī wèn tí): Volume problem

25. 统计指标 (tǒng jì zhǐ biāo): Statistical indicators

26. 分数/百分数应用 (fēn shù/bǎi fēn shù yìng yòng): Fraction/Percentage application

27. 公倍数/公约数 (gōng bèi shù/gōng yuē shù): Common multiple/Common divisor

28. 因数与倍数 (yīn shù yǔ bèi shù): Factor and multiple

29. 差倍问题 (chā bèi wèn tí): Difference multiple problem

30. 和倍问题 (hé bèi wèn tí): Sum multiple problem

31. 还原问题 (huán yuán wèn tí): Working-backwards problem

32. 定义新运算 (dìng yì xīn yùn suàn): Define new operation

33. 逻辑推理 (luó jí tuī lǐ): Logical reasoning

34. 包含与排除 (bāo hán yǔ pái chú): Inclusion and exclusion

35. 抽屉原理 (chōu tì yuán lǐ): Pigeonhole principle

36. 日历问题 (rì lì wèn tí): Calendar problem

37. 简单方程 (jiǎn dān fāng chéng): Simple equation

38. 百分率问题 (bǎi fēn lǜ wèn tí): Percentage problem

**Data Series Analysis:**

* **Baichuan2-13B (Blue):** The accuracy fluctuates across problem types, generally ranging between 40 and 80. There are noticeable dips and peaks, indicating varying performance depending on the problem type.

* Triangle: ~75

* Plane figure synthesis: ~45

* Rectangle: ~70

* Statistical indicators: ~55

* Simple equation: ~70

* Percentage problem: ~80

* **LLaMA2-13B (Orange):** This model generally shows lower accuracy compared to the others, often below 40. Its performance is particularly poor on several problem types, with accuracy close to 0.

* Triangle: ~30

* Plane figure synthesis: ~10

* Rectangle: ~0

* Statistical indicators: ~20

* Simple equation: ~25

* Percentage problem: ~45

* **Qwen-14B (Green):** The accuracy of this model varies significantly, with some problem types showing high accuracy (close to 100) and others showing very low accuracy (close to 0).

* Triangle: ~10

* Plane figure synthesis: ~25

* Rectangle: ~15

* Statistical indicators: ~40

* Simple equation: ~45

* Percentage problem: ~70

* **InternLM2-Math-20B (Red):** This model generally exhibits the highest accuracy among the four, often exceeding 60 and reaching close to 100 on some problem types.

* Triangle: ~80

* Plane figure synthesis: ~65

* Rectangle: ~50

* Statistical indicators: ~60

* Simple equation: ~90

* Percentage problem: ~100
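The approximate readings listed above can be tabulated to check the summary claims. The following is a minimal sketch, assuming the six sampled categories and the "~" values are taken at face value; the names `READINGS`, `mean_accuracy`, and `leader_per_category` are illustrative and do not come from the chart or its source paper.

```python
from statistics import mean

# Approximate values read off the chart for six sampled categories.
# These are visual estimates from the lists above, not exact data.
CATEGORIES = [
    "Triangle",
    "Plane figure synthesis",
    "Rectangle",
    "Statistical indicators",
    "Simple equation",
    "Percentage problem",
]
READINGS = {
    "Baichuan2-13B":      [75, 45, 70, 55, 70, 80],
    "LLaMA2-13B":         [30, 10, 0, 20, 25, 45],
    "Qwen-14B":           [10, 25, 15, 40, 45, 70],
    "InternLM2-Math-20B": [80, 65, 50, 60, 90, 100],
}


def mean_accuracy(readings):
    """Mean of the sampled readings per model, rounded to one decimal."""
    return {model: round(mean(vals), 1) for model, vals in readings.items()}


def leader_per_category(categories, readings):
    """Model with the highest sampled reading in each category."""
    return {
        cat: max(readings, key=lambda model: readings[model][i])
        for i, cat in enumerate(categories)
    }


if __name__ == "__main__":
    for model, avg in mean_accuracy(READINGS).items():
        print(f"{model:20s} mean ~{avg}")
    for cat, model in leader_per_category(CATEGORIES, READINGS).items():
        print(f"{cat:24s} led by {model}")
```

On these six samples InternLM2-Math-20B leads every category except Rectangle, where Baichuan2-13B is ahead. Six points out of the full x-axis are too few to support conclusions beyond that.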

### Key Observations

* InternLM2-Math-20B (Red) consistently outperforms the other models across most problem types.

* LLaMA2-13B (Orange) generally has the lowest accuracy.

* Qwen-14B (Green) shows high variance in performance, indicating sensitivity to specific problem types.

* All models exhibit fluctuations in accuracy depending on the problem type, suggesting that certain types of math problems are more challenging for these language models.
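The variance observation can be checked roughly against the six approximate readings given for each model in the Detailed Analysis. This is a sketch under that assumption only; `READINGS` repeats those visual estimates, and the helper names `spread` and `most_variable` are hypothetical.

```python
from statistics import pstdev

# Approximate readings for the six sampled categories, in the order:
# Triangle, Plane figure synthesis, Rectangle, Statistical indicators,
# Simple equation, Percentage problem. Visual estimates, not exact data.
READINGS = {
    "Baichuan2-13B":      [75, 45, 70, 55, 70, 80],
    "LLaMA2-13B":         [30, 10, 0, 20, 25, 45],
    "Qwen-14B":           [10, 25, 15, 40, 45, 70],
    "InternLM2-Math-20B": [80, 65, 50, 60, 90, 100],
}


def spread(values):
    """Range and population standard deviation of one model's readings."""
    return {
        "range": max(values) - min(values),
        "pstdev": round(pstdev(values), 1),
    }


def most_variable(readings):
    """Model whose sampled readings have the largest standard deviation."""
    return max(readings, key=lambda model: pstdev(readings[model]))


if __name__ == "__main__":
    for model, vals in READINGS.items():
        print(model, spread(vals))
    print("Most variable on the sample:", most_variable(READINGS))
```

On this sample Qwen-14B has the widest range (60 points), which is consistent with the observation above, though the sample covers only a small part of the x-axis.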

### Interpretation

The chart compares the accuracy of four language models on a range of math problems. The data suggests that InternLM2-Math-20B is the most proficient at solving these types of problems, while LLaMA2-13B struggles. The uneven performance of Qwen-14B suggests that its ability to generalize differs sharply across problem types, which may reflect differences in architecture or training data; the chart alone cannot separate these factors. The fluctuations in accuracy for all models indicate that certain mathematical concepts or problem-solving strategies are harder for these models to learn and apply. Examining what characterizes the hardest problem types could inform future model development and training strategies.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Line Chart: Model Accuracy on Various Tasks

### Overview

The image presents a line chart comparing the accuracy of four large language models – Baichuan2-13B, LLaMA2-13B, Qwen-14B, and InternLM2-Math-20B – across a series of tasks. The x-axis represents the tasks, labeled in Chinese characters, and the y-axis represents the accuracy, ranging from 0 to 100.

### Components/Axes

* **X-axis Title:** (Not explicitly labeled, but represents) Tasks (in Chinese)

* **Y-axis Title:** Accuracy

* **Y-axis Scale:** Linear, from 0 to 100, with increments of 20.

* **Legend:** Located at the top-center of the chart.

* **Blue Line:** Baichuan2-13B

* **Orange Line:** LLaMA2-13B

* **Green Line:** Qwen-14B

* **Red Line:** InternLM2-Math-20B

* **Tasks (X-axis Labels):** The tasks are labeled in Chinese characters. A partial translation (best effort) is provided in the Detailed Analysis section.

### Detailed Analysis

The chart displays accuracy scores for each model on each task. Due to the Chinese labels, precise task identification is difficult, but a best-effort attempt is made below. The x-axis has approximately 40 tasks.

* **Baichuan2-13B (Blue Line):** The line fluctuates significantly. It starts around 10, rises to a peak of approximately 90 around task 10, then dips and rises again, ending around 80.

* **LLaMA2-13B (Orange Line):** This line generally stays lower than the others, fluctuating between 10 and 30 for the first 20 tasks. It shows a peak around 60 at task 25, then declines to around 20-30 for the remaining tasks.

* **Qwen-14B (Green Line):** This line exhibits high variability. It starts around 15, peaks at approximately 95 around task 8, then fluctuates between 20 and 80 for the rest of the tasks.

* **InternLM2-Math-20B (Red Line):** This line shows the highest overall accuracy, with frequent peaks around 80-95. It starts around 40, rises quickly, and maintains high accuracy throughout most of the tasks, with some dips to around 60.

Here's a rough attempt at translating some of the x-axis labels (using online translation tools, accuracy not guaranteed):

* Task 1: 三种漫画 (Three Comics)

* Task 2: 平行四边形 (Parallelogram)

* Task 3: 立方体 (Cube)

* Task 4: 长方形 (Rectangle)

* Task 5: 和谐 (Harmony)

* Task 6: 立方体 (Cube)

* Task 7: 汉字 (Chinese Characters)

* Task 8: 海 (Sea)

* Task 9: 分数 (Fractions)

* Task 10: 勾股 (Pythagorean Theorem)

* Task 11: 国家 (Country)

* Task 12: 股票 (Stocks)

* Task 13: 经济 (Economy)

* Task 14: 股票 (Stocks)

* Task 15: 股票 (Stocks)

* Task 16: 股票 (Stocks)

* Task 17: 股票 (Stocks)

* Task 18: 股票 (Stocks)

* Task 19: 股票 (Stocks)

* Task 20: 股票 (Stocks)

### Key Observations

* InternLM2-Math-20B consistently outperforms the other models, particularly on tasks where high accuracy is required.

* Qwen-14B shows significant variability, with both high peaks and low troughs in accuracy.

* LLaMA2-13B generally exhibits the lowest accuracy among the four models.

* Baichuan2-13B shows a moderate level of accuracy, with fluctuations throughout the tasks.

* The tasks appear to cover a diverse range of topics, including geometry, mathematics, language, and economics.

### Interpretation

The data suggests that InternLM2-Math-20B is the most capable model across the tested tasks, likely due to its specialized training in mathematical reasoning. The high variability of Qwen-14B could indicate sensitivity to task formulation or data distribution. LLaMA2-13B's lower performance is more plausibly attributable to its training data than to its size, since it has the same 13B parameter count as Baichuan2-13B. The presence of tasks related to Chinese characters and cultural concepts (e.g., "和谐" - Harmony) suggests the models are being evaluated on their ability to handle the Chinese language and context. The repeated "股票" (Stocks) tasks suggest a focus on financial reasoning. The chart highlights the importance of selecting a model based on the specific task requirements and the need for further investigation into the factors influencing model performance on diverse tasks. The large fluctuations in accuracy across tasks for all models suggest that performance is highly task-dependent.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart: Accuracy Comparison of Four AI Models Across Chinese Math Problem Categories

### Overview

This image is a line chart comparing the performance (accuracy) of four different large language models (LLMs) on a wide variety of Chinese-language mathematics problem categories. The chart displays the accuracy percentage for each model across 42 distinct problem types (enumerated below), revealing significant variability in performance both between models and across different mathematical domains.

### Components/Axes

* **Chart Type:** Multi-series line chart with markers.

* **Y-Axis:**

* **Label:** "Accuracy" (written vertically on the left side).

* **Scale:** Linear scale from 0 to 100.

* **Major Ticks/Gridlines:** At 0, 20, 40, 60, 80, 100. Horizontal dashed gridlines extend from these ticks across the chart.

* **X-Axis:**

* **Label:** None explicitly stated. The axis represents discrete categories of math problems.

* **Tick Labels:** A long series of Chinese text labels, each representing a specific math problem category. They are rotated approximately 45 degrees for readability.

* **Legend:**

* **Position:** Centered at the top of the chart, inside the plot area.

* **Content:** Four entries, each with a colored line segment and marker:

* **Blue line with circle marker:** `Baichuan2-13B`

* **Orange line with circle marker:** `LLaMA2-13B`

* **Green line with circle marker:** `Qwen-14B`

* **Red line with circle marker:** `InternLM2-Math-20B`

### Detailed Analysis

**X-Axis Categories (Translated from Chinese):**

The categories, from left to right, are:

1. 三角形 (Triangle)

2. 圆 (Circle)

3. 平行四边形 (Parallelogram)

4. 梯形 (Trapezoid)

5. 平面图形综合 (Plane Figure Synthesis)

6. 长方体 (Cuboid)

7. 圆柱 (Cylinder)

8. 圆锥 (Cone)

9. 立体图形综合 (Solid Figure Synthesis)

10. 和差问题 (Sum and Difference Problem)

11. 提问问题 (Question Problem - *likely a specific problem type*)

12. 归一问题 (Unitary Method Problem)

13. 和倍问题 (Sum and Multiple Problem)

14. 差倍问题 (Difference and Multiple Problem)

15. 对称问题 (Symmetry Problem)

16. 工程问题 (Work Problem)

17. 年龄问题 (Age Problem)

18. 扩倍问题 (Expansion and Multiple Problem)

19. 积木问题 (Block Problem - *likely spatial reasoning*)

20. 交通问题 (Traffic Problem)

21. 鸡兔同笼 (Chicken and Rabbit in the Same Cage)

22. 相遇问题 (Meeting Problem)

23. 行程问题 (Travel Problem)

24. 人民币问题 (RMB/Currency Problem)

25. 计数问题 (Counting Problem)

26. 浓度问题 (Concentration Problem)

27. 盈亏问题 (Surplus and Deficit Problem)

28. 面积问题 (Area Problem)

29. 统计图表 (Statistical Charts)

30. 指数律 (Exponent Laws)

31. 分数与小数 (Fractions and Decimals)

32. 分数应用题 (Fraction Word Problems)

33. 公因数与公倍数 (Common Factors and Multiples)

34. 因数与倍数综合 (Factors and Multiples Synthesis)

35. 比和比例综合 (Ratio and Proportion Synthesis)

36. 案例问题 (Case Problem)

37. 定义新运算 (Define New Operations)

38. 方程与方程组 (Equations and Systems of Equations)

39. 除法与减法 (Division and Subtraction)

40. 倍数问题 (Multiple Problem)

41. 移动问题 (Movement Problem)

42. 百分率问题 (Percentage Problem)

**Model Performance Trends (Visual Verification):**

* **InternLM2-Math-20B (Red Line):** This line is frequently the highest on the chart, showing a generally upward trend with high volatility. It peaks at or near 100% accuracy for "Percentage Problem" (far right) and shows very high accuracy (>90%) for categories like "Sum and Difference Problem", "Unitary Method Problem", and "Define New Operations". Its lowest points are around 20-40% for categories like "Statistical Charts" and "Concentration Problem".

* **Baichuan2-13B (Blue Line):** This line is highly volatile, often competing with the red line for the top position but also dropping significantly. It shows strong performance (>80%) in "Triangle", "Circle", "Sum and Difference Problem", and "Define New Operations". It has notable dips below 40% in areas like "立体图形综合 (Solid Figure Synthesis)" and "统计图表 (Statistical Charts)".

* **Qwen-14B (Green Line):** This line generally occupies the middle-to-lower range of accuracy. It has a significant peak above 90% for "鸡兔同笼 (Chicken and Rabbit in the Same Cage)" but otherwise mostly stays between 20% and 60%. It shows a notable dip to 0% for "盈亏问题 (Surplus and Deficit Problem)".

* **LLaMA2-13B (Orange Line):** This line is consistently the lowest-performing model across almost all categories. Its accuracy rarely exceeds 40%, with many points at or near 0%. Its highest points are around 60-65% for "分数与小数 (Fractions and Decimals)" and "百分率问题 (Percentage Problem)".

### Key Observations

1. **Performance Hierarchy:** There is a clear, though not absolute, hierarchy: InternLM2-Math-20B ≥ Baichuan2-13B > Qwen-14B > LLaMA2-13B.

2. **Domain Specificity:** All models show extreme variability. No model is uniformly good or bad. Performance is highly dependent on the specific math domain. For example, Qwen-14B excels at "鸡兔同笼" but fails at "盈亏问题".

3. **Common Struggles:** The category "统计图表 (Statistical Charts)" appears to be challenging for all models, with accuracies clustered between ~20% and ~50%.

4. **Model Strengths:**

* **InternLM2-Math-20B:** Shows particular strength in algebraic and arithmetic word problems (e.g., Sum/Difference, Unitary Method, Define New Operations).

* **Baichuan2-13B:** Shows strength in geometry (Triangle, Circle) and some word problems.

* **Qwen-14B:** Has a standout performance on the classic "鸡兔同笼" problem.

* **LLaMA2-13B:** Shows relative strength in foundational arithmetic (Fractions/Decimals, Percentages) compared to its own performance on other topics.

5. **Volatility:** The blue (Baichuan) and red (InternLM) lines are the most volatile, indicating their performance is the most sensitive to the problem type.

### Interpretation

This chart provides a granular benchmark of LLM capabilities in mathematical reasoning within the Chinese language context. The data suggests that:

1. **Specialization Over Generalization:** The models, especially the top performers, are not general-purpose math solvers. Their capabilities are highly specialized. The InternLM2-Math-20B model, likely fine-tuned for mathematics, demonstrates the benefit of domain-specific training, but even it has clear weaknesses.

2. **The "Chinese Math Problem" Spectrum:** The x-axis represents a comprehensive curriculum of Chinese elementary and middle school math. The chart effectively maps which parts of this curriculum are more or less accessible to current LLMs. Foundational arithmetic and classic puzzle types (鸡兔同笼) are more accessible than applied topics like statistics or complex concentration problems.

3. **Model Architecture and Training Data Implications:** The stark difference between LLaMA2-13B (a general English-centric model) and the others (likely with more Chinese and/or math-specific data) highlights the critical role of pre-training data composition and potential fine-tuning for achieving proficiency in specific domains and languages.

4. **A Diagnostic Tool:** For a researcher, this chart is a diagnostic map. It doesn't just say "Model X is better." It shows *where* and *by how much* it is better, and more importantly, *where it fails*. This is crucial for guiding future model development, indicating which mathematical reasoning skills (e.g., handling statistical data, understanding concentration) require more focused training or architectural innovation.

**In summary, the image is a dense, information-rich performance matrix. It moves beyond aggregate scores to reveal the nuanced, domain-specific landscape of AI mathematical reasoning in Chinese, highlighting both significant progress and persistent challenges.**


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: Model Accuracy Across Categories

### Overview

The image is a line graph comparing the accuracy of four AI models (Baichuan2-13B, LLaMA2-13B, Qwen-14B, InternLM2-Math-20B) across multiple categories. The x-axis contains Chinese text labels (likely categories or topics), and the y-axis represents accuracy as a percentage from 0 to 100. The graph shows significant fluctuations in accuracy for all models, with sharp peaks and troughs.

### Components/Axes

- **X-axis**: Chinese text labels, e.g., "三角形" (triangle), "四边形" (quadrilateral), "圆形" (circle), representing categories or topics. Only these example labels are translated here.

- **Y-axis**: Labeled "Accuracy" with a scale from 0 to 100.

- **Legend**: Located in the top-left corner, with four colored lines:

- **Blue**: Baichuan2-13B

- **Orange**: LLaMA2-13B

- **Green**: Qwen-14B

- **Red**: InternLM2-Math-20B

### Detailed Analysis

- **Baichuan2-13B (Blue)**:

- Peaks at ~80-90% accuracy in some categories (e.g., "长方体" (cuboid), "立体图形" (solid figures)).

- Drops to ~20-30% in others (e.g., "平面图形" (plane figures), "几何图形" (geometric figures)).

- Average accuracy ~50-60%.

- **LLaMA2-13B (Orange)**:

- Consistently the lowest performer, with accuracy often near 0% (e.g., "平面图形", "几何图形").

- Peaks at ~40-50% in a few categories (e.g., "立体图形", "几何图形").

- Average accuracy ~20-30%.

- **Qwen-14B (Green)**:

- Peaks at ~70-80% in some categories (e.g., "立体图形", "几何图形").

- Drops to ~10-20% in others (e.g., "平面图形", "几何图形").

- Average accuracy ~40-50%.

- **InternLM2-Math-20B (Red)**:

- Highest overall performance, with peaks at ~100% in some categories (e.g., "立体图形", "几何图形").

- Drops to ~40-50% in others (e.g., "平面图形", "几何图形").

- Average accuracy ~60-70%.
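The approximate average ranges quoted above imply an ordering of the four models. A minimal sketch, assuming each range is summarized by its midpoint (a simplification introduced here, not something the chart states); `AVERAGE_RANGES` and `rank_by_midpoint` are illustrative names.

```python
# Approximate average-accuracy ranges (percent) as quoted in the lists above.
AVERAGE_RANGES = {
    "Baichuan2-13B": (50, 60),
    "LLaMA2-13B": (20, 30),
    "Qwen-14B": (40, 50),
    "InternLM2-Math-20B": (60, 70),
}


def rank_by_midpoint(ranges):
    """Sort models by the midpoint of their quoted range, highest first."""
    midpoints = {model: (lo + hi) / 2 for model, (lo, hi) in ranges.items()}
    return sorted(midpoints.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for model, mid in rank_by_midpoint(AVERAGE_RANGES):
        print(f"{model:20s} ~{mid:.0f}%")
```

The resulting order is InternLM2-Math-20B, Baichuan2-13B, Qwen-14B, LLaMA2-13B. Because the ranges are visual estimates, adjacent models could swap places under a more exact reading.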

### Key Observations

1. **InternLM2-Math-20B (Red)** consistently outperforms other models, achieving the highest accuracy in most categories.

2. **LLaMA2-13B (Orange)** shows the most erratic performance, with frequent drops to near-zero accuracy.

3. **Baichuan2-13B (Blue)** and **Qwen-14B (Green)** exhibit moderate performance, with significant variability depending on the category.

4. **Accuracy fluctuations** suggest that model performance is highly dependent on the specific category or task being evaluated.

### Interpretation

The data indicates that **InternLM2-Math-20B** is the most robust model across the tested categories, likely due to specialized training in mathematical or geometric tasks. **LLaMA2-13B**'s poor performance in many categories suggests limitations in handling certain types of problems. The variability in accuracy across models highlights the importance of model selection based on the specific application or domain. The Chinese category labels (e.g., "三角形" (triangle), "四边形" (quadrilateral)) name geometric shapes and other mathematical concepts; translating the full label set would allow a deeper analysis.
