TECHNICAL ASSET FINGERPRINT

11eb45c01e3f184458484f97


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: LLM Reasoning Approaches

### Overview

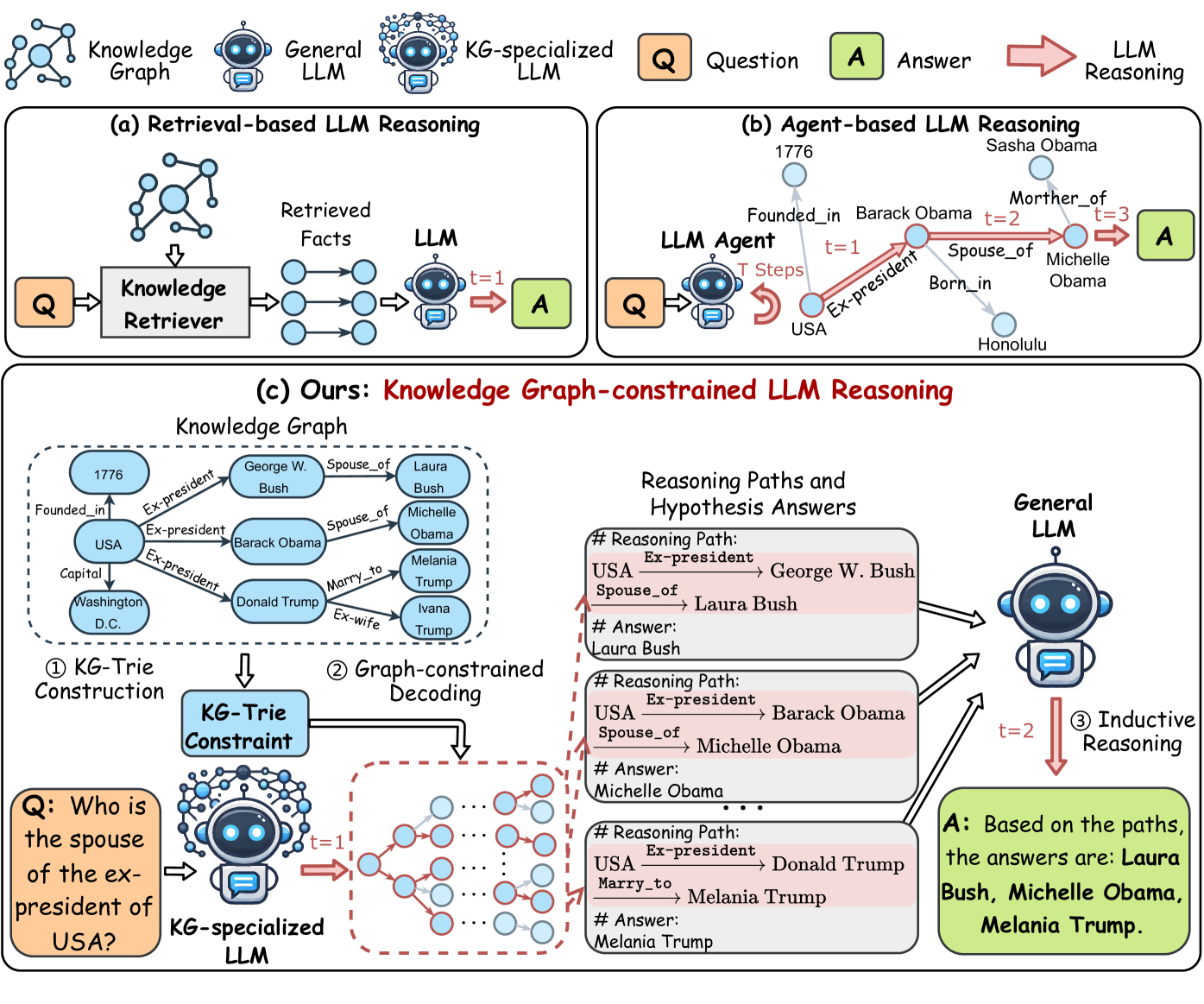

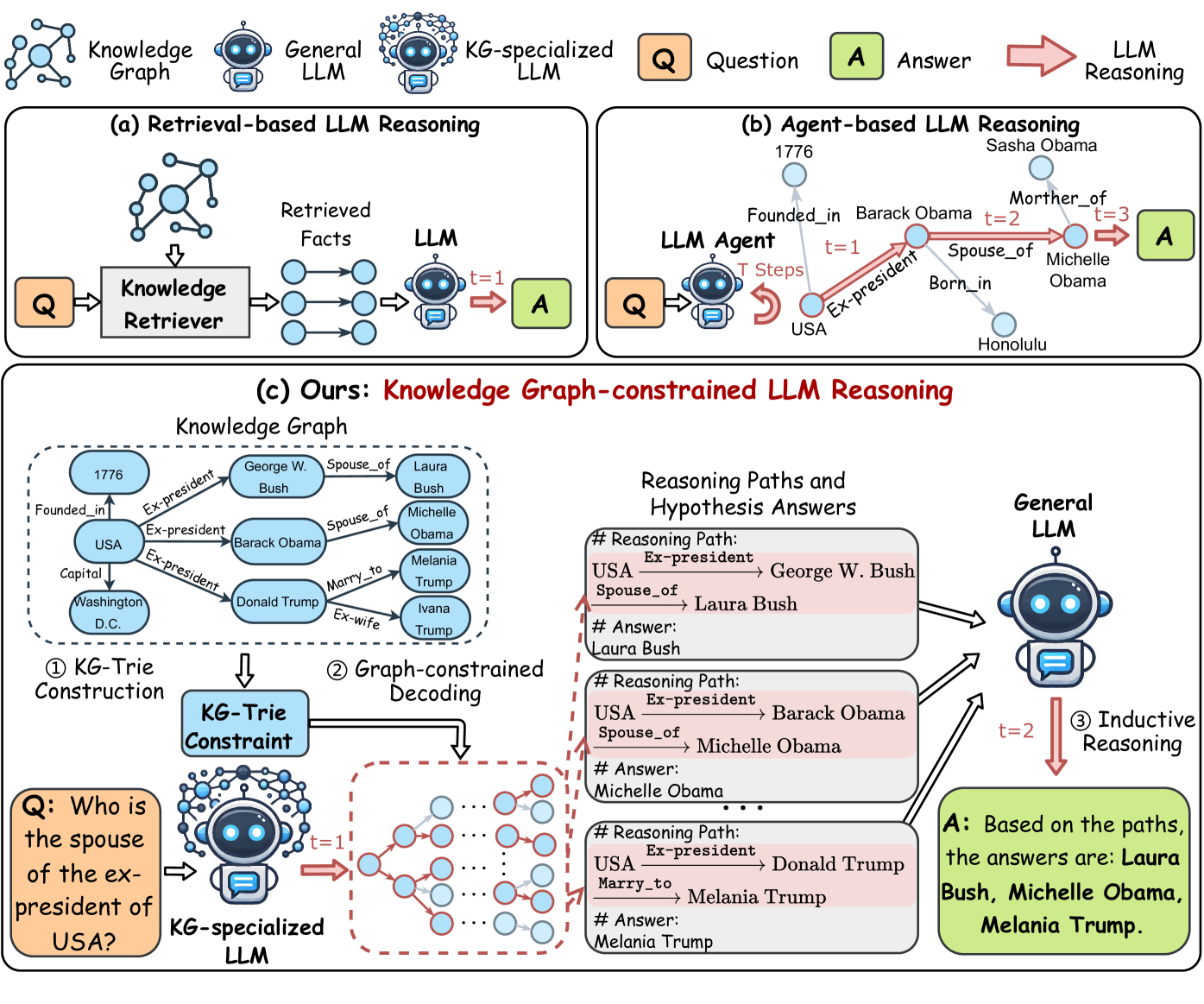

The image presents a comparative diagram illustrating three different approaches to Large Language Model (LLM) reasoning: Retrieval-based, Agent-based, and Knowledge Graph-constrained. Each approach is depicted with its components and flow, highlighting the differences in how they process questions and generate answers.

### Components/Axes

* **Legend:** Located at the top of the image.
  * `Q`: Question (orange square)
  * `A`: Answer (green square)
  * `LLM Reasoning`: Red arrow

* **(a) Retrieval-based LLM Reasoning:**
  * `Knowledge Graph`: A network of interconnected nodes.
  * `General LLM`: A standard language model.
  * `KG-specialized LLM`: A language model specialized in knowledge graphs.
  * `Knowledge Retriever`: Component that retrieves relevant facts.
  * `Retrieved Facts`: The output of the Knowledge Retriever.
  * `t=1`: Indicates a time step.

* **(b) Agent-based LLM Reasoning:**
  * `LLM Agent`: An agent-based language model.
  * `T Steps`: Indicates multiple reasoning steps.
  * Nodes representing entities and relationships (e.g., "Barack Obama," "Michelle Obama," "Founded_in," "Spouse_of").
  * Additional node labels: `1776`, `Sasha Obama`, `Honolulu`.

* **(c) Ours: Knowledge Graph-constrained LLM Reasoning:**
  * `Knowledge Graph`: A graph containing entities and relationships (e.g., "George W. Bush," "Laura Bush," "Barack Obama," "Donald Trump," "Melania Trump," "Ivana Trump," "USA," "Washington D.C.").
  * `KG-Trie Construction`: The process of building a KG-Trie.
  * `KG-Trie Constraint`: The constrained KG-Trie structure.
  * `Graph-constrained Decoding`: The decoding process using the constrained graph.
  * `Reasoning Paths and Hypothesis Answers`: Boxes showing reasoning paths and corresponding answers.
  * `General LLM`: A standard language model.
  * `Inductive Reasoning`: The reasoning process.

### Detailed Analysis

* **(a) Retrieval-based LLM Reasoning:**
  * A question (Q) is fed into a Knowledge Retriever.
  * The Knowledge Retriever retrieves relevant facts from a Knowledge Graph.
  * The retrieved facts are then processed by a General LLM to generate an answer (A) at time step t=1.

* **(b) Agent-based LLM Reasoning:**
  * A question (Q) is input into an LLM Agent.
  * The LLM Agent performs multiple reasoning steps (T Steps) based on relationships within the knowledge.
  * For example, starting from "Barack Obama," the agent reasons through "Founded_in" (1776), "Ex-president" (USA), "Spouse_of" (Michelle Obama), and "Mother_of" (Sasha Obama) across t=1, t=2, and t=3.
  * The final answer (A) is generated after these steps.

* **(c) Ours: Knowledge Graph-constrained LLM Reasoning:**
  * A Knowledge Graph is constructed with entities and relationships.
  * The KG-Trie Construction process builds a KG-Trie Constraint.
  * A question (Q) is input into a KG-specialized LLM at time step t=1.
  * Graph-constrained Decoding is performed using the KG-Trie Constraint.
  * Reasoning Paths and Hypothesis Answers are generated, showing the reasoning steps.
  * For example, one path is "USA → (Ex-president) → George W. Bush → (Spouse_of) → Laura Bush," leading to the answer "Laura Bush."
  * These paths are then processed by a General LLM through Inductive Reasoning at time step t=2.
  * The final answer (A) is generated based on the paths: "Laura Bush, Michelle Obama, Melania Trump."

### Key Observations

* The diagram highlights three distinct approaches to LLM reasoning, each with its own architecture and process.

* Retrieval-based reasoning relies on retrieving relevant facts before processing.

* Agent-based reasoning involves multiple reasoning steps within the LLM Agent.

* Knowledge Graph-constrained reasoning uses a KG-Trie Constraint to guide the decoding process.

### Interpretation

The diagram illustrates the evolution and diversification of LLM reasoning techniques. The progression from simple retrieval-based methods to more sophisticated agent-based and knowledge graph-constrained approaches demonstrates an increasing emphasis on structured knowledge and reasoning paths. The "Ours" approach, which combines a KG-Trie Constraint with inductive reasoning, suggests an attempt to leverage the strengths of both structured knowledge and general language understanding. The diagram suggests that by incorporating structured knowledge and reasoning paths, LLMs can generate more accurate and reliable answers.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Knowledge Graph-constrained LLM Reasoning

### Overview

The image presents a comparative diagram illustrating three different approaches to Large Language Model (LLM) reasoning: Retrieval-based, Agent-based, and a novel Knowledge Graph-constrained approach. The diagram visually breaks down the process of question answering in each method, highlighting the role of knowledge graphs and specialized LLMs. The diagram is divided into three main sections (a, b, and c), each representing a different reasoning approach.

### Components/Axes

The diagram consists of several key components:

* **Knowledge Graph:** Represented as interconnected nodes and edges, symbolizing a structured knowledge base.

* **General LLM:** A standard Large Language Model.

* **KG-specialized LLM:** An LLM specifically trained on knowledge graph data.

* **Question (Q):** The input query.

* **Answer (A):** The output response.

* **Knowledge Retriever:** A component that fetches relevant facts from the knowledge graph.

* **LLM Agent:** A component that orchestrates reasoning steps.

* **KG-Trie Construction:** A process for building a knowledge graph trie.

* **Graph-constrained Decoding:** A process for decoding information using the knowledge graph.

* **Reasoning Paths and Hypothesis Answers:** A section displaying reasoning paths and corresponding answers.

* **Inductive Reasoning:** A process for generating answers based on reasoning paths.

* **Time Steps (t=1, t=2, t=3):** Indicate the progression of reasoning steps.

### Detailed Analysis

**(a) Retrieval-based LLM Reasoning:**

* A question (Q) is input into a General LLM.

* The General LLM interacts with a Knowledge Graph via a KG-specialized LLM.

* The KG-specialized LLM provides an answer (A).

**(b) Agent-based LLM Reasoning:**

* A question (Q) is input into an LLM Agent.

* The LLM Agent takes 'T Steps' to reason.

* The LLM Agent interacts with a Knowledge Graph, retrieving facts.

* The LLM Agent uses the retrieved facts to generate an answer (A).

* Example: Question: "Who is Sasha Obama?" Answer: "Mother_of_3 Michelle Obama". The Knowledge Graph shows "Founded_in" Barack Obama, "Ex-president" Barack Obama, "Born_in" Honolulu.

**(c) Ours: Knowledge Graph-constrained LLM Reasoning:**

* **KG-Trie Construction:** A Knowledge Graph is used to construct a KG-Trie. The graph includes nodes like "USA", "Ex-president", "George W. Bush", "Laura Bush", "Barack Obama", "Donald Trump", "Melania Trump", "Ivana Trump", "Washington D.C.", and relationships like "Spouse_of", "Marry_to", "Founded_in", "Capital".

* **Graph-constrained Decoding:** The KG-Trie is used to constrain the decoding process.

* **Inductive Reasoning:** A General LLM performs inductive reasoning based on the constrained decoding.

* **Question:** "Who is the spouse of the ex-president of USA?"

* **Reasoning Paths and Hypothesis Answers:**
  * Path 1: USA → Ex-president → George W. Bush. Answer: Laura Bush.
  * Path 2: USA → Ex-president → Barack Obama. Answer: Michelle Obama.
  * Path 3: USA → Ex-president → Donald Trump. Answer: Melania Trump.

* **Final Answer:** Based on the paths, the answers are: Laura Bush, Michelle Obama, Melania Trump.
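The candidate paths listed above can be reproduced with a small graph walk. The following is a minimal sketch, not the method from the figure: the adjacency dictionary is transcribed from the entities and relations named in this document, and `enumerate_paths` and `SPOUSE_RELATIONS` are hypothetical names. The relation filter is only a stand-in for whatever the model uses to decide which paths fit the question.

```python
# Outgoing edges per entity, transcribed from the figure description
# (head -> list of (relation, tail)). Illustrative data only.
ADJACENCY = {
    "USA": [
        ("Capital", "Washington D.C."),
        ("Ex-president", "George W. Bush"),
        ("Ex-president", "Barack Obama"),
        ("Ex-president", "Donald Trump"),
    ],
    "George W. Bush": [("Spouse_of", "Laura Bush")],
    "Barack Obama": [("Spouse_of", "Michelle Obama")],
    "Donald Trump": [("Marry_to", "Melania Trump"), ("Ex-wife", "Ivana Trump")],
}


def enumerate_paths(adjacency, start, hops):
    """Return all paths of exactly `hops` edges as [e0, r1, e1, r2, e2, ...]."""
    paths = [[start]]
    for _ in range(hops):
        extended = []
        for path in paths:
            for relation, tail in adjacency.get(path[-1], []):
                extended.append(path + [relation, tail])
        paths = extended
    return paths


# Assumption: a hand-written filter replaces the model's relation choice.
SPOUSE_RELATIONS = {"Spouse_of", "Marry_to"}

all_paths = enumerate_paths(ADJACENCY, "USA", hops=2)
spouse_paths = [
    p for p in all_paths if p[1] == "Ex-president" and p[3] in SPOUSE_RELATIONS
]
for p in spouse_paths:
    print(" → ".join(p), "| hypothesis answer:", p[-1])
```

Four two-hop paths start at `USA` in this toy graph; the filter drops the `Ex-wife` path, leaving the three paths shown in the list above.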

### Key Observations

* The Knowledge Graph-constrained approach (c) explicitly incorporates the knowledge graph into the reasoning process at multiple stages (trie construction and decoding).

* The Agent-based approach (b) uses the knowledge graph as a source of retrieved facts, but the reasoning is primarily driven by the LLM Agent.

* The Retrieval-based approach (a) is the simplest, relying on a KG-specialized LLM to directly provide answers.

* The diagram highlights the importance of structured knowledge (Knowledge Graph) in enhancing LLM reasoning capabilities.

* The example question in (c) demonstrates how the knowledge graph enables the LLM to consider multiple possible answers based on different reasoning paths.

### Interpretation

The diagram illustrates a progression in LLM reasoning techniques, moving from simple retrieval to more sophisticated graph-constrained approaches. The core idea is that integrating structured knowledge from a knowledge graph can significantly improve the accuracy, reliability, and explainability of LLM responses. The Knowledge Graph-constrained approach (c) appears to be the most promising, as it leverages the knowledge graph not only as a data source but also as a constraint on the reasoning process itself. This constraint helps to focus the LLM's attention on relevant information and avoid generating incorrect or nonsensical answers. The inclusion of reasoning paths and hypothesis answers provides transparency into the LLM's decision-making process, which is crucial for building trust and understanding. The diagram suggests that future LLM research should focus on developing more effective methods for integrating knowledge graphs into the reasoning pipeline. The use of KG-Tries is a novel approach to encoding the knowledge graph for efficient reasoning.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Comparison of LLM Reasoning Approaches

### Overview

The image is a technical diagram illustrating and comparing three different methodologies for Large Language Model (LLM) reasoning, particularly in the context of answering questions using structured knowledge. The three approaches are: (a) Retrieval-based LLM Reasoning, (b) Agent-based LLM Reasoning, and (c) the proposed method, "Knowledge Graph-constrained LLM Reasoning." The diagram uses a consistent set of icons and flow arrows to depict the process for each method.

### Components/Axes

The diagram is divided into three main panels, labeled (a), (b), and (c). A legend at the top defines the visual symbols used throughout:

* **Knowledge Graph**: Represented by a network of blue nodes and edges.

* **General LLM**: Represented by a blue robot icon.

* **KG-specialized LLM**: Represented by a blue robot icon with a network overlay.

* **Question (Q)**: Represented by an orange square with a "Q".

* **Answer (A)**: Represented by a green square with an "A".

* **LLM Reasoning**: Represented by a red arrow.

### Detailed Analysis

#### Panel (a): Retrieval-based LLM Reasoning

* **Flow**: Question (Q) → Knowledge Retriever → Retrieved Facts (blue nodes) → General LLM (t=1) → Answer (A).

* **Description**: This is a linear pipeline. A question is sent to a "Knowledge Retriever" module, which fetches relevant facts from a knowledge graph. These retrieved facts are then fed into a General LLM, which performs reasoning in a single step (t=1) to produce the final answer.
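The linear pipeline in panel (a) can be sketched as follows. This is an illustration under stated assumptions: the retriever is a toy word-overlap ranker, the fact list is hypothetical example data, and the General LLM call is replaced by prompt construction only. `retrieve_facts` and `build_prompt` are made-up helper names.

```python
# Hypothetical example facts as (head, relation, tail) triples.
FACTS = [
    ("1776", "Founded_in", "USA"),
    ("USA", "Ex-president", "Barack Obama"),
    ("Barack Obama", "Spouse_of", "Michelle Obama"),
    ("Barack Obama", "Born_in", "Honolulu"),
]
QUESTION = "Who is the spouse of the ex-president of USA?"


def words(text):
    """Lowercased word set; '?', '_' and '-' are treated as separators."""
    for ch in "?_-":
        text = text.replace(ch, " ")
    return set(text.lower().split())


def retrieve_facts(question, triples, top_k):
    """Toy retriever: rank triples by word overlap with the question."""
    q_words = words(question)

    def overlap(triple):
        return len(words(" ".join(triple)) & q_words)

    ranked = sorted(triples, key=overlap, reverse=True)
    return [t for t in ranked[:top_k] if overlap(t) > 0]


def build_prompt(question, facts):
    """Single-step (t=1) prompt that a General LLM would receive."""
    lines = [f"({h}, {r}, {t})" for h, r, t in facts]
    return "Facts:\n" + "\n".join(lines) + f"\nQuestion: {question}\nAnswer:"


retrieved = retrieve_facts(QUESTION, FACTS, top_k=2)
prompt = build_prompt(QUESTION, retrieved)
print(prompt)
```

The retrieved triples reach the model as a flat list, so any connection between them has to be worked out by the model in its single reasoning step.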

#### Panel (b): Agent-based LLM Reasoning

* **Flow**: Question (Q) → LLM Agent → (Multi-step graph traversal) → Answer (A).

* **Description**: An LLM Agent interacts with a knowledge graph over multiple time steps (T Steps). The example shows a path: `USA` → (Ex-president, t=1) → `Barack Obama` → (Spouse_of, t=2) → `Michelle Obama` → (Mother_of, t=3) → `Sasha Obama`. The agent navigates the graph step-by-step to arrive at an answer.
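The step-by-step navigation in panel (b) can be sketched as a loop that makes one decision per time step. This is a minimal sketch under assumptions: the `scripted_policy` function and its `PLAN` are hypothetical stand-ins for the LLM Agent, which in a real system would be called once per step, and the `Born_in` edge is added only as a distractor.

```python
# Toy graph following the example path in panel (b); head -> [(relation, tail)].
ADJACENCY = {
    "USA": [("Ex-president", "Barack Obama")],
    "Barack Obama": [("Born_in", "Honolulu"), ("Spouse_of", "Michelle Obama")],
    "Michelle Obama": [("Mother_of", "Sasha Obama")],
}

# Assumption: a fixed relation plan replaces the agent's per-step decision.
PLAN = {1: "Ex-president", 2: "Spouse_of", 3: "Mother_of"}


def scripted_policy(step, current, options):
    """Pick the edge whose relation matches the plan, else the first edge."""
    for relation, tail in options:
        if relation == PLAN.get(step):
            return relation, tail
    return options[0]


def agent_traverse(adjacency, start, choose_edge, max_steps):
    """Walk one hop per time step; returns the final entity and the trace."""
    current, trace = start, []
    for step in range(1, max_steps + 1):
        options = adjacency.get(current, [])
        if not options:
            break  # dead end: no outgoing edges
        relation, tail = choose_edge(step, current, options)
        trace.append((step, current, relation, tail))
        current = tail
    return current, trace


answer, trace = agent_traverse(ADJACENCY, "USA", scripted_policy, max_steps=3)
for step, head, relation, tail in trace:
    print(f"t={step}: {head} → ({relation}) → {tail}")
```

Each loop iteration corresponds to one agent call, which is why the number of model invocations grows with the number of steps T.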

#### Panel (c): Ours: Knowledge Graph-constrained LLM Reasoning

This panel is the most detailed and is subdivided into several components.

**1. Knowledge Graph (Example Subgraph)**

* A dashed box contains an example knowledge graph with entities and relations:
  * `1776` → (Founded_in) → `USA`
  * `USA` → (Capital) → `Washington D.C.`
  * `USA` → (Ex-president) → `George W. Bush`, `Barack Obama`, `Donald Trump`
  * `George W. Bush` → (Spouse_of) → `Laura Bush`
  * `Barack Obama` → (Spouse_of) → `Michelle Obama`
  * `Donald Trump` → (Marry_to) → `Melania Trump`; (Ex-wife) → `Ivana Trump`
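One common way to hold such a subgraph in code is a list of (head, relation, tail) triples indexed by head entity. The sketch below transcribes the edges as written above; the helper names `build_adjacency` and `tails` are illustrative, not taken from the source.

```python
from collections import defaultdict

# Triples transcribed from the example subgraph, in the direction shown.
TRIPLES = [
    ("1776", "Founded_in", "USA"),
    ("USA", "Capital", "Washington D.C."),
    ("USA", "Ex-president", "George W. Bush"),
    ("USA", "Ex-president", "Barack Obama"),
    ("USA", "Ex-president", "Donald Trump"),
    ("George W. Bush", "Spouse_of", "Laura Bush"),
    ("Barack Obama", "Spouse_of", "Michelle Obama"),
    ("Donald Trump", "Marry_to", "Melania Trump"),
    ("Donald Trump", "Ex-wife", "Ivana Trump"),
]


def build_adjacency(triples):
    """Index triples by head entity: head -> list of (relation, tail)."""
    adjacency = defaultdict(list)
    for head, relation, tail in triples:
        adjacency[head].append((relation, tail))
    return dict(adjacency)


def tails(adjacency, head, relation):
    """All entities reachable from `head` through `relation`."""
    return [t for r, t in adjacency.get(head, []) if r == relation]


ADJ = build_adjacency(TRIPLES)
print(tails(ADJ, "USA", "Ex-president"))
```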

**2. Process Flow**

* **Step ①: KG-Trie Construction**: The knowledge graph is processed to create a "KG-Trie Constraint" data structure.

* **Step ②: Graph-constrained Decoding**:
  * **Input**: The question "Q: Who is the spouse of the ex-president of USA?" is fed into a "KG-specialized LLM".
  * **Process**: The LLM's decoding process is constrained by the KG-Trie. The diagram shows a branching path of possible token sequences (red and blue nodes), guided by the graph structure.
  * **Output**: This generates multiple "Reasoning Paths and Hypothesis Answers".

* **Reasoning Paths and Hypothesis Answers (Output of Step ②)**:
  * **Path 1**: `USA` → (Ex-president) → `George W. Bush` → (Spouse_of) → `Laura Bush`. **Hypothesis Answer**: Laura Bush.
  * **Path 2**: `USA` → (Ex-president) → `Barack Obama` → (Spouse_of) → `Michelle Obama`. **Hypothesis Answer**: Michelle Obama.
  * **Path 3**: `USA` → (Ex-president) → `Donald Trump` → (Marry_to) → `Melania Trump`. **Hypothesis Answer**: Melania Trump.

* **Step ③: Inductive Reasoning**: All generated reasoning paths and their hypothesis answers are fed into a General LLM (t=2). This LLM performs inductive reasoning to synthesize a final, consolidated answer.

* **Final Answer (A)**: "Based on the paths, the answers are: **Laura Bush, Michelle Obama, Melania Trump.**"
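The hand-off from Step ② to Step ③ can be sketched as follows. This is an illustration, not the actual procedure: in the diagram a General LLM performs the synthesis, whereas here a deterministic de-duplication stands in for it. `format_path`, `build_induction_prompt`, and `aggregate_answers` are hypothetical helper names.

```python
QUESTION = "Who is the spouse of the ex-president of USA?"

# Reasoning paths from Step 2, stored as [entity, relation, entity, ...].
PATHS = [
    ["USA", "Ex-president", "George W. Bush", "Spouse_of", "Laura Bush"],
    ["USA", "Ex-president", "Barack Obama", "Spouse_of", "Michelle Obama"],
    ["USA", "Ex-president", "Donald Trump", "Marry_to", "Melania Trump"],
]


def format_path(path):
    """Render a path as 'e0 → (r1) → e1 → (r2) → e2'."""
    parts = [path[0]]
    for relation, entity in zip(path[1::2], path[2::2]):
        parts.append(f"({relation})")
        parts.append(entity)
    return " → ".join(parts)


def build_induction_prompt(question, paths):
    """Prompt a General LLM (t=2) would receive: paths plus hypothesis answers."""
    lines = [
        f"Path {i}: {format_path(p)} | Hypothesis answer: {p[-1]}"
        for i, p in enumerate(paths, start=1)
    ]
    return "\n".join(lines) + f"\nQuestion: {question}\nAnswer:"


def aggregate_answers(paths):
    """Assumption: order-preserving de-duplication replaces the LLM synthesis."""
    answers = []
    for path in paths:
        if path[-1] not in answers:
            answers.append(path[-1])
    return answers


prompt = build_induction_prompt(QUESTION, PATHS)
final = "Based on the paths, the answers are: " + ", ".join(aggregate_answers(PATHS)) + "."
print(final)
```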

### Key Observations

1. **Methodological Progression**: The diagram shows an evolution from simple retrieval (a), to interactive but potentially unguided exploration (b), to a structured, constrained generation process (c) that explicitly uses the knowledge graph to guide the LLM's reasoning path.

2. **Multi-Path Generation**: The core innovation in (c) is the generation of multiple valid reasoning paths from the KG, rather than a single path or a set of disconnected facts.

3. **Two-Stage LLM Use**: The proposed method uses two LLMs: a KG-specialized LLM for constrained path generation and a General LLM for final inductive synthesis.

4. **Spatial Layout**: Panel (c) is the largest and is positioned at the bottom, emphasizing it as the main contribution. The flow within (c) moves from left (input/KG) to center (constrained decoding) to right (output/synthesis).

### Interpretation

The diagram argues for a hybrid reasoning architecture that combines the structured, factual grounding of a Knowledge Graph with the generative and inductive reasoning capabilities of LLMs.

* **Problem with (a)**: Retrieval-based methods may miss complex relational paths or provide facts without clear connective logic for the LLM to use.

* **Problem with (b)**: Agent-based methods can be computationally expensive and may wander or follow suboptimal paths in large graphs without clear guidance.

* **Solution in (c)**: The "KG-constrained" approach directly bakes the graph structure into the LLM's generation process. By using a KG-Trie, it ensures that every generated token sequence corresponds to a valid path in the knowledge graph. This produces not just an answer, but an *explainable reasoning path* (or multiple paths) that led to it. The final inductive reasoning step allows the model to aggregate and validate these paths into a comprehensive answer, handling cases where multiple correct answers exist (like the spouses of multiple ex-presidents). This method aims to be more faithful to the source knowledge, more explainable, and more robust than the other two approaches.
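The idea that a trie restricts generation to valid graph paths can be sketched with a toy decoder. This is a minimal sketch under loud assumptions: paths are split on whitespace instead of using an LLM tokenizer, and `toy_score` with its `PREFERENCE` table is a made-up stand-in for model logits. All function names are hypothetical.

```python
END = "<eos>"  # marks the end of a complete path in the trie

PATHS = [
    "USA → Ex-president → George W. Bush → Spouse_of → Laura Bush",
    "USA → Ex-president → Barack Obama → Spouse_of → Michelle Obama",
    "USA → Ex-president → Donald Trump → Marry_to → Melania Trump",
]


def build_trie(paths):
    """Nested-dict prefix tree over whitespace tokens of each path."""
    root = {}
    for path in paths:
        node = root
        for token in path.split():
            node = node.setdefault(token, {})
        node[END] = {}
    return root


def constrained_greedy_decode(trie, score):
    """Greedy decoding where only children of the current trie node are allowed."""
    node, output = trie, []
    while True:
        allowed = list(node)  # tokens that keep the prefix on a valid path
        token = max(allowed, key=lambda tok: score(output, tok))
        if token == END:
            return output
        output.append(token)
        node = node[token]


def is_valid_path(trie, tokens):
    """True if `tokens` spells out a complete path stored in the trie."""
    node = trie
    for token in tokens:
        if token not in node:
            return False
        node = node[token]
    return END in node


# Assumption: fixed preferences replace LLM logits. "Paris" scores highest,
# but it is never in the allowed set, so it cannot be emitted.
PREFERENCE = {"Paris": 5.0, "Barack": 2.0}


def toy_score(prefix, token):
    return PREFERENCE.get(token, 0.0)


trie = build_trie(PATHS)
decoded = constrained_greedy_decode(trie, toy_score)
print(" ".join(decoded))
```

Because the argmax runs only over the children of the current trie node, every finished sequence is one of the stored paths. A fuller version would keep several beams alive to return multiple paths instead of a single greedy one.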


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Comparative Analysis of LLM Reasoning Methods

### Overview

The diagram compares three approaches to Large Language Model (LLM) reasoning:

1. **Retrieval-based LLM Reasoning**

2. **Agent-based LLM Reasoning**

3. **Knowledge Graph-constrained LLM Reasoning (Proposed Method)**

It illustrates workflows, components, and reasoning paths for each method, with a focus on knowledge graph integration in the proposed approach.

---

### Components/Axes

#### Header Section

- **Knowledge Graph**: Visualized as interconnected nodes (e.g., "USA", "Barack Obama", "Michelle Obama").

- **General LLM**: Represented by a robot icon with a speech bubble.

- **KG-specialized LLM**: Robot icon with a speech bubble surrounded by interconnected nodes.

- **Question (Q)**: Orange box labeled "Q".

- **Answer (A)**: Green box labeled "A".

- **LLM Reasoning**: Arrows labeled "LLM Reasoning" connect components.

#### Main Diagram (Bottom Section)

1. **Knowledge Graph**:
   - Nodes: "USA", "George W. Bush", "Barack Obama", "Donald Trump", "Michelle Obama", "Melania Trump", "Laura Bush", "Ivana Trump".
   - Edges: Relationships like "Ex-president", "Spouse_of", "Born_in", "Marry_to".
   - Example: "USA" → "Ex-president" → "Barack Obama" → "Spouse_of" → "Michelle Obama".

2. **KG-Trie Construction**:
   - Process for building a knowledge graph trie (tree-like structure).

3. **Graph-constrained Decoding**:
   - Visualized as a decision tree with nodes labeled "t=1", "t=2", etc.

4. **Reasoning Paths and Hypothesis Answers**:
   - Three numbered reasoning paths:
     - **Path 1**: USA → George W. Bush → Laura Bush (Answer: Laura Bush).
     - **Path 2**: USA → Barack Obama → Michelle Obama (Answer: Michelle Obama).
     - **Path 3**: USA → Donald Trump → Melania Trump (Answer: Melania Trump).

5. **Inductive Reasoning**:
   - Final answer aggregates all paths: "Laura Bush, Michelle Obama, Melania Trump".

---

### Detailed Analysis

#### Retrieval-based LLM Reasoning (a)

- **Workflow**:
  - Question (Q) → Knowledge Retriever → Retrieved Facts → LLM → Answer (A).

- **Key Elements**:
  - "Retrieved Facts" represented as interconnected nodes.
  - Direct flow from retrieved facts to LLM.

#### Agent-based LLM Reasoning (b)

- **Workflow**:
  - Question (Q) → LLM Agent → T Steps → Answer (A).

- **Key Elements**:
  - Example steps: "Founded_in", "Ex-president", "Spouse_of".
  - Temporal progression (t=1, t=2, t=3).

#### Knowledge Graph-constrained LLM Reasoning (c)

- **Workflow**:
  - **KG-Trie Construction**: Builds a KG-Trie from the knowledge graph.
  - **Graph-constrained Decoding**: Uses the trie to guide reasoning.
  - **Inductive Reasoning**: Combines multiple paths for a comprehensive answer.

- **Key Elements**:
  - Explicit use of "KG-Trie Constraint" to limit LLM exploration.
  - Multiple reasoning paths with hypothesis answers.

---

### Key Observations

1. **Structured Reasoning**:
   - The proposed method (c) explicitly uses a knowledge graph to constrain LLM reasoning, reducing ambiguity.

2. **Multiple Hypotheses**:
   - The answer aggregates results from different reasoning paths (e.g., spouses of multiple ex-presidents).

3. **Temporal Progression**:
   - Agent-based reasoning (b) includes time steps (t=1, t=2), while the proposed method focuses on graph traversal.

4. **Knowledge Graph Integration**:
   - The KG-constrained method leverages relationships (e.g., "Spouse_of", "Ex-president") for accurate answers.

---

### Interpretation

- **Purpose**: Demonstrates how knowledge graphs improve LLM reasoning by providing structured context.

- **Advantages of Proposed Method**:
  - Reduces reliance on ad-hoc retrieval (a) or sequential agent steps (b).
  - Explicitly models relationships (e.g., "Spouse_of") to avoid incorrect inferences.

- **Notable Trends**:
  - The answer aggregates multiple valid hypotheses, reflecting real-world complexity (e.g., multiple spouses of ex-presidents).

- **Underlying Logic**:
  - Knowledge graphs act as a "constraint" to guide LLMs toward factually grounded answers, mitigating hallucination risks.

This diagram emphasizes the importance of structured knowledge integration in enhancing LLM reasoning capabilities.
