## Diagram: LLM Reasoning Approaches

### Overview

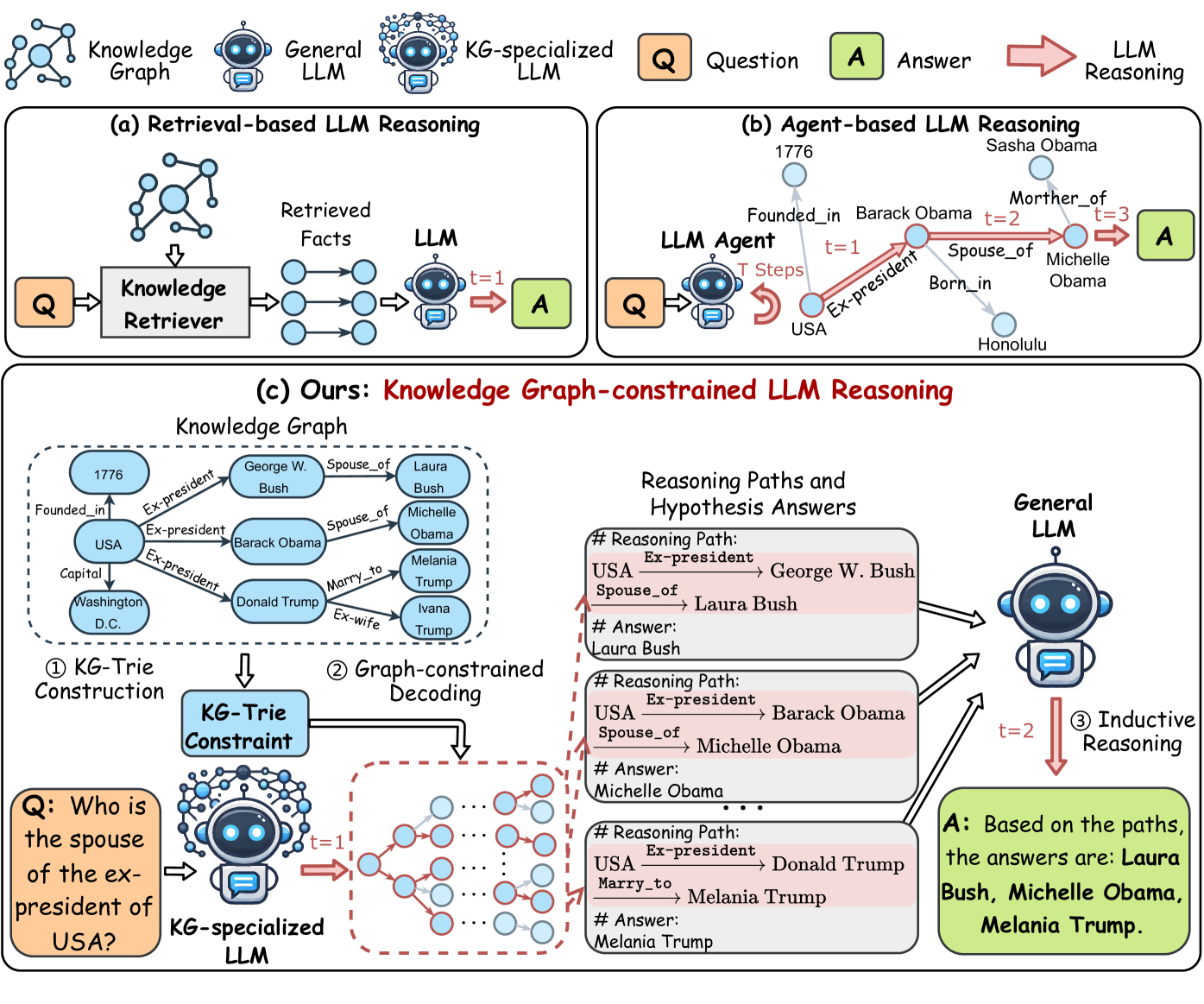

The image is a comparative diagram of three approaches to Large Language Model (LLM) reasoning: Retrieval-based, Agent-based, and Knowledge Graph-constrained. Each approach is shown with its components and data flow, highlighting how it processes a question and generates an answer.

### Components/Axes

* **Legend:** Located at the top of the image.

    * `Q`: Question (orange square)

    * `A`: Answer (green square)

    * `LLM Reasoning`: Red arrow

* **(a) Retrieval-based LLM Reasoning:**

    * `Knowledge Graph`: A network of interconnected nodes.

    * `General LLM`: A general-purpose language model.

    * `KG-specialized LLM`: A language model specialized in knowledge graphs.

    * `Knowledge Retriever`: Component that retrieves relevant facts.

    * `Retrieved Facts`: The output of the Knowledge Retriever.

    * `t=1`: Indicates a time step.

* **(b) Agent-based LLM Reasoning:**

    * `LLM Agent`: A language model that acts as an agent and reasons over the knowledge step by step.

    * `T Steps`: Indicates multiple reasoning steps.

    * Nodes representing entities and relationships (e.g., "Barack Obama," "Michelle Obama," "Founded_in," "Spouse_of").

    * Additional node labels: `1776`, `Sasha Obama`, `Honolulu`.

* **(c) Ours: Knowledge Graph-constrained LLM Reasoning:**

    * `Knowledge Graph`: A graph containing entities and relationships (e.g., "George W. Bush," "Laura Bush," "Barack Obama," "Donald Trump," "Melania Trump," "Ivana Trump," "USA," "Washington D.C.").

    * `KG-Trie Construction`: The process of building a KG-Trie from the Knowledge Graph.

    * `KG-Trie Constraint`: The KG-Trie used as a constraint during decoding.

    * `Graph-constrained Decoding`: Decoding that is constrained by the KG-Trie.

    * `Reasoning Paths and Hypothesis Answers`: Boxes showing reasoning paths and corresponding answers.

    * `General LLM`: A general-purpose language model.

    * `Inductive Reasoning`: The step in which the General LLM derives the final answer from the generated reasoning paths.

### Detailed Analysis

* **(a) Retrieval-based LLM Reasoning:**

    * A question (Q) is fed into a Knowledge Retriever.

    * The Knowledge Retriever retrieves relevant facts from a Knowledge Graph.

    * The retrieved facts are then processed by a General LLM to generate an answer (A) at time step t=1.

* **(b) Agent-based LLM Reasoning:**

    * A question (Q) is input into an LLM Agent.

    * The LLM Agent performs multiple reasoning steps (T Steps) based on relationships within the knowledge.

    * For example, starting from "Barack Obama," the agent reasons through "Founded_in" (1776), "Ex-president" (USA), "Spouse_of" (Michelle Obama), and "Mother_of" (Sasha Obama) across t=1, t=2, and t=3.

    * The final answer (A) is generated after these steps.

* **(c) Ours: Knowledge Graph-constrained LLM Reasoning:**

    * A Knowledge Graph is constructed with entities and relationships.

    * The KG-Trie Construction process builds a KG-Trie Constraint from the Knowledge Graph.

    * A question (Q) is input into a KG-specialized LLM at time step t=1.

    * Graph-constrained Decoding is performed using the KG-Trie Constraint.

    * Reasoning Paths and Hypothesis Answers are generated, each path showing its reasoning steps.

    * For example, one path is `USA -[Ex-president]-> George W. Bush -[Spouse_of]-> Laura Bush`, leading to the hypothesis answer "Laura Bush."

    * These paths are then processed by a General LLM through Inductive Reasoning at time step t=2.

    * The final answer (A) is generated based on the paths: "Laura Bush, Michelle Obama, Melania Trump."
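
The KG-Trie construction and path generation described in (c) can be illustrated with a minimal sketch. It assumes a toy set of triples reconstructed from the entity labels and answers named in the diagram, and it builds the trie over whole entity and relation names rather than over LLM tokenizer tokens, which is a simplification. The function names `enumerate_paths`, `build_trie`, and `allowed_next` are hypothetical and are not taken from the source.

```python
from collections import defaultdict

# Toy (head, relation, tail) triples. Assumption: reconstructed from the
# labels in the diagram description, not the authors' actual data.
TRIPLES = [
    ("USA", "Ex-president", "George W. Bush"),
    ("USA", "Ex-president", "Barack Obama"),
    ("USA", "Ex-president", "Donald Trump"),
    ("George W. Bush", "Spouse_of", "Laura Bush"),
    ("Barack Obama", "Spouse_of", "Michelle Obama"),
    ("Donald Trump", "Spouse_of", "Melania Trump"),
]


def enumerate_paths(triples, start, max_hops):
    """Return all paths with exactly max_hops edges that start at `start`.

    Paths that dead-end before max_hops are dropped in this sketch.
    """
    adjacency = defaultdict(list)
    for head, relation, tail in triples:
        adjacency[head].append((relation, tail))

    paths = []

    def walk(node, path, hops):
        if hops == max_hops:
            paths.append(tuple(path))
            return
        for relation, tail in adjacency[node]:
            walk(tail, path + [relation, tail], hops + 1)

    walk(start, [start], 0)
    return paths


def build_trie(paths):
    """Build a nested-dict trie; each key is one entity or relation name."""
    root = {}
    for path in paths:
        node = root
        for token in path:
            node = node.setdefault(token, {})
    return root


def allowed_next(trie, prefix):
    """Return the sorted continuations the trie permits after `prefix`."""
    node = trie
    for token in prefix:
        if token not in node:
            return []  # prefix is not a valid path in the graph
        node = node[token]
    return sorted(node)


paths = enumerate_paths(TRIPLES, "USA", max_hops=2)
trie = build_trie(paths)
hypothesis_answers = [path[-1] for path in paths]

print(allowed_next(trie, ["USA", "Ex-president"]))
print(hypothesis_answers)
```

In this sketch the last element of each path plays the role of a hypothesis answer; the inductive reasoning step over those paths would be a separate LLM call and is not modeled here.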

### Key Observations

* The diagram contrasts three distinct approaches to LLM reasoning, each with its own components and flow.

* Retrieval-based reasoning retrieves relevant facts from the Knowledge Graph first, then passes them to a General LLM in a single step (t=1).

* Agent-based reasoning has the LLM Agent perform multiple reasoning steps (T Steps) before producing an answer.

* Knowledge Graph-constrained reasoning uses a KG-Trie Constraint to guide decoding at t=1, then applies inductive reasoning over the generated paths at t=2.
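
How a trie constraint can guide decoding is easiest to see in a small sketch. The code below is an illustration under stated assumptions, not the method shown in the diagram: the trie is a hand-written nested dict over entity and relation names, `score_fn` is a hypothetical stand-in for the next-token scores a language model would produce, and decoding is plain greedy search.

```python
def constrained_greedy_decode(trie, score_fn, max_steps=16):
    """Greedy decoding where only continuations present in the trie are candidates.

    trie:     nested dict; each key is a permitted next token.
    score_fn: hypothetical callable (prefix, token) -> float standing in
              for a model's next-token score.
    """
    prefix = []
    node = trie
    # Stop at a leaf (empty dict) or after max_steps tokens.
    while node and len(prefix) < max_steps:
        candidates = sorted(node)  # sorted for deterministic tie-breaking
        best = max(candidates, key=lambda token: score_fn(prefix, token))
        prefix.append(best)
        node = node[best]
    return prefix


# Hand-written toy trie (assumption: labels borrowed from the diagram).
toy_trie = {
    "USA": {
        "Ex-president": {
            "Barack Obama": {"Spouse_of": {"Michelle Obama": {}}},
            "George W. Bush": {"Spouse_of": {"Laura Bush": {}}},
        }
    }
}


def toy_score(prefix, token):
    """Stand-in scorer that simply prefers the token 'Barack Obama'."""
    return 1.0 if token == "Barack Obama" else 0.0


decoded = constrained_greedy_decode(toy_trie, toy_score)
print(decoded)
```

Because candidates are drawn only from the current trie node, a token that does not continue a valid path can never be emitted, regardless of how highly the scorer would rate it.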

### Interpretation

The diagram contrasts three LLM reasoning techniques. Moving from single-step retrieval (a) to multi-step agent reasoning (b) to graph-constrained decoding (c), the approaches place increasing emphasis on structured knowledge and explicit reasoning paths. The "Ours" approach pairs a KG-Trie Constraint, applied while a KG-specialized LLM generates reasoning paths, with inductive reasoning by a General LLM over those paths. This suggests an attempt to combine the strengths of structured knowledge and general language understanding. The implied claim is that grounding generation in structured knowledge and reasoning paths lets LLMs produce more accurate and reliable answers.