## Diagram: Comparison of LLM Reasoning Approaches

### Overview

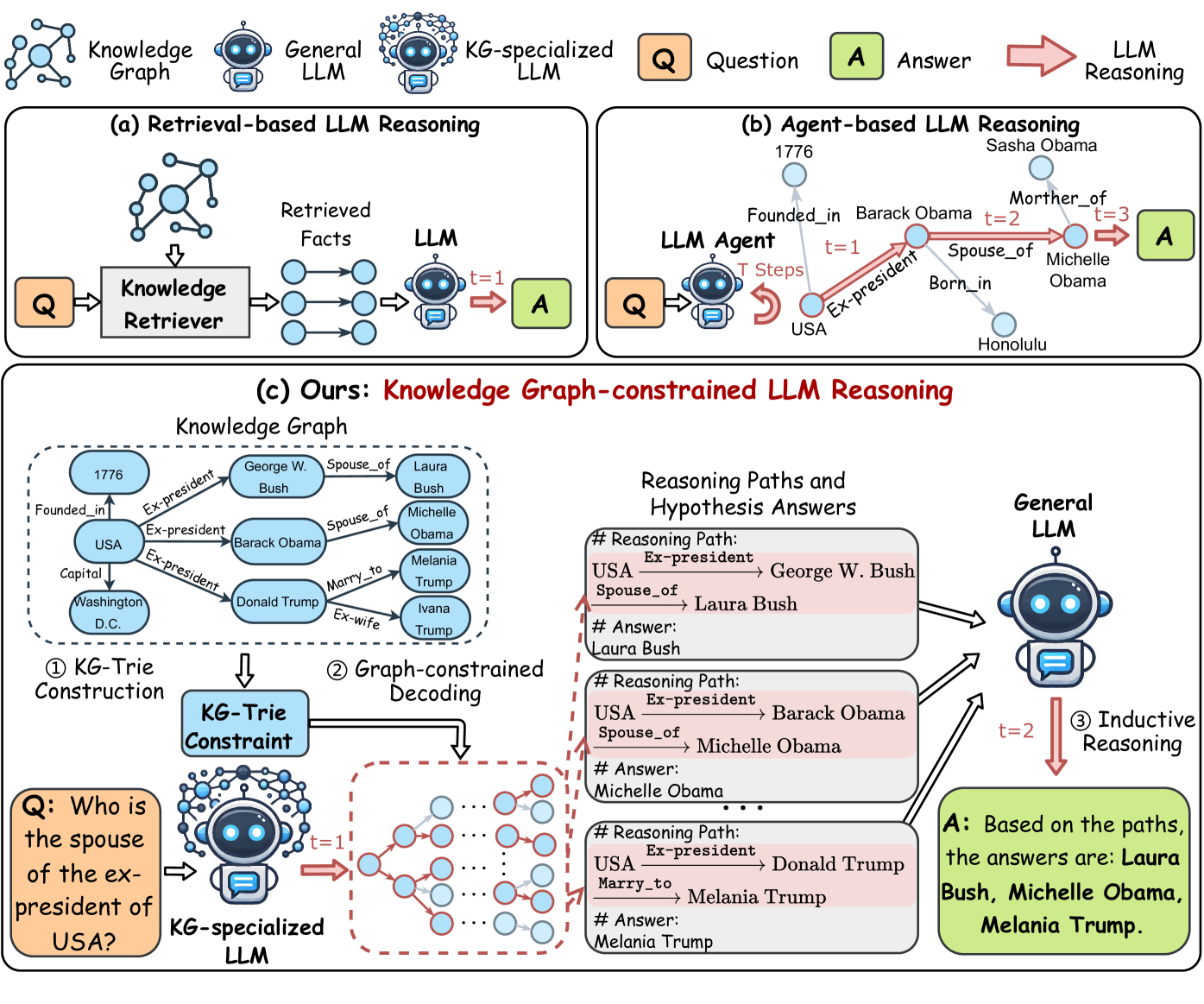

The image is a technical diagram comparing three approaches to Large Language Model (LLM) reasoning for question answering over structured knowledge: (a) Retrieval-based LLM Reasoning, (b) Agent-based LLM Reasoning, and (c) the proposed method, "Knowledge Graph-constrained LLM Reasoning." A shared set of icons and flow arrows depicts the process for each method.

### Components

The diagram is divided into three main panels, labeled (a), (b), and (c). A legend at the top defines the visual symbols used throughout:

* **Knowledge Graph**: Represented by a network of blue nodes and edges.

* **General LLM**: Represented by a blue robot icon.

* **KG-specialized LLM**: Represented by a blue robot icon with a network overlay.

* **Question (Q)**: Represented by an orange square with a "Q".

* **Answer (A)**: Represented by a green square with an "A".

* **LLM Reasoning**: Represented by a red arrow.

### Detailed Analysis

#### Panel (a): Retrieval-based LLM Reasoning

* **Flow**: Question (Q) → Knowledge Retriever → Retrieved Facts (blue nodes) → General LLM (t=1) → Answer (A).

* **Description**: This is a linear pipeline. A question is sent to a "Knowledge Retriever" module, which fetches relevant facts from a knowledge graph. These retrieved facts are then fed into a General LLM, which performs reasoning in a single step (t=1) to produce the final answer.
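The pipeline in panel (a) can be sketched in a few lines. This is a toy illustration only: the word-overlap retriever, the prompt layout, and the `llm` callable are assumptions made for the example and are not taken from the diagram. The triples are copied from the example subgraph in panel (c).

```python
import re

# Triples (head, relation, tail) copied from the example subgraph in panel (c).
TRIPLES = [
    ("1776", "Founded_in", "USA"),
    ("USA", "Capital", "Washington D.C."),
    ("USA", "Ex-president", "George W. Bush"),
    ("USA", "Ex-president", "Barack Obama"),
    ("USA", "Ex-president", "Donald Trump"),
    ("George W. Bush", "Spouse_of", "Laura Bush"),
    ("Barack Obama", "Spouse_of", "Michelle Obama"),
    ("Donald Trump", "Marry_to", "Melania Trump"),
    ("Donald Trump", "Ex-wife", "Ivana Trump"),
]

def tokenize(text):
    """Lowercase and split into alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, triples, k=3):
    """Hypothetical retriever: rank triples by word overlap with the question."""
    q_words = tokenize(question)
    scored = [(len(q_words & tokenize(" ".join(t))), i, t) for i, t in enumerate(triples)]
    scored.sort(key=lambda item: (-item[0], item[1]))  # best score first, stable on ties
    return [t for score, _, t in scored[:k] if score > 0]

def build_prompt(question, facts):
    """Assumed prompt layout: retrieved facts followed by the question."""
    fact_lines = "\n".join(f"({h}, {r}, {t})" for h, r, t in facts)
    return f"Facts:\n{fact_lines}\nQuestion: {question}\nAnswer:"

def answer(question, triples, llm, k=3):
    """Single-step reasoning: exactly one call to `llm` (any str -> str callable)."""
    return llm(build_prompt(question, retrieve(question, triples, k)))

QUESTION = "Who is the spouse of the ex-president of USA?"
facts = retrieve(QUESTION, TRIPLES, k=3)
```

With `k=3`, this toy retriever returns only the three `Ex-president` triples, so the single LLM call never sees the spouse facts.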

#### Panel (b): Agent-based LLM Reasoning

* **Flow**: Question (Q) → LLM Agent → (Multi-step graph traversal) → Answer (A).

* **Description**: An LLM Agent interacts with a knowledge graph over multiple time steps (T Steps). The example shows a path: `USA` → (Ex-president, t=1) → `Barack Obama` → (Spouse_of, t=2) → `Michelle Obama` → (Mother_of, t=3) → `Sasha Obama`. The agent navigates the graph step-by-step to arrive at an answer.
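A minimal sketch of the step-by-step traversal in panel (b), assuming the agent makes one decision per hop. The `choose_edge` callback stands in for the LLM agent and is hypothetical; the edges are the ones named in the example path.

```python
# Outgoing edges for the example path shown in panel (b).
EDGES = {
    "USA": [("Ex-president", "Barack Obama")],
    "Barack Obama": [("Spouse_of", "Michelle Obama")],
    "Michelle Obama": [("Mother_of", "Sasha Obama")],
}

def run_agent(start, choose_edge, max_steps):
    """Walk the graph one hop per step; `choose_edge` stands in for the LLM agent."""
    current, path = start, [start]
    for t in range(1, max_steps + 1):
        candidates = EDGES.get(current, [])
        if not candidates:
            break  # dead end: no outgoing edges to follow
        relation, next_entity = choose_edge(t, current, candidates)  # one agent call per step
        path += [relation, next_entity]
        current = next_entity
    return path

def first_edge_policy(t, entity, candidates):
    """Placeholder policy: always take the first available edge."""
    return candidates[0]

path = run_agent("USA", first_edge_policy, max_steps=3)
```

Each iteration of the loop corresponds to one time step in the diagram, so a path of T hops costs T agent calls.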

#### Panel (c): Ours: Knowledge Graph-constrained LLM Reasoning

This panel is the most detailed and is subdivided into several components.

**1. Knowledge Graph (Example Subgraph)**

* A dashed box contains an example knowledge graph with entities and relations:

    * `1776` → (Founded_in) → `USA`

    * `USA` → (Capital) → `Washington D.C.`

    * `USA` → (Ex-president) → `George W. Bush`, `Barack Obama`, `Donald Trump`

    * `George W. Bush` → (Spouse_of) → `Laura Bush`

    * `Barack Obama` → (Spouse_of) → `Michelle Obama`

    * `Donald Trump` → (Marry_to) → `Melania Trump`; (Ex-wife) → `Ivana Trump`
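For readers who want to experiment with the example, the subgraph can be written as a list of (head, relation, tail) triples. The adjacency-map representation and the names `TRIPLES`, `build_adjacency`, and `ADJ` are illustrative choices, not something the diagram specifies.

```python
from collections import defaultdict

# Triples (head, relation, tail) transcribed from the example subgraph above.
TRIPLES = [
    ("1776", "Founded_in", "USA"),
    ("USA", "Capital", "Washington D.C."),
    ("USA", "Ex-president", "George W. Bush"),
    ("USA", "Ex-president", "Barack Obama"),
    ("USA", "Ex-president", "Donald Trump"),
    ("George W. Bush", "Spouse_of", "Laura Bush"),
    ("Barack Obama", "Spouse_of", "Michelle Obama"),
    ("Donald Trump", "Marry_to", "Melania Trump"),
    ("Donald Trump", "Ex-wife", "Ivana Trump"),
]

def build_adjacency(triples):
    """Map each head entity to its outgoing (relation, tail) edges."""
    adjacency = defaultdict(list)
    for head, relation, tail in triples:
        adjacency[head].append((relation, tail))
    return dict(adjacency)

ADJ = build_adjacency(TRIPLES)
```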

**2. Process Flow**

* **Step ①: KG-Trie Construction**: The knowledge graph is processed to create a "KG-Trie Constraint" data structure.

* **Step ②: Graph-constrained Decoding**:

    * **Input**: The question "Q: Who is the spouse of the ex-president of USA?" is fed into a "KG-specialized LLM".

    * **Process**: The LLM's decoding process is constrained by the KG-Trie. The diagram shows a branching path of possible token sequences (red and blue nodes), guided by the graph structure.

    * **Output**: This generates multiple "Reasoning Paths and Hypothesis Answers".

* **Reasoning Paths and Hypothesis Answers (Output of Step ②)**:

    * **Path 1**: `USA` → (Ex-president) → `George W. Bush` → (Spouse_of) → `Laura Bush`. **Hypothesis Answer**: Laura Bush.

    * **Path 2**: `USA` → (Ex-president) → `Barack Obama` → (Spouse_of) → `Michelle Obama`. **Hypothesis Answer**: Michelle Obama.

    * **Path 3**: `USA` → (Ex-president) → `Donald Trump` → (Marry_to) → `Melania Trump`. **Hypothesis Answer**: Melania Trump.

* **Step ③: Inductive Reasoning**: All generated reasoning paths and their hypothesis answers are fed into a General LLM (t=2). This LLM performs inductive reasoning to synthesize a final, consolidated answer.

* **Final Answer (A)**: "Based on the paths, the answers are: **Laura Bush, Michelle Obama, Melania Trump.**"
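Steps ① and ② can be sketched as a trie over KG paths plus a decoder that may only extend a prefix with tokens the trie allows. This is a simplified illustration under several assumptions: each entity or relation name is treated as a single token (a real LLM would operate on tokenizer subwords), only paths of exactly two hops from `USA` are indexed, the hand-written `RELATION_SCORE` table stands in for the KG-specialized LLM's token scores, and the beam width of 3 is arbitrary.

```python
TRIPLES = [
    ("1776", "Founded_in", "USA"),
    ("USA", "Capital", "Washington D.C."),
    ("USA", "Ex-president", "George W. Bush"),
    ("USA", "Ex-president", "Barack Obama"),
    ("USA", "Ex-president", "Donald Trump"),
    ("George W. Bush", "Spouse_of", "Laura Bush"),
    ("Barack Obama", "Spouse_of", "Michelle Obama"),
    ("Donald Trump", "Marry_to", "Melania Trump"),
    ("Donald Trump", "Ex-wife", "Ivana Trump"),
]

def enumerate_paths(triples, start, hops):
    """List every path of exactly `hops` edges from `start`, as flat tuples."""
    paths = [(start,)]
    for _ in range(hops):
        paths = [p + (r, t) for p in paths for h, r, t in triples if h == p[-1]]
    return paths

def build_trie(sequences):
    """Step 1: nested-dict trie; each root-to-leaf walk is one valid KG path."""
    root = {}
    for seq in sequences:
        node = root
        for token in seq:
            node = node.setdefault(token, {})
    return root

# Hypothetical stand-in for the KG-specialized LLM's preference over relations.
RELATION_SCORE = {"Ex-president": 1.0, "Spouse_of": 1.0, "Marry_to": 0.9, "Ex-wife": 0.2}

def score(prefix, token):
    return RELATION_SCORE.get(token, 0.0)  # entity tokens score 0 in this toy model

def constrained_beam_search(trie, score_fn, beam_width):
    """Step 2: beam search that only expands tokens present under the current trie node."""
    beams = [((), trie, 0.0)]  # (prefix, trie node, cumulative score)
    finished = []
    while beams:
        expanded = []
        for prefix, node, total in beams:
            if not node:  # leaf reached: the prefix is a complete KG path
                finished.append((prefix, total))
                continue
            for token, child in node.items():
                expanded.append((prefix + (token,), child, total + score_fn(prefix, token)))
        expanded.sort(key=lambda beam: -beam[2])
        beams = expanded[:beam_width]
    finished.sort(key=lambda item: -item[1])
    return [prefix for prefix, _ in finished[:beam_width]]

all_paths = enumerate_paths(TRIPLES, "USA", hops=2)
trie = build_trie(all_paths)
paths = constrained_beam_search(trie, score, beam_width=3)
hypothesis_answers = [p[-1] for p in paths]  # last entity on each path
```

Because every expansion comes from the trie, the decoder cannot emit a path that is absent from the graph. Step ③ is not modeled here: in the diagram a General LLM reads the paths and hypothesis answers, whereas this sketch only collects the final entity of each path.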

### Key Observations

1. **Methodological Progression**: The diagram shows an evolution from simple retrieval (a), to interactive but potentially unguided exploration (b), to a structured, constrained generation process (c) that explicitly uses the knowledge graph to guide the LLM's reasoning path.

2. **Multi-Path Generation**: The core innovation in (c) is the generation of multiple valid reasoning paths from the KG, rather than a single path or a set of disconnected facts.

3. **Two-Stage LLM Use**: The proposed method uses two LLMs: a KG-specialized LLM for constrained path generation and a General LLM for final inductive synthesis.

4. **Spatial Layout**: Panel (c) is the largest and is positioned at the bottom, emphasizing it as the main contribution. The flow within (c) moves from left (input/KG) to center (constrained decoding) to right (output/synthesis).

### Interpretation

The diagram argues for a hybrid reasoning architecture that combines the structured, factual grounding of a Knowledge Graph with the generative and inductive reasoning capabilities of LLMs.

* **Problem with (a)**: Retrieval-based methods may miss complex relational paths or provide facts without clear connective logic for the LLM to use.

* **Problem with (b)**: Agent-based methods can be computationally expensive and may wander or follow suboptimal paths in large graphs without clear guidance.

* **Solution in (c)**: The "KG-constrained" approach builds the graph structure directly into the LLM's generation process. Constraining decoding with a KG-Trie ensures that every generated token sequence corresponds to a valid path in the knowledge graph, so the output is not just an answer but also an *explainable reasoning path* (or several) leading to it. The final inductive reasoning step lets the model aggregate and validate these paths into one consolidated answer, which covers questions with multiple correct answers (such as the spouses of multiple ex-presidents). The method aims to be more faithful to the source knowledge, more explainable, and more robust than the other two approaches.