## Diagram: Comparative Analysis of LLM Reasoning Methods

### Overview

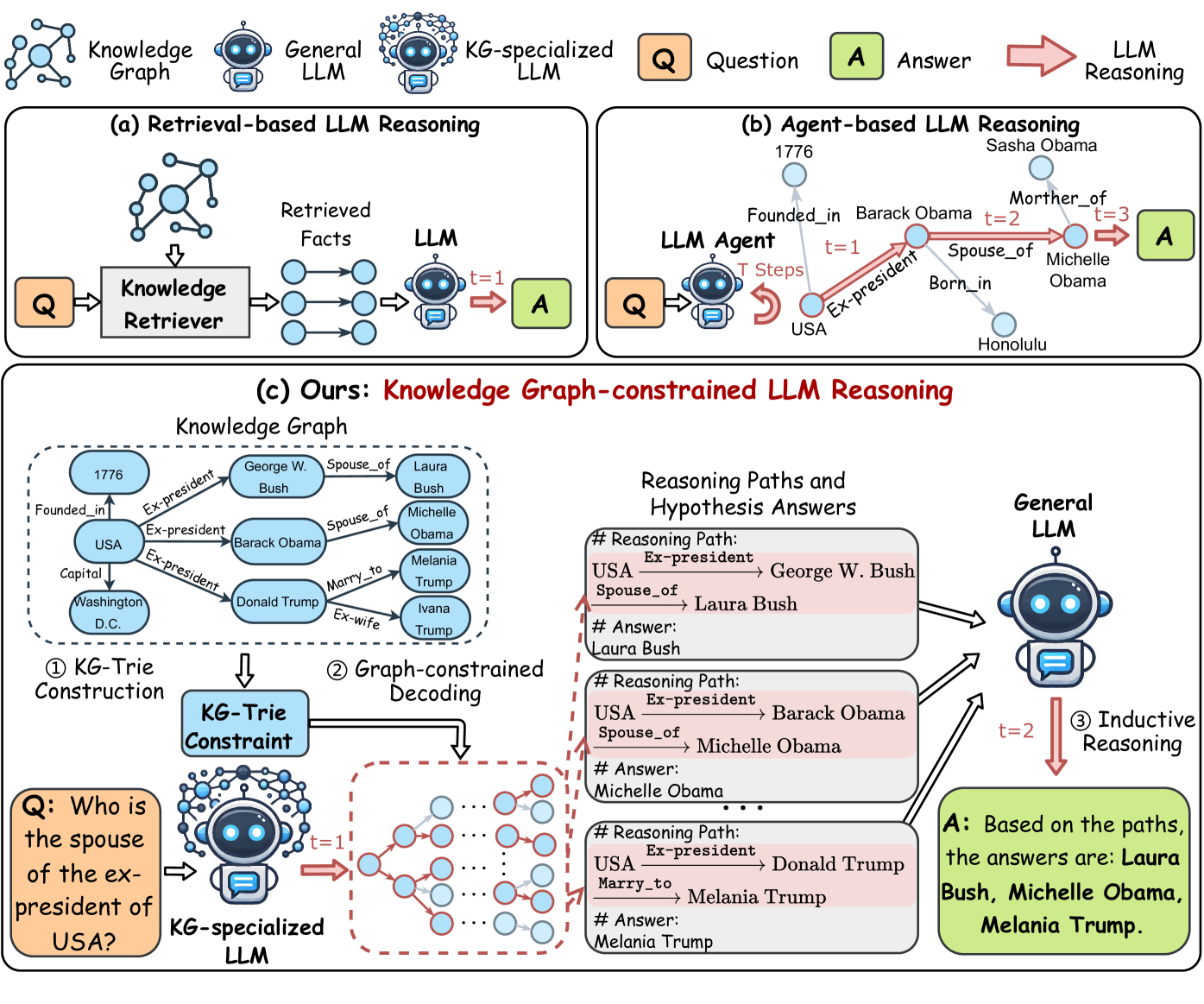

The diagram compares three approaches to Large Language Model (LLM) reasoning:

1. **Retrieval-based LLM Reasoning**

2. **Agent-based LLM Reasoning**

3. **Knowledge Graph-constrained LLM Reasoning (Proposed Method)**

It illustrates workflows, components, and reasoning paths for each method, with a focus on knowledge graph integration in the proposed approach.

---

### Components

#### Header Section

- **Knowledge Graph**: Visualized as interconnected nodes (e.g., "USA", "Barack Obama", "Michelle Obama").

- **General LLM**: Represented by a robot icon with a speech bubble.

- **KG-specialized LLM**: Robot icon with a speech bubble surrounded by interconnected nodes.

- **Question (Q)**: Orange box labeled "Q".

- **Answer (A)**: Green box labeled "A".

- **LLM Reasoning**: Arrows labeled "LLM Reasoning" connect components.

#### Main Diagram (Bottom Section)

1. **Knowledge Graph**:

- Nodes: "USA", "George W. Bush", "Barack Obama", "Donald Trump", "Michelle Obama", "Melania Trump", "Laura Bush", "Ivana Trump".

- Edges: Relationships like "Ex-president", "Spouse_of", "Born_in", "Marry_to".

- Example path: "USA" -[Ex-president]→ "Barack Obama" -[Spouse_of]→ "Michelle Obama".

2. **KG-Trie Construction**:

- Builds the KG-Trie, a trie (prefix tree) derived from the knowledge graph.

3. **Graph-constrained Decoding**:

- Visualized as a tree of decoding steps, with nodes labeled "t=1", "t=2", etc.

4. **Reasoning Paths and Hypothesis Answers**:

- Three numbered reasoning paths:

- **Path 1**: USA → George W. Bush → Laura Bush (Answer: Laura Bush).

- **Path 2**: USA → Barack Obama → Michelle Obama (Answer: Michelle Obama).

- **Path 3**: USA → Donald Trump → Melania Trump (Answer: Melania Trump).

5. **Inductive Reasoning**:

- Final answer aggregates all paths: "Laura Bush, Michelle Obama, Melania Trump".
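
The knowledge graph and the three reasoning paths listed above can be sketched in a few lines of Python. This is a minimal illustration rather than the diagram's actual implementation: the `(head, relation, tail)` triple layout, the edge directions, and the helper names `build_index` and `follow_relations` are assumptions made for the example, and only the edges named in the three paths are included.

```python
from collections import defaultdict

# Edges taken from the three reasoning paths in the diagram.
# The (head, relation, tail) layout and edge directions are assumptions.
TRIPLES = [
    ("USA", "Ex-president", "George W. Bush"),
    ("USA", "Ex-president", "Barack Obama"),
    ("USA", "Ex-president", "Donald Trump"),
    ("George W. Bush", "Spouse_of", "Laura Bush"),
    ("Barack Obama", "Spouse_of", "Michelle Obama"),
    ("Donald Trump", "Spouse_of", "Melania Trump"),
]

def build_index(triples):
    """Map (head, relation) -> list of tails, preserving insertion order."""
    index = defaultdict(list)
    for head, relation, tail in triples:
        index[(head, relation)].append(tail)
    return index

def follow_relations(index, start, relations):
    """Return every path that starts at `start` and follows `relations` in order."""
    paths = [[start]]
    for relation in relations:
        paths = [
            path + [tail]
            for path in paths
            for tail in index.get((path[-1], relation), [])
        ]
    return paths

index = build_index(TRIPLES)
paths = follow_relations(index, "USA", ["Ex-president", "Spouse_of"])
answers = [path[-1] for path in paths]  # one hypothesis answer per path

for path in paths:
    print(" -> ".join(path))
print("Aggregated answer:", ", ".join(answers))
```

Each path ends in one hypothesis answer, and the final line joins them in the same way the diagram's last stage combines the three paths.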

---

### Detailed Analysis

#### Retrieval-based LLM Reasoning (a)

- **Workflow**:

- Question (Q) → Knowledge Retriever → Retrieved Facts → LLM → Answer (A).

- **Key Elements**:

- "Retrieved Facts" represented as interconnected nodes.

- Direct flow from retrieved facts to LLM.
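
The retrieve-then-read flow above can be sketched as follows. The diagram does not say how the Knowledge Retriever ranks facts, so the token-overlap scoring here is a stand-in, and `retrieve_facts`, `build_prompt`, and the toy fact list are hypothetical names and data for illustration. The final LLM call is left out; the sketch stops at the prompt that would be sent.

```python
import re

# Toy fact store; the triples mirror edges named in the diagram.
FACTS = [
    ("USA", "Ex-president", "Barack Obama"),
    ("Barack Obama", "Spouse_of", "Michelle Obama"),
    ("USA", "Ex-president", "Donald Trump"),
    ("Donald Trump", "Spouse_of", "Melania Trump"),
]

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve_facts(question, facts, k=2):
    """Hypothetical retriever: rank facts by token overlap with the question."""
    q_tokens = tokenize(question)
    scored = [(len(q_tokens & tokenize(" ".join(fact))), fact) for fact in facts]
    scored.sort(key=lambda pair: pair[0], reverse=True)  # stable: ties keep order
    return [fact for score, fact in scored[:k] if score > 0]

def build_prompt(question, retrieved):
    """Format the retrieved facts and the question into a single LLM prompt."""
    lines = [f"{head} --{relation}--> {tail}" for head, relation, tail in retrieved]
    return "Facts:\n" + "\n".join(lines) + f"\n\nQuestion: {question}\nAnswer:"

question = "Who is the spouse of Barack Obama?"
retrieved = retrieve_facts(question, FACTS)
prompt = build_prompt(question, retrieved)
print(prompt)
```

In this setup the LLM only sees whatever the retriever returns, so a fact that is missed at retrieval time is unavailable at answer time.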

#### Agent-based LLM Reasoning (b)

- **Workflow**:

- Question (Q) → LLM Agent → T Steps → Answer (A).

- **Key Elements**:

- Example steps: "Founded_in", "Ex-president", "Spouse_of".

- Temporal progression (t=1, t=2, t=3).
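
A step-by-step agent loop of this kind might look like the sketch below. The LLM agent is replaced by a scripted policy so the example runs on its own; `scripted_policy`, `run_agent`, and the two-edge toy graph are assumptions for illustration, not part of the diagram.

```python
# Toy graph: entity -> relation -> list of neighbouring entities.
# Only edges named in the diagram are included.
GRAPH = {
    "USA": {"Ex-president": ["Barack Obama"]},
    "Barack Obama": {"Spouse_of": ["Michelle Obama"]},
}

def scripted_policy(step, entity, candidate_relations):
    """Stand-in for the LLM agent: choose the next relation, or None to stop.

    A real agent would prompt an LLM with the current entity and candidates;
    this fixed plan is purely illustrative.
    """
    plan = {1: "Ex-president", 2: "Spouse_of"}
    choice = plan.get(step)
    return choice if choice in candidate_relations else None

def run_agent(graph, start, policy, max_steps=3):
    """Walk the graph one relation per step, for at most `max_steps` steps."""
    entity, trace = start, []
    for step in range(1, max_steps + 1):
        candidates = list(graph.get(entity, {}))
        relation = policy(step, entity, candidates)
        if relation is None:
            break
        entity = graph[entity][relation][0]  # follow the first matching edge
        trace.append((step, relation, entity))
    return entity, trace

answer, trace = run_agent(GRAPH, "USA", scripted_policy)
for step, relation, entity in trace:
    print(f"t={step}: {relation} -> {entity}")
print("Answer:", answer)
```

Every step costs one policy call, which in a real system is one LLM call, so the number of calls grows with the number of steps T.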

#### Knowledge Graph-constrained LLM Reasoning (c)

- **Workflow**:

- **KG-Trie Construction**: Builds a trie (prefix tree) from the knowledge graph.

- **Graph-constrained Decoding**: Uses the trie to guide reasoning.

- **Inductive Reasoning**: Combines multiple paths for a comprehensive answer.

- **Key Elements**:

- Explicit use of "KG-Trie Constraint" to limit LLM exploration.

- Multiple reasoning paths with hypothesis answers.
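
The first two stages can be illustrated with a small trie and a greedy decoder that may only pick tokens the trie allows. This is a sketch under simplifying assumptions: whole entity and relation names are used as tokens (a real system would use the LLM tokenizer's tokens), `toy_scores` stands in for the model's next-token scores, and `build_trie` and `constrained_decode` are hypothetical names.

```python
# Reasoning paths from the diagram, written as token sequences.
# Using whole names as tokens is an assumption to keep the sketch short.
PATHS = [
    ["USA", "Ex-president", "George W. Bush", "Spouse_of", "Laura Bush"],
    ["USA", "Ex-president", "Barack Obama", "Spouse_of", "Michelle Obama"],
    ["USA", "Ex-president", "Donald Trump", "Spouse_of", "Melania Trump"],
]
END = "<end>"  # marks the end of a complete path

def build_trie(paths):
    """Nested-dict trie: each key is a token; END marks a complete path."""
    root = {}
    for path in paths:
        node = root
        for token in path:
            node = node.setdefault(token, {})
        node[END] = {}
    return root

def constrained_decode(trie, score_fn):
    """Greedy decoding in which only tokens allowed by the trie can be chosen."""
    node, output = trie, []
    while True:
        allowed = list(node)  # the KG-Trie constraint at this step
        token = max(allowed, key=lambda tok: score_fn(output, tok))
        if token == END:
            return output
        output.append(token)
        node = node[token]

def toy_scores(prefix, token):
    """Stand-in for LLM next-token scores; it simply prefers 'Barack Obama'."""
    return 1.0 if token == "Barack Obama" else 0.0

trie = build_trie(PATHS)
decoded = constrained_decode(trie, toy_scores)
print(" -> ".join(decoded))
```

Because `score_fn` is only ever evaluated on the children of the current trie node, a token outside the graph cannot be selected, whatever score the model would have given it.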

---

### Key Observations

1. **Structured Reasoning**:

- The proposed method (c) constrains LLM decoding with a trie built from the knowledge graph, so the generated reasoning paths stay within the graph.

2. **Multiple Hypotheses**:

- The answer aggregates results from different reasoning paths (e.g., spouses of multiple ex-presidents).

3. **Temporal Progression**:

- Agent-based reasoning (b) proceeds through sequential time steps (t=1, t=2, t=3) taken by the agent, while in the proposed method (c) the labels t=1, t=2 mark steps of graph-constrained decoding.

4. **Knowledge Graph Integration**:

- The KG-constrained method leverages relationships (e.g., "Spouse_of", "Ex-president") for accurate answers.

---

### Interpretation

- **Purpose**: Demonstrates how knowledge graphs improve LLM reasoning by providing structured context.

- **Advantages of Proposed Method**:

- Reduces reliance on ad-hoc retrieval (a) or sequential agent steps (b).

- Explicitly models relationships (e.g., "Spouse_of") to avoid incorrect inferences.

- **Notable Trends**:

- The answer aggregates multiple valid hypotheses because the question has more than one correct answer (e.g., the spouses of several ex-presidents).

- **Underlying Logic**:

- Knowledge graphs act as a "constraint" to guide LLMs toward factually grounded answers, mitigating hallucination risks.
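
To make "factually grounded" concrete, the sketch below checks whether every hop of a reasoning path exists in the graph. Note that this is a post-hoc check, a simpler technique than the decode-time constraint the diagram shows, and the `is_grounded` helper and the four-triple graph are assumptions for illustration.

```python
# Toy knowledge graph as a set of (head, relation, tail) triples.
KG = {
    ("USA", "Ex-president", "Barack Obama"),
    ("Barack Obama", "Spouse_of", "Michelle Obama"),
    ("USA", "Ex-president", "Donald Trump"),
    ("Donald Trump", "Spouse_of", "Melania Trump"),
}

def is_grounded(path, kg):
    """True if every (head, relation, tail) hop in the path is a KG triple.

    `path` alternates entities and relations: [e0, r1, e1, r2, e2, ...].
    """
    if len(path) < 3 or len(path) % 2 == 0:
        return False  # not a well-formed entity/relation sequence
    hops = zip(path[0:-2:2], path[1::2], path[2::2])
    return all(hop in kg for hop in hops)

valid_path = ["USA", "Ex-president", "Barack Obama", "Spouse_of", "Michelle Obama"]
hallucinated_path = ["USA", "Ex-president", "Barack Obama", "Spouse_of", "Melania Trump"]

print(is_grounded(valid_path, KG))
print(is_grounded(hallucinated_path, KG))
```

The second path mixes two real entities with an edge that is not in the graph, which is the kind of output a graph constraint rules out.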

This diagram emphasizes the importance of structured knowledge integration in enhancing LLM reasoning capabilities.