## Bubble Chart: LLM Agent Benchmark Score vs. Release Time

### Overview

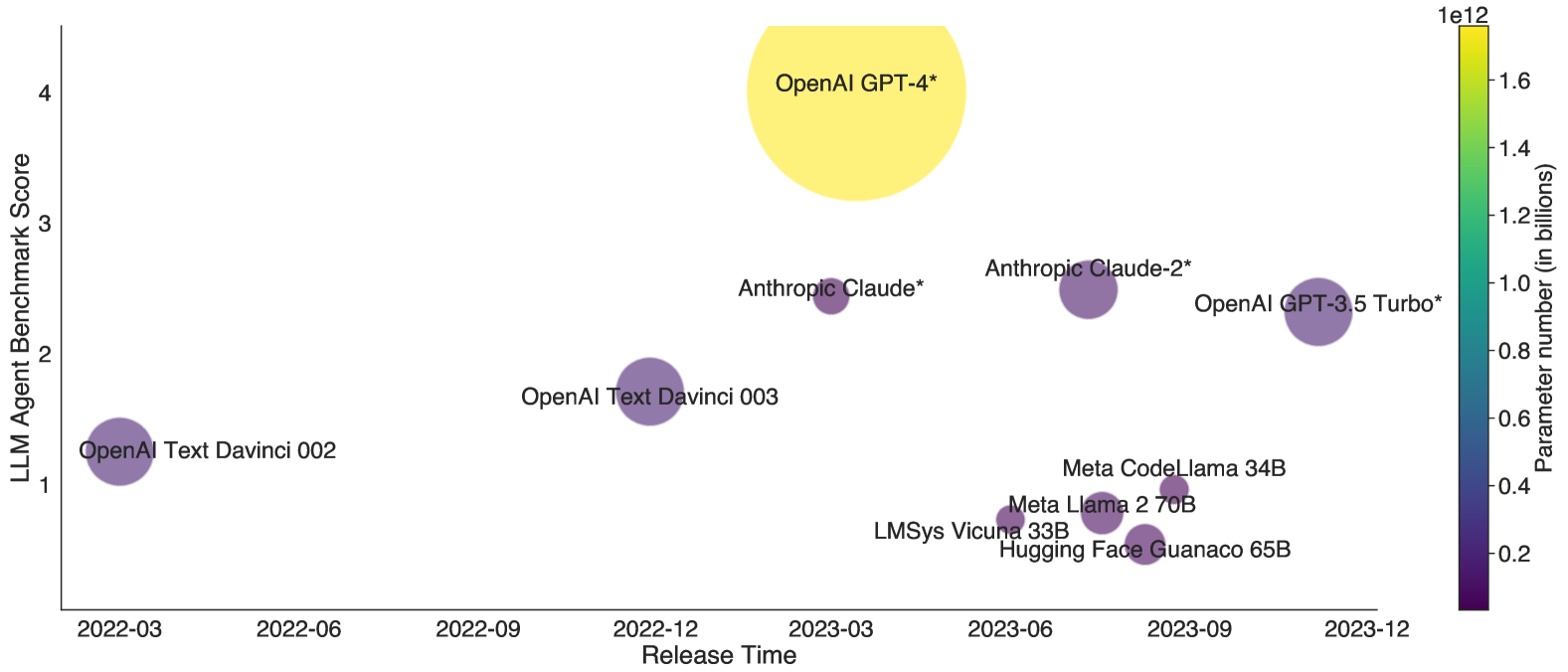

This is a bubble chart plotting various Large Language Models (LLMs) based on their release date and performance on an "LLM Agent Benchmark." The size and color of each bubble represent the model's parameter count, with a color scale provided. The chart visualizes the evolution of model performance and scale over time from early 2022 to late 2023.

### Components/Axes

* **Chart Type:** Bubble Chart / Scatter Plot with size and color encoding.

* **X-Axis:** "Release Time". The scale is chronological, with major tick marks labeled: `2022-03`, `2022-06`, `2022-09`, `2022-12`, `2023-03`, `2023-06`, `2023-09`, `2023-12`.

* **Y-Axis:** "LLM Agent Benchmark Score". The scale is linear, with labeled major tick marks at `1`, `2`, `3`, `4`. The axis extends slightly below `1`, since several bubbles sit at Y ≈ 0.7-0.9.

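* **Color/Legend (Right Side):** A vertical color bar titled "Parameter number (in billions)". The scale appears to be linear: the labeled ticks (`0.2`, `0.4`, `0.6`, `0.8`, `1.0`, `1.2`, `1.4`, `1.6`) are evenly spaced, and the `1e12` at the top is a multiplier applied to every tick, not a sign of a logarithmic axis. The gradient runs from dark purple (low) to bright yellow (high). Read with the multiplier, `1.6` corresponds to 1.6 × 10^12, i.e. 1.6 trillion (1,600 billion) parameters. The "in billions" in the title does not match this multiplier, so the trillion-based reading is used in the analysis below.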

* **Data Points (Bubbles):** Each bubble is labeled with the model name. The placement, size, and color of each bubble encode three dimensions of data: release time (x), benchmark score (y), and parameter count (size/color).
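
The color bar reading described above comes down to simple arithmetic, sketched here in Python. The tick labels and the `1e12` multiplier are taken from the description; the assumption that the multiplier applies uniformly to every tick, and the helper names `ticks_to_parameter_counts` and `is_evenly_spaced`, are illustrative and not part of the chart. The spacing check only shows what kind of scale the labels are consistent with; it does not prove how the figure was drawn.

```python
import math

# Tick labels as transcribed from the color bar description.
TICK_LABELS = [0.2, 0.4, 0.6, 0.8, 1.0, 1.2, 1.4, 1.6]
# Multiplier shown at the top of the color bar (assumed to apply to every tick).
MULTIPLIER = 1e12


def ticks_to_parameter_counts(ticks, multiplier):
    """Convert displayed tick labels to absolute parameter counts."""
    return [tick * multiplier for tick in ticks]


def is_evenly_spaced(values, rel_tol=1e-9):
    """Return True when consecutive differences are all (nearly) equal."""
    steps = [b - a for a, b in zip(values, values[1:])]
    return all(math.isclose(step, steps[0], rel_tol=rel_tol) for step in steps)


counts = ticks_to_parameter_counts(TICK_LABELS, MULTIPLIER)
for label, count in zip(TICK_LABELS, counts):
    # Dividing by 1e9 expresses the absolute count in billions.
    print(f"tick {label:.1f} -> {count / 1e9:,.0f} billion parameters")
print("labels form an arithmetic progression:", is_evenly_spaced(TICK_LABELS))
```

Labels that form an arithmetic progression are what a linear color scale would typically show; a logarithmic scale would usually be labeled with powers of ten instead.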

### Detailed Analysis

**Data Points (Listed by approximate release time, from left to right):**

1. **OpenAI Text Davinci 002**

* **Position:** X ≈ March 2022, Y ≈ 1.2.

* **Size/Color:** Medium-small bubble, dark purple. Color corresponds to the low end of the parameter scale, likely < 0.2 trillion (200 billion) parameters.

2. **OpenAI Text Davinci 003**

* **Position:** X ≈ December 2022, Y ≈ 1.8.

* **Size/Color:** Medium bubble, purple. Slightly larger and lighter than Davinci 002, indicating a higher parameter count, perhaps in the 0.2-0.4 trillion range.

3. **Anthropic Claude\***

* **Position:** X ≈ March 2023, Y ≈ 2.5.

* **Size/Color:** Medium-small bubble, purple. Similar in size/color to Davinci 003, suggesting a comparable parameter count.

4. **OpenAI GPT-4\***

* **Position:** X ≈ March 2023, Y = 4.0 (at the top of the axis).

* **Size/Color:** Very large bubble, bright yellow. This is the largest and highest-scoring model. Its color matches the top of the scale (~1.6 trillion parameters or more). It is a clear outlier in both performance and scale.

5. **Anthropic Claude-2\***

* **Position:** X ≈ July 2023, Y ≈ 2.6.

* **Size/Color:** Medium bubble, purple. Slightly larger than the original Claude, indicating an increase in parameters.

6. **OpenAI GPT-3.5 Turbo\***

* **Position:** X ≈ November 2023, Y ≈ 2.3.

* **Size/Color:** Medium-large bubble, purple. Larger than the Claude models but smaller than GPT-4. Its color suggests a parameter count in the mid-range of the scale (perhaps ~0.6-0.8 trillion).

7. **Cluster of Models (Mid-2023):** A group of four smaller, dark purple bubbles clustered between June and September 2023, all with benchmark scores below 1.0.

* **LMSys Vicuna 33B:** X ≈ June 2023, Y ≈ 0.8.

* **Meta Llama 2 70B:** X ≈ July 2023, Y ≈ 0.9.

* **Hugging Face Guanaco 65B:** X ≈ August 2023, Y ≈ 0.7.

* **Meta CodeLlama 34B:** X ≈ September 2023, Y ≈ 0.8.

* **Size/Color:** All are small bubbles, dark purple. Their color places them at the very low end of the parameter scale (< 0.2 trillion), consistent with their names (33B, 70B, 65B, 34B = 0.033 to 0.07 trillion).
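
The unit conversion behind the last bullet (33B to 70B is 0.033 to 0.07 trillion) can be checked with a small sketch. The labels are copied from the list above; the parsing rule (a trailing number followed by `B` means billions of parameters) and the names `size_in_trillions` and `LOWEST_TICK_TRILLIONS` are assumptions made for illustration.

```python
import re

# Model labels as transcribed from the chart description.
OPEN_MODEL_LABELS = [
    "LMSys Vicuna 33B",
    "Meta Llama 2 70B",
    "Hugging Face Guanaco 65B",
    "Meta CodeLlama 34B",
]

# Lowest labeled tick on the color bar, read as trillions of parameters.
LOWEST_TICK_TRILLIONS = 0.2


def size_in_trillions(label):
    """Parse a trailing size suffix such as '70B' and return trillions of parameters."""
    match = re.search(r"(\d+(?:\.\d+)?)B$", label)
    if match is None:
        raise ValueError(f"no size suffix in label: {label!r}")
    return float(match.group(1)) / 1000.0  # billions -> trillions


sizes = {label: size_in_trillions(label) for label in OPEN_MODEL_LABELS}
all_below_lowest_tick = all(
    size < LOWEST_TICK_TRILLIONS for size in sizes.values()
)

for label, size in sizes.items():
    print(f"{label}: {size:.3f} trillion parameters")
print("all below the lowest labeled tick:", all_below_lowest_tick)
```

Every parsed size falls well under the 0.2 tick, which is consistent with the dark purple color of the whole cluster.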

### Key Observations

1. **Performance Leap:** There is a dramatic, isolated jump in benchmark score with the release of OpenAI GPT-4\* in early 2023. It sits at 4.0, the top of the plotted axis, well above every other model (the next highest is ≈ 2.6).

2. **General Upward Trend:** Excluding the outlier GPT-4, benchmark scores for the major commercial models rise gradually from early 2022 to mid-2023 (Davinci 002 ≈ 1.2, Davinci 003 ≈ 1.8, Claude ≈ 2.5, Claude-2 ≈ 2.6), with GPT-3.5 Turbo (≈ 2.3, late 2023) falling slightly below the Claude models.

3. **Parameter Count vs. Performance:** Higher parameter count (indicated by larger, yellower bubbles) generally correlates with higher benchmark scores, but not perfectly. For example, GPT-3.5 Turbo has a larger bubble than either Claude model yet scores lower (≈ 2.3 versus ≈ 2.5 and ≈ 2.6). The cluster of open-source models (Vicuna, Llama 2, etc.) has significantly lower scores and much smaller parameter counts.

4. **Temporal Clustering:** Model releases are not uniform. There was a cluster of activity in mid-2023, particularly among open-source models.

5. **Asterisks:** The labels for GPT-4, Claude, Claude-2, and GPT-3.5 Turbo include an asterisk (*), which likely denotes a specific version, API access, or a note not visible in the chart itself.
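
The upward trend in observation 2 can be put on a rough numerical footing with an ordinary least-squares fit. This is only a sketch: the (month, score) pairs are the approximate readings listed in the Detailed Analysis, GPT-4 is left out as the stated outlier, and the helper names `months_since` and `least_squares_slope` are hypothetical. Five eyeballed points do not support any strong statistical claim.

```python
# Approximate (release month, score) readings transcribed from the chart description.
# GPT-4 is omitted, following the "excluding the outlier" framing of observation 2.
READINGS = [
    ("OpenAI Text Davinci 002", (2022, 3), 1.2),
    ("OpenAI Text Davinci 003", (2022, 12), 1.8),
    ("Anthropic Claude", (2023, 3), 2.5),
    ("Anthropic Claude-2", (2023, 7), 2.6),
    ("OpenAI GPT-3.5 Turbo", (2023, 11), 2.3),
]


def months_since(start, date):
    """Whole months between two (year, month) pairs."""
    return (date[0] - start[0]) * 12 + (date[1] - start[1])


def least_squares_slope(xs, ys):
    """Slope of the ordinary least-squares line through (xs, ys)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


start = READINGS[0][1]
xs = [months_since(start, date) for _, date, _ in READINGS]
ys = [score for _, _, score in READINGS]
slope = least_squares_slope(xs, ys)
print(f"fitted slope: {slope:.3f} benchmark points per month")
```

With these readings the fitted slope is positive, roughly 0.066 points per month, even though the last point (GPT-3.5 Turbo) sits below the two Claude models.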

### Interpretation

This chart illustrates the rapid and competitive evolution of the LLM landscape between 2022 and 2023. It suggests that while benchmark scores improved incrementally with most new model releases, the field experienced a **paradigm shift** with the introduction of GPT-4. This model is a clear outlier in both capability (score) and scale (parameters), possibly reflecting an architectural or training breakthrough.

The data highlights a bifurcation in the market: **high-performance, likely closed-source models** (from OpenAI, Anthropic) occupying the upper part of the chart (higher score, larger scale), and a group of **smaller, open-source models** clustered in the lower-right (mid-2023 release, scores below 1.0, much smaller scale). This visualizes a trade-off between raw performance and accessibility.

The asterisks hint at important nuances: these benchmark scores may be contingent on specific prompting, evaluation frameworks, or model versions. The chart effectively argues that in the race for LLM agent capabilities, sheer scale (parameters) has been a primary, but not sole, driver of performance, with one model achieving a disproportionate leap ahead of its contemporaries.