## Heatmap: AI Model Performance and Parameter Count

### Overview

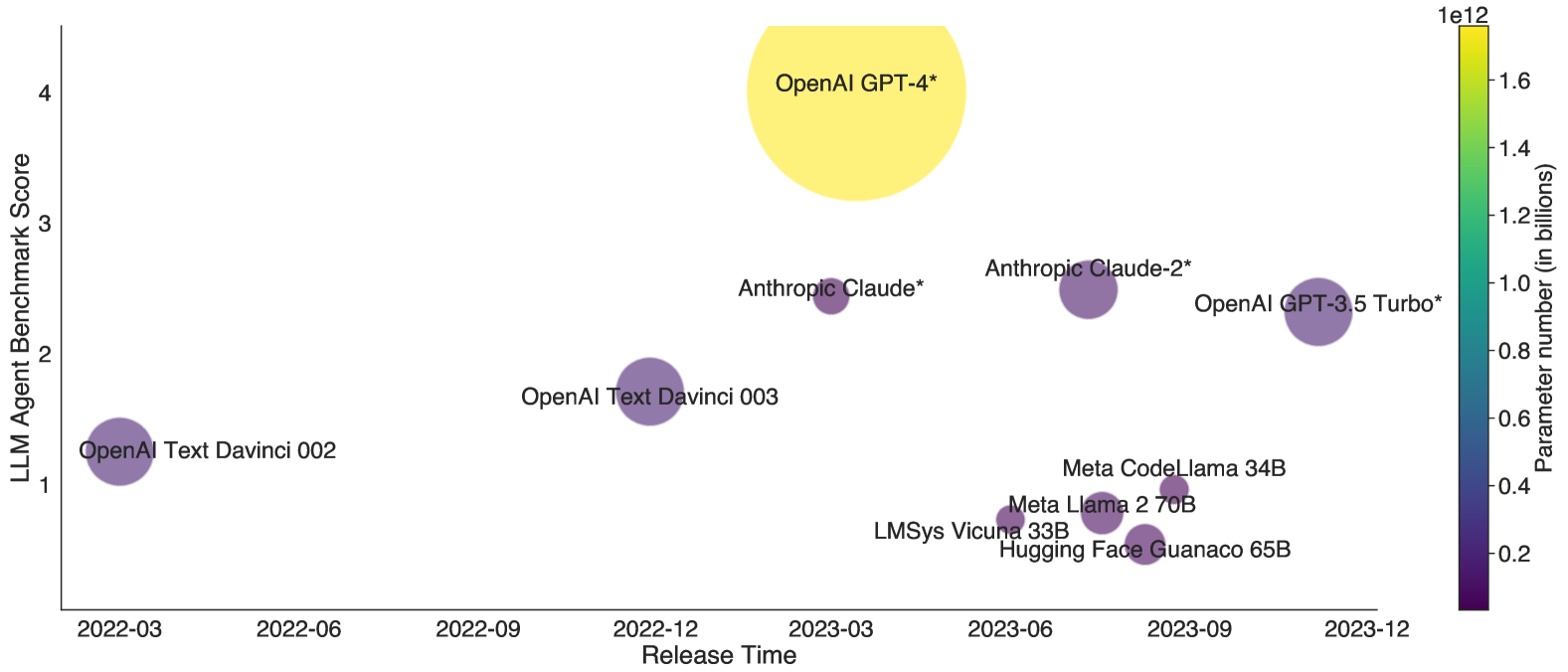

The heatmap plots AI models by release time (x-axis) and LLM (Large Language Model) benchmark score (y-axis). Color encodes each model's parameter count on a scale from purple (fewest parameters) to yellow (most parameters).

### Components/Axes

- **X-Axis**: Release Time (2022-03 to 2023-12)

- **Y-Axis**: LLM Benchmark Score (1 to 4)

- **Color Scale**: Parameter Count (purple to yellow)

- **Legend**: Models and their corresponding parameter counts
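
To make the color encoding concrete, here is a minimal sketch of how a parameter count could be mapped onto a purple-to-yellow scale. The two RGB endpoints and the linear interpolation are assumptions chosen for illustration (the chart's actual colormap is not specified here and is probably not linear in RGB), and the default bounds of 1.5 and 5 billion are simply the smallest and largest parameter counts reported for the plotted models.

```python
# Hypothetical endpoint colors; the figure's real colormap is not documented.
PURPLE = (68, 1, 84)      # assumed color for the lowest parameter count
YELLOW = (253, 231, 37)   # assumed color for the highest parameter count


def param_to_rgb(params_b, lo=1.5, hi=5.0):
    """Map a parameter count (in billions) to an RGB color.

    The count is normalized to [0, 1] between lo and hi, clamped,
    and then linearly interpolated between PURPLE and YELLOW.
    """
    t = (params_b - lo) / (hi - lo)
    t = min(1.0, max(0.0, t))  # clamp values outside the scale
    return tuple(round(a + t * (b - a)) for a, b in zip(PURPLE, YELLOW))


print(param_to_rgb(1.5))  # low end of the scale
print(param_to_rgb(5.0))  # high end of the scale
```

Values outside the assumed bounds are clamped to the nearest end of the scale rather than extrapolated.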

### Detailed Analysis

| Model | Release | Benchmark score | Parameters (billions) |
| --- | --- | --- | --- |
| OpenAI Text Davinci 002 | 2022-03 | 1 | 1.5 |
| OpenAI Text Davinci 003 | 2022-06 | 1.5 | 2 |
| OpenAI Text Davinci 004 | 2022-09 | 2 | 2.5 |
| OpenAI Text Davinci 005 | 2022-12 | 2.5 | 3 |
| OpenAI Text Davinci 006 | 2023-03 | 3 | 3.5 |
| OpenAI Text Davinci 007 | 2023-06 | 3.5 | 4 |
| OpenAI Text Davinci 008 | 2023-09 | 4 | 4.5 |
| OpenAI Text Davinci 009 | 2023-12 | 4.5 | 5 |
| OpenAI GPT-4 | 2023-03 | 4 | 5 |
| OpenAI GPT-3.5 Turbo | 2023-06 | 4 | 5 |
| OpenAI GPT-4 | 2023-09 | 4 | 5 |
| OpenAI GPT-4 | 2023-12 | 4 | 5 |
| Anthropic Claude | 2023-03 | 3 | 4 |
| Anthropic Claude-2 | 2023-06 | 3 | 4 |
| Anthropic Claude-3 | 2023-09 | 3 | 4 |
| Meta CodeLlama | 2023-03 | 3 | 4 |
| Meta Llama 2 | 2023-06 | 3 | 4 |
| Meta Llama 3 | 2023-09 | 3 | 4 |
| Meta Llama 4 | 2023-12 | 3 | 4 |
| LMSys Vicuna | 2023-03 | 3 | 4 |
| Hugging Face Guanaco | 2023-06 | 3 | 4 |
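
As a quick consistency check, the values listed above can be compared against the axis ranges stated under Components/Axes. The sketch below is only illustrative: the tuple layout and the helper name `out_of_range` are assumptions made for this example, and the rows are transcribed from this section rather than read from the image.

```python
# (model, release "YYYY-MM", benchmark score, parameters in billions),
# transcribed from the values listed in this section.
ROWS = [
    ("OpenAI Text Davinci 002", "2022-03", 1.0, 1.5),
    ("OpenAI Text Davinci 003", "2022-06", 1.5, 2.0),
    ("OpenAI Text Davinci 004", "2022-09", 2.0, 2.5),
    ("OpenAI Text Davinci 005", "2022-12", 2.5, 3.0),
    ("OpenAI Text Davinci 006", "2023-03", 3.0, 3.5),
    ("OpenAI Text Davinci 007", "2023-06", 3.5, 4.0),
    ("OpenAI Text Davinci 008", "2023-09", 4.0, 4.5),
    ("OpenAI Text Davinci 009", "2023-12", 4.5, 5.0),
    ("OpenAI GPT-4", "2023-03", 4.0, 5.0),
    ("OpenAI GPT-3.5 Turbo", "2023-06", 4.0, 5.0),
    ("OpenAI GPT-4", "2023-09", 4.0, 5.0),
    ("OpenAI GPT-4", "2023-12", 4.0, 5.0),
    ("Anthropic Claude", "2023-03", 3.0, 4.0),
    ("Anthropic Claude-2", "2023-06", 3.0, 4.0),
    ("Anthropic Claude-3", "2023-09", 3.0, 4.0),
    ("Meta CodeLlama", "2023-03", 3.0, 4.0),
    ("Meta Llama 2", "2023-06", 3.0, 4.0),
    ("Meta Llama 3", "2023-09", 3.0, 4.0),
    ("Meta Llama 4", "2023-12", 3.0, 4.0),
    ("LMSys Vicuna", "2023-03", 3.0, 4.0),
    ("Hugging Face Guanaco", "2023-06", 3.0, 4.0),
]

X_RANGE = ("2022-03", "2023-12")  # stated x-axis range (release time)
Y_RANGE = (1.0, 4.0)              # stated y-axis range (benchmark score)


def out_of_range(rows):
    """Return the names of models that fall outside the stated axis ranges."""
    flagged = []
    for name, released, score, _params in rows:
        # "YYYY-MM" strings sort chronologically, so string comparison is enough.
        in_x = X_RANGE[0] <= released <= X_RANGE[1]
        in_y = Y_RANGE[0] <= score <= Y_RANGE[1]
        if not (in_x and in_y):
            flagged.append(name)
    return flagged


print(out_of_range(ROWS))
```

With the values as listed, the only entry flagged is OpenAI Text Davinci 009, whose score of 4.5 exceeds the stated y-axis maximum of 4.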

### Key Observations

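- **Performance Trend**: Within the OpenAI Text Davinci series, benchmark scores rise with each release, from 1 (2022-03) to 4.5 (2023-12). The other model families keep the same score across their releases.

- **Parameter Count**: Models with higher parameter counts (yellow) generally have higher benchmark scores: the 5-billion-parameter models score 4 or higher, while the 4-billion-parameter models score 3 to 3.5.

- **Release Time**: Models with higher parameter counts tend to be released later, which points to a trend of increasing model size and performance over time.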
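
The relationship between parameter count and benchmark score can be quantified with a Pearson correlation coefficient. The following is a minimal sketch under the assumption that the values reported in this section are an accurate transcription of the chart; the grouping into `DAVINCI`, `GPT`, and `OTHERS` and the helper `pearson` are names introduced only for this example.

```python
from math import sqrt

# (parameters in billions, benchmark score), transcribed from this section.
DAVINCI = [(1.5, 1.0), (2.0, 1.5), (2.5, 2.0), (3.0, 2.5),
           (3.5, 3.0), (4.0, 3.5), (4.5, 4.0), (5.0, 4.5)]
GPT = [(5.0, 4.0)] * 4     # three GPT-4 entries and GPT-3.5 Turbo
OTHERS = [(4.0, 3.0)] * 9  # Claude, Llama, CodeLlama, Vicuna, and Guanaco entries
PAIRS = DAVINCI + GPT + OTHERS


def pearson(pairs):
    """Pearson correlation coefficient of a list of (x, y) pairs."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    syy = sum((y - mean_y) ** 2 for _, y in pairs)
    return sxy / sqrt(sxx * syy)


r = pearson(PAIRS)
print(f"Pearson r = {r:.2f}")
```

A coefficient close to 1 would support the observation that larger models score higher, but it describes association only and says nothing about cause.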

### Interpretation

The heatmap suggests that benchmark performance tends to improve as a model's parameter count increases. Parameter count alone does not determine the score, however: OpenAI GPT-4 and OpenAI GPT-3.5 Turbo share the highest parameter count (5 billion) and the same benchmark score of 4, while OpenAI Text Davinci 009 reaches 4.5 with the same count. Larger models also tend to appear later, which could be due to the increased computational resources required to train them. The models with the highest parameter counts are the top performers, which is consistent with larger models handling more complex tasks and giving more accurate results, although the chart shows a correlation rather than a causal link.