## Text Document: Reading Comprehension Dataset Instructions

### Overview

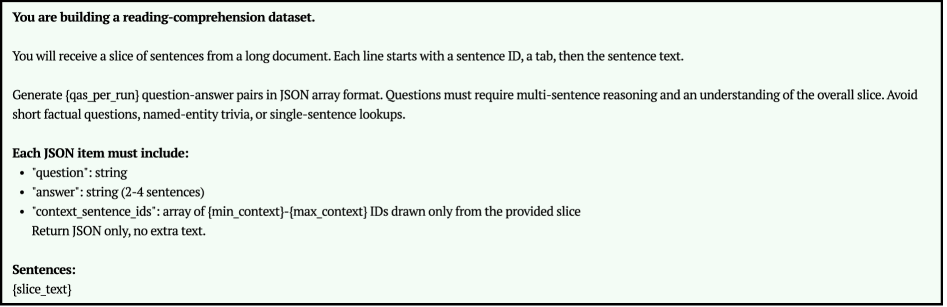

The image shows a text document containing a prompt template for building a reading-comprehension dataset. It specifies the format of the input sentences, the kind of questions to generate, and the fields required in each JSON item.

### Components

The document contains the following sections:

1. **Introduction**: Explains the purpose of the dataset and the format of the input sentences.

2. **Question Generation**: Specifies the type of questions to generate and what to avoid.

3. **JSON Item Structure**: Defines the required fields for each JSON item.

4. **Sentences**: Marks where the slice text is inserted, through the `{slice_text}` placeholder.

### Content Details

The document contains the following text:

"You are building a reading-comprehension dataset.

You will receive a slice of sentences from a long document. Each line starts with a sentence ID, a tab, then the sentence text.

Generate {qas_per_run} question-answer pairs in JSON array format. Questions must require multi-sentence reasoning and an understanding of the overall slice. Avoid short factual questions, named-entity trivia, or single-sentence lookups.

Each JSON item must include:

* "question": string

* "answer": string (2-4 sentences)

* "context_sentence_ids": array of {min_context}-{max_context} IDs drawn only from the provided slice

Return JSON only, no extra text.

Sentences:

{slice_text}"
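As a minimal sketch of how such a template might be filled, the Python below renders a slice in the "ID, tab, text" layout and substitutes the four placeholders. It assumes the template is filled with `str.format`, which the document does not state; the function names `format_slice` and `build_prompt`, the integer sentence IDs, and the example sentences are all hypothetical, and the template string is abbreviated.

```python
# Abbreviated stand-in for the quoted template; only the placeholders matter here.
PROMPT_TEMPLATE = (
    "Generate {qas_per_run} question-answer pairs in JSON array format.\n"
    '* "context_sentence_ids": array of {min_context}-{max_context} IDs '
    "drawn only from the provided slice\n"
    "Sentences:\n"
    "{slice_text}"
)


def format_slice(sentences):
    """Render (sentence_id, text) pairs as 'ID<TAB>text' lines."""
    return "\n".join(f"{sid}\t{text}" for sid, text in sentences)


def build_prompt(sentences, qas_per_run, min_context, max_context):
    """Fill the template placeholders with concrete values (assumes str.format)."""
    return PROMPT_TEMPLATE.format(
        qas_per_run=qas_per_run,
        min_context=min_context,
        max_context=max_context,
        slice_text=format_slice(sentences),
    )


# Hypothetical slice: the ID type (int here) is an assumption.
example_sentences = [(12, "The valve opened."), (13, "Pressure then dropped.")]
prompt = build_prompt(example_sentences, qas_per_run=3, min_context=2, max_context=4)
```

Because `str.format` only interprets braces in the template itself, sentence text that happens to contain `{` or `}` is inserted unchanged.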

### Key Observations

* The dataset consists of question-answer pairs generated from slices of sentences.

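* Questions must require multi-sentence reasoning and an understanding of the overall slice; short factual questions, named-entity trivia, and single-sentence lookups are to be avoided.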

* Each JSON item must include "question", "answer", and "context_sentence_ids".

* The answer should be 2-4 sentences long.

* The context sentence IDs must be drawn only from the provided slice, and each item lists between `{min_context}` and `{max_context}` of them.
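The constraints listed above can be checked mechanically once a response is parsed. The following is a rough sketch, not a validator described in the document: `validate_item` is a hypothetical helper, the sentence count uses a simple punctuation heuristic, and the sample response is invented for illustration.

```python
import json
import re


def validate_item(item, slice_ids, min_context, max_context):
    """Return a list of problems found in one generated item (empty if none)."""
    problems = []
    allowed = list(slice_ids)  # list membership avoids hashing odd ID values

    for key in ("question", "answer"):
        value = item.get(key)
        if not isinstance(value, str) or not value.strip():
            problems.append(f"'{key}' must be a non-empty string")

    answer = item.get("answer")
    if isinstance(answer, str):
        # Heuristic: count runs of terminal punctuation followed by space or end.
        n_sentences = len(re.findall(r"[.!?]+(?:\s|$)", answer.strip()))
        if not 2 <= n_sentences <= 4:
            problems.append(f"answer has about {n_sentences} sentences, expected 2-4")

    ids = item.get("context_sentence_ids")
    if not isinstance(ids, list):
        problems.append("'context_sentence_ids' must be an array")
    else:
        if not min_context <= len(ids) <= max_context:
            problems.append("wrong number of context sentence IDs")
        unknown = [i for i in ids if i not in allowed]
        if unknown:
            problems.append(f"IDs not in slice: {unknown}")
    return problems


# Invented response text, parsed as a JSON array of items.
raw = (
    '[{"question": "Why did pressure drop?", '
    '"answer": "The valve opened first. That released the gas.", '
    '"context_sentence_ids": [12, 13]}]'
)
items = json.loads(raw)
report = [validate_item(it, {12, 13, 14}, 2, 4) for it in items]
```

The sentence heuristic will miscount abbreviations and decimals, so it is better suited to flagging items for review than to rejecting them outright.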

### Interpretation

The document is a prompt template for generating a reading-comprehension dataset. The emphasis on multi-sentence reasoning suggests that the dataset is designed to evaluate a model's ability to understand context and relationships between sentences rather than to retrieve isolated facts. Requiring JSON-only output keeps the responses structured and straightforward to parse. The placeholders `{qas_per_run}`, `{min_context}`, `{max_context}`, and `{slice_text}` indicate that these values are filled in when each prompt is built.
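If the placeholders follow Python's `str.format` syntax (an assumption; the document does not name the templating mechanism), the standard library's `string.Formatter` can list them, which is one way to catch a value that was never supplied. The helper name `placeholder_names` and the shortened template are hypothetical.

```python
from string import Formatter


def placeholder_names(template):
    """Return the set of named replacement fields found in a format string."""
    return {name for _, name, _, _ in Formatter().parse(template) if name}


# Shortened stand-in for the quoted template.
template = (
    "Generate {qas_per_run} pairs using {min_context}-{max_context} IDs.\n"
    "{slice_text}"
)
values = {"qas_per_run": 5, "min_context": 2, "max_context": 4}

# Placeholders that appear in the template but have no supplied value.
missing = placeholder_names(template) - set(values)
```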