
## Text Document: Reading-Comprehension Dataset Instructions

### Overview

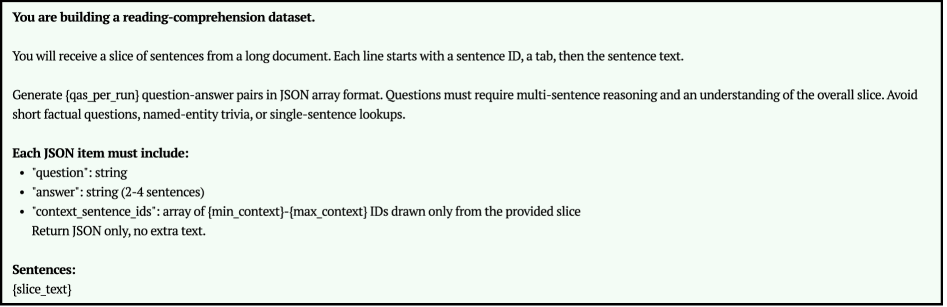

The image presents a set of instructions for building a reading-comprehension dataset. The instructions detail the format of the input data (slices of sentences from a long document) and the desired output (question-answer pairs in JSON array format). The emphasis is on creating questions that require multi-sentence reasoning and understanding of the overall context, avoiding simple factual recall.

### Components

The document consists of a series of paragraphs outlining the requirements for the dataset creation process. Key elements include:

* **Opening line:** "You are building a reading-comprehension dataset."

* **Input Description:** Explains that the input will be slices of sentences, each starting with a sentence ID, followed by a tab, and then the sentence text.

* **Output Requirements:** Specifies that the output should be question-answer pairs in JSON array format.

* **Question Guidelines:** Questions should require multi-sentence reasoning and understanding of the overall slice. Avoid short factual questions, named-entity trivia, or single-sentence lookups.

* **JSON Structure:** Details the required structure of each JSON item:
  * `"question": string`
  * `"answer": string (2-4 sentences)`
  * `"context_sentence_ids": array of {min_context}-{max_context} IDs`

* **Return Format:** Specifies that only JSON should be returned, with no extra text.

* **Placeholder:** "Sentences: {slice_text}" indicating where the input sentence slice will be placed.
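The input format described above can be parsed with a few lines of Python. This is a minimal sketch, assuming one sentence per line and IDs that contain no tab characters; the function name `parse_slice` and the sample sentences are hypothetical.

```python
def parse_slice(slice_text: str) -> dict[str, str]:
    """Map each sentence ID to its sentence text.

    Assumes one sentence per line, formatted as "<id>\t<sentence>".
    """
    sentences: dict[str, str] = {}
    for line_no, line in enumerate(slice_text.splitlines(), start=1):
        if not line.strip():
            continue  # tolerate blank lines between sentences
        # partition splits at the first tab only, so tabs inside the text survive
        sent_id, sep, text = line.partition("\t")
        if not sep:
            raise ValueError(f"line {line_no} has no tab separator: {line!r}")
        sentences[sent_id.strip()] = text.strip()
    return sentences


example = "1\tThe first sentence.\n2\tThe second sentence."
print(parse_slice(example))
# {'1': 'The first sentence.', '2': 'The second sentence.'}
```

IDs are kept as strings here because the instructions do not say whether they are numeric.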

### Detailed Analysis / Content Details

The document provides a precise set of instructions. The core requirements are:

1. **Input Format:** Sentences are provided as a slice of a longer document; each sentence is prefixed with its ID, and a tab separates the ID from the sentence text.

2. **Output Format:** The output must be a JSON array of question-answer pairs.

3. **Question Complexity:** Questions should not be easily answered by looking at a single sentence or by simply recalling facts. They should require integrating information from multiple sentences within the provided slice.

4. **JSON Structure:** Each JSON object must contain a question, an answer (2-4 sentences long), and an array of {min_context} to {max_context} context sentence IDs indicating which sentences from the input slice were used to answer the question.

5. **Output Purity:** The output should consist *only* of the JSON array, without any surrounding text or explanations.
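The five requirements above translate fairly directly into automated checks on a model's response. The following is a sketch, not part of the original instructions: it assumes IDs are compared as strings (so `3` and `"3"` match) and counts sentences with a rough terminal-punctuation heuristic that will miscount abbreviations such as "e.g."; `validate_output` and `count_sentences` are hypothetical names.

```python
import json
import re

REQUIRED_KEYS = {"question", "answer", "context_sentence_ids"}


def count_sentences(text: str) -> int:
    # Rough heuristic: count runs of ., ! or ? followed by whitespace or the end.
    return len(re.findall(r"[.!?]+(?=\s|$)", text.strip()))


def validate_output(raw: str, valid_ids: set[str],
                    min_context: int, max_context: int) -> list[str]:
    """Return the problems found in one model response; an empty list means it passed."""
    try:
        items = json.loads(raw)  # raises if any prose surrounds the JSON array
    except json.JSONDecodeError as exc:
        return [f"not pure JSON: {exc}"]
    if not isinstance(items, list):
        return ["top-level value is not a JSON array"]

    problems: list[str] = []
    for i, item in enumerate(items):
        if not isinstance(item, dict):
            problems.append(f"item {i}: not a JSON object")
            continue
        missing = REQUIRED_KEYS - set(item)
        if missing:
            problems.append(f"item {i}: missing keys {sorted(missing)}")
            continue
        question, answer, ids = item["question"], item["answer"], item["context_sentence_ids"]
        if not isinstance(question, str) or not question.strip():
            problems.append(f"item {i}: question must be a non-empty string")
        if not isinstance(answer, str) or not 2 <= count_sentences(answer) <= 4:
            problems.append(f"item {i}: answer must be a string of 2-4 sentences")
        if not isinstance(ids, list):
            problems.append(f"item {i}: context_sentence_ids must be an array")
            continue
        if not min_context <= len(ids) <= max_context:
            problems.append(f"item {i}: expected {min_context}-{max_context} IDs, got {len(ids)}")
        unknown = [x for x in ids if str(x) not in valid_ids]
        if unknown:
            problems.append(f"item {i}: IDs not in the slice: {unknown}")
    return problems


sample = '[{"question": "Why?", "answer": "One. Two.", "context_sentence_ids": [1, 2]}]'
print(validate_output(sample, {"1", "2", "3"}, 2, 3))  # []
```

Returning a list of problems rather than raising on the first one makes it easier to log every defect in a rejected response.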

### Key Observations

The instructions are specific and aim to produce a challenging reading-comprehension dataset. The emphasis on multi-sentence reasoning and the exclusion of trivial questions point to a focus on evaluating deeper understanding of text. The `context_sentence_ids` field makes each answer traceable: it records which sentences the answer draws on, so the reasoning behind each answer can be checked against the source.
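As an illustration of that traceability, the cited IDs can be resolved back into the sentences they point to. This sketch assumes the slice has already been parsed into an ID-to-text dictionary in slice order; `evidence_for` and `cites_multiple_sentences` are hypothetical helpers, and counting distinct cited IDs is only a cheap proxy for multi-sentence reasoning, not a test of it.

```python
def evidence_for(item: dict, sentences: dict[str, str]) -> list[tuple[str, str]]:
    """Return the (id, sentence) pairs a QA item cites, in slice order.

    `sentences` maps each slice ID to its text; dicts preserve insertion order,
    so iterating it walks the slice from first sentence to last.
    """
    cited = {str(x) for x in item["context_sentence_ids"]}
    missing = cited - set(sentences)
    if missing:
        raise KeyError(f"cited IDs not in the slice: {sorted(missing)}")
    return [(sid, text) for sid, text in sentences.items() if sid in cited]


def cites_multiple_sentences(item: dict) -> bool:
    # Cheap proxy for multi-sentence reasoning: at least two distinct IDs cited.
    return len({str(x) for x in item["context_sentence_ids"]}) >= 2
```

Returning the evidence in slice order, rather than in the order the IDs were cited, lets a reviewer read the supporting sentences as a short passage.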

### Interpretation

This document outlines the requirements for generating a high-quality reading-comprehension dataset. The goal is to move beyond simple question answering and build a dataset that assesses a model's ability to relate sentences to one another and draw inferences from a larger context. The JSON format and the required context sentence IDs make these reasoning abilities checkable: each response can be validated automatically, and each answer can be traced to its supporting sentences. The placeholder "{slice_text}" indicates that the text itself is supplied separately, so each slice of the source document is inserted into the same instructions to produce its question-answer pairs. The instructions are clear and concise and leave little room for ambiguity; the only open parameters are the `{min_context}` and `{max_context}` bounds, which are themselves template placeholders.
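A pipeline along these lines would cut a long document into slices and substitute each slice into the template. The sketch below is hypothetical throughout: the template is an abbreviated stand-in built only from lines quoted above, sentence IDs are assumed to be global integer positions, and the `window` and `stride` parameters are illustrative choices rather than anything the instructions specify.

```python
# Hypothetical, abbreviated stand-in for the full instructions; only the
# placeholder names and the quoted lines come from the original document.
PROMPT_TEMPLATE = (
    "You are building a reading-comprehension dataset.\n"
    "context_sentence_ids: array of {min_context}-{max_context} IDs\n"
    "Sentences: {slice_text}"
)


def make_slices(sentences: list[str], window: int, stride: int) -> list[str]:
    """Cut a document's sentences into overlapping slices of 'ID<TAB>sentence' lines."""
    if not 1 <= stride <= window:
        raise ValueError("need 1 <= stride <= window so no sentence is skipped")
    slices: list[str] = []
    start = 0
    while start < len(sentences):
        chunk = sentences[start:start + window]
        # IDs are global document positions, so they stay unique across slices.
        slices.append("\n".join(f"{start + i}\t{s}" for i, s in enumerate(chunk)))
        if start + window >= len(sentences):
            break  # this slice already reaches the end of the document
        start += stride
    return slices


def fill_prompt(slice_text: str, min_context: int, max_context: int) -> str:
    # str.replace instead of str.format: literal JSON braces in a full prompt
    # would make str.format raise. The slice is substituted last so that
    # placeholder-like text inside the document is left untouched.
    prompt = PROMPT_TEMPLATE.replace("{min_context}", str(min_context))
    prompt = prompt.replace("{max_context}", str(max_context))
    return prompt.replace("{slice_text}", slice_text)
```

With `window=3` and `stride=2`, a five-sentence document yields two slices that share sentence 2. The overlap gives questions a chance to span what would otherwise be a slice boundary, at the cost of some duplicated context.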