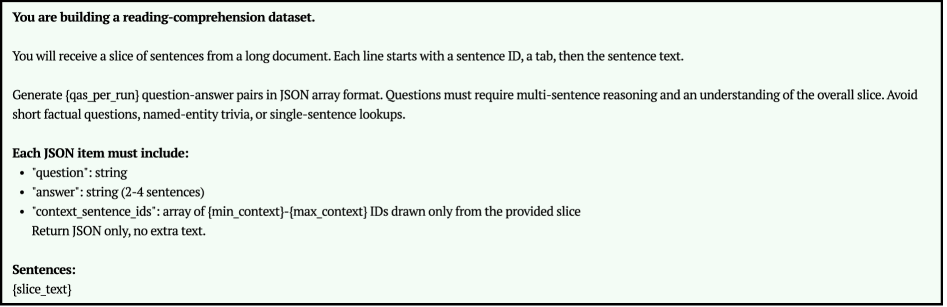

## Screenshot: Reading-Comprehension Dataset Generation Instructions

### Overview

The image is a screenshot of a text-based instruction set for building a reading-comprehension dataset. The content is presented as a single block of text within a light gray (#f0f0f0) bordered box against a white background. The text provides a precise, technical specification for a data generation task.

### Components/Axes

This is not a chart or diagram with axes. The components are purely textual instructions structured as follows:

- **Title/Instruction Header**: A bolded statement of the primary task.

- **Process Description**: A paragraph explaining the input format and the core generation task.

- **Output Specification**: A bulleted list defining the required JSON structure for each output item.

- **Input Placeholder**: A label indicating where the source text data will be inserted.

### Detailed Analysis / Content Details

**Full Text Transcription:**

```

You are building a reading-comprehension dataset.

You will receive a slice of sentences from a long document. Each line starts with a sentence ID, a tab, then the sentence text.

Generate {qas_per_run} question-answer pairs in JSON array format. Questions must require multi-sentence reasoning and understanding of the overall slice. Avoid short factual questions, named-entity trivia, or single-sentence lookups.

Each JSON item must include:

• "question": string

• "answer": string (2-4 sentences)

• "context_sentence_ids": array of {min_context}-{max_context} IDs drawn only from the provided slice

Return JSON only, no extra text.

Sentences:

{slice_text}

```
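The `{...}` placeholders suggest that the template is filled programmatically. The Python sketch below assumes it is used with `str.format`, which the screenshot does not confirm. The names `PROMPT_TEMPLATE`, `format_slice`, and `build_prompt` are hypothetical, and the template's exact whitespace is reconstructed from the transcription.

```python
# Hypothetical reconstruction of the prompt from the transcription; exact whitespace is assumed.
PROMPT_TEMPLATE = """You are building a reading-comprehension dataset.

You will receive a slice of sentences from a long document. Each line starts with a sentence ID, a tab, then the sentence text.

Generate {qas_per_run} question-answer pairs in JSON array format. Questions must require multi-sentence reasoning and understanding of the overall slice. Avoid short factual questions, named-entity trivia, or single-sentence lookups.

Each JSON item must include:
• "question": string
• "answer": string (2-4 sentences)
• "context_sentence_ids": array of {min_context}-{max_context} IDs drawn only from the provided slice

Return JSON only, no extra text.

Sentences:
{slice_text}"""


def format_slice(sentences: list[tuple[int, str]]) -> str:
    """Render (sentence_id, text) pairs as 'ID<TAB>text' lines, one per sentence."""
    return "\n".join(f"{sid}\t{text}" for sid, text in sentences)


def build_prompt(sentences: list[tuple[int, str]], qas_per_run: int,
                 min_context: int, max_context: int) -> str:
    # str.format is safe here: the template has no literal braces besides the
    # four placeholders, and braces inside substituted values are not re-parsed.
    return PROMPT_TEMPLATE.format(
        qas_per_run=qas_per_run,
        min_context=min_context,
        max_context=max_context,
        slice_text=format_slice(sentences),
    )


if __name__ == "__main__":
    demo = [(12, "The dam was completed in 1936."), (13, "It reshaped irrigation.")]
    print(build_prompt(demo, qas_per_run=3, min_context=2, max_context=4))
```

Keeping the slice formatting in its own function makes the `ID<TAB>text` convention explicit and easy to reuse when slices are produced elsewhere in a pipeline.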

**Key Elements and Placeholders:**

1. **Task Definition**: The prompt's recipient (a model or a human annotator) is cast as the builder of a reading-comprehension dataset.

2. **Input Format**: Data will be provided as a "slice" of sentences. Each sentence line has the format: `[sentence ID]\t[sentence text]`.

3. **Generation Parameter**: `{qas_per_run}` is a variable placeholder indicating the number of question-answer pairs to generate per execution.

4. **Question Quality Constraint**: Questions must require **multi-sentence reasoning** and an understanding of the provided slice as a whole. The prompt explicitly says to avoid:

- Short factual questions.

- Named-entity trivia.

- Single-sentence lookups.

5. **Output Schema (JSON Array)**: Each object in the array must contain three fields:

- `"question"`: A string.

- `"answer"`: A string answer spanning 2 to 4 sentences.

- `"context_sentence_ids"`: An array whose length must fall between `{min_context}` and `{max_context}`, two further placeholders that bound how many sentence IDs each item cites. Every ID must be drawn **exclusively** from the IDs in the provided input slice.

6. **Output Constraint**: The final output must be **only the JSON array**, with no additional explanatory text.

7. **Data Placeholder**: `Sentences:` followed by `{slice_text}` marks where the actual sentence data (the "slice") will be inserted for processing.
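The output schema is concrete enough to check mechanically. The sketch below is a hypothetical validator and is not part of the original specification. It assumes that sentence IDs are integers, and it approximates the 2-4 sentence rule with a crude punctuation count that will miscount abbreviations such as "e.g.". The function names are illustrative.

```python
import json
import re


def count_sentences(text: str) -> int:
    # Crude heuristic (assumption): count runs of . ! ? followed by whitespace or end of text.
    return len(re.findall(r"[.!?]+(?=\s|$)", text.strip()))


def validate_output(raw: str, slice_ids: set[int], qas_per_run: int,
                    min_context: int, max_context: int) -> list[str]:
    """Return a list of schema violations; an empty list means the output passed."""
    try:
        items = json.loads(raw)  # "JSON only": any surrounding prose makes parsing fail
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc}"]
    if not isinstance(items, list):
        return ["top-level value is not a JSON array"]

    errors = []
    if len(items) != qas_per_run:
        errors.append(f"expected {qas_per_run} items, got {len(items)}")
    for i, item in enumerate(items):
        if not isinstance(item, dict):
            errors.append(f"item {i}: not an object")
            continue
        q = item.get("question")
        a = item.get("answer")
        ids = item.get("context_sentence_ids")
        if not isinstance(q, str) or not q.strip():
            errors.append(f"item {i}: 'question' must be a non-empty string")
        if not isinstance(a, str) or not 2 <= count_sentences(a) <= 4:
            errors.append(f"item {i}: 'answer' must be a string of 2-4 sentences")
        if not isinstance(ids, list):
            errors.append(f"item {i}: 'context_sentence_ids' must be an array")
            continue
        if not min_context <= len(ids) <= max_context:
            errors.append(f"item {i}: expected {min_context}-{max_context} IDs, got {len(ids)}")
        # Grounding check: every cited ID must be an integer present in the slice.
        outside = [x for x in ids if not isinstance(x, int) or x not in slice_ids]
        if outside:
            errors.append(f"item {i}: IDs not in slice: {outside}")
    return errors
```

Returning a list of errors rather than raising on the first problem makes it easier to log every violation in a batch and decide whether to retry or discard a generation.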

### Key Observations

- **Precision of Instruction**: The text is highly specific about the input format, output structure, and, crucially, the *cognitive level* of the required questions (multi-sentence reasoning).

- **Use of Placeholders**: The instructions use template variables (`{qas_per_run}`, `{min_context}`, `{max_context}`, `{slice_text}`), indicating that this is likely a prompt for an automated system or a specification for a human annotator following a strict protocol.

- **Negative Constraints**: The instructions explicitly state what *not* to produce (short factual questions, named-entity trivia, single-sentence lookups), which complements the positive requirements in steering the dataset toward harder questions.

- **Visual Layout**: The text is left-aligned within a defined container. The bullet points use standard round bullets (•). The font appears to be a common sans-serif typeface (e.g., Arial, Helvetica).

### Interpretation

This image outlines the foundational rules for creating a high-quality, reasoning-focused reading comprehension dataset. The design reveals several underlying principles:

1. **Purpose-Driven Design**: The dataset is not for testing basic fact retrieval but for evaluating a model's ability to synthesize information across multiple sentences. This suggests it's intended for advanced NLP model evaluation or training, targeting skills like inference, causality, and summarization.

2. **Controlled Generation**: The placeholders (`{qas_per_run}`, `{min_context}`, `{max_context}`, `{slice_text}`) and the strict JSON schema indicate that this prompt is one component of a larger, likely automated, data pipeline built for batch processing with consistent output.

3. **Data Integrity**: Requiring that `context_sentence_ids` be drawn *only* from the provided slice ties each QA pair's cited evidence to the given context. This makes the citations checkable against the slice and discourages answers that rest on sentences outside it, although the ID constraint alone cannot guarantee that an answer uses no external knowledge. Such grounding is important for a valid, reproducible benchmark.

4. **Implicit Workflow**: The process implies a two-stage workflow. First, a "slice" of text is extracted from a larger document and formatted with sentence IDs. Second, that slice is processed (by an AI or a human) according to these rules to generate the QA pairs. The final output is a clean, structured JSON array ready for integration into a dataset.
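The first stage of that workflow, cutting a long document into slices, might look like the following hypothetical sketch. Several choices here are assumptions that the screenshot does not state. The document is assumed to be already split into sentences, the slices are fixed-size overlapping windows, and each sentence is numbered by its position in the whole document so that IDs stay unique across slices. The name `make_slices` and its parameters are illustrative.

```python
def make_slices(sentences: list[str], slice_size: int,
                stride: int) -> list[list[tuple[int, str]]]:
    """Split a document's sentences into fixed-size, possibly overlapping windows.

    Each window is a list of (sentence_id, text) pairs. IDs are 0-based
    positions in the full document (an assumed convention), so the same
    sentence keeps the same ID in every window that contains it.
    """
    if slice_size <= 0 or stride <= 0:
        raise ValueError("slice_size and stride must be positive")
    numbered = list(enumerate(sentences))
    slices = []
    for start in range(0, len(numbered), stride):
        slices.append(numbered[start:start + slice_size])
        # Stop once a window reaches the end, so no trailing fragment repeats it.
        if start + slice_size >= len(numbered):
            break
    return slices


if __name__ == "__main__":
    doc = ["s0", "s1", "s2", "s3", "s4"]
    for window in make_slices(doc, slice_size=3, stride=2):
        print(window)
```

Each window would then be rendered as `ID<TAB>sentence` lines and substituted for `{slice_text}`. Overlapping windows (stride smaller than slice size) keep sentences near a boundary from appearing only at a slice's edge, at the cost of some repeated context.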

In essence, this is a technical specification for generating a **reasoning-centric** subset of a reading comprehension dataset, emphasizing structured output, contextual grounding, and complex question design.