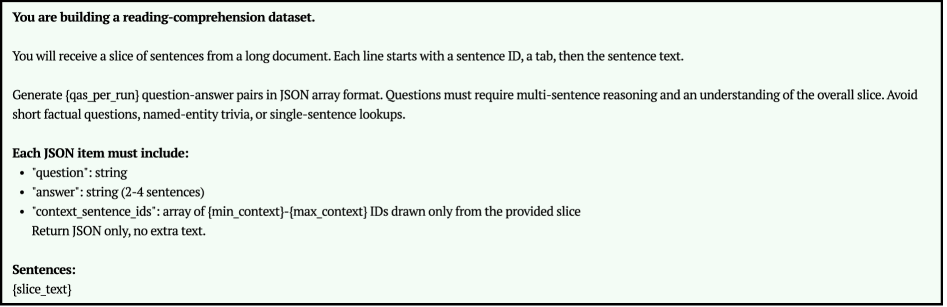

## Screenshot: Technical Instructions for Reading-Comprehension Dataset Creation

### Overview

The image contains a technical specification for generating a reading-comprehension dataset. It outlines requirements for processing a slice of sentences from a document to create question-answer pairs in JSON format. The instructions emphasize multi-sentence reasoning, avoidance of trivial questions, and strict adherence to a defined JSON structure.

### Components/Axes

- **Input Format**:

  - Sentences are provided as a slice from a longer document.

  - Each line starts with a sentence ID, followed by a tab and the sentence text. The `{slice_text}` placeholder marks where these lines are inserted.

- **Output Requirements**:

  - Generate `{qas_per_run}` question-answer pairs in JSON array format.

  - Questions must require multi-sentence reasoning and understanding of the overall slice.

  - Avoid short factual questions, named-entity trivia, or single-sentence lookups.

- **JSON Structure**:

  - Each JSON item must include:

    - `"question"`: string

    - `"answer"`: string (2-4 sentences)

    - `"context_sentence_ids"`: array of `{min_context}`-`{max_context}` IDs (drawn only from the provided slice)
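
The input format above can be sketched as a small parser. This is a minimal illustration, not part of the screenshot: the function name `parse_slice`, the sample sentences, and the assumption that sentence IDs are integers are all hypothetical.

```python
def parse_slice(slice_text: str) -> dict[int, str]:
    """Map sentence ID -> sentence text for a tab-separated slice.

    Assumption: IDs are integers; the screenshot does not state their type.
    """
    sentences: dict[int, str] = {}
    for line in slice_text.splitlines():
        if not line.strip():
            continue  # ignore blank lines
        sentence_id, sep, text = line.partition("\t")
        if not sep:
            raise ValueError(f"expected '<id><TAB><sentence>', got: {line!r}")
        sentences[int(sentence_id)] = text.strip()
    return sentences


# Hypothetical two-sentence slice, used only for illustration.
example_slice = (
    "12\tThe river flooded in spring.\n"
    "13\tFarmers moved their herds uphill.\n"
)
parsed = parse_slice(example_slice)
```

Keeping the result keyed by ID makes it easy to check later that every entry in `"context_sentence_ids"` refers to a sentence that was actually in the slice.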

### Detailed Analysis

1. **Task Objective**:

   - The primary goal is to build a dataset that tests comprehension across multiple sentences, requiring models to synthesize information rather than recall isolated facts.

2. **Input Constraints**:

   - Sentences are pre-sliced from a larger document, with IDs and text separated by tabs.

   - The `{slice_text}` placeholder indicates where the actual sentence lines are inserted.

3. **JSON Output Rules**:

   - **Question**: A string that cannot be answered from a single sentence.

   - **Answer**: A string of 2-4 sentences, leaving room to combine information from several sentences.

   - **Context IDs**: An array of `{min_context}`-`{max_context}` sentence IDs (e.g., `[1, 3, 5]`), drawn only from the provided slice, that identify the sentences supporting the question-answer pair.

4. **Excluded Question Types**:

   - Short factual questions (e.g., "What is the capital of France?").

   - Named-entity trivia (e.g., "Who wrote *Hamlet*?").

   - Single-sentence lookups (e.g., a question whose answer is stated outright in one sentence of the slice).
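
The output rules above lend themselves to an automated check. The following is a rough sketch under stated assumptions: `validate_item` and `count_sentences` are hypothetical helpers, sentence IDs are assumed to be integers, and sentence counting uses a simple punctuation heuristic that will miscount abbreviations such as "e.g.".

```python
import json
import re


def count_sentences(text: str) -> int:
    # Heuristic: split after '.', '!' or '?' when followed by whitespace.
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return len([p for p in parts if p])


def validate_item(item: dict, slice_ids: set, min_context: int, max_context: int) -> list:
    """Return a list of rule violations for one question-answer item."""
    problems = []
    question = item.get("question")
    if not isinstance(question, str) or not question.strip():
        problems.append("question must be a non-empty string")
    answer = item.get("answer")
    if not isinstance(answer, str):
        problems.append("answer must be a string")
    elif not 2 <= count_sentences(answer) <= 4:
        problems.append("answer should contain 2-4 sentences")
    ids = item.get("context_sentence_ids")
    if not isinstance(ids, list):
        problems.append("context_sentence_ids must be an array")
    else:
        if not min_context <= len(ids) <= max_context:
            problems.append("wrong number of context sentence IDs")
        if any(not isinstance(i, int) or i not in slice_ids for i in ids):
            problems.append("context sentence ID not in the provided slice")
    return problems


# Hypothetical model output, used only for illustration.
raw = json.dumps([{
    "question": "Why did the farmers relocate their herds?",
    "answer": "The river flooded in spring. That pushed farmers to move herds uphill.",
    "context_sentence_ids": [12, 13],
}])
good_item = json.loads(raw)[0]
bad_item = {"question": "Q?", "answer": "One sentence.", "context_sentence_ids": [99]}

good_problems = validate_item(good_item, {12, 13}, min_context=2, max_context=3)
bad_problems = validate_item(bad_item, {12, 13}, min_context=2, max_context=3)
```

A check like this covers only the structural rules. Whether a question truly requires multi-sentence reasoning still needs human or model-based review.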

### Key Observations

- The instructions prioritize **multi-sentence reasoning** over isolated fact retrieval.

- The JSON structure enforces consistency in data labeling, critical for downstream model training.

- The exclusion of trivial questions ensures the dataset focuses on higher-order comprehension.

### Interpretation

This specification is designed to produce a reading-comprehension dataset that rewards synthesis over recall. By requiring questions that draw on several sentences and by recording the supporting sentence IDs, it pushes models to relate ideas across the slice rather than retrieve surface-level details. The fixed JSON format makes the output machine-parseable and compatible with automated evaluation pipelines. The exclusion of trivial questions suggests an intent to filter out low-complexity data, though judging what counts as trivial may introduce subjectivity in question design. The `{min_context}` and `{max_context}` placeholders make the number of cited sentences configurable, so the same instructions can be adapted to different dataset requirements.
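
To illustrate how the four placeholders might be filled at run time, here is a minimal sketch. The template wording is hypothetical (the exact text of the screenshot is not reproduced here), and `fill_prompt` is an assumed helper name. Plain string replacement is used instead of `str.format` so that literal JSON braces in the template are left alone.

```python
def fill_prompt(template: str, values: dict) -> str:
    """Substitute {name} placeholders without touching other braces."""
    filled = template
    for name, value in values.items():
        filled = filled.replace("{" + name + "}", str(value))
    return filled


# Hypothetical template wording; only the placeholder names come from the source.
TEMPLATE = (
    "Write {qas_per_run} question-answer pairs as a JSON array.\n"
    'Each item: {"question": ..., "answer": ..., "context_sentence_ids": [...]}\n'
    "Cite {min_context}-{max_context} sentence IDs per item.\n"
    "Sentences:\n"
    "{slice_text}"
)

prompt = fill_prompt(
    TEMPLATE,
    {
        "qas_per_run": 3,
        "min_context": 2,
        "max_context": 3,
        # Filled last so sentence text cannot be mistaken for a placeholder.
        "slice_text": "12\tThe river flooded in spring.\n13\tFarmers moved their herds uphill.",
    },
)
```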

*Note: The image does not contain numerical data, charts, or diagrams. All information is textual and structural.*