
## Diagram: GRPO (Group Relative Policy Optimization) Training Loop

### Overview

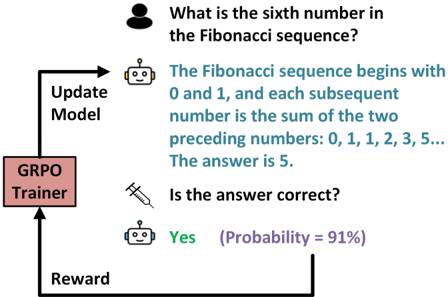

The image is a flowchart of a reinforcement learning feedback loop for training an AI model with GRPO (Group Relative Policy Optimization). It shows a cyclical process: the model's response to a user query is evaluated, and the evaluation result is used to update the model.

### Components/Axes

The diagram is composed of several key components connected by directional arrows, forming a closed loop:

1. **User Icon (Top Center):** A simple black silhouette of a person, representing the user or prompt source.

2. **User Query (Text, Top):** The text "What is the sixth number in the Fibonacci sequence?" is positioned next to the user icon.

3. **Model Response (Text, Center):** A block of text in a teal/blue color, positioned below the user query. It reads: "The Fibonacci sequence begins with 0 and 1, and each subsequent number is the sum of the two preceding numbers: 0, 1, 1, 2, 3, 5... The answer is 5."

4. **Model Icons (Two instances):** Gray robot head icons are placed next to the model's response and the verification step.

5. **Verification Question (Text, Center-Right):** The text "Is the answer correct?" with a pencil icon, positioned below the model's response.

6. **Verification Response (Text, Lower Center):** The text "Yes" in green, followed by "(Probability = 91%)" in purple, positioned below the verification question.

7. **GRPO Trainer (Box, Left):** A pink rectangular box labeled "GRPO Trainer" in black text. This is the hub of the loop: it receives the reward and issues the model update.

8. **Flow Arrows & Labels:**

* An arrow labeled **"Update Model"** points from the "GRPO Trainer" box up towards the model response area.

* An arrow labeled **"Reward"** points from the verification response ("Yes (Probability = 91%)") back to the "GRPO Trainer" box.

### Detailed Analysis

The diagram depicts a single, complete iteration of a training step:

1. **Input:** A user asks a factual question: "What is the sixth number in the Fibonacci sequence?"

2. **Model Output:** The AI model generates a detailed, correct response, explaining the sequence and stating the answer is 5.

3. **Evaluation:** A separate process (or the same model in a different mode) evaluates the correctness of the answer. It concludes "Yes" with a probability of 91%.

4. **Feedback Signal:** This evaluation result ("Yes" with 91% probability) is sent as a **"Reward"** signal to the **GRPO Trainer**.

5. **Model Update:** The GRPO Trainer processes this reward signal and sends an **"Update Model"** command back to the model, presumably to reinforce the behavior that led to the correct, high-confidence answer.
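
The Feedback Signal step can be read as a mapping from the verifier's verdict to a single number. The sketch below is a minimal illustration, assuming the reward is simply the verifier's probability that the answer is correct. The diagram does not specify this mapping, and `Verdict` and `verdict_to_reward` are hypothetical names introduced here.

```python
from dataclasses import dataclass


@dataclass
class Verdict:
    """Verifier output as drawn in the diagram: a label plus a probability."""
    label: str          # "Yes" or "No"
    probability: float  # verifier's confidence in `label`, in [0, 1]


def verdict_to_reward(verdict: Verdict) -> float:
    """Map a verdict to a scalar reward in [0, 1].

    Assumption (not stated in the diagram): the reward is the verifier's
    probability that the answer is correct, so a confident "No" scores near 0.
    """
    if not 0.0 <= verdict.probability <= 1.0:
        raise ValueError("probability must lie in [0, 1]")
    if verdict.label == "Yes":
        return verdict.probability
    if verdict.label == "No":
        return 1.0 - verdict.probability
    raise ValueError(f"unexpected label: {verdict.label!r}")


# The verdict shown in the diagram: "Yes (Probability = 91%)".
reward = verdict_to_reward(Verdict(label="Yes", probability=0.91))
print(reward)  # 0.91
```

Under this assumption a graded reward carries more information than a binary right/wrong flag, which is one plausible reason the diagram displays the probability alongside the "Yes".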

### Key Observations

* **Correctness and Confidence:** The model's answer is factually correct. The evaluation step not only confirms correctness but also provides a confidence score (91%), which lets the reward be a graded value rather than a binary right/wrong flag.

* **Feedback Loop Structure:** The diagram clearly shows a closed-loop system. The model's output directly influences the signal that is used to update it.

* **Component Roles:** The "GRPO Trainer" is isolated as the component that translates the reward into a model update, suggesting it contains the core optimization algorithm.

* **Visual Emphasis:** Color is used functionally: teal for the model's generated text, green for the positive verification, purple for the probability metric, and pink to highlight the central trainer component.

### Interpretation

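This diagram is a conceptual illustration of reinforcement learning applied to fine-tuning large language models, in the same family as **Reinforcement Learning from Human Feedback (RLHF)**. Here the reward comes from an automated correctness check rather than directly from human raters. The diagram demonstrates how a model can be improved by optimizing for the quality and correctness of its own outputs, not only by learning from a fixed dataset.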

* **The Process:** The loop shows the model generating a response, that response being judged (by a verifier, which may be a separate reward model or the same model prompted to check the answer), and the judgment being used to adjust the original model's parameters via the GRPO algorithm. GRPO scores each response relative to a group of responses sampled for the same prompt, rather than relying on a separately learned value function.

* **Why It Matters:** This process is key to aligning AI models with human expectations for accuracy, helpfulness, and safety. The high probability (91%) attached to the "Yes" indicates the system is not just binary (right/wrong) but operates on a spectrum of confidence, allowing for more nuanced learning.

* **Underlying Mechanism:** The "GRPO Trainer" likely implements a policy gradient method. The "Reward" is the numerical signal derived from the evaluation (e.g., a high value for a correct, confident answer). The "Update Model" step adjusts the model's internal parameters (weights) to make similar, high-reward responses more likely in the future.

* **Simplification:** The diagram is a high-level abstraction. In practice, this loop would run on thousands of examples. GRPO in particular samples several responses per prompt and compares their rewards, so the single response and single yes/no check shown here stand in for a whole group.
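
The "group relative" part of GRPO can be sketched in a few lines. The example below is a minimal illustration, not the trainer's actual code. It assumes four responses were sampled for the same prompt. Every reward other than the 0.91 shown in the diagram is a made-up value, and `group_relative_advantages` and `eps` are hypothetical names. Each reward is normalized against the group mean and standard deviation to give an advantage, which a policy gradient update would then use to weight that response.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize rewards within one group of responses to the same prompt.

    advantage_i = (reward_i - group mean) / (group std + eps)
    `eps` guards against division by zero when all rewards are equal.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; a choice made for this sketch
    return [(r - mu) / (sigma + eps) for r in rewards]


# Illustrative rewards for four sampled responses to the Fibonacci question.
# Only 0.91 comes from the diagram; the rest are invented for the example.
rewards = [0.91, 0.15, 0.80, 0.10]
advantages = group_relative_advantages(rewards)

for r, a in zip(rewards, advantages):
    print(f"reward={r:.2f}  advantage={a:+.3f}")
```

Responses with above-average reward receive a positive advantage and are made more likely by the update, while below-average ones are pushed down. If every response in a group gets the same reward, all advantages are zero and that group contributes no learning signal.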