
## Diagram: Multiple-Choice and Open-Style Question Paths

### Overview

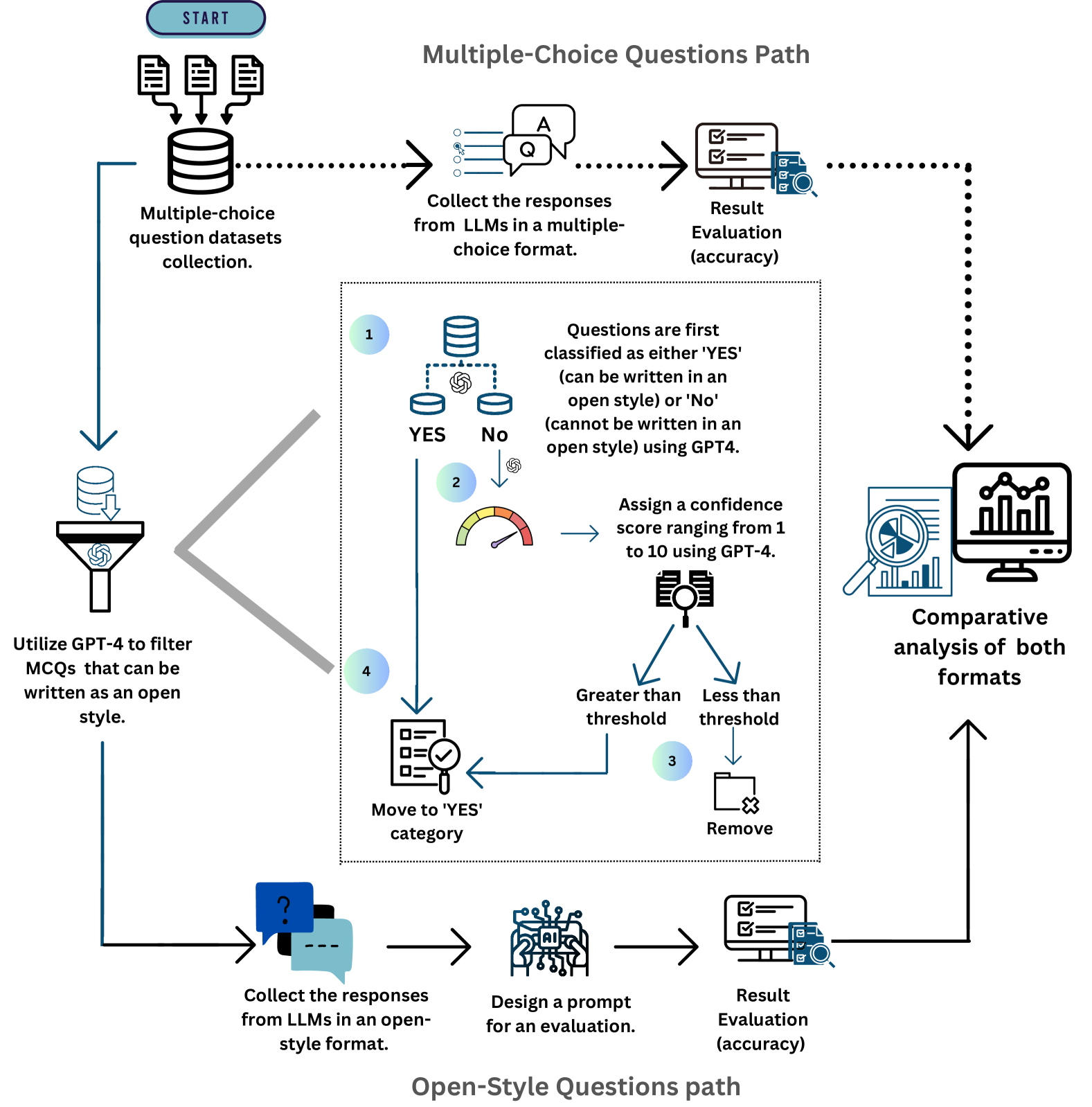

This diagram illustrates the workflow for processing multiple-choice questions (MCQs) and open-style questions using Large Language Models (LLMs) and GPT-4. It depicts two paths, one for MCQs and one for open-style questions, which converge in a comparative analysis. The diagram uses a flowchart style, with icons representing processes and decision points.

### Components/Axes

The diagram is segmented into two main paths: "Multiple-Choice Questions Path" (top) and "Open-Style Questions Path" (bottom). Key components include:

* **Start:** Initial point of the process.

* **Multiple-choice question datasets collection:** Represents the input data.

* **Collect the responses from LLMs in a multiple-choice format:** LLM response collection for MCQs.

* **Result Evaluation (accuracy):** Evaluation of MCQ responses.

* **Utilize GPT-4 to filter MCQs that can be written as an open style:** Filtering process using GPT-4.

* **Questions are first classified as either 'YES' (can be written in an open style) or 'No' (cannot be written in an open style) using GPT4:** Decision point based on GPT-4 classification.

* **Assign a confidence score ranging from 1 to 10 using GPT-4:** Confidence scoring process.

* **Greater than threshold / Less than threshold:** Decision point based on confidence score.

* **Move to 'YES' category / Remove:** Actions based on the threshold comparison.

* **Collect the responses from LLMs in an open-style format:** LLM response collection for open-style questions.

* **Design a prompt for an evaluation:** Prompt design for open-style evaluation.

* **Result Evaluation (accuracy):** Evaluation of open-style responses.

* **Comparative analysis of both formats:** Final analysis step.

### Detailed Analysis or Content Details

The diagram details a two-pronged approach to evaluating LLM question answering.

**Multiple-Choice Questions Path:**

1. The process begins with a "Multiple-choice question datasets collection".

2. Responses are collected from LLMs in a multiple-choice format.

3. These responses undergo "Result Evaluation (accuracy)".

4. The MCQs are then filtered with GPT-4 to determine which of them can be rewritten as open-style questions.

5. GPT-4 classifies the questions as "YES" (can be open-style) or "No" (cannot be open-style).

6. Questions classified as "No" are assigned a confidence score (1-10) by GPT-4, giving them a second chance to be recognized as convertible.

7. A threshold is applied to the confidence score: a question scoring greater than the threshold is moved to the "YES" category, and a question scoring less than the threshold is removed.

8. Questions in the "YES" category then proceed to the open-style questions path.
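The two-stage filter in steps 4 to 7 can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the authors' implementation: `classify` and `score` are hypothetical stand-ins for GPT-4 prompts, the default threshold of 5 is illustrative (the diagram gives no value), and the code assumes, as the "Move to 'YES' category" action implies, that the confidence score is applied to questions first classified as "No". The diagram does not say what happens at exactly the threshold, so ties are treated as removals here.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class MCQ:
    question: str
    options: List[str]
    answer: str  # text of the correct option


# Hypothetical stand-ins for GPT-4 calls (not a real API):
Classifier = Callable[[MCQ], str]  # returns "YES" or "NO"
Scorer = Callable[[MCQ], int]      # returns a confidence score in 1..10


def filter_open_style(
    questions: Iterable[MCQ],
    classify: Classifier,
    score: Scorer,
    threshold: int = 5,  # illustrative value; the diagram does not give one
) -> List[MCQ]:
    """Return the MCQs judged rewritable as open-style questions."""
    kept: List[MCQ] = []
    for q in questions:
        # Stage 1: binary YES/NO classification.
        if classify(q).strip().upper() == "YES":
            kept.append(q)
            continue
        # Stage 2 (assumed placement): score the questions labelled "No".
        s = score(q)
        if not 1 <= s <= 10:
            raise ValueError(f"confidence score out of range: {s}")
        if s > threshold:
            kept.append(q)  # "Greater than threshold": move to the YES category
        # Otherwise ("Less than threshold", ties included here): remove.
    return kept


if __name__ == "__main__":
    qs = [
        MCQ("What is the capital of France?", ["Paris", "Rome"], "Paris"),
        MCQ("Which number is not prime?", ["2", "9"], "9"),
        MCQ("Which option best completes the passage?", ["A", "B"], "A"),
    ]
    # Toy judgements standing in for GPT-4 output.
    labels = {qs[0].question: "YES", qs[1].question: "NO", qs[2].question: "NO"}
    scores = {qs[1].question: 8, qs[2].question: 2}
    kept = filter_open_style(
        qs, lambda q: labels[q.question], lambda q: scores[q.question], threshold=5
    )
    print([q.question for q in kept])
```

In the toy run, the first question passes stage 1 directly, the second is recovered in stage 2 (score 8 > 5), and the third is removed (score 2). Passing the GPT-4 calls in as callables keeps the filtering logic testable without network access.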

**Open-Style Questions Path:**

1. Responses are collected from LLMs in an open-style format.

2. An evaluation prompt is designed for grading the open-style responses.

3. These responses undergo "Result Evaluation (accuracy)".
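Steps 2 and 3 of the open-style path could be wired together roughly as below. This is a hedged sketch: the prompt wording in `JUDGE_TEMPLATE` is hypothetical (the diagram only says a prompt is designed), and `judge` stands in for whatever model grades the responses. It shows the general pattern of asking a judge for a constrained verdict, parsing that verdict strictly, and reporting accuracy as the fraction marked correct.

```python
from typing import Callable, Sequence

# Hypothetical judge interface: takes a prompt, returns the judge model's raw reply.
Judge = Callable[[str], str]

# Illustrative evaluation prompt; not the wording used in the original work.
JUDGE_TEMPLATE = (
    "Question: {question}\n"
    "Reference answer: {reference}\n"
    "Model response: {response}\n"
    "Does the model response give the same answer as the reference? "
    "Reply with exactly CORRECT or INCORRECT."
)


def build_judge_prompt(question: str, reference: str, response: str) -> str:
    return JUDGE_TEMPLATE.format(question=question, reference=reference, response=response)


def parse_verdict(reply: str) -> bool:
    """Map the judge's reply to correct/incorrect; reject anything else."""
    text = reply.strip().upper()
    if text.startswith("INCORRECT"):
        return False
    if text.startswith("CORRECT"):
        return True
    raise ValueError(f"unparseable verdict: {reply!r}")


def open_style_accuracy(
    questions: Sequence[str],
    references: Sequence[str],
    responses: Sequence[str],
    judge: Judge,
) -> float:
    """Fraction of open-style responses the judge marks as correct."""
    if not (len(questions) == len(references) == len(responses)):
        raise ValueError("questions, references and responses must align")
    if not questions:
        raise ValueError("no questions to evaluate")
    correct = sum(
        parse_verdict(judge(build_judge_prompt(q, ref, resp)))
        for q, ref, resp in zip(questions, references, responses)
    )
    return correct / len(questions)


if __name__ == "__main__":
    def toy_judge(prompt: str) -> str:
        # Stand-in for an LLM judge: naive substring match on the first three lines.
        fields = dict(line.split(": ", 1) for line in prompt.splitlines()[:3])
        hit = fields["Reference answer"].lower() in fields["Model response"].lower()
        return "CORRECT" if hit else "INCORRECT"

    acc = open_style_accuracy(
        ["What is the capital of France?", "What is 2 + 2?"],
        ["Paris", "4"],
        ["The capital is Paris.", "It is five."],
        toy_judge,
    )
    print(acc)  # 0.5
```

Rejecting unparseable verdicts, rather than silently counting them as wrong, makes prompt-format failures visible instead of letting them deflate the accuracy figure.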

**Convergence:**

Both paths (MCQ and open-style) converge at a "Comparative analysis of both formats" stage.
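One way to carry out the comparative analysis is to compute each model's accuracy in both formats over the same set of questions. The sketch below assumes a hypothetical result layout, `results[model][question_id] -> bool`, and restricts the comparison to questions graded in both formats, so the two accuracies are measured on identical items. The diagram does not specify the metric beyond accuracy, so the "gap" here is simply MCQ accuracy minus open-style accuracy.

```python
from dataclasses import dataclass
from typing import Dict

# Hypothetical layout: results[model][question_id] -> True if graded correct.
Results = Dict[str, Dict[str, bool]]


@dataclass
class FormatComparison:
    n_questions: int
    mcq_accuracy: float
    open_accuracy: float

    @property
    def gap(self) -> float:
        # Positive gap: the model scores higher with answer options than without.
        return self.mcq_accuracy - self.open_accuracy


def compare_formats(mcq: Results, open_style: Results) -> Dict[str, FormatComparison]:
    """Compare per-model accuracy on questions graded in both formats."""
    summary: Dict[str, FormatComparison] = {}
    for model in sorted(mcq.keys() & open_style.keys()):
        shared = mcq[model].keys() & open_style[model].keys()
        if not shared:
            continue  # nothing to compare for this model
        n = len(shared)
        summary[model] = FormatComparison(
            n_questions=n,
            mcq_accuracy=sum(mcq[model][q] for q in shared) / n,
            open_accuracy=sum(open_style[model][q] for q in shared) / n,
        )
    return summary


if __name__ == "__main__":
    mcq = {
        "model-a": {"q1": True, "q2": True, "q3": False},
        "model-b": {"q1": True, "q2": False},
    }
    open_style = {
        "model-a": {"q1": True, "q2": False},
        "model-b": {"q1": False, "q2": False},
    }
    for name, c in compare_formats(mcq, open_style).items():
        print(f"{name}: n={c.n_questions} mcq={c.mcq_accuracy:.2f} "
              f"open={c.open_accuracy:.2f} gap={c.gap:+.2f}")
```

Here `q3` is ignored for `model-a` because it has no open-style grade, mirroring the fact that only filtered questions reach the open-style path.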

### Key Observations

* The diagram highlights the use of GPT-4 for both filtering MCQs and assigning confidence scores.

* A threshold-based decision-making process is used to determine which MCQs are suitable for conversion to open-style questions.

* Both question formats are evaluated with the same metric, accuracy, which makes their results directly comparable.

* The final step involves a comparative analysis, suggesting a goal of understanding the performance differences between the two question formats.

### Interpretation

The diagram illustrates a workflow for using LLMs and GPT-4 to analyze and evaluate question-answering performance. The filtering stage identifies MCQs that can be rewritten as open-style questions, so that the same underlying questions can be posed without answer options, which may elicit more nuanced responses. The confidence score and threshold act as quality control, keeping questions that are poorly suited to an open-style format out of the open-style analysis. The final comparative analysis indicates a focus on the strengths and weaknesses of each question format, which could inform future question design and LLM evaluation strategies. Overall, the diagram suggests a research or development context aimed at a deeper understanding of LLM capabilities and, potentially, at better question-answering systems.