## Diagram: Knowledge Graph Construction from Cloze Questions via LLMs

### Overview

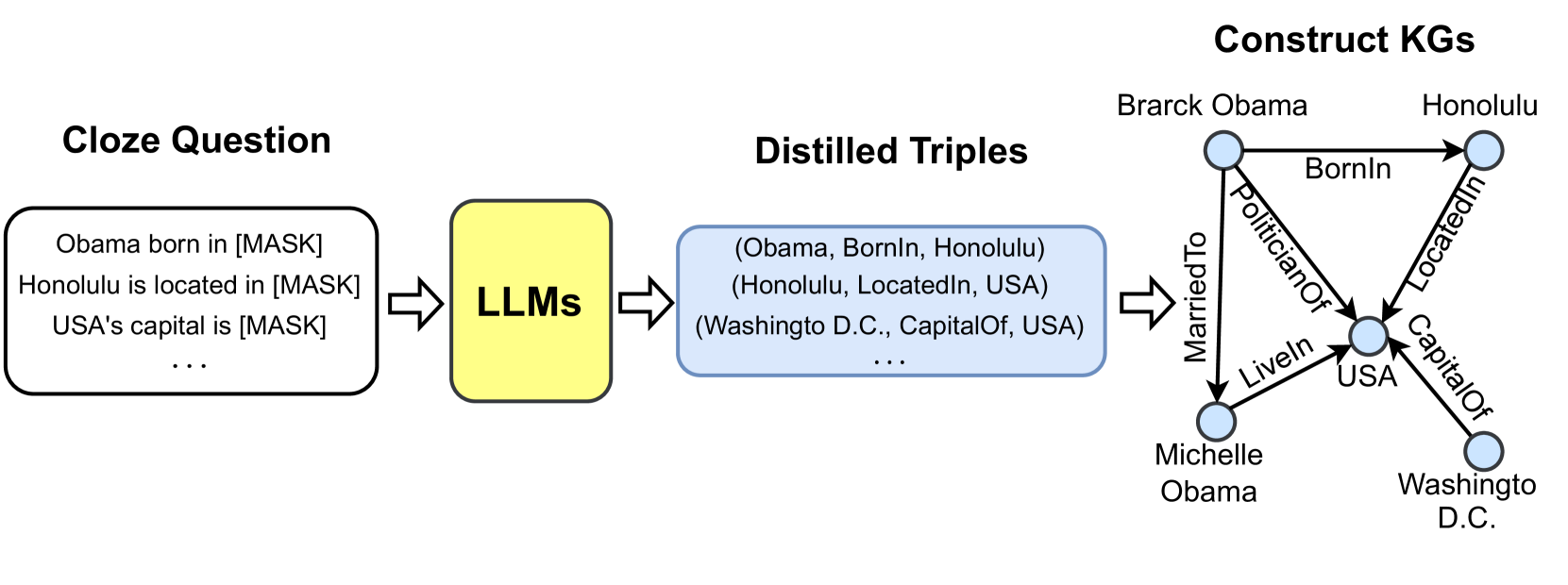

This image is a flowchart illustrating a four-stage pipeline for constructing a Knowledge Graph (KG). Natural-language cloze-style (fill-in-the-blank) questions are passed to Large Language Models (LLMs), whose completions yield structured relational triples. These triples are then assembled into a connected knowledge graph. The diagram is presented on a light gray background.

### Components/Axes

The diagram is segmented into four primary components, arranged from left to right, connected by right-pointing block arrows.

1. **Cloze Question (Leftmost Component):**
    * A black-bordered, rounded rectangle containing example text.
    * **Text Content:**
        * `Obama born in [MASK]`
        * `Honolulu is located in [MASK]`
        * `USA's capital is [MASK]`
        * `...` (indicating more questions)

2. **LLMs (Central Processing Component):**
    * A yellow, rounded rectangle with a black border.
    * **Label:** `LLMs` (centered within the box).

3. **Distilled Triples (Right-Center Component):**
    * A light blue, rounded rectangle with a black border.
    * **Text Content (Structured Triples):**
        * `(Obama, BornIn, Honolulu)`
        * `(Honolulu, LocatedIn, USA)`
        * `(Washingto D.C., CapitalOf, USA)`
        * `...` (indicating more triples)
    * **Note:** The text contains a typo: "Washingto" instead of "Washington".

4. **Construct KGs (Rightmost Component):**
    * A directed graph (knowledge graph) with labeled nodes and edges.
    * **Title:** `Construct KGs` (bold, top-right).
    * **Nodes (Entities):** Represented by light blue circles with black outlines.
        * `Brarck Obama` (Note: Typo for "Barack")
        * `Honolulu`
        * `USA`
        * `Michelle Obama`
        * `Washingto D.C.` (Note: Typo for "Washington")
    * **Edges (Relationships):** Represented by black arrows with text labels.
        * From `Brarck Obama` to `Honolulu`: `BornIn`
        * From `Brarck Obama` to `USA`: `PoliticianOf`
        * From `Brarck Obama` to `Michelle Obama`: `MarriedTo`
        * From `Michelle Obama` to `USA`: `LiveIn`
        * From `Honolulu` to `USA`: `LocatedIn`
        * From `Washingto D.C.` to `USA`: `CapitalOf`

### Detailed Analysis

The diagram explicitly maps the transformation of unstructured text into structured data.

* **Stage 1 (Input):** The "Cloze Question" box provides the raw input format. The `[MASK]` token signifies the missing piece of information the LLM is expected to predict or fill.

* **Stage 2 (Processing):** The "LLMs" box acts as the inference engine. It takes the cloze questions as input and fills in each mask. Pairing the predicted answer with the entity and relation expressed in the question yields one relational fact per question.

* **Stage 3 (Intermediate Output):** The "Distilled Triples" box shows the direct output of the LLM processing. Each line is a structured triple in the format `(Subject, Predicate, Object)`. The ellipsis (`...`) indicates this is a sample from a larger set.

* **Stage 4 (Final Output):** The "Construct KGs" section visualizes how the extracted triples are assembled into a graph. Each unique entity from the triples becomes a node. Each triple defines a directed, labeled edge from its subject node to its object node. The graph shows a small, interconnected network in which `USA` is the most connected node, with four incoming edges.
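The four stages above can be sketched in a few lines of Python. This is a minimal illustration rather than the method behind the diagram: the `TEMPLATES` table, the `fill_mask` stand-in (a lookup table in place of a real LLM call), and the function names are all assumptions introduced here, and "Washington D.C." is spelled correctly rather than as it appears in the figure.

```python
# Hypothetical cloze templates: for each relation, the prompt pattern and the
# triple slot ("subject" or "object") that the masked answer fills.
TEMPLATES = {
    "BornIn": ("{entity} born in [MASK]", "object"),
    "LocatedIn": ("{entity} is located in [MASK]", "object"),
    "CapitalOf": ("{entity}'s capital is [MASK]", "subject"),
}

# Stand-in for an LLM: canned answers keyed by the cloze prompt.
CANNED_ANSWERS = {
    "Obama born in [MASK]": "Honolulu",
    "Honolulu is located in [MASK]": "USA",
    "USA's capital is [MASK]": "Washington D.C.",
}


def fill_mask(prompt):
    """Return the answer for a cloze prompt (a real system would query an LLM)."""
    return CANNED_ANSWERS[prompt]


def distill_triple(relation, entity):
    """Stages 1-3: build the cloze question, fill the mask, emit a triple."""
    pattern, mask_slot = TEMPLATES[relation]
    answer = fill_mask(pattern.format(entity=entity))
    if mask_slot == "object":
        return (entity, relation, answer)
    return (answer, relation, entity)


def build_graph(triples):
    """Stage 4: one node per unique entity, one directed labeled edge per triple."""
    graph = {}
    for subject, predicate, obj in triples:
        graph.setdefault(subject, []).append((predicate, obj))
        graph.setdefault(obj, [])  # make sure sink nodes such as USA exist
    return graph


queries = [("BornIn", "Obama"), ("LocatedIn", "Honolulu"), ("CapitalOf", "USA")]
triples = [distill_triple(relation, entity) for relation, entity in queries]
graph = build_graph(triples)
```

In this sketch the `CapitalOf` template places the masked answer in the subject slot, which is why the third triple comes out as `("Washington D.C.", "CapitalOf", "USA")` rather than the reverse.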

### Key Observations

1. **Data Flow:** The process is linear and unidirectional: Unstructured Text -> LLM Inference -> Structured Triples -> Knowledge Graph.

2. **Entity Consolidation:** The graph demonstrates entity resolution. For example, the short mention "Obama" in the `BornIn` triple maps to the fully named node `Brarck Obama`, while the object "Honolulu" maps to an identically named node.

3. **Relationship Expansion:** The final graph contains relationships (`PoliticianOf`, `MarriedTo`, `LiveIn`) that were not explicitly shown in the sample "Distilled Triples" box, implying the ellipsis (`...`) represents a more comprehensive set of extracted facts.

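4. **Typos/Errors:** Two typos are present: "Brarck" for "Barack" and "Washingto" for "Washington". "Washingto" appears in both the distilled triple and the graph node, whereas "Brarck" appears only in the graph node (the triple uses the short form "Obama"). The repetition of "Washingto" across both components suggests it was carried from the triple into the graph, whether it originated in the source data or in the extraction process.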
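Observations 2 and 3 can be checked mechanically. The sketch below is illustrative only: the `ALIASES` table and the helper names are hypothetical, and entity spellings are normalized to "Barack" and "Washington" so the comparison is not confounded by the typos noted above.

```python
# Edges as drawn in the graph, with spellings normalized.
GRAPH_EDGES = {
    ("Barack Obama", "BornIn", "Honolulu"),
    ("Barack Obama", "PoliticianOf", "USA"),
    ("Barack Obama", "MarriedTo", "Michelle Obama"),
    ("Michelle Obama", "LiveIn", "USA"),
    ("Honolulu", "LocatedIn", "USA"),
    ("Washington D.C.", "CapitalOf", "USA"),
}

# The three triples listed in the "Distilled Triples" box, spellings normalized.
SAMPLE_TRIPLES = [
    ("Obama", "BornIn", "Honolulu"),
    ("Honolulu", "LocatedIn", "USA"),
    ("Washington D.C.", "CapitalOf", "USA"),
]

# Hypothetical alias table mapping short mentions to canonical node names.
ALIASES = {"Obama": "Barack Obama"}


def canonical(name):
    """Map a mention to its canonical node name, if an alias is known."""
    return ALIASES.get(name, name)


def resolve(triple):
    """Apply entity resolution to the subject and object of a triple."""
    subject, predicate, obj = triple
    return (canonical(subject), predicate, canonical(obj))


resolved = {resolve(t) for t in SAMPLE_TRIPLES}

# Graph edges with no counterpart among the sample triples.
unlisted = GRAPH_EDGES - resolved
unlisted_relations = sorted({predicate for _, predicate, _ in unlisted})
print(unlisted_relations)  # ['LiveIn', 'MarriedTo', 'PoliticianOf']
```

After resolution, all three sample triples correspond to graph edges, and the remaining three edges are exactly the relations attributed to the ellipsis.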

### Interpretation

This diagram serves as a conceptual pipeline for automated knowledge base population. It demonstrates a method to leverage the latent knowledge within Large Language Models to structure information from plain text.

* **What it suggests:** The core idea is that LLMs can be prompted with cloze-style questions to extract factual, relational knowledge. The diagram does not indicate how reliable the predictions are. The extracted knowledge is stored not as text but as structured triples, which are the fundamental building blocks of knowledge graphs.

* **How elements relate:** The cloze questions define the *scope* of knowledge to be extracted (e.g., birthplaces, locations, capitals). The LLM is the *transformer* that converts this scope into structured assertions. The triples are the *atomic units* of knowledge. The knowledge graph is the *integrated system* that reveals connections between entities (e.g., linking Barack Obama to the USA via multiple relationships).

* **Notable implications:** The presence of typos highlights a critical challenge in this automated pipeline: data quality and consistency. Errors present in the extracted triples (such as "Washingto D.C.") propagate directly into the final knowledge graph, potentially affecting its reliability for downstream tasks like reasoning or question answering. The diagram argues for the feasibility of this approach while implicitly underscoring the need for robust error-checking and entity normalization steps.
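One possible form of the normalization step mentioned above is fuzzy matching against a list of known entity names. The sketch below uses `difflib.get_close_matches` from the Python standard library. The `CANONICAL_ENTITIES` vocabulary, the `0.8` cutoff, and the function names are assumptions chosen for illustration, not something the diagram specifies.

```python
import difflib

# Hypothetical reference vocabulary of correctly spelled entity names.
CANONICAL_ENTITIES = [
    "Barack Obama",
    "Michelle Obama",
    "Honolulu",
    "USA",
    "Washington D.C.",
]


def normalize_entity(name, vocabulary=CANONICAL_ENTITIES, cutoff=0.8):
    """Return the closest known entity name, or the input if none is close enough."""
    matches = difflib.get_close_matches(name, vocabulary, n=1, cutoff=cutoff)
    return matches[0] if matches else name


def normalize_triple(triple):
    """Normalize the subject and object of a triple; the predicate is left as-is."""
    subject, predicate, obj = triple
    return (normalize_entity(subject), predicate, normalize_entity(obj))


print(normalize_entity("Brarck Obama"))
print(normalize_triple(("Washingto D.C.", "CapitalOf", "USA")))
```

A check of this kind addresses misspellings only. A short mention such as "Obama" falls below the cutoff against every vocabulary entry and is returned unchanged, so alias resolution would still need a separate mechanism.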