
## Grouped Bar Charts: Model Accuracy by Problem Difficulty

### Overview

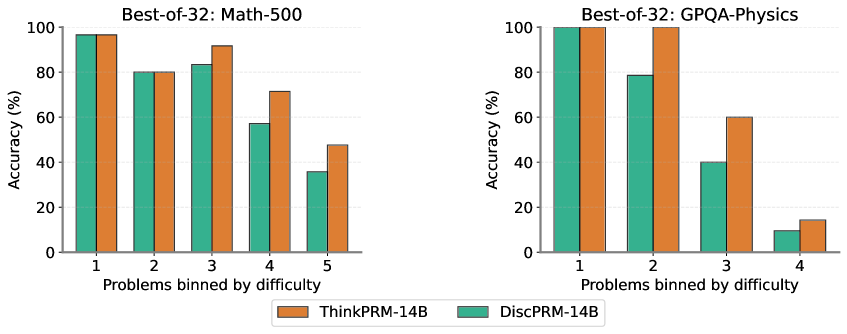

The image displays two side-by-side grouped bar charts comparing the performance of two AI models, "ThinkPRM-14B" and "DiscPRM-14B," on two different problem sets. Each chart plots the accuracy (%) of both models against problem difficulty. Accuracy generally decreases for both models as problems become more difficult. ThinkPRM-14B matches DiscPRM-14B on the easiest bins and outperforms it on all harder ones.

### Components/Axes

* **Chart Titles:**
    * Left Chart: "Best-of-32: Math-500"
    * Right Chart: "Best-of-32: GPQA-Physics"

* **Y-Axis (Both Charts):** Labeled "Accuracy (%)". The scale runs from 0 to 100 in increments of 20.

* **X-Axis (Both Charts):** Labeled "Problems binned by difficulty". The bins are numbered sequentially.
    * Left Chart (Math-500): Bins 1, 2, 3, 4, 5.
    * Right Chart (GPQA-Physics): Bins 1, 2, 3, 4.

* **Legend:** Located at the bottom center of the image, spanning both charts.
    * Orange square: "ThinkPRM-14B"
    * Teal square: "DiscPRM-14B"

* **Data Series:** Each difficulty bin contains two bars, one for each model, placed side-by-side. The left bar in each pair is teal (DiscPRM-14B), and the right bar is orange (ThinkPRM-14B).
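The side-by-side layout described above can be reproduced by offsetting each series from the bin centre by a fraction of the bar width. The sketch below computes those x-positions in plain Python. The function name `grouped_bar_positions` and the default width of 0.35 are illustrative assumptions, not details taken from the original figure.

```python
def grouped_bar_positions(n_bins, n_series, width=0.35):
    """Return one list of x-positions per series for a grouped bar chart.

    Hypothetical helper: bin i (numbered from 1) is centred at x = i, and
    the n_series bars in each bin sit side by side, each `width` wide,
    so the whole group is centred on the bin.
    """
    positions = []
    for s in range(n_series):
        # Offset of series s from the bin centre; series 0 is leftmost.
        offset = (s - (n_series - 1) / 2) * width
        positions.append([i + offset for i in range(1, n_bins + 1)])
    return positions


# Math-500 has 5 bins. Series 0 is DiscPRM-14B (teal, left bar);
# series 1 is ThinkPRM-14B (orange, right bar).
disc_x, think_x = grouped_bar_positions(n_bins=5, n_series=2)
print(disc_x)
print(think_x)
```

With Matplotlib installed, these lists would be passed as the `x` argument of `Axes.bar`, for example `ax.bar(disc_x, heights, width=0.35, color="teal")`, using the same width so adjacent bars touch without overlapping.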

### Detailed Analysis

**Left Chart: Best-of-32: Math-500**

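* **Trend Verification:** Accuracy declines overall for both models from bin 1 to bin 5, though not monotonically: both models score higher in bin 3 than in bin 2. ThinkPRM-14B's overall decline is less steep (about 50 points from bin 1 to bin 5, versus about 62 for DiscPRM-14B).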

* **Data Points (Approximate):**

* **Bin 1:** DiscPRM-14B ≈ 98%, ThinkPRM-14B ≈ 98%. (Nearly identical, very high accuracy).

* **Bin 2:** DiscPRM-14B ≈ 80%, ThinkPRM-14B ≈ 80%. (Identical, significant drop from bin 1).

* **Bin 3:** DiscPRM-14B ≈ 84%, ThinkPRM-14B ≈ 92%. (ThinkPRM leads by ~8 points; both models score higher here than in bin 2).

* **Bin 4:** DiscPRM-14B ≈ 58%, ThinkPRM-14B ≈ 72%. (ThinkPRM maintains a ~14 percentage point lead).

* **Bin 5:** DiscPRM-14B ≈ 36%, ThinkPRM-14B ≈ 48%. (Both models struggle; ThinkPRM retains a ~12-point lead).
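The trend claim can be checked step by step by differencing consecutive bins. This minimal sketch uses only the approximate bar heights listed above, not the underlying evaluation data:

```python
# Approximate Math-500 bar heights (percent accuracy), bins 1-5, read off the chart.
accuracy = {
    "DiscPRM-14B": [98, 80, 84, 58, 36],
    "ThinkPRM-14B": [98, 80, 92, 72, 48],
}

# Change from each bin to the next (negative values are declines).
changes = {
    model: [nxt - cur for cur, nxt in zip(values, values[1:])]
    for model, values in accuracy.items()
}

# A consistently non-increasing trend would have no positive step.
monotonic = {model: all(step <= 0 for step in steps) for model, steps in changes.items()}

for model in accuracy:
    print(model, changes[model], "monotonic:", monotonic[model])
```

Under these estimates both models rise from bin 2 to bin 3 (by 4 and 12 points respectively), so the Math-500 decline is an overall trend rather than a strictly monotonic one.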

**Right Chart: Best-of-32: GPQA-Physics**

* **Trend Verification:** Both models decline steeply. The gap between them widens to roughly 20 points in bins 2 and 3 before narrowing to about 5 points at the highest difficulty.

* **Data Points (Approximate):**

* **Bin 1:** DiscPRM-14B ≈ 100%, ThinkPRM-14B ≈ 100%. (Perfect or near-perfect accuracy).

* **Bin 2:** DiscPRM-14B ≈ 79%, ThinkPRM-14B ≈ 100%. (ThinkPRM maintains perfect accuracy, while DiscPRM drops significantly).

* **Bin 3:** DiscPRM-14B ≈ 40%, ThinkPRM-14B ≈ 60%. (Both drop sharply; ThinkPRM leads by ~20 points).

* **Bin 4:** DiscPRM-14B ≈ 10%, ThinkPRM-14B ≈ 15%. (Both models perform very poorly; the gap narrows to ~5 points).
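The per-bin gaps for GPQA-Physics follow from subtracting the approximate DiscPRM-14B values from the ThinkPRM-14B values. A minimal check, again using only the estimated bar heights above:

```python
# Approximate GPQA-Physics bar heights (percent accuracy), bins 1-4, read off the chart.
disc = [100, 79, 40, 10]    # DiscPRM-14B
think = [100, 100, 60, 15]  # ThinkPRM-14B

# ThinkPRM-14B's lead over DiscPRM-14B in each bin, in percentage points.
gaps = [t - d for t, d in zip(think, disc)]

# 1-indexed bin with the widest gap.
widest_bin = max(range(len(gaps)), key=gaps.__getitem__) + 1

print("gaps:", gaps)                     # → gaps: [0, 21, 20, 5]
print("widest gap at bin", widest_bin)   # → widest gap at bin 2
```

The gap peaks in bin 2 and stays close to that level in bin 3 before shrinking in the hardest bin.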

### Key Observations

1. **Consistent Superiority:** ThinkPRM-14B (orange bars) achieves equal or higher accuracy than DiscPRM-14B (teal bars) in every single difficulty bin across both subjects.

2. **Difficulty Impact:** The GPQA-Physics problems appear more challenging overall, especially at higher difficulties. Although both models start at ~100% in bin 1, their accuracy falls to 10-15% by bin 4. In contrast, the Math-500 decline is more gradual, with the two models still scoring between 36% and 48% in bin 5. Note that each chart bins its own problem set (five bins versus four), so bins are not directly comparable across datasets.

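3. **Performance Gap:** ThinkPRM-14B's advantage grows with difficulty on Math-500, peaking at ~14 points in bin 4 and remaining ~12 points in bin 5. On GPQA-Physics it peaks in the middle bins (~21 points in bin 2, ~20 in bin 3). This suggests its advantage is greatest on moderately to highly difficult problems, short of the hardest physics bin, where both models fail.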

4. **Behavior at the Extremes:** On the easiest problems the models are tied (bin 1 in both charts, and also bin 2 in Math-500). On the hardest problems the gap narrows sharply for GPQA-Physics (~5 points in bin 4) but not for Math-500, where ThinkPRM-14B still leads by ~12 points in bin 5.
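Observations 1 and 4 can be checked against the approximate values with a short script. This sketch reuses the estimated bar heights from both charts; the dictionary keys are illustrative names, not labels from the figure.

```python
# Approximate bar heights (percent accuracy) from both charts, easiest bin first.
charts = {
    "Math-500": {"disc": [98, 80, 84, 58, 36], "think": [98, 80, 92, 72, 48]},
    "GPQA-Physics": {"disc": [100, 79, 40, 10], "think": [100, 100, 60, 15]},
}

summary = {}
for name, series in charts.items():
    # ThinkPRM-14B minus DiscPRM-14B, per bin, in percentage points.
    gaps = [t - d for t, d in zip(series["think"], series["disc"])]
    summary[name] = {
        # Observation 1: ThinkPRM-14B never scores below DiscPRM-14B.
        "never_behind": all(g >= 0 for g in gaps),
        # Observation 4: gap on the easiest and on the hardest bin.
        "easiest_gap": gaps[0],
        "hardest_gap": gaps[-1],
    }

for name, result in summary.items():
    print(name, result)
```

On these estimates ThinkPRM-14B is never behind in either chart, both easiest-bin gaps are zero, and the hardest-bin gap is ~5 points for GPQA-Physics but ~12 points for Math-500.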

### Interpretation

The data shows a clear ordering of the two models under a "Best-of-32" selection strategy. ThinkPRM-14B appears more robust, as its accuracy decays more slowly with increasing problem difficulty. This is especially evident in the physics domain, where it maintains perfect accuracy one bin longer than DiscPRM-14B.
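Assuming, as the "PRM" (process reward model) naming suggests, that each model acts as a scorer in Best-of-N selection, the reported accuracy is the fraction of problems whose top-scoring candidate out of 32 is correct. The sketch below illustrates that selection rule only; the `Candidate` type, the random toy scores, and `best_of_n_accuracy` are hypothetical stand-ins, not the actual interfaces or scoring of ThinkPRM-14B or DiscPRM-14B.

```python
import random
from dataclasses import dataclass


@dataclass
class Candidate:
    """One sampled solution: the verifier's score and whether it is actually correct."""
    score: float
    correct: bool


def best_of_n_accuracy(problems):
    """Percent of problems where the highest-scoring candidate is correct.

    `problems` is a list with one list of N candidates per problem.
    """
    hits = 0
    for candidates in problems:
        chosen = max(candidates, key=lambda c: c.score)  # the scorer's pick
        if chosen.correct:
            hits += 1
    return 100.0 * hits / len(problems)


# Hypothetical toy data: 3 problems x 32 candidates with random scores and labels.
rng = random.Random(0)
toy = [
    [Candidate(score=rng.random(), correct=rng.random() < 0.5) for _ in range(32)]
    for _ in range(3)
]
print(f"Best-of-32 accuracy on toy data: {best_of_n_accuracy(toy):.1f}%")
```

Under this reading, a miss can mean either that no correct candidate was among the 32 or that the scorer ranked an incorrect one highest; the charts alone do not separate the two.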

The difference in the rate of decline between Math-500 and GPQA-Physics suggests the challenges differ in nature. The physics problems may involve more complex multi-step reasoning or specialized knowledge, causing a sharp drop for both models once a certain difficulty threshold is crossed. The math problems, while difficult, show a more gradual (though not strictly monotonic) degradation.
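The slope comparison can be made concrete by averaging the accuracy drop per bin step from the easiest to the hardest bin. This sketch uses the approximate values read off the charts; because each chart bins its own problems, a "bin" is not a common unit across datasets, so the figures are only a rough comparison.

```python
# Approximate bar heights (percent accuracy) per chart, easiest bin first.
charts = {
    "Math-500": {"DiscPRM-14B": [98, 80, 84, 58, 36], "ThinkPRM-14B": [98, 80, 92, 72, 48]},
    "GPQA-Physics": {"DiscPRM-14B": [100, 79, 40, 10], "ThinkPRM-14B": [100, 100, 60, 15]},
}


def mean_decline_per_bin(values):
    """Average drop in accuracy per bin step, from the first bin to the last."""
    return (values[0] - values[-1]) / (len(values) - 1)


slopes = {
    (chart, model): mean_decline_per_bin(values)
    for chart, series in charts.items()
    for model, values in series.items()
}

for (chart, model), slope in slopes.items():
    print(f"{chart:13s} {model:13s} {slope:5.1f} points/bin")
```

On these estimates the GPQA-Physics decline (about 28 to 30 points per bin) is roughly twice the Math-500 decline (about 12.5 to 15.5). An endpoint average also hides the bin 2 to bin 3 rise in Math-500.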

From a Peircean perspective, the charts are an indexical sign of the models' underlying reasoning architecture. The performance gap, present in every bin except the easiest one or two, points to a difference in how ThinkPRM and DiscPRM process problems, with ThinkPRM's approach proving more resilient to complexity. The charts do not reveal *why* this is the case, but they provide evidence *that* it is the case, at least on these two benchmarks, prompting investigation into the architectural or training differences between the "Think" and "Disc" paradigms.