## Line Chart: Validation Accuracy Comparison Across Epochs

### Overview

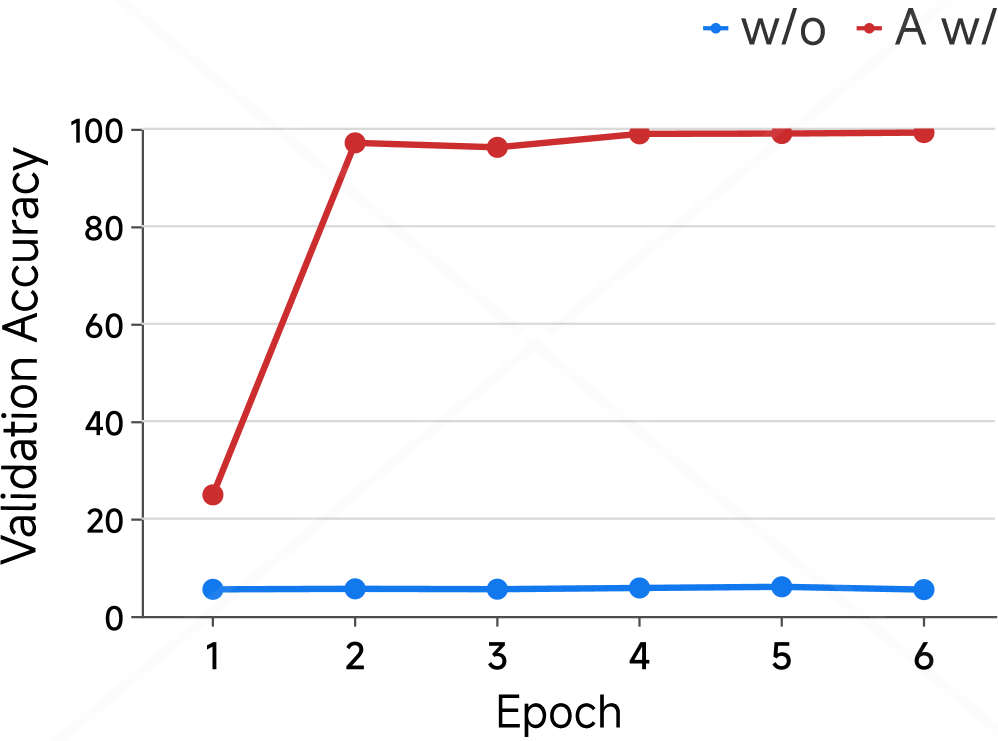

The image displays a line chart comparing the validation accuracy of two model training conditions over six epochs. The two conditions diverge sharply: one converges to high accuracy by the second epoch, while the other stays flat at a very low accuracy for all six epochs.

### Components/Axes

* **Chart Type:** Line chart with markers.

* **Y-Axis (Vertical):**
    * **Label:** "Validation Accuracy"
    * **Scale:** Linear scale from 0 to 100.
    * **Major Tick Marks:** 0, 20, 40, 60, 80, 100.

* **X-Axis (Horizontal):**
    * **Label:** "Epoch"
    * **Scale:** Discrete integer scale from 1 to 6.
    * **Tick Marks:** 1, 2, 3, 4, 5, 6.

* **Legend:**
    * **Position:** Top-right corner of the chart area.
    * **Series 1:** Blue line with circular markers, labeled "w/o".
    * **Series 2:** Red line with circular markers, labeled "A w/".

* **Data Series:** Two distinct lines, each connecting data points (circular markers) for epochs 1 through 6.

### Detailed Analysis

**Data Series 1: "w/o" (Blue Line)**

* **Trend Verification:** The blue line is essentially flat, with no meaningful upward or downward trend across the six epochs. Accuracy is consistently low.

* **Data Points (Approximate):**
    * Epoch 1: ~5%
    * Epoch 2: ~5%
    * Epoch 3: ~5%
    * Epoch 4: ~5%
    * Epoch 5: ~6%
    * Epoch 6: ~5%

* **Summary:** Validation accuracy remains stagnant at approximately 5-6% for the entire training duration.

**Data Series 2: "A w/" (Red Line)**

* **Trend Verification:** The red line shows a sharp, near-vertical increase between epoch 1 and epoch 2, followed by a plateau at a very high accuracy level for the remaining epochs.

* **Data Points (Approximate):**
    * Epoch 1: ~25%
    * Epoch 2: ~97%
    * Epoch 3: ~96%
    * Epoch 4: ~99%
    * Epoch 5: ~99%
    * Epoch 6: ~99%

* **Summary:** Validation accuracy starts low (~25%), experiences a massive jump to near-perfect performance (~97%) by the second epoch, and then stabilizes between 96% and 99% for epochs 3-6.
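The approximate readings for both series can be captured as plain data and summarized. The following is a minimal sketch: the names `readings` and `summarize` are illustrative, and the values are visual estimates from the chart, not logged metrics.

```python
# Approximate validation accuracies (%) read off the chart, epochs 1-6.
# Names are illustrative; values are visual estimates, not logged metrics.
epochs = [1, 2, 3, 4, 5, 6]
readings = {
    "w/o": [5, 5, 5, 5, 6, 5],
    "A w/": [25, 97, 96, 99, 99, 99],
}


def summarize(values):
    """Return (minimum, maximum, spread) of a series in percentage points."""
    return min(values), max(values), max(values) - min(values)


for label, values in readings.items():
    low, high, spread = summarize(values)
    print(f"{label}: min={low}%, max={high}%, spread={spread} points")
```

Under these estimates, the "w/o" series spans a single percentage point, while the "A w/" series spans 74 points, almost all of it between epochs 1 and 2.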

### Key Observations

1. **Performance Disparity:** The gap in final validation accuracy is roughly 94 percentage points. The "A w/" condition achieves near-perfect results (~99%), while the "w/o" condition fails to learn effectively (~5%).

2. **Convergence Speed:** The "A w/" model converges extremely quickly, reaching its performance plateau by the second epoch.

3. **Stability:** Both series are stable once they settle. The "w/o" model stays at ~5-6% throughout, and the "A w/" model stays between ~96% and ~99% from epoch 2 onward.

4. **Initial Condition:** Even at epoch 1, the "A w/" model (~25%) is about 20 percentage points ahead of the "w/o" model (~5%), suggesting an immediate benefit from the condition denoted by "A w/".
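The observations above can be checked numerically against the approximate readings. This is a sketch under stated assumptions: the values are visual estimates from the chart, and both the helper `plateau_epoch` and its 3-point tolerance are hypothetical choices made for illustration.

```python
# Approximate readings (%) from the chart; visual estimates only.
without = [5, 5, 5, 5, 6, 5]
with_a = [25, 97, 96, 99, 99, 99]

# Per-epoch gap in percentage points between the two conditions.
gaps = [a - b for a, b in zip(with_a, without)]


def plateau_epoch(values, tolerance=3):
    """First epoch (1-indexed) from which every value stays within
    `tolerance` points of the final value. The tolerance is an assumption."""
    final = values[-1]
    for start in range(len(values)):
        if all(abs(v - final) <= tolerance for v in values[start:]):
            return start + 1


print("gap per epoch:", gaps)
print("'A w/' plateau epoch:", plateau_epoch(with_a))
print("'w/o' plateau epoch:", plateau_epoch(without))
```

With these readings, the gap is 20 points at epoch 1 and between 91 and 94 points afterward, and the "A w/" series reaches its plateau at epoch 2. A tighter tolerance would move the plateau later, since epoch 3 dips to ~96%.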

### Interpretation

This chart supports the effectiveness of the technique or component represented by "A w/". The legend does not expand the label; it may be shorthand for "with Augmentation" or a similar method. The data suggests that:

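* **The "A w/" condition appears critical for successful learning.** Without it ("w/o"), validation accuracy stays at ~5-6% across all six epochs. Whether that is chance level depends on the number of classes, which the chart does not state, but the flat curve indicates that training is not making progress.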

* **The technique enables rapid and robust learning.** The jump from ~25% to ~97% between epochs 1 and 2 is consistent with "A w/" supplying an informative signal or regularization that lets the model quickly find the correct patterns in the data, although the chart alone cannot establish the mechanism.

* **The result is not a minor improvement but a categorical difference.** The chart doesn't show one model being 10% better; it shows one model working and the other not working at all. This points to "A w/" being a necessary component for this specific task/model architecture, not merely an optional enhancer.

**In essence, the chart tells a story of a binary outcome: with the "A w/" component, the model succeeds brilliantly and quickly; without it, the model fails completely.** This is a powerful visualization for justifying the inclusion of that component in a machine learning pipeline.
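Whether the ~5% floor of the "w/o" series amounts to random guessing depends on the number of classes, which the chart does not report. As a rough aid, the sketch below computes the chance accuracy for a balanced classification task; the function name and the class counts are hypothetical and are not taken from the chart.

```python
def chance_accuracy(num_classes):
    """Expected accuracy (%) of uniform random guessing on balanced classes."""
    if num_classes < 1:
        raise ValueError("num_classes must be at least 1")
    return 100.0 / num_classes


# Hypothetical class counts; the chart does not state the real one.
for k in (2, 10, 20, 100):
    print(f"{k} classes: chance = {chance_accuracy(k):.1f}%")
```

Under this assumption, ~5% matches chance only for a task with about 20 balanced classes. With fewer classes it would be below chance, and with many more it would be above chance.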