\n

## Diagram: Language CoT (Chain of Thought) Training Stages

### Overview

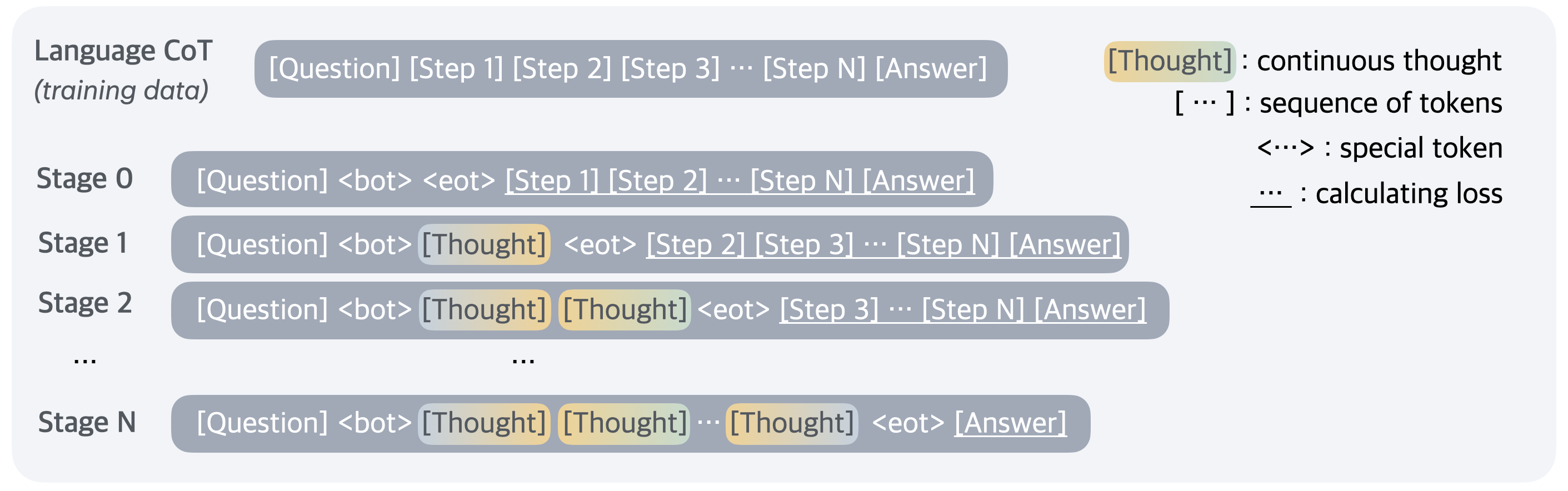

This diagram illustrates the stages of training a Language Chain of Thought (CoT) model. It depicts how the model's input structure evolves across different stages, incorporating "Thought" tokens to enhance reasoning capabilities. The diagram visually represents the progression from standard training data to a more sophisticated approach that encourages step-by-step reasoning.

### Components/Axes

The diagram is structured vertically, representing stages of training. The left side shows the input structure for each stage, while the right side provides a legend explaining the meaning of the bracketed tokens.

* **Title:** "Language CoT (training data)" at the top-left.

* **Stages:** Labeled "Stage 0", "Stage 1", "Stage 2", and "Stage N" (representing a generalized Nth stage). Stages are arranged vertically.

* **Legend:** Located at the top-right, defining the meaning of tokens:

* "[Thought] : continuous thought"

* "[...]: sequence of tokens"

* "<<>> : special token"

* "... : calculating loss"

* **Input Structure:** Each stage shows a bracketed sequence representing the model's input: "[Question] [Step 1] [Step 2] ... [Step N] [Answer]". The inclusion of "<bot>" and "<eot>" tokens varies across stages.

### Detailed Analysis or Content Details

The diagram shows a clear progression in the input structure across stages:

* **Stage 0:** "[Question] <bot> <eot> [Step 1] [Step 2] ... [Step N] [Answer]" - This stage introduces the "<bot>" and "<eot>" tokens.

* **Stage 1:** "[Question] <bot> [Thought] <eot> [Step 2] [Step 3] ... [Step N] [Answer]" - The first "[Thought]" token is inserted after "<bot>".

* **Stage 2:** "[Question] <bot> [Thought] [Thought] <eot> [Step 3] ... [Step N] [Answer]" - A second "[Thought]" token is added.

* **Stage N:** "[Question] <bot> [Thought] [Thought] ... [Thought] <eot> [Answer]" - This generalized stage shows multiple "[Thought]" tokens, indicated by "...".

The ellipsis ("...") consistently represents a sequence of tokens, and the number of "[Thought]" tokens increases with each stage. The "<eot>" token appears to act as a separator.

### Key Observations

The key observation is the systematic addition of "[Thought]" tokens into the input sequence as the training progresses. This suggests a method for explicitly guiding the model to generate intermediate reasoning steps. The "<bot>" and "<eot>" tokens likely serve as delimiters or control signals within the input.

### Interpretation

This diagram illustrates a training methodology for Language CoT models, aiming to improve their reasoning abilities. By explicitly incorporating "[Thought]" tokens, the model is encouraged to generate a chain of reasoning steps between the question and the final answer. This is a form of prompting or fine-tuning that guides the model towards more interpretable and potentially more accurate responses. The stages demonstrate a gradual increase in the complexity of the input, suggesting a progressive learning process. The inclusion of "<bot>" and "<eot>" tokens likely helps the model understand the boundaries of the input and the expected output format. The "calculating loss" notation in the legend suggests that the model's performance is evaluated based on its ability to generate correct reasoning steps and answers. The diagram highlights a shift from simply predicting the answer to generating a coherent thought process leading to the answer.