## Line Chart: Convergence of HMC Performance Across Dimensions

### Overview

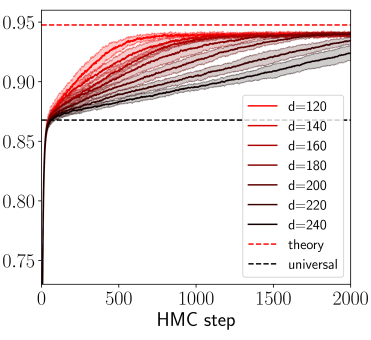

The image is a line chart plotting a performance metric (y-axis) against the number of Hamiltonian Monte Carlo (HMC) steps (x-axis) for seven values of the dimensionality `d`, from 120 to 240. The chart shows how the convergence rate and the level reached by step 2000 change as the dimensionality of the problem increases.

### Components/Axes

* **X-Axis:**

    * **Label:** `HMC step`

    * **Scale:** Linear, from 0 to 2000.

    * **Major Ticks:** 0, 500, 1000, 1500, 2000.

* **Y-Axis:**

    * **Scale:** Linear, from 0.75 to 0.95.

    * **Major Ticks:** 0.75, 0.80, 0.85, 0.90, 0.95.

* **Legend:** Located in the bottom-right quadrant of the chart area. It contains 9 entries:

    1. `d=120` (Bright red line)

    2. `d=140` (Red-orange line)

    3. `d=160` (Orange line)

    4. `d=180` (Dark orange/brown line)

    5. `d=200` (Brown line)

    6. `d=220` (Dark brown line)

    7. `d=240` (Black line)

    8. `theory` (Red dashed line)

    9. `universal` (Black dashed line)

* **Reference Lines:**

    * A horizontal red dashed line (`theory`) at y = 0.95.

    * A horizontal black dashed line (`universal`) at approximately y = 0.87.

### Detailed Analysis

The chart displays 7 solid data series, each corresponding to a different dimension `d`, and 2 horizontal reference lines.

**Trend Verification & Data Point Extraction:**

All 7 solid lines originate from approximately the same point at HMC step 0: y ≈ 0.87 (coinciding with the `universal` reference line). From there, they diverge based on their `d` value.

* **Low Dimensions (Redder lines, `d=120` to `d=160`):** These lines exhibit a steep initial ascent. They rise sharply from ~0.87, crossing y=0.90 before HMC step 250, and begin to plateau as they approach the `theory` line (y=0.95). The `d=120` line is the highest, nearly reaching 0.95 by step 2000. The `d=140` and `d=160` lines follow closely but plateau at slightly lower values (approx. 0.945 and 0.94, respectively).

* **Mid Dimensions (Brown lines, `d=180`, `d=200`):** These lines have a more moderate ascent. They cross y=0.90 later (around step 500-750) and continue to rise steadily, reaching approximately 0.935 (`d=180`) and 0.93 (`d=200`) by step 2000.

* **High Dimensions (Darker/Black lines, `d=220`, `d=240`):** These lines show the slowest convergence, with a gradual ascent. The `d=220` line reaches ~0.925, and the `d=240` line (the lowest solid line) reaches only ~0.92 by step 2000. They end roughly 0.025 to 0.03 below the `theory` line and are still visibly rising at the end of the plot.

**Spatial Grounding of Legend:** The legend is placed in the lower right of the chart area. Because every curve stays at or above y ≈ 0.87, that region lies mostly below the data. The color gradient in the legend from red (`d=120`) to black (`d=240`) matches the vertical ordering of the lines on the chart, with red lines on top and black lines at the bottom.
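The step-2000 readings quoted above can be collected and checked in a few lines. This is a minimal sketch that uses only the approximate values read off the chart; the `d=120` entry is recorded as 0.95 because the text says only that the line "nearly" reaches that level, and the variable names are illustrative.

```python
# Approximate values read off the chart at HMC step 2000.
# ASSUMPTION: d=120 is entered as 0.95 ("nearly reaching 0.95" in the text).
final_value = {
    120: 0.950,
    140: 0.945,
    160: 0.940,
    180: 0.935,
    200: 0.930,
    220: 0.925,
    240: 0.920,
}
THEORY = 0.95     # red dashed reference line
UNIVERSAL = 0.87  # black dashed reference line (approximate)

dims = sorted(final_value)

# Check that the step-2000 value never increases as d grows.
is_monotone = all(
    final_value[a] >= final_value[b] for a, b in zip(dims, dims[1:])
)

# Least-squares slope of the step-2000 value against d
# (change in the metric per unit increase in d).
n = len(dims)
mean_d = sum(dims) / n
mean_y = sum(final_value[d] for d in dims) / n
slope = sum((d - mean_d) * (final_value[d] - mean_y) for d in dims) / sum(
    (d - mean_d) ** 2 for d in dims
)

for d in dims:
    gap = THEORY - final_value[d]      # distance still to go to `theory`
    gain = final_value[d] - UNIVERSAL  # rise above the common starting level
    print(f"d={d}: final={final_value[d]:.3f}  gap={gap:.3f}  gain={gain:.3f}")
print(f"monotone in d: {is_monotone}")
print(f"slope per unit d: {slope:.6f}")
```

With these readings the step-2000 value falls by about 0.005 for every increase of 20 in `d`. The readings are visual estimates, so the slope is indicative only.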

### Key Observations

1. **Dimensionality-Dependent Convergence Rate:** The ordering is monotonic: as dimension `d` increases, the curves rise more slowly. The low-`d` lines cross y=0.90 before step 250, while the mid-`d` lines do so only around step 500-750, so lower-dimensional problems converge faster.

2. **Performance at Step 2000:** The value reached at HMC step 2000 decreases monotonically with `d`, from nearly 0.95 at `d=120` to ~0.92 at `d=240`. Lower `d` values end closer to the `theory` limit.

3. **Common Starting Point:** All simulations, regardless of dimension, begin at the same performance level (~0.87), which coincides with the `universal` reference line.

4. **Asymptotic Behavior:** The lines for lower `d` appear to approach the `theory` line (y=0.95) asymptotically. The lines for higher `d` have not plateaued within the plotted range, so the chart alone does not show whether they would eventually reach the `theory` line or level off at a lower value.

### Interpretation

This chart is consistent with a well-known challenge in Hamiltonian Monte Carlo (HMC) sampling, the "curse of dimensionality." At any fixed number of HMC steps, the performance metric (likely a measure of sampling efficiency, such as the effective sample size or a correlation metric) is lower for larger problem dimensionality (`d`).

* **The `theory` line (y=0.95)** likely represents an idealized, theoretical upper bound on performance for this specific model or target distribution, achievable under optimal conditions or in low dimensions.

* **The `universal` line (y≈0.87)** likely represents a baseline performance level shared by all runs at step 0, possibly the performance of a naive sampler or of the initial state.

* **The core finding** is that while HMC comes close to the `theory` level in lower dimensions (`d=120`), in higher dimensions (`d=240`) it converges much more slowly and, within the plotted budget of 2000 steps, ends about 0.03 lower (~0.92 versus 0.95). This implies that for high-dimensional problems either many more HMC steps are required to reach the same level of performance, or the achievable performance ceiling is inherently lower. The chart does not distinguish between these two possibilities, but it does quantify the cost of added dimensionality at a fixed step count.
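To give a feel for the "many more HMC steps" scenario, the sketch below extrapolates the `d=240` curve under two loud assumptions that do not come from the chart: that the curve follows a single exponential saturation, y(t) = y_inf - (y_inf - y0) * exp(-t / tau), and that its ceiling y_inf equals the `theory` line. The function names are hypothetical, and the inputs are the approximate readings quoted above.

```python
import math

Y0 = 0.87     # common starting level (the `universal` line, approximate)
Y_INF = 0.95  # ASSUMED ceiling: the `theory` line
T_END = 2000  # last plotted HMC step


def time_constant(y_end, y0=Y0, y_inf=Y_INF, t_end=T_END):
    """Solve y_end = y_inf - (y_inf - y0) * exp(-t_end / tau) for tau."""
    remaining = (y_inf - y_end) / (y_inf - y0)  # fraction of the gap left
    return -t_end / math.log(remaining)


def steps_to_reach(target, tau, y0=Y0, y_inf=Y_INF):
    """Steps needed to reach `target` on the same assumed curve."""
    remaining = (y_inf - target) / (y_inf - y0)
    return -tau * math.log(remaining)


# d=240 reaches about 0.92 at step 2000 (approximate chart reading).
tau_240 = time_constant(0.92)

# Steps needed for d=240 to reach 0.945, the level d=140 shows at step 2000.
steps_240 = steps_to_reach(0.945, tau_240)

print(f"tau for d=240: {tau_240:.0f} steps")
print(f"steps for d=240 to reach 0.945: {steps_240:.0f}")
```

Under these assumptions the `d=240` run would need roughly 5,650 steps, nearly three times the plotted budget, to match the level `d=140` reaches by step 2000. If the true ceiling for `d=240` is below 0.95, no number of steps would suffice, which is the second possibility raised above.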