## Heatmap: Layer Importance vs. Parameter

### Overview

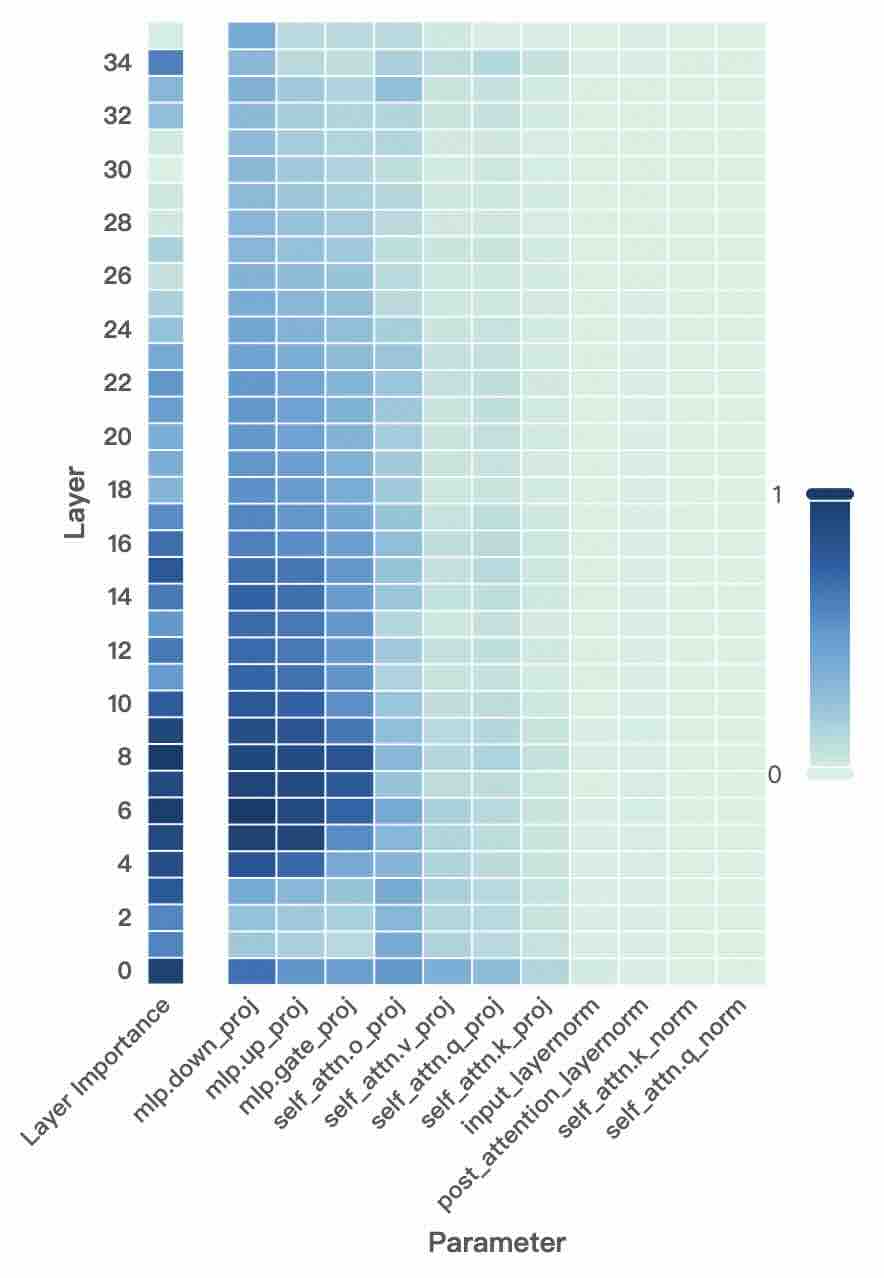

The image is a heatmap showing an importance score for each combination of layer and parameter in a neural network. Color intensity encodes the score, ranging from dark blue (high importance, value of 1) to light green (low importance, value of 0). The y-axis is the layer number, and the x-axis lists the parameters.

### Components/Axes

* **X-axis (Parameter):**
    * mlp.down\_proj
    * mlp.up\_proj
    * mlp.gate\_proj
    * self\_attn.o\_proj
    * self\_attn.v\_proj
    * self\_attn.q\_proj
    * self\_attn.k\_proj
    * input\_layernorm
    * post\_attention\_layernorm
    * self\_attn.k\_norm
    * self\_attn.q\_norm

* **Y-axis (Layer):** Numerical values from 0 to 34, incrementing by 2.

* **Legend:** A vertical color gradient from light green (0) to dark blue (1), indicating the level of importance. Located on the right side of the heatmap.

* **Title:** Layer Importance (y-axis label) vs Parameter (x-axis label)
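The layout described above (layers as rows, parameters as columns, values between 0 and 1) can be sketched as a small grid-building routine. This is a minimal sketch under stated assumptions: the tensor-name pattern `model.layers.<layer>.<parameter>.weight`, the helper `build_grid`, and the min-max scaling are hypothetical illustrations, since the figure does not state how its scores were computed or normalized.

```python
import re

# Column order as listed on the x-axis of the heatmap.
PARAMETERS = [
    "mlp.down_proj", "mlp.up_proj", "mlp.gate_proj",
    "self_attn.o_proj", "self_attn.v_proj",
    "self_attn.q_proj", "self_attn.k_proj",
    "input_layernorm", "post_attention_layernorm",
    "self_attn.k_norm", "self_attn.q_norm",
]

# Assumed (hypothetical) naming scheme: "...layers.<layer>.<parameter>.weight"
NAME_PATTERN = re.compile(r"layers\.(\d+)\.(.+)\.weight$")


def build_grid(scores, num_layers):
    """Arrange {tensor_name: raw_score} into a layers x parameters grid.

    Cells are min-max scaled to [0, 1] (an assumption made to match the
    0-to-1 color scale); cells with no score stay None.
    """
    grid = [[None] * len(PARAMETERS) for _ in range(num_layers)]
    for name, score in scores.items():
        match = NAME_PATTERN.search(name)
        if match is None:
            continue  # not a per-layer tensor
        layer, param = int(match.group(1)), match.group(2)
        if param in PARAMETERS and layer < num_layers:
            grid[layer][PARAMETERS.index(param)] = score

    values = [v for row in grid for v in row if v is not None]
    if not values:
        return grid
    lo, hi = min(values), max(values)
    span = (hi - lo) or 1.0  # avoid division by zero when all scores are equal
    return [[None if v is None else (v - lo) / span for v in row] for row in grid]


# Toy scores for illustration only; these are not values read from the figure.
toy_scores = {
    "model.layers.0.mlp.down_proj.weight": 8.0,
    "model.layers.0.input_layernorm.weight": 2.0,
    "model.layers.1.mlp.down_proj.weight": 5.0,
}
grid = build_grid(toy_scores, num_layers=2)
```

A grid in this shape (one row per layer, one column per parameter) is what a plotting library would then render as the colored cells.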

### Detailed Analysis

The heatmap shows an importance value for every layer (0-34) and parameter. Reading each column from layer 0 upward, the patterns are:

* **mlp.down\_proj:** High importance (dark blue) from layer 0 to approximately layer 16, then gradually decreasing to light green by layer 34.

* **mlp.up\_proj:** High importance (dark blue) from layer 0 to approximately layer 16, then gradually decreasing to light green by layer 34.

* **mlp.gate\_proj:** High importance (dark blue) from layer 4 to approximately layer 8, then gradually decreasing to light green by layer 34.

* **self\_attn.o\_proj:** Starts with a medium blue around layer 0, decreasing to light green by layer 34.

* **self\_attn.v\_proj:** Starts with a medium blue around layer 0, decreasing to light green by layer 34.

* **self\_attn.q\_proj:** Starts with a light blue around layer 0, decreasing to light green by layer 34.

* **self\_attn.k\_proj:** Starts with a light blue around layer 0, decreasing to light green by layer 34.

* **input\_layernorm:** Light green across all layers, indicating low importance.

* **post\_attention\_layernorm:** Light green across all layers, indicating low importance.

* **self\_attn.k\_norm:** Light green across all layers, indicating low importance.

* **self\_attn.q\_norm:** Light green across all layers, indicating low importance.

### Key Observations

* The parameters `mlp.down_proj` and `mlp.up_proj` show the highest importance in the lower layers (0-16), while `mlp.gate_proj` peaks in a narrower band, roughly layers 4-8.

* The parameters `input_layernorm`, `post_attention_layernorm`, `self_attn.k_norm`, and `self_attn.q_norm` have consistently low importance across all layers.

* The importance of the MLP and attention projection parameters decreases as the layer number increases, while the normalization parameters stay low throughout.
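The observations above amount to sorting each column into one of two patterns: low everywhere, or higher in early layers than in late ones. A minimal sketch of such a check follows, assuming each column is available as a list of values in [0, 1] ordered from layer 0 upward. The function `summarize_column`, the `low_threshold` of 0.2, and the sample values are hypothetical and were not read from the figure.

```python
def summarize_column(values, low_threshold=0.2):
    """Label one parameter's per-layer importance values (layer 0 first).

    Expects at least two values. The threshold is an illustrative choice,
    not something taken from the heatmap.
    """
    if max(values) <= low_threshold:
        return "consistently low"
    half = len(values) // 2
    early = sum(values[:half]) / half                 # mean over the first half of layers
    late = sum(values[half:]) / (len(values) - half)  # mean over the remaining layers
    return "decreasing with depth" if early > late else "not decreasing"


# Illustrative columns only; real values would come from the importance scores.
projection_like = [1.0, 0.9, 0.5, 0.1]
norm_like = [0.05, 0.04, 0.05, 0.03]

labels = {
    "projection_like": summarize_column(projection_like),
    "norm_like": summarize_column(norm_like),
}
```

Comparing the means of the two halves is a deliberately coarse test; a column that rises and then falls, as described for `mlp.gate_proj`, would need a finer check.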

### Interpretation

The heatmap suggests that the MLP projections matter most in the lower layers: `mlp.down_proj` and `mlp.up_proj` score high through roughly layer 16, and `mlp.gate_proj` peaks around layers 4-8. The normalization parameters (`input_layernorm`, `post_attention_layernorm`, `self_attn.k_norm`, and `self_attn.q_norm`) appear to have minimal impact on the network's performance across all layers, based on this importance metric. The decline in importance with depth for the projection parameters could indicate that the initial layers are responsible for extracting fundamental features, while later layers refine these features with less reliance on those parameters.