TECHNICAL ASSET FINGERPRINT

14ae597f3ffa786193ebff05


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

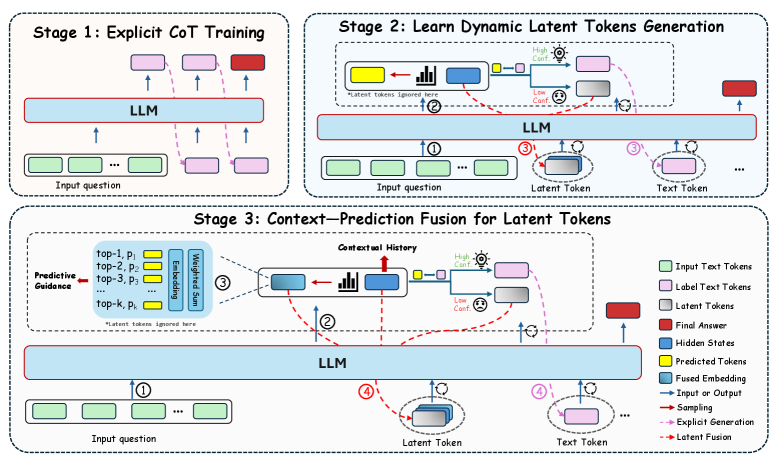

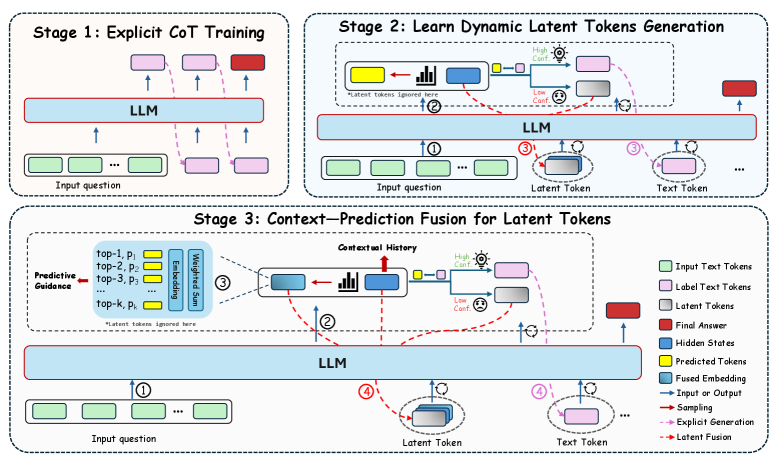

## Diagram: Multi-Stage Training Process for LLMs with Latent Tokens

### Overview

The image presents a three-stage training process for Large Language Models (LLMs) that incorporates latent tokens. The stages are: 1) Explicit Chain-of-Thought (CoT) Training, 2) Learn Dynamic Latent Tokens Generation, and 3) Context-Prediction Fusion for Latent Tokens. The diagram shows how information flows between the components within each stage.

### Components/Axes

* **Stages:** The diagram is divided into three distinct stages, each enclosed in a rounded-corner rectangle.

* **LLM:** A central "LLM" block represents the Large Language Model. It's a light blue rectangle.

* **Input Question:** Represented by green rounded rectangles.

* **Label Text Tokens:** Represented by light pink rounded rectangles.

* **Latent Tokens:** Represented by gray rounded rectangles.

* **Final Answer:** Represented by a red rounded rectangle.

* **Hidden States:** Not explicitly shown as shapes, but implied within the LLM.

* **Predicted Tokens:** Represented by yellow rounded rectangles.

* **Fused Embedding:** Represented by blue rounded rectangles with a bar chart inside.

* **Arrows:** Different types of arrows indicate the flow of information:

    * Solid blue arrows: Input or Output

    * Solid red arrows: Sampling

    * Dashed pink arrows: Explicit Generation

    * Dashed brown arrows: Latent Fusion

* **Legend:** Located in the bottom-right corner, explaining the different shapes and arrow types.

### Detailed Analysis

**Stage 1: Explicit CoT Training**

* **Description:** This stage focuses on training the LLM with explicit Chain-of-Thought reasoning.

* **Flow:**

    1. An "Input question" (green) is fed into the "LLM".

    2. The LLM generates "Label Text Tokens" (pink) in a chain-of-thought manner.

    3. The final output is the "Final Answer" (red).

* The arrows are solid blue (Input/Output) and dashed pink (Explicit Generation).

**Stage 2: Learn Dynamic Latent Tokens Generation**

* **Description:** This stage focuses on generating dynamic latent tokens.

* **Flow:**

    1. An "Input question" (green) is fed into the "LLM" (blue arrow labeled "1").

    2. The LLM generates a "Fused Embedding" (blue rectangle with a bar chart inside).

    3. The "Fused Embedding" is used to generate "Predicted Tokens" (yellow).

    4. The "Predicted Tokens" are used to generate "Latent Tokens" (gray) (dashed brown arrow labeled "2").

    5. The "Latent Tokens" and "Text Tokens" are fed back into the "LLM" (blue arrows labeled "3").

    6. The LLM generates the "Final Answer" (red).

* **Additional Elements:**

    * "Latent tokens ignored here" is written below the "Predicted Tokens" and "Fused Embedding".

    * "High Conf." and "Low Conf." icons are present near the "Predicted Tokens" and "Latent Tokens" respectively.

**Stage 3: Context-Prediction Fusion for Latent Tokens**

* **Description:** This stage focuses on fusing context and prediction to generate latent tokens.

* **Flow:**

    1. An "Input question" (green) is fed into the "LLM" (blue arrow labeled "1").

    2. "Predictive Guidance" is provided, consisting of "top-1, p1", "top-2, p2", "top-3, p3", ..., "top-k, pk" (blue box).

    3. These are processed through "Embedding" and "Weighted Sum" to create a "Fused Embedding" (blue rectangle with a bar chart inside).

    4. The "Fused Embedding" is used to generate "Predicted Tokens" (yellow).

    5. The "Predicted Tokens" are used to generate "Latent Tokens" (gray) (dashed brown arrow labeled "2").

    6. The "Latent Tokens" and "Text Tokens" are fed back into the "LLM" (blue arrows labeled "4").

    7. The LLM generates the "Final Answer" (red).

* **Additional Elements:**

    * "Contextual History" is indicated above the "Fused Embedding".

    * "Latent tokens ignored here" is written below the "Predictive Guidance" box.

    * "High Conf." and "Low Conf." icons are present near the "Predicted Tokens" and "Latent Tokens" respectively.

### Key Observations

* The diagram illustrates a progressive training process, starting with explicit CoT training and moving towards incorporating latent tokens.

* Stages 2 and 3 introduce the concept of "Latent Tokens" and their generation through "Predicted Tokens" and "Fused Embedding".

* Stage 3 incorporates "Predictive Guidance" to influence the generation of "Latent Tokens".

* The "High Conf." and "Low Conf." icons suggest a mechanism for evaluating the confidence of the generated tokens.
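The "Embedding" and "Weighted Sum" step described for Stage 3 can be sketched as a probability-weighted average of the top-k token embeddings. This is only an illustration under stated assumptions: the function names, the toy embedding table, and the renormalisation of the top-k probabilities are hypothetical, and the sketch leaves out "Contextual History" because the description does not say how it enters the fusion.

```python
import math


def softmax(logits):
    """Convert raw scores into a probability distribution."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fused_embedding(logits, embedding_table, k):
    """Weighted sum of the embeddings of the k most probable tokens.

    Assumption: weights are the top-k probabilities p1..pk,
    renormalised so that they sum to 1.
    """
    probs = softmax(logits)
    # Indices of the k most probable tokens ("top-1" ... "top-k").
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    mass = sum(probs[i] for i in top)
    dim = len(embedding_table[0])
    fused = [0.0] * dim
    for i in top:
        weight = probs[i] / mass
        for d in range(dim):
            fused[d] += weight * embedding_table[i][d]
    return fused


# Toy vocabulary of 4 tokens with 2-dimensional embeddings (made-up values).
table = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, -1.0]]
# Tokens 0 and 1 are equally likely, so the fused vector lies between them.
print(fused_embedding([2.0, 2.0, 0.0, -5.0], table, k=2))
```

With `k=1` the result collapses to the embedding of the single most likely token, so the fused embedding only differs from an ordinary text token when probability is spread over several candidates.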

### Interpretation

The diagram outlines a sophisticated approach to training LLMs by integrating latent tokens. The progression from explicit CoT training to context-prediction fusion suggests an attempt to enhance the model's reasoning capabilities by leveraging both explicit and implicit information. The use of "Predictive Guidance" in Stage 3 indicates an effort to control and refine the generation of latent tokens, potentially leading to more accurate and relevant outputs. The confidence indicators ("High Conf." and "Low Conf.") likely play a role in filtering or weighting the generated tokens, ensuring that only the most reliable information is used in the final prediction. This multi-stage approach aims to improve the LLM's ability to understand and respond to complex queries by incorporating a richer representation of the input and its context.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: LLM Training Stages

### Overview

The image depicts a three-stage process for training a Large Language Model (LLM), focusing on incorporating dynamic latent tokens to improve performance. The stages are: Explicit Chain-of-Thought (CoT) Training, Learn Dynamic Latent Tokens Generation, and Context-Prediction Fusion for Latent Tokens. The diagram uses a flowchart-like structure with LLM blocks, input/output tokens, and arrows indicating data flow.

### Components/Axes

The diagram is segmented into three stages, each with its own LLM block and associated input/output elements. A legend in the bottom-right corner defines the color-coding for different token types and process indicators.

**Legend:**

* **Input Text Tokens:** Light Green

* **Label Text Tokens:** Pink

* **Latent Tokens:** Red

* **Final Answer:** Dark Blue

* **Hidden States:** Light Blue

* **Predicted Tokens:** Yellow

* **Fused Embedding:** Teal

* **Input or Output:** Black

* **Sampling:** Orange

* **Explicit Generation:** Purple

* **Latent Fusion:** Brown

Each stage has an "Input question" block (light green) and an LLM block (light blue). Arrows indicate the flow of information. Stage 3 includes additional components like "Predictive Guidance" (top-1, p1, top-2, p2, top-3, p3), "Embedding", "Weighted Sum", and "Contextual History".

### Detailed Analysis

**Stage 1: Explicit CoT Training**

* An "Input question" block (light green) feeds into an LLM block (light blue).

* The LLM outputs "Label Text Tokens" (pink) which are fed back into the LLM.

* Arrows indicate a cyclical process within the LLM.

**Stage 2: Learn Dynamic Latent Tokens Generation**

* An "Input question" block (light green) feeds into an LLM block (light blue).

* The LLM generates "Latent Tokens" (red). A small chart is shown with "High Conf." and "Low Conf." labels.

* The "Latent Tokens" are then converted into "Text Tokens" (yellow) and fed back into the LLM.

* A note states "*Latent tokens ignored here*".

* Numbered circles (1, 2, 3) indicate the flow of information.

**Stage 3: Context-Prediction Fusion for Latent Tokens**

* An "Input question" block (light green) feeds into a "Predictive Guidance" block (containing top-1, p1, top-2, p2, top-3, p3).

* The "Predictive Guidance" block feeds into an "Embedding" block, then a "Weighted Sum" block, and finally into a "Contextual History" block.

* The "Contextual History" block feeds into an LLM block (light blue).

* The LLM generates "Latent Tokens" (red). A small chart is shown with "High Conf." and "Low Conf." labels.

* The "Latent Tokens" are then converted into "Text Tokens" (yellow) and fed back into the LLM.

* A note states "*Latent tokens ignored here*".

* Numbered circles (1, 2, 3, 4) indicate the flow of information.

* Arrows indicate the flow of information, including "Sampling" (orange), "Explicit Generation" (purple), and "Latent Fusion" (brown).

### Key Observations

The diagram highlights a progressive refinement of the LLM's ability to generate and utilize latent tokens. Stage 1 establishes a baseline through explicit CoT training. Stage 2 introduces the generation of latent tokens, while Stage 3 focuses on fusing contextual information with predicted latent tokens to improve accuracy and relevance. The inclusion of confidence levels ("High Conf." and "Low Conf.") suggests a mechanism for evaluating the quality of generated latent tokens.

### Interpretation

This diagram illustrates a sophisticated approach to LLM training that moves beyond simple input-output mapping. By introducing latent tokens, the model can represent and manipulate abstract concepts, potentially leading to more nuanced and accurate responses. The three stages represent a gradual increase in complexity, starting with explicit training, then moving to dynamic token generation, and finally incorporating contextual fusion. The use of predictive guidance and weighted sums suggests an attempt to balance exploration (sampling) with exploitation (predictive accuracy). The "Latent Fusion" step indicates a strategy for integrating the latent tokens into the final output, potentially improving coherence and relevance. The diagram suggests a focus on improving the LLM's ability to reason and generate more human-like responses by leveraging the power of latent representations. The notes about ignoring latent tokens in certain steps suggest these are areas for future research or optimization.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Three-Stage LLM Enhancement with Latent Tokens

### Overview

The image is a technical diagram illustrating a three-stage process for enhancing a Large Language Model (LLM) through the use of latent tokens and context-prediction fusion. The diagram is divided into three distinct, labeled sections (Stage 1, Stage 2, Stage 3), each depicting a different phase of the model's training or inference architecture. The overall flow suggests a progression from explicit training to a more complex, dynamic system that integrates predictive guidance and contextual history.

### Components/Axes

The diagram is not a chart with axes but a process flow diagram. Its primary components are:

* **Three Main Stages:** Labeled "Stage 1: Explicit CoT Training", "Stage 2: Learn Dynamic Latent Tokens Generation", and "Stage 3: Context-Prediction Fusion for Latent Tokens".

* **Central Processing Unit:** A large, light blue rectangle labeled "LLM" is present in all three stages, representing the core language model.

* **Token Types (Legend):** A legend in the bottom-right corner defines the color-coding for various elements:

    * Light Green Rectangle: **Input Text Tokens**

    * Light Purple Rectangle: **Label Text Tokens**

    * Light Purple Rectangle (with a different shade/pattern): **Latent Tokens**

    * Red Rectangle: **Final Answer**

    * Blue Rectangle: **Hidden States**

    * Yellow Rectangle: **Predicted Tokens**

    * Blue Rectangle with a gray border: **Fused Embedding**

    * Solid Black Arrow: **Input or Output**

    * Dashed Red Arrow: **Latent Generation**

    * Dashed Red Arrow with a dot: **Explicit Generation**

    * Dashed Red Arrow with a circle: **Latent Fusion**

* **Flow Arrows:** Various solid and dashed arrows indicate the direction of data flow, generation, and fusion between components.

* **Sub-components:** Specific boxes within stages, such as "Predictive Guidance" and "Contextual History" in Stage 3.

### Detailed Analysis

**Stage 1: Explicit CoT Training**

* **Process:** This stage depicts a standard supervised training setup.

* **Flow:** A sequence of **Input Text Tokens** (light green) is fed into the **LLM**. The LLM processes them and outputs a sequence of **Label Text Tokens** (light purple), culminating in a **Final Answer** (red). The solid black arrows show a direct input-to-output mapping.
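As a rough illustration of the supervised setup described for Stage 1, the sketch below computes the mean negative log-likelihood of the label tokens given the model's per-position output distributions. The diagram does not state the loss function, so cross-entropy is an assumption here (it is the usual choice for next-token training), and `cot_nll` and its arguments are hypothetical names.

```python
import math


def cot_nll(step_probs, label_ids):
    """Mean negative log-likelihood of the label tokens.

    step_probs[t] is the model's probability distribution over the
    vocabulary at position t; label_ids[t] is the index of the label
    token (a CoT step or final-answer token) expected at that position.
    """
    if len(step_probs) != len(label_ids):
        raise ValueError("need one distribution per label token")
    total = -sum(math.log(probs[y]) for probs, y in zip(step_probs, label_ids))
    return total / len(label_ids)


# Two positions over a 2-token vocabulary (made-up probabilities).
loss = cot_nll([[0.5, 0.5], [0.25, 0.75]], [0, 1])
print(round(loss, 4))
```

The loss falls to zero only when the model assigns probability 1 to every label token, which is the sense in which the explicit reasoning steps act as direct supervision.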

**Stage 2: Learn Dynamic Latent Tokens Generation**

* **Process:** This stage introduces the generation of **Latent Tokens** alongside standard text tokens.

* **Flow:**

    1. **Input Text Tokens** (light green) enter the **LLM** (Step ①).

    2. The LLM produces **Hidden States** (blue).

    3. A decision point occurs based on confidence (illustrated by a bar chart icon and "High Conf." / "Low Conf." labels).

    4. **Latent Tokens** (light purple, distinct from label tokens) are generated via **Latent Generation** (dashed red arrow) and fed back into the LLM (Step ②).

    5. **Text Tokens** (light purple, likely label tokens) are generated via **Explicit Generation** (dashed red arrow with a dot) (Step ③).

    6. The process outputs a **Final Answer** (red).

**Stage 3: Context-Prediction Fusion for Latent Tokens**

* **Process:** This is the most complex stage, adding predictive guidance and fusing information.

* **Flow:**

    1. **Input Text Tokens** (light green) enter the **LLM** (Step ①).

    2. The LLM produces **Hidden States** (blue).

    3. **Predictive Guidance:** A box on the left provides a ranked list ("top-1, p₁", "top-2, p₂", etc.) of **Predicted Tokens** (yellow) with their probabilities. This guidance is weighted and used to inform the process.

    4. **Contextual History:** A central box shows the integration of **Hidden States** (blue) with a history of previous states (represented by a bar chart icon and a red arrow labeled "Contextual History").

    5. **Fusion:** The predictive guidance and contextual history are fused, creating a **Fused Embedding** (blue with gray border).

    6. **Latent Token Generation:** The **Fused Embedding** is used to generate **Latent Tokens** (light purple) via **Latent Fusion** (dashed red arrow with a circle) (Step ②).

    7. **Text Token Generation:** **Text Tokens** (light purple) are also generated (Step ③).

    8. Both latent and text tokens are fed back into the LLM (Step ④), leading to the final output.

### Key Observations

1. **Progressive Complexity:** The pipeline evolves from plain supervised CoT training with a single LLM (Stage 1) to a system with internal latent feedback (Stage 2), and finally to a system that incorporates predictive signals and historical context (Stage 3).

2. **Role of Latent Tokens:** Latent tokens are introduced in Stage 2 as an intermediate, non-textual representation that the model learns to generate dynamically based on confidence. They become a core component fused with other signals in Stage 3.

3. **Confidence-Based Routing:** Stage 2 explicitly shows a decision mechanism ("High Conf." / "Low Conf.") that likely determines when to generate latent versus explicit text tokens.

4. **Information Fusion:** Stage 3's key innovation is the "Fused Embedding," which combines real-time predictive guidance (from a separate module) with the model's own contextual history before generating latent tokens.

5. **Feedback Loops:** Stages 2 and 3 feature prominent feedback loops (dashed red arrows) where generated tokens (latent or text) are fed back into the LLM, suggesting an iterative or autoregressive refinement process.
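The confidence-based routing in observation 3 can be sketched as a threshold on the top next-token probability. This is a guess at the mechanism, not something the diagram specifies: the threshold value, the use of the maximum probability as the confidence score, and the `route_step` name are all assumptions made for illustration.

```python
import math


def softmax(logits):
    """Convert raw scores into a probability distribution."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route_step(logits, threshold=0.8):
    """Choose between an explicit text token and a latent token.

    High confidence: emit the single most likely token id as text.
    Low confidence: keep the whole distribution so that a latent
    token can be built from it instead of committing to one word.
    """
    probs = softmax(logits)
    best = max(range(len(probs)), key=lambda i: probs[i])
    if probs[best] >= threshold:
        return ("text", best)
    return ("latent", probs)


print(route_step([10.0, 0.0, 0.0])[0])  # one token dominates
print(route_step([1.0, 1.0, 1.0])[0])   # probability is spread evenly
```

Under this reading, the first call takes the text path because one token holds nearly all the probability mass, while the second takes the latent path because no candidate stands out.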

### Interpretation

This diagram outlines a sophisticated method for improving the reasoning and generation capabilities of Large Language Models. The core idea is to move beyond generating only human-readable text tokens.

* **Stage 1** establishes a baseline by training the model to produce explicit Chain-of-Thought (CoT) reasoning steps.

* **Stage 2** enhances the model by teaching it to create its own internal "thought" representations (Latent Tokens). These tokens likely capture abstract reasoning states or hypotheses that are more flexible and powerful than discrete text. The confidence-based routing suggests the model learns to use this latent pathway when uncertain or when deeper reasoning is required.

* **Stage 3** represents a significant architectural advancement. It fuses two signals: 1) **Predictive Guidance**, which could come from a separate, specialized model or a retrieval system offering candidate next steps, and 2) **Contextual History**, the model's own record of earlier states, which ensures continuity in long reasoning chains. By fusing these into a single embedding before generating latent tokens, the model can make more informed, context-aware, and guided "internal thoughts."

The overall progression suggests a research direction aimed at creating LLMs that can perform more deliberate, multi-step reasoning by developing an internal "workspace" of latent concepts, which can be dynamically shaped by both external knowledge and the model's own historical context. This could lead to improvements in complex problem-solving, planning, and tasks requiring long-term coherence.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

# Technical Document Extraction: LLM-Based Latent Token Generation Framework

## Diagram Overview

The image depicts a three-stage framework for latent token generation using Large Language Models (LLMs). The diagram uses color-coded components and directional arrows to represent data flow and processing stages.

---

## Stage 1: Explicit Chain-of-Thought (CoT) Training

### Components:

1. **Input Question** (Green rectangles)

   - Position: Bottom-left of the stage

   - Flow: Arrows point upward to the LLM

2. **LLM** (Blue horizontal bar)

   - Position: Central horizontal bar

   - Function: Processes input tokens

3. **Label Text Tokens** (Pink rectangles)

   - Position: Right side of the LLM

   - Flow: Arrows connect to the LLM output

4. **Final Answer** (Red rectangle)

   - Position: Top-right corner

   - Connection: Dashed arrow from LLM output

### Legend Cross-Reference:

- Green = Input Text Tokens

- Pink = Label Text Tokens

- Red = Final Answer

---

## Stage 2: Learn Dynamic Latent Tokens Generation

### Components:

1. **Input Question** (Green rectangles)

   - Position: Bottom-left

   - Flow: Arrows point to LLM and Latent Token Generator

2. **LLM** (Blue horizontal bar)

   - Position: Central

   - Function: Processes input tokens

3. **Latent Token Generator** (Yellow/Blue blocks)

   - Position: Right of LLM

   - Sub-components:

     - High Confidence (Yellow)

     - Low Confidence (Blue)

4. **Text Token** (Gray rectangles)

   - Position: Right of Latent Token Generator

   - Flow: Arrows connect to LLM output

5. **Predicted Tokens** (Yellow rectangles)

   - Position: Top-right

   - Connection: Dashed arrow from Latent Token Generator

### Legend Cross-Reference:

- Blue = Hidden States

- Yellow = Predicted Tokens

- Red Dashed = Latent Fusion

---

## Stage 3: Context-Prediction Fusion for Latent Tokens

### Components:

1. **Input Question** (Green rectangles)

   - Position: Bottom-left

   - Flow: Arrows point to Predictive Guidance

2. **Predictive Guidance** (Blue blocks)

   - Position: Left of LLM

   - Sub-components:

     - Top-k Predictions (Yellow)

     - Embedding Sum (Blue)

3. **Contextual History** (Blue bar)

   - Position: Center-left

   - Function: Aggregates historical data

4. **LLM** (Blue horizontal bar)

   - Position: Central

   - Function: Processes fused inputs

5. **Latent Token** (Gray rectangles)

   - Position: Right of LLM

   - Flow: Arrows connect to Text Token

6. **Text Token** (Gray rectangles)

   - Position: Right of Latent Token

   - Flow: Arrows connect to Final Answer

7. **Final Answer** (Red rectangle)

   - Position: Top-right

   - Connection: Dashed arrow from LLM output

### Legend Cross-Reference:

- Blue = Fused Embedding

- Yellow = Predicted Tokens

- Gray = Latent Tokens

- Red = Final Answer

---

## Legend Analysis

- **Color-Coding**:

  - Green: Input Text Tokens

  - Pink: Label Text Tokens

  - Red: Final Answer

  - Blue: Hidden States / Fused Embedding

  - Yellow: Predicted Tokens / Embedding Sum

  - Gray: Latent Tokens / Text Tokens

  - Dashed Red: Latent Fusion

- **Spatial Grounding**:

  - Legend located on the right side of the diagram

  - Color consistency verified across all stages

---

## Key Trends and Flow Analysis

1. **Stage 1** establishes explicit CoT training by connecting input questions to labeled outputs via the LLM.

2. **Stage 2** introduces dynamic latent token generation, splitting predictions into high/low confidence categories.

3. **Stage 3** fuses contextual history with predictive guidance to refine latent tokens before final answer generation.

---

## Textual Information Extraction

### Embedded Text in Diagrams:

- Stage 1: "Explicit CoT Training"

- Stage 2: "Learn Dynamic Latent Tokens Generation"

- Stage 3: "Context-Prediction Fusion for Latent Tokens"

- Component Labels:

  - "Predictive Guidance"

  - "Contextual History"

  - "High Confidence"

  - "Low Confidence"

  - "Latent Token"

  - "Text Token"

---

## Conclusion

This framework illustrates a progressive approach to enhancing LLM performance through explicit training, dynamic latent token generation, and context-aware fusion. The color-coded components and directional arrows provide a clear visualization of the data flow and processing stages.
