## Line Graph and Scatter Plot: Performance Analysis of Gemma Models with Bayesian GT Information Gain

### Overview

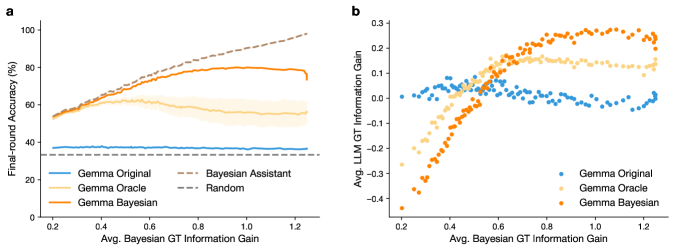

The image contains two charts (a and b) analyzing the performance of different Gemma models (Original, Oracle, Bayesian) and comparison methods (Bayesian Assistant, Random) across two metrics: final-round accuracy and information gain correlation. Chart a shows accuracy trends against Bayesian GT information gain, while Chart b examines the relationship between Bayesian and LLM GT information gains.

### Components/Axes

#### Chart a (Line Graph)

- **X-axis**: "Avg. Bayesian GT Information Gain" (range: 0.2–1.2)

- **Y-axis**: "Final-round Accuracy (%)" (range: 0–100)

- **Legend**: Located on the left, with five entries:
  - **Blue**: Gemma Original
  - **Yellow**: Gemma Oracle
  - **Orange**: Gemma Bayesian
  - **Gray dashed**: Bayesian Assistant
  - **Dark gray dashed**: Random

- **Lines**:
  - **Gemma Original**: Flat line near 35–40% (blue)
  - **Gemma Oracle**: Flat line near 55–60% (yellow)
  - **Gemma Bayesian**: Rising line from ~50% to ~80% (orange)
  - **Bayesian Assistant**: Rising line from ~50% to ~95% (gray dashed)
  - **Random**: Flat line near 35% (dark gray dashed)

#### Chart b (Scatter Plot)

- **X-axis**: "Avg. Bayesian GT Information Gain" (range: 0.2–1.2)

- **Y-axis**: "Avg. LLM GT Information Gain" (range: -0.4 to 0.3)

- **Legend**: Located on the right, with three entries:
  - **Blue**: Gemma Original
  - **Yellow**: Gemma Oracle
  - **Orange**: Gemma Bayesian

- **Data Points**:
  - **Gemma Original**: Scattered around x=0.4–0.8, y=-0.1 to 0.1
  - **Gemma Oracle**: Clustered around x=0.6–1.0, y=0.0–0.2
  - **Gemma Bayesian**: Clustered around x=0.8–1.2, y=0.1–0.3

### Detailed Analysis

#### Chart a Trends

1. **Gemma Bayesian (Orange)**: Steady upward trend, starting at ~50% accuracy at x=0.2 and reaching ~80% at x=1.2.

2. **Bayesian Assistant (Gray Dashed)**: Sharp rise from ~50% to ~95%, surpassing Gemma Bayesian at higher x-values.

3. **Gemma Oracle (Yellow)**: Flat line at ~55–60%, showing no improvement as Bayesian GT gain increases.

4. **Gemma Original (Blue)**: Minimal variation (~35–40%), the lowest of the three Gemma variants and close to the Random baseline.

5. **Random (Dark Gray Dashed)**: Flat line at ~35%, baseline performance.
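The "rising" and "flat" labels above are read by eye. As a minimal sketch, assuming one had the (x, accuracy) points behind each line, they could be made explicit with an ordinary least-squares slope. The function names, the `flat_tol` threshold of 5 accuracy points per unit of gain, and the sample points are hypothetical illustrations, not values taken from the figure.

```python
from statistics import mean


def trend_slope(xs: list[float], ys: list[float]) -> float:
    """Ordinary least-squares slope of ys against xs.

    For Chart a this would be accuracy points per unit of Bayesian GT information gain.
    """
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two paired points")
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxy / sxx


def classify_trend(xs: list[float], ys: list[float], flat_tol: float = 5.0) -> str:
    """Label a line 'flat', 'rising', or 'falling' from its fitted slope.

    flat_tol is a hypothetical threshold (accuracy points per unit of gain).
    """
    slope = trend_slope(xs, ys)
    if abs(slope) < flat_tol:
        return "flat"
    return "rising" if slope > 0 else "falling"


# Hypothetical points shaped like the description, not data read from the figure.
rising = ([0.2, 0.7, 1.2], [50.0, 65.0, 80.0])
flat = ([0.2, 0.7, 1.2], [37.0, 38.0, 37.0])
print(round(trend_slope(*rising), 3), classify_trend(*rising))  # 30.0 rising
print(classify_trend(*flat))  # flat
```

A single fitted slope per line turns a claim such as "Gemma Oracle is flat" into a check that its slope stays within the chosen tolerance.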

#### Chart b Trends

1. **Positive Correlation**: Higher Bayesian GT gain (x-axis) aligns with higher LLM GT gain (y-axis).

2. **Gemma Bayesian (Orange)**: Data points form a tight cluster in the upper right of the plot (x=0.8–1.2, y=0.1–0.3), the highest values on both axes.

3. **Gemma Oracle (Yellow)**: Moderate cluster (x=0.6–1.0, y=0.0–0.2), less consistent than Gemma Bayesian.

4. **Gemma Original (Blue)**: Scattered points with weak correlation (x=0.4–0.8, y=-0.1 to 0.1), indicating poor alignment between the two metrics.
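Chart b's "positive correlation" is a visual judgment; no coefficient is reported. A minimal sketch of how it could be quantified, assuming access to the per-point (Bayesian GT gain, LLM GT gain) pairs for each model, is Pearson's r computed per model and on the pooled points. The helper names and the toy clusters below are hypothetical and are not Chart b's data.

```python
from math import sqrt
from statistics import mean


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length samples."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two paired points")
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return sxy / sqrt(sxx * syy)


def correlation_by_model(points: dict[str, list[tuple[float, float]]]) -> dict[str, float]:
    """Return r for each model plus a pooled r over all points under the key 'pooled'."""
    result: dict[str, float] = {}
    pooled_x: list[float] = []
    pooled_y: list[float] = []
    for model, pairs in points.items():
        xs = [x for x, _ in pairs]
        ys = [y for _, y in pairs]
        result[model] = pearson_r(xs, ys)
        pooled_x.extend(xs)
        pooled_y.extend(ys)
    result["pooled"] = pearson_r(pooled_x, pooled_y)
    return result


# Two toy clusters with no within-cluster trend, placed at different positions.
toy = {"a": [(0, 0), (1, 1), (2, 0)], "b": [(5, 5), (6, 6), (7, 5)]}
print({k: round(v, 2) for k, v in correlation_by_model(toy).items()})
# {'a': 0.0, 'b': 0.0, 'pooled': 0.93}
```

The toy case shows why both numbers are worth reporting: if the positive trend in Chart b came mainly from the three clusters sitting at different positions, the pooled r would be high while the per-model values stayed low.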

### Key Observations

1. **Bayesian Methods Outperform**: Both Gemma Bayesian and Bayesian Assistant show substantial accuracy gains in Chart a, with Bayesian Assistant reaching ~95% accuracy at the highest Bayesian GT gains.

2. **Information Gain Alignment**: Chart b shows that Gemma Bayesian has the highest LLM GT gains and that they rise with its Bayesian GT gains, suggesting it makes effective use of Bayesian-style belief updating.

3. **Oracle Limitations**: Gemma Oracle’s flat accuracy curve (Chart a) and moderate cluster (Chart b) suggest it does not benefit from higher Bayesian GT information gain.

4. **Original Model Weakness**: Gemma Original underperforms across both metrics, with minimal correlation between GT gains.

### Interpretation

The data suggest that **Bayesian GT information gain is closely tied to model performance**. Gemma Bayesian and Bayesian Assistant achieve higher accuracy as this gain increases, and Gemma Bayesian shows the strongest alignment between Bayesian and LLM GT information gain. In contrast, Gemma Original and Gemma Oracle show little responsiveness to Bayesian GT gain, suggesting they integrate Bayesian-style updating less effectively. The Bayesian Assistant’s ~95% accuracy at high Bayesian GT gain makes it a natural upper-bound reference for future model development. Because both charts are correlational, they show that Bayesian GT information gain is associated with better performance rather than proving that it causes it; still, they highlight its importance for optimizing LLMs, particularly models like Gemma Bayesian that are designed to exploit it.
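The charts do not define "GT information gain" here. To make the quantity concrete, the sketch below assumes it is the reduction in Shannon entropy (in bits) of a belief distribution over candidate hypotheses, for example possible user preferences, after one Bayesian update on a round of feedback. That definition, and every function name below, is an assumption for illustration rather than the measure used in the figure. Under this assumption a single realized gain can be negative when an update leaves the belief more spread out, which would be one way negative values like those on Chart b's y-axis can arise.

```python
from math import log2


def entropy_bits(p: list[float]) -> float:
    """Shannon entropy in bits; zero-probability entries contribute nothing."""
    return -sum(pi * log2(pi) for pi in p if pi > 0)


def bayes_update(prior: list[float], likelihood: list[float]) -> list[float]:
    """Posterior proportional to prior * likelihood, renormalized to sum to 1."""
    unnorm = [p * lik for p, lik in zip(prior, likelihood)]
    z = sum(unnorm)
    if z == 0:
        raise ValueError("observation has zero probability under the prior")
    return [u / z for u in unnorm]


def information_gain(prior: list[float], likelihood: list[float]) -> float:
    """Entropy reduction in bits from one Bayesian update (assumed definition)."""
    return entropy_bits(prior) - entropy_bits(bayes_update(prior, likelihood))


# Uniform belief over 4 hypotheses; the feedback rules out two of them.
print(information_gain([0.25] * 4, [1.0, 1.0, 0.0, 0.0]))  # 1.0
# A confident belief pulled back toward uniform: the realized gain is negative.
print(information_gain([0.9, 0.1], [0.1, 0.9]) < 0)  # True
```

Averaged over the observations the prior itself predicts, this entropy reduction equals a mutual information and so is never negative; only individual updates can fall below zero.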