## Diagram: Tokenization Tree Comparison (Ukrainian vs. Russian)

### Overview

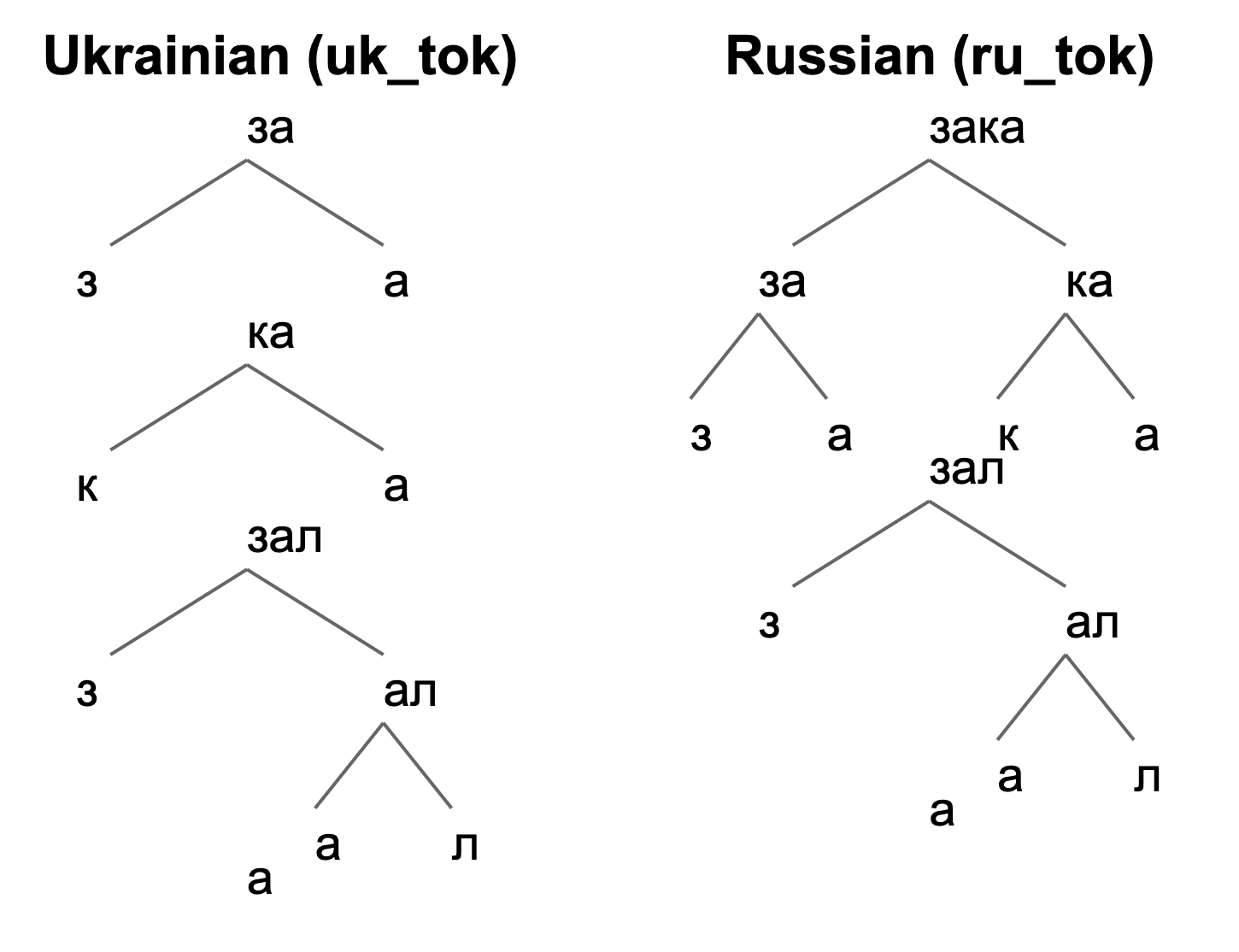

The image shows two side-by-side hierarchical tree diagrams illustrating how what appears to be the same word or phrase is tokenized in two languages: Ukrainian (left) and Russian (right). Each diagram breaks the text into sub-word units (tokens) using a branching structure. The node labels are written in the Cyrillic script; the titles are in English.

### Components/Axes

* **Titles:**
    * Left Diagram: "Ukrainian (uk_tok)"
    * Right Diagram: "Russian (ru_tok)"

* **Structure:** Each diagram is a tree with a root node at the top, branching downward into child nodes. Gray lines connect parent nodes to their child nodes.

* **Text Content (Cyrillic):** All nodes contain text in the Cyrillic alphabet. The specific words being tokenized appear to be related to the root "зал" (hall) with prefixes.

### Detailed Analysis

The diagrams show different tokenization strategies for what appears to be related lexical material.

**Ukrainian (uk_tok) Tree - Left Side:**

* **Root Node:** `за` (za)
    * Splits into: `з` (z) and `а` (a)

* **Second Level Node:** `ка` (ka)
    * Splits into: `к` (k) and `а` (a)

* **Third Level Node:** `зал` (zal)
    * Splits into: `з` (z) and `ал` (al)
        * The node `ал` (al) further splits into: `а` (a) and `л` (l)

* **Standalone Element:** There is a single, disconnected character `а` (a) positioned at the bottom-left of this diagram's area.

**Russian (ru_tok) Tree - Right Side:**

* **Root Node:** `зака` (zaka)
    * Splits into: `за` (za) and `ка` (ka)

* **Second Level Nodes:**
    * Node `за` (za) splits into: `з` (z) and `а` (a)
    * Node `ка` (ka) splits into: `к` (k) and `а` (a)

* **Third Level Node:** `зал` (zal)
    * Splits into: `з` (z) and `ал` (al)
        * The node `ал` (al) further splits into: `а` (a) and `л` (l)

* **Standalone Element:** There is a single, disconnected character `а` (a) positioned at the bottom-left of this diagram's area.
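
To make the two descriptions easier to compare, they can be encoded as plain data. The sketch below is a hypothetical Python encoding (the names `UK_TREES`, `RU_TREES`, `is_consistent`, and `top_level_tokens` are illustrative, not from the figure's source or any library). Each node is a `(text, children)` tuple, and `is_consistent` checks that every parent's text is the concatenation of its children, which any split or merge tree should satisfy. It assumes the nodes labelled second and third level are separate top-level trees (for example, `ка` is not a substring of `за`, and `зал` is not a substring of `зака`), and it leaves out the standalone `а`.

```python
# Hypothetical encoding of the two trees described above (names are
# illustrative). Each node is a (text, children) tuple; a leaf has no children.
# The standalone "а" in each panel is left out because its role is unclear.
ZA = ("за", (("з", ()), ("а", ())))
KA = ("ка", (("к", ()), ("а", ())))
AL = ("ал", (("а", ()), ("л", ())))
ZAL = ("зал", (("з", ()), AL))
ZAKA = ("зака", (ZA, KA))

UK_TREES = [ZA, KA, ZAL]  # uk_tok: за, ка and зал as separate top-level tokens
RU_TREES = [ZAKA, ZAL]    # ru_tok: за + ка combined into one token, зака


def is_consistent(tree):
    """True if every node's text equals the concatenation of its children's text."""
    text, children = tree
    if not children:
        return len(text) == 1  # leaves should be single characters
    joined = "".join(child[0] for child in children)
    return text == joined and all(is_consistent(child) for child in children)


def top_level_tokens(trees):
    """Text of each root, in the order the trees are listed."""
    return [text for text, _ in trees]


if __name__ == "__main__":
    for name, trees in (("uk_tok", UK_TREES), ("ru_tok", RU_TREES)):
        tokens = top_level_tokens(trees)
        consistent = all(is_consistent(t) for t in trees)
        print(name, tokens, "".join(tokens), consistent)
```

Running it prints `uk_tok ['за', 'ка', 'зал'] заказал True` and `ru_tok ['зака', 'зал'] заказал True`. If the top-level tokens are read in the order listed, both panels spell the same string, заказал, which fits the idea that one input was segmented two ways; the figure description alone does not confirm that left-to-right order.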

### Key Observations

1. **Different Top-Level Tokens:** The first top-level token differs. Ukrainian has the two-character token `за`, with `ка` as a separate token, while Russian has the four-character token `зака`, which itself splits into `за` + `ка`.

2. **Shared Sub-Tokens:** Both trees share identical sub-token structures for `за`, `ка`, `зал`, and `ал`. The breakdown `зал` → `з` + `ал` → `з` + (`а` + `л`) is the same in both.

3. **Standalone Character:** Both diagrams include an isolated `а` (a) at the bottom-left that is not connected to the main tree. Its purpose is unclear from the visual alone: it may represent a common sub-word unit, a token from a different part of the vocabulary, or a diagrammatic artifact.

4. **Visual Layout:** The Russian tree has an extra level at the top, where the four-character token `зака` is split into `за` and `ка`; the Ukrainian tree starts directly from the two-character token `за`.
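
The shared structure can be checked mechanically. This hypothetical sketch rebuilds both trees from the Detailed Analysis (omitting the standalone `а`) and compares every multi-character node by both its text and the way it splits, so `зал` only counts as shared if it is `з` + (`а` + `л`) on both sides. The helper names are illustrative only.

```python
# Hypothetical helpers for comparing the two trees from the Detailed Analysis.
# A node is (text, children); the standalone "а" is omitted.


def leaf(ch):
    return (ch, ())


def merge(left, right):
    """Parent node whose text is the concatenation of its two children."""
    return (left[0] + right[0], (left, right))


ZA = merge(leaf("з"), leaf("а"))
KA = merge(leaf("к"), leaf("а"))
ZAL = merge(leaf("з"), merge(leaf("а"), leaf("л")))

UK_TREES = [ZA, KA, ZAL]
RU_TREES = [merge(ZA, KA), ZAL]


def subtrees(tree):
    """Yield a node and all of its descendants."""
    yield tree
    for child in tree[1]:
        yield from subtrees(child)


def internal_nodes(trees):
    # Whole tuples are compared, so two nodes match only if they have the
    # same text and the same split all the way down.
    return {node for tree in trees for node in subtrees(tree) if node[1]}


def compare(trees_a, trees_b):
    """Return (shared, only in a, only in b) multi-character nodes, by text."""
    a, b = internal_nodes(trees_a), internal_nodes(trees_b)

    def names(nodes):
        return sorted(node[0] for node in nodes)

    return names(a & b), names(a - b), names(b - a)


if __name__ == "__main__":
    print(compare(UK_TREES, RU_TREES))
```

`compare(UK_TREES, RU_TREES)` returns `(['ал', 'за', 'зал', 'ка'], [], ['зака'])`: the shared nodes include `за`, whose `з` + `а` split is listed for both panels, and `зака` is the only node unique to the Russian tree.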

### Interpretation

This diagram is most likely a technical illustration from natural language processing (NLP) comparing **subword tokenizers** for Ukrainian and Russian, such as those built with byte-pair encoding (BPE), WordPiece, or SentencePiece.
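
As background on the first of those methods: in its classic form, BPE training starts from single characters and repeatedly merges the most frequent adjacent pair of symbols in the training data. The following is a minimal, hypothetical Python sketch of that loop, not the implementation behind `uk_tok` or `ru_tok`. The two toy corpora (`RU_TOY`, `UK_TOY`) and their counts are invented and differ only in how often `зака` occurs; the tie-breaking rule and the `min_count` cutoff are also assumptions, chosen to keep the run small and deterministic.

```python
from collections import Counter

# Invented toy corpora ({string: count}); identical except for "зака".
RU_TOY = {"зака": 4, "за": 2, "ка": 2, "зал": 3, "ал": 8}
UK_TOY = {"зака": 1, "за": 2, "ка": 2, "зал": 3, "ал": 8}


def merge_pair(symbols, pair):
    """Replace each non-overlapping occurrence of `pair` with one symbol."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)


def learn_bpe(word_freqs, num_merges=10, min_count=2):
    """Learn an ordered list of BPE merges from a {string: count} corpus."""
    # Every string starts as a sequence of single characters.
    vocab = {tuple(word): freq for word, freq in word_freqs.items()}
    merges = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs, weighted by each string's count.
        pairs = Counter()
        for symbols, freq in vocab.items():
            for pair in zip(symbols, symbols[1:]):
                pairs[pair] += freq
        if not pairs:
            break  # every string is already a single symbol
        # Most frequent pair; ties go to the smaller pair (assumed rule).
        best = min(pairs, key=lambda p: (-pairs[p], p))
        if pairs[best] < min_count:
            break  # assumed cutoff: don't merge rare pairs
        merges.append(best)
        vocab = {merge_pair(s, best): freq for s, freq in vocab.items()}
    return merges


if __name__ == "__main__":
    print("ru-style:", learn_bpe(RU_TOY))
    print("uk-style:", learn_bpe(UK_TOY))
```

With these invented counts, the Russian-style corpus learns `('а', 'л')`, `('з', 'а')`, `('к', 'а')`, `('за', 'ка')`, `('з', 'ал')`, while the Ukrainian-style corpus learns the same merges except `('за', 'ка')`, whose count of 1 falls below `min_count`. That mirrors the pattern in the figure: `зака` exists only on the Russian side, and `зал` splits as `з` + `ал` on both.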

* **What it demonstrates:** The diagram shows how what appears to be the same input (possibly related to "за зал", "for the hall") is segmented into different token sequences by a tokenizer trained on each language. If the trees are read as merge trees, where each parent token is formed by merging its children, the Russian tokenizer has learned `зака` as a single token built from `за` + `ка`, while the Ukrainian tokenizer keeps `за` and `ка` as separate tokens.

* **Linguistic Insight:** The difference reflects the statistical patterns each tokenizer learned from its training corpus. The Russian tokenizer may have seen the sequence "зака" often enough to merge it into one unit, while the Ukrainian one did not. The shared lower-level splits (`за`, `ка`, `зал`, `ал`) suggest that both tokenizers learned the same merges for these frequent character sequences, some of which coincide with morphological units such as the prefix `за-` and the root `зал`.

* **Purpose:** Such visualizations help researchers understand and debug how a tokenizer behaves, which is crucial because tokenization directly impacts the performance of downstream NLP models (like large language models) for specific languages. The standalone `а` might be included to show it is a very frequent, single-character token in the vocabulary of both models.
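
Tokenizing with a BPE vocabulary means applying the learned merges in rank order, and recording each merge produces the kind of tree the figure appears to draw. The sketch below assumes two hypothetical ranked merge lists (`UK_MERGES`, `RU_MERGES`), hand-picked to reproduce the described trees rather than taken from the real tokenizers, and uses заказал, the string spelled by either panel's top-level tokens read in the order listed, as an assumed input.

```python
# Hypothetical ranked merge lists, hand-picked to reproduce the described
# trees; they are NOT taken from the real uk_tok / ru_tok vocabularies.
UK_MERGES = [("а", "л"), ("з", "а"), ("з", "ал"), ("к", "а")]
RU_MERGES = [("а", "л"), ("з", "а"), ("к", "а"), ("за", "ка"), ("з", "ал")]


def encode_with_trees(text, merges):
    """Apply BPE merges in rank order; return one (text, children) tree per token."""
    rank = {pair: i for i, pair in enumerate(merges)}
    nodes = [(ch, ()) for ch in text]  # start from single characters
    while len(nodes) > 1:
        # Adjacent pairs that have a merge rule, as (rank, position).
        candidates = [
            (rank[(a[0], b[0])], i)
            for i, (a, b) in enumerate(zip(nodes, nodes[1:]))
            if (a[0], b[0]) in rank
        ]
        if not candidates:
            break  # no applicable merge left
        _, i = min(candidates)  # earliest-learned merge, leftmost occurrence
        left, right = nodes[i], nodes[i + 1]
        nodes[i:i + 2] = [(left[0] + right[0], (left, right))]
    return nodes


def fmt(tree):
    """Render a tree as text(child child ...), e.g. за(з а)."""
    text, children = tree
    if not children:
        return text
    return text + "(" + " ".join(fmt(child) for child in children) + ")"


if __name__ == "__main__":
    for name, merges in (("uk_tok", UK_MERGES), ("ru_tok", RU_MERGES)):
        trees = encode_with_trees("заказал", merges)
        print(name, [fmt(t) for t in trees])
```

With these lists, the Russian merges produce `зака(за(з а) ка(к а))` and `зал(з ал(а л))`, while the Ukrainian merges, which lack `('за', 'ка')`, stop at `за(з а)`, `ка(к а)`, and `зал(з ал(а л))`. The loop merges one occurrence of the best-ranked pair per step, which is slow for long texts but keeps the tree bookkeeping simple for a single word.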