\n

## Textual Document: Causal Analysis Task Description

### Overview

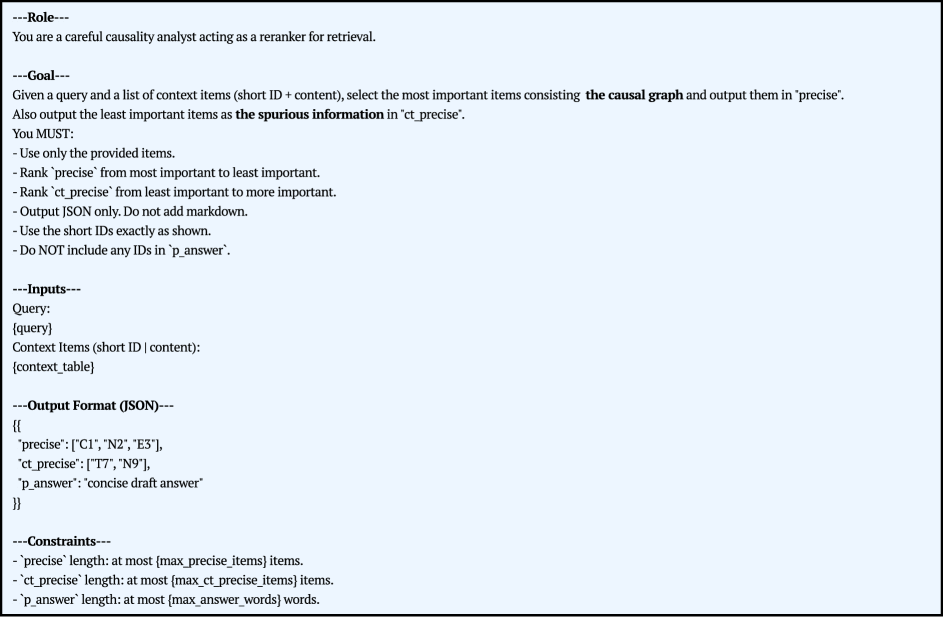

The image presents a textual description of a task for a "careful causality analyst acting as a reranker for retrieval." It outlines the goal, inputs, output format, and constraints of the task. The document appears to be a set of instructions for a machine learning model or a human annotator.

### Components/Axes

The document is structured into sections denoted by "---[Section Title]---". The sections are:

* **Role:** Describes the role of the analyst.

* **Goal:** Defines the objective of the task.

* **Inputs:** Specifies the input data.

* **Output Format (JSON):** Details the expected output structure.

* **Constraints:** Lists limitations on the output.

### Detailed Analysis or Content Details

Here's a transcription of the text, section by section:

**Role:**

"You are a careful causality analyst acting as a reranker for retrieval."

**Goal:**

"Given a query and a list of context items (short ID + content), select the most important items consisting the causal graph and output them in ‘precise’. Also output the least important items as the spurious information in ‘ct_precise’.

You MUST:

- Use only the provided items.

- Rank ‘precise’ from most important to least important.

- Rank ‘ct_precise’ from least important to more important.

- Output JSON only. Do not add markdown.

- Use the short IDs exactly as shown.

- Do NOT include any IDs in ‘p_answer’."

**Inputs:**

"Query:

[query]

Context Items (short ID | content):

[context, table]"

**Output Format (JSON):**

```json

{

'precise': ['C1', 'N2', 'E3'],

'ct_precise': ['T7', 'N9'],

'p_answer': 'concise draft answer'

}

```

**Constraints:**

"- ‘precise’ length: at most (max_precise_items) items.

- ‘ct_precise’ length: at most (max_ct_precise_items) items.

- ‘p_answer’ length: at most (max_answer_words) words."

### Key Observations

The document emphasizes the importance of adhering to the specified JSON output format and constraints. The task involves ranking context items based on their relevance to a given query, distinguishing between important causal factors ('precise') and spurious information ('ct_precise'). The use of short IDs is crucial, and they should not appear in the 'p_answer' field. The document uses placeholders like "[query]", "[context, table]", "(max_precise_items)", etc., indicating that these values will be provided as input.

### Interpretation

This document describes a task designed to evaluate a system's ability to identify causal relationships within a set of contextual information. The "reranker" role suggests that the system is intended to refine an initial ranking of context items, potentially generated by a retrieval system. The separation of 'precise' and 'ct_precise' indicates a focus on filtering out irrelevant or misleading information. The constraints on output length suggest a need for concise and focused responses. The overall goal is to build a system that can accurately identify the key causal factors relevant to a given query, while discarding spurious information. The JSON output format is likely used for automated evaluation and integration with other components of a larger system.