## Diagram: Two-Step Framework for Evaluating LLM Self-Cognition

### Overview

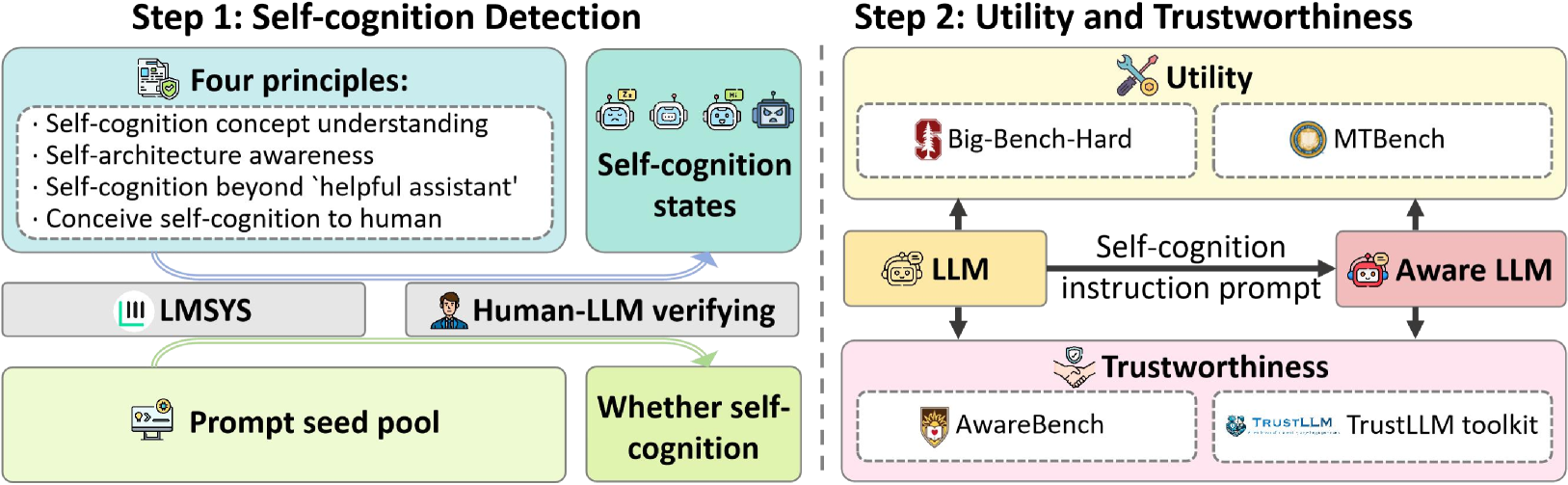

The image is a flowchart illustrating a two-step framework for evaluating self-cognition in Large Language Models (LLMs). A vertical dashed line divides the diagram into two panels: the left panel details "Step 1: Self-cognition Detection," and the right panel details "Step 2: Utility and Trustworthiness." The layout suggests a pipeline in which an LLM is first assessed for self-cognition, and its utility and trustworthiness are then evaluated both before and after it is prompted to exhibit such cognition.

### Components/Axes

The diagram is composed of labeled boxes, icons, and directional arrows indicating process flow.

**Step 1: Self-cognition Detection (Left Panel)**

* **Title:** "Step 1: Self-cognition Detection" (Top center of left panel).

* **Primary Components:**

  1. **"Four principles:"** (Top-left, light blue box with a document/shield icon). Contains a bulleted list:

     * Self-cognition concept understanding

     * Self-architecture awareness

     * Self-cognition beyond 'helpful assistant'

     * Conceive self-cognition to human

  2. **"Self-cognition states"** (Top-right of left panel, light green box with four robot head icons showing different expressions).

  3. **"LMSYS"** (Middle-left, grey box with a bar chart icon).

  4. **"Human-LLM verifying"** (Middle-right, grey box with a person icon).

  5. **"Prompt seed pool"** (Bottom-left, light green box with a computer/seed icon).

  6. **"Whether self-cognition"** (Bottom-right, light green box).

* **Flow/Connections:**

  * Arrows flow from "Four principles" and "Self-cognition states" down to both "LMSYS" and "Human-LLM verifying."

  * Arrows flow from "LMSYS" and "Human-LLM verifying" down to "Prompt seed pool" and "Whether self-cognition."
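
The Step 1 connections listed above can be written down as a small directed graph, which makes it easy to check which boxes are pure inputs and which are pure outputs. This is only a sketch: the node names are the box labels from the diagram, while the dictionary layout and the helper functions `sources` and `sinks` are illustrative assumptions rather than anything the diagram defines.

```python
# Edges transcribed from the Step 1 panel as described in the list above.
# Keys are arrow origins, values are arrow destinations.
# The data layout is an assumption made for illustration only.
STEP1_EDGES = {
    "Four principles": ["LMSYS", "Human-LLM verifying"],
    "Self-cognition states": ["LMSYS", "Human-LLM verifying"],
    "LMSYS": ["Prompt seed pool", "Whether self-cognition"],
    "Human-LLM verifying": ["Prompt seed pool", "Whether self-cognition"],
}


def sources(edges):
    """Return nodes that have outgoing arrows but no incoming arrows."""
    targets = {t for ts in edges.values() for t in ts}
    return sorted(n for n in edges if n not in targets)


def sinks(edges):
    """Return nodes that have incoming arrows but no outgoing arrows."""
    targets = {t for ts in edges.values() for t in ts}
    return sorted(t for t in targets if t not in edges)
```

Calling `sources(STEP1_EDGES)` and `sinks(STEP1_EDGES)` separates the top-row boxes from the bottom-row boxes, matching the top-to-bottom reading of the panel.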

**Step 2: Utility and Trustworthiness (Right Panel)**

* **Title:** "Step 2: Utility and Trustworthiness" (Top center of right panel).

* **Primary Components:**

  1. **"Utility"** (Top, light yellow box with a wrench/gear icon). Contains two sub-boxes:

     * "Big-Bench-Hard" (with a red shield icon).

     * "MTBench" (with a gold medal icon).

  2. **"Trustworthiness"** (Bottom, light pink box with a handshake/shield icon). Contains two sub-boxes:

     * "AwareBench" (with a lion crest icon).

     * "TrustLLM toolkit" (with a "TRUSTLLM" logo).

  3. **Central Flow:**

     * **"LLM"** (Center-left, yellow box with a robot head icon).

     * **"Aware LLM"** (Center-right, pink box with a robot head wearing a halo).

     * **Arrow & Label:** A thick black arrow points from "LLM" to "Aware LLM," labeled "Self-cognition instruction prompt."

* **Flow/Connections:**

  * Arrows point from the central "LLM" box up to "Utility" and down to "Trustworthiness."

  * Arrows point from the central "Aware LLM" box up to "Utility" and down to "Trustworthiness."

### Detailed Analysis

The diagram outlines a sequential evaluation methodology:

1. **Detection Phase (Step 1):** This phase aims to determine whether an LLM exhibits self-cognition. Its inputs are the "Four principles" and the "Self-cognition states," both of which feed two parallel verification channels: "LMSYS" and human-in-the-loop checking ("Human-LLM verifying"). The diagram labels the first channel only as "LMSYS" and does not specify its role. The two channels in turn feed a "Prompt seed pool" and a determination of "Whether self-cognition" is present.

2. **Evaluation Phase (Step 2):** The LLM is evaluated on two axes, both as-is and after being prompted to become an "Aware LLM":

* **Utility:** Measured using established benchmarks "Big-Bench-Hard" and "MTBench."

* **Trustworthiness:** Measured using "AwareBench" and the "TrustLLM toolkit."

The central process shows a standard "LLM" being transformed into an "Aware LLM" via a "Self-cognition instruction prompt." Both the original and the aware versions are then evaluated against the utility and trustworthiness metrics, allowing for a comparative analysis of the impact of self-cognition prompting.
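
The comparative design described above can be sketched in a few lines. The diagram does not show how the "Self-cognition instruction prompt" is applied or how the benchmarks are run, so everything here is a hypothetical stand-in: the `Model` and `Benchmark` types, the `make_aware` and `compare` helpers, the choice to prepend the instruction to each prompt, and the toy model and benchmark used to exercise the code.

```python
from typing import Callable, Dict

Model = Callable[[str], str]          # hypothetical: maps a prompt to a response
Benchmark = Callable[[Model], float]  # hypothetical: maps a model to a score


def make_aware(model: Model, instruction: str) -> Model:
    """Wrap a model so each prompt is prefixed with the instruction.

    Prepending is an assumption; the diagram only names the prompt.
    """
    def aware(prompt: str) -> str:
        return model(f"{instruction}\n\n{prompt}")
    return aware


def compare(
    model: Model,
    instruction: str,
    benchmarks: Dict[str, Benchmark],
) -> Dict[str, Dict[str, float]]:
    """Score the base and aware variants on every benchmark and report the difference."""
    aware = make_aware(model, instruction)
    report = {}
    for name, bench in benchmarks.items():
        base_score = bench(model)
        aware_score = bench(aware)
        report[name] = {
            "base": base_score,
            "aware": aware_score,
            "delta": aware_score - base_score,
        }
    return report


# Toy stand-ins so the sketch runs end to end; they are not real benchmarks.
def echo_model(prompt: str) -> str:
    return prompt


def length_benchmark(model: Model) -> float:
    return float(len(model("hi")))


report = compare(echo_model, "INSTR", {"toy": length_benchmark})
```

In a real setup the `benchmarks` dictionary would hold one entry per box in the right panel, and the `delta` field would carry the comparison the diagram is built around.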

### Key Observations

* **Structured Pipeline:** The framework is highly structured, moving from detection to evaluation.

* **Multi-Method Verification:** Step 1 routes detection through two channels, "LMSYS" and "Human-LLM verifying," rather than relying on a single check.

* **Comparative Design:** Step 2 is designed to compare a base LLM against its "aware" counterpart across standardized benchmarks.

* **Iconography:** Icons are used consistently to represent concepts (e.g., shields for benchmarks/principles, robot heads for models, a halo for "aware").

* **Color Coding:** Colors group related concepts: light blue and light green for most detection components (the "LMSYS" and "Human-LLM verifying" boxes are grey), yellow for utility and the standard LLM, and pink for trustworthiness and the aware LLM.

### Interpretation

This diagram presents an evaluation framework for investigating self-cognition in AI models. The "Self-cognition states" box, with its four differing robot expressions, suggests that self-cognition is treated as graded rather than strictly binary, even though the output of Step 1 is framed as a yes/no question ("Whether self-cognition"). In either reading, detection comes first and rests on a principled, multi-channel approach.

The core investigative logic is comparative. By creating an "Aware LLM" through a specific prompt and testing it alongside the original model, researchers can isolate the effects of self-cognition prompting. The choice of benchmarks ("Big-Bench-Hard," "MTBench," "AwareBench," "TrustLLM toolkit") suggests the goal is to measure whether inducing self-cognition changes general capability (utility), safety and reliability (trustworthiness), or both. The framework treats self-cognition as a behavior that can be elicited by instruction and whose downstream impacts can be measured empirically, rather than as an inherent or uncontrollable property. This places the work within AI alignment and safety research, which seeks to understand, and potentially harness, such capabilities in a controlled manner.