## Scatter Plot: AI Model Performance on SWE-Bench Verified vs. Activated Model Size

### Overview

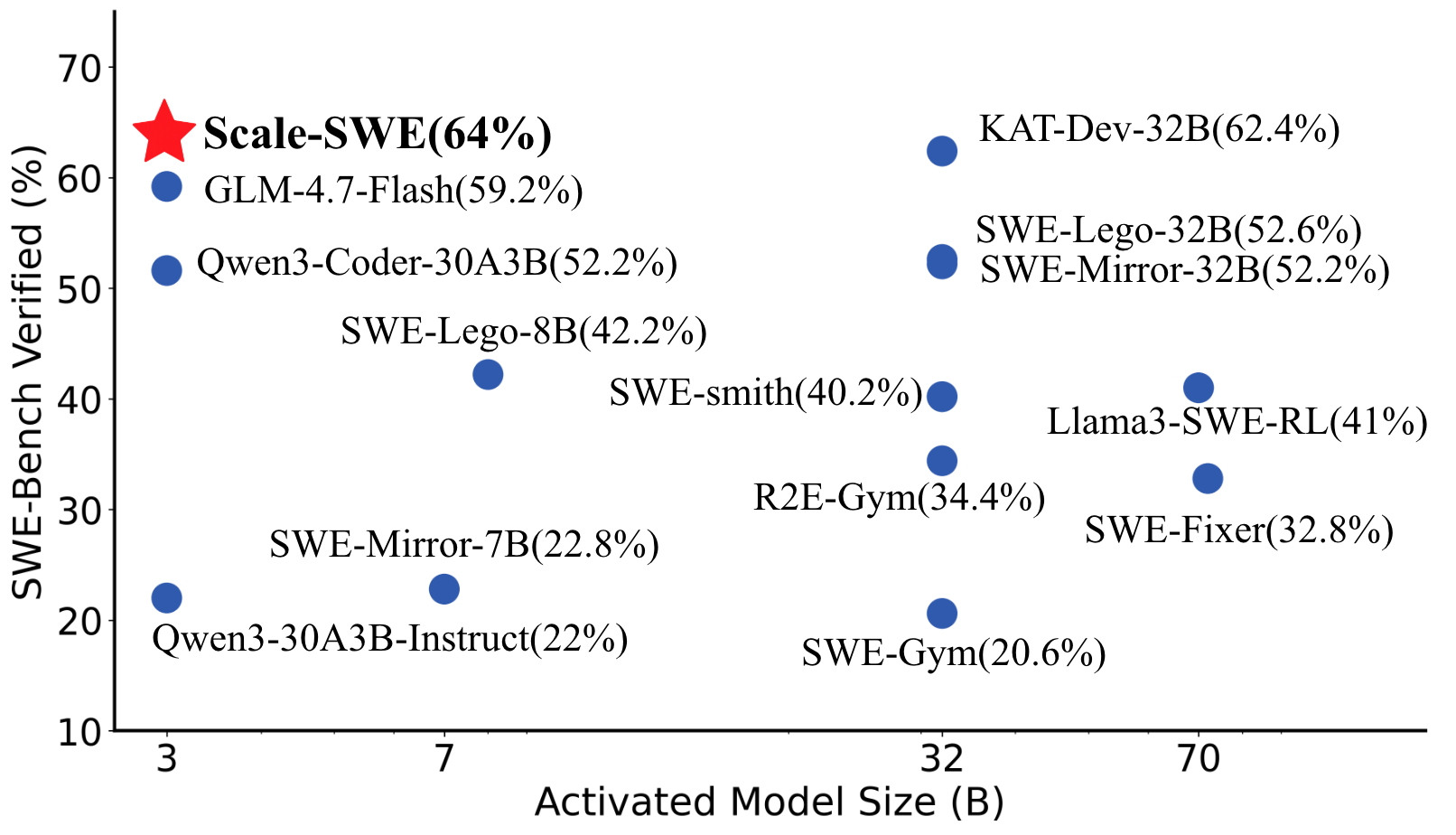

This image is a scatter plot comparing the performance of various AI models on the "SWE-Bench Verified" benchmark against their "Activated Model Size" in billions of parameters (B). A model named "Scale-SWE" is marked with a prominent red star, which singles it out as the focal result of the chart.

### Components/Axes

* **X-Axis:** Labeled "Activated Model Size (B)". It has major tick marks at values 3, 7, 32, and 70. The axis represents the scale of the model in billions of parameters.

* **Y-Axis:** Labeled "SWE-Bench Verified (%)". It ranges from 10 to 70 with increments of 10. This axis represents the accuracy or success rate percentage on the benchmark.

* **Data Points:** Each model is represented by a blue circular dot, except for "Scale-SWE" which is marked with a large red star. Each data point is accompanied by a text label stating the model name and its exact performance percentage in parentheses.

* **Legend:** There is no separate legend box. The identification of each data point is provided by its adjacent text label.

### Detailed Analysis

The plot contains 14 distinct data points. Below is a list of all models, their approximate activated size (based on x-axis position), and their exact SWE-Bench Verified score:

1. **Scale-SWE (64%)**: Marked with a red star. Positioned at approximately 3B on the x-axis and 64% on the y-axis.

2. **GLM-4.7-Flash (59.2%)**: Blue dot. Positioned at ~3B, 59.2%.

3. **Qwen3-Coder-30A3B (52.2%)**: Blue dot. Positioned at ~3B, 52.2%.

4. **Qwen3-30A3B-Instruct (22%)**: Blue dot. Positioned at ~3B, 22%.

5. **SWE-Mirror-7B (22.8%)**: Blue dot. Positioned at ~7B, 22.8%.

6. **SWE-Lego-8B (42.2%)**: Blue dot. Positioned at ~8B (slightly right of 7B), 42.2%.

7. **SWE-smith (40.2%)**: Blue dot. Positioned at ~32B, 40.2%.

8. **R2E-Gym (34.4%)**: Blue dot. Positioned at ~32B, 34.4%.

9. **SWE-Gym (20.6%)**: Blue dot. Positioned at ~32B, 20.6%.

10. **KAT-Dev-32B (62.4%)**: Blue dot. Positioned at ~32B, 62.4%.

11. **SWE-Lego-32B (52.6%)**: Blue dot. Positioned at ~32B, 52.6%.

12. **SWE-Mirror-32B (52.2%)**: Blue dot. Positioned at ~32B, 52.2%.

13. **Llama3-SWE-RL (41%)**: Blue dot. Positioned at ~70B, 41%.

14. **SWE-Fixer (32.8%)**: Blue dot. Positioned at ~70B, 32.8%.
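The list above can be captured as a small table for quick sanity checks. The following is a minimal sketch, not code from the chart's source: the names `POINTS` and `summarize_by_size` are hypothetical, and the sizes are the approximate x-axis readings given above rather than exact parameter counts.

```python
from collections import defaultdict

# (model label, approximate activated size in B, SWE-Bench Verified %)
# Sizes are read off the x-axis position in the plot, so they are approximate.
POINTS = [
    ("Scale-SWE", 3, 64.0),
    ("GLM-4.7-Flash", 3, 59.2),
    ("Qwen3-Coder-30A3B", 3, 52.2),
    ("Qwen3-30A3B-Instruct", 3, 22.0),
    ("SWE-Mirror-7B", 7, 22.8),
    ("SWE-Lego-8B", 8, 42.2),
    ("SWE-smith", 32, 40.2),
    ("R2E-Gym", 32, 34.4),
    ("SWE-Gym", 32, 20.6),
    ("KAT-Dev-32B", 32, 62.4),
    ("SWE-Lego-32B", 32, 52.6),
    ("SWE-Mirror-32B", 32, 52.2),
    ("Llama3-SWE-RL", 70, 41.0),
    ("SWE-Fixer", 70, 32.8),
]


def summarize_by_size(points):
    """Group scores by approximate size; return {size: (count, min, max)}."""
    groups = defaultdict(list)
    for _name, size_b, score in points:
        groups[size_b].append(score)
    return {
        size: (len(scores), min(scores), max(scores))
        for size, scores in sorted(groups.items())
    }


if __name__ == "__main__":
    for size, (count, low, high) in summarize_by_size(POINTS).items():
        print(f"~{size}B: n={count}, min={low}%, max={high}%")
```

Grouping by the approximate size makes it easy to compare the score range within each size class against the figures quoted in the text.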

### Key Observations

* **Performance vs. Size Trend:** The plot shows no clear positive relationship between activated model size and benchmark performance. The two highest scores belong to Scale-SWE at ~3B and KAT-Dev-32B at ~32B, while both ~70B models score 41% or lower.

* **Significant Outlier:** **Scale-SWE** stands out. It achieves the highest score (64%) while sitting in the smallest activated-size group shown (~3B). Two other ~3B models, GLM-4.7-Flash (59.2%) and Qwen3-Coder-30A3B (52.2%), also score above 50%. This suggests exceptional efficiency or a different architectural approach.

* **Clustering at 32B:** A dense cluster of models exists around the 32B size mark, with performance varying widely from 20.6% (SWE-Gym) to 62.4% (KAT-Dev-32B).

* **Diminishing Returns at 70B:** The two models shown at 70B (Llama3-SWE-RL, SWE-Fixer) do not outperform the top models at 32B or 3B, suggesting that simply increasing size does not guarantee better performance on this specific benchmark.

* **Performance Tiers:** Models can be loosely grouped into tiers:

    * **Top Tier (>60%):** Scale-SWE, KAT-Dev-32B.

    * **High Tier (50-60%):** GLM-4.7-Flash, SWE-Lego-32B, SWE-Mirror-32B, Qwen3-Coder-30A3B.

    * **Mid Tier (40-50%):** SWE-Lego-8B, SWE-smith, Llama3-SWE-RL.

    * **Lower Tier (<40%):** R2E-Gym, SWE-Fixer, SWE-Mirror-7B, Qwen3-30A3B-Instruct, SWE-Gym.
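To check the size-versus-score trend and the tier grouping numerically, one option is to compute a Pearson correlation on log-scaled size and bucket the scores by the thresholds listed above. This is a rough sketch under stated assumptions: `POINTS`, `tier`, `group_into_tiers`, and `pearson` are hypothetical names, the sizes are approximate x-axis readings, and the handling of scores exactly at 40, 50, or 60 is a guess, since no plotted model lands on a boundary.

```python
import math

# (model label, approximate activated size in B, SWE-Bench Verified %)
POINTS = [
    ("Scale-SWE", 3, 64.0),
    ("GLM-4.7-Flash", 3, 59.2),
    ("Qwen3-Coder-30A3B", 3, 52.2),
    ("Qwen3-30A3B-Instruct", 3, 22.0),
    ("SWE-Mirror-7B", 7, 22.8),
    ("SWE-Lego-8B", 8, 42.2),
    ("SWE-smith", 32, 40.2),
    ("R2E-Gym", 32, 34.4),
    ("SWE-Gym", 32, 20.6),
    ("KAT-Dev-32B", 32, 62.4),
    ("SWE-Lego-32B", 32, 52.6),
    ("SWE-Mirror-32B", 32, 52.2),
    ("Llama3-SWE-RL", 70, 41.0),
    ("SWE-Fixer", 70, 32.8),
]


def tier(score):
    """Map a score to a tier name; boundary handling is an assumption."""
    if score > 60:
        return "Top"
    if score >= 50:
        return "High"
    if score >= 40:
        return "Mid"
    return "Lower"


def group_into_tiers(points):
    """Return {tier name: [model labels]} in the order the points are given."""
    tiers = {}
    for name, _size_b, score in points:
        tiers.setdefault(tier(score), []).append(name)
    return tiers


def pearson(xs, ys):
    """Plain Pearson correlation coefficient for two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


if __name__ == "__main__":
    for tier_name, names in group_into_tiers(POINTS).items():
        print(f"{tier_name}: {', '.join(names)}")
    log_sizes = [math.log2(size_b) for _name, size_b, _score in POINTS]
    scores = [score for _name, _size_b, score in POINTS]
    print(f"Pearson r (log2 size vs. score): {pearson(log_sizes, scores):.2f}")
```

With the values listed in this section, the coefficient comes out slightly below zero, so these 14 points do not support a positive size-performance trend. With so few points and only approximate sizes, this is a sanity check rather than a statistical result.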

### Interpretation

This chart is likely from a technical report or paper introducing the "Scale-SWE" model. Its primary message is that Scale-SWE achieves the highest score among the plotted models (64%) on SWE-Bench Verified while using a much smaller activated model size (~3B parameters) than the next-best model, KAT-Dev-32B (~32B). This challenges the assumption that larger models always perform better and points to the importance of model architecture, training data, or fine-tuning techniques (hinted at by names like "SWE-RL", "SWE-Lego", "SWE-Mirror").

The wide performance spread among models of similar size (especially at 32B) suggests that SWE-Bench Verified results depend heavily on model specialization and training methodology for software engineering tasks, not just raw scale. The plot serves as a visual argument for the efficiency and effectiveness of the Scale-SWE approach.