## Bar Chart: Model Accuracy on Generation and Multiple-choice Tasks

### Overview

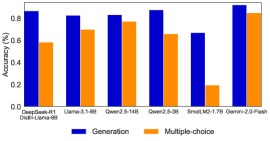

This image displays a bar chart comparing the accuracy of seven language models on two tasks: "Generation" and "Multiple-choice". Each model is represented by a pair of bars, with blue indicating "Generation" accuracy and orange indicating "Multiple-choice" accuracy. The Y-axis is titled "Accuracy (%)", but its ticks are fractions running from 0.0 to roughly 0.9 rather than percentages. The X-axis lists the models.

### Components/Axes

* **Chart Type:** Grouped Bar Chart.

* **Y-axis:**

    * **Title:** "Accuracy (%)"

    * **Scale:** Ranges from 0.0 to approximately 0.9. Major tick marks are present at 0.0, 0.2, 0.4, 0.6, and 0.8.

* **X-axis:**

    * **Labels:** The names of the language models being evaluated, listed from left to right:

        * DeepSeek-R1

        * Distil-Llama-8B

        * Llama-3.1-8B

        * Qwen2.5-14B

        * Qwen2.5-3B

        * SnoLM2-1.7B

        * Gemini-2.0-Flash

* **Legend:** Located at the bottom-center of the chart.

    * A blue square represents "Generation".

    * An orange square represents "Multiple-choice".

### Detailed Analysis

The chart presents accuracy values for each model on the two tasks. The values are estimated based on the bar heights relative to the Y-axis scale.

1. **DeepSeek-R1:**

    * **Generation (Blue):** The bar extends to approximately 0.85.

    * **Multiple-choice (Orange):** The bar extends to approximately 0.58.

    * *Trend:* Generation accuracy is notably higher than Multiple-choice accuracy.

2. **Distil-Llama-8B:**

    * **Generation (Blue):** The bar extends to approximately 0.80.

    * **Multiple-choice (Orange):** The bar extends to approximately 0.70.

    * *Trend:* Generation accuracy is higher than Multiple-choice accuracy, but the gap is smaller than for DeepSeek-R1.

3. **Llama-3.1-8B:**

    * **Generation (Blue):** The bar extends to approximately 0.83.

    * **Multiple-choice (Orange):** The bar extends to approximately 0.78.

    * *Trend:* Both accuracies are high, with a very small difference between Generation and Multiple-choice.

4. **Qwen2.5-14B:**

    * **Generation (Blue):** The bar extends to approximately 0.87.

    * **Multiple-choice (Orange):** The bar extends to approximately 0.67.

    * *Trend:* Generation accuracy is significantly higher than Multiple-choice accuracy.

5. **Qwen2.5-3B:**

    * **Generation (Blue):** The bar extends to approximately 0.68.

    * **Multiple-choice (Orange):** The bar extends to approximately 0.19.

    * *Trend:* Both accuracies are lower compared to the preceding models, with a very large disparity where Generation accuracy is much higher than Multiple-choice accuracy.

6. **SnoLM2-1.7B:**

    * **Generation (Blue):** The bar extends to approximately 0.68.

    * **Multiple-choice (Orange):** The bar extends to approximately 0.19.

    * *Trend:* The bars for SnoLM2-1.7B are visually identical in height to those of Qwen2.5-3B, showing the same low performance on Multiple-choice and moderate performance on Generation.

7. **Gemini-2.0-Flash:**

    * **Generation (Blue):** The bar extends to approximately 0.90.

    * **Multiple-choice (Orange):** The bar extends to approximately 0.85.

    * *Trend:* This model shows the highest accuracy for both tasks, with a relatively small difference between Generation and Multiple-choice.
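
The gap comparisons in the trend notes above can be checked with a few lines of arithmetic. The following is a minimal sketch in Python, assuming the approximate bar heights listed in this section. The names `ESTIMATES` and `accuracy_gaps` are illustrative and do not come from any source accompanying the chart.

```python
# Approximate bar heights read off the chart: (generation, multiple_choice).
# These are visual estimates, not reported figures.
ESTIMATES = {
    "DeepSeek-R1": (0.85, 0.58),
    "Distil-Llama-8B": (0.80, 0.70),
    "Llama-3.1-8B": (0.83, 0.78),
    "Qwen2.5-14B": (0.87, 0.67),
    "Qwen2.5-3B": (0.68, 0.19),
    "SnoLM2-1.7B": (0.68, 0.19),
    "Gemini-2.0-Flash": (0.90, 0.85),
}


def accuracy_gaps(estimates):
    """Return {model: generation - multiple_choice}, rounded to 2 decimals."""
    return {name: round(gen - mc, 2) for name, (gen, mc) in estimates.items()}


gaps = accuracy_gaps(ESTIMATES)

# Print models from the widest gap to the narrowest.
for name, gap in sorted(gaps.items(), key=lambda item: item[1], reverse=True):
    gen, mc = ESTIMATES[name]
    print(f"{name:<18} gen={gen:.2f}  mc={mc:.2f}  gap={gap:+.2f}")
```

Because the inputs are estimates, differences of a few hundredths between gaps should not be over-interpreted.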

### Key Observations

* **Overall Performance:** Gemini-2.0-Flash achieves the highest accuracy on both tasks (approximately 0.90 for Generation and 0.85 for Multiple-choice).

* **Task Disparity:** For every model shown, "Generation" accuracy (blue bars) is higher than "Multiple-choice" accuracy (orange bars).

* **Largest Disparity:** Qwen2.5-3B and SnoLM2-1.7B exhibit the largest difference between Generation and Multiple-choice accuracy (roughly 0.49), with Multiple-choice accuracy of only about 0.19.

* **Smallest Disparity (High Performers):** Llama-3.1-8B and Gemini-2.0-Flash show the smallest gaps between their Generation and Multiple-choice accuracies (about 0.05 each), indicating more balanced performance across tasks.

* **Identical Performance:** Qwen2.5-3B and SnoLM2-1.7B appear to have the same accuracy on both Generation (~0.68) and Multiple-choice (~0.19). Since these values are read off the bar heights, the match is visual rather than exact, but it is still a striking anomaly.
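
Since the values are read off the chart, "identical" is best understood as "equal within reading error". The sketch below, in Python, looks for model pairs whose two scores both fall within a tolerance. The tolerance of 0.02 and the names `ESTIMATES` and `visually_tied` are assumptions made for illustration, not properties of the chart.

```python
from itertools import combinations

# Approximate bar heights read off the chart: (generation, multiple_choice).
ESTIMATES = {
    "DeepSeek-R1": (0.85, 0.58),
    "Distil-Llama-8B": (0.80, 0.70),
    "Llama-3.1-8B": (0.83, 0.78),
    "Qwen2.5-14B": (0.87, 0.67),
    "Qwen2.5-3B": (0.68, 0.19),
    "SnoLM2-1.7B": (0.68, 0.19),
    "Gemini-2.0-Flash": (0.90, 0.85),
}


def visually_tied(estimates, tol=0.02):
    """Return model pairs whose Generation and Multiple-choice scores
    are each within `tol` of one another (tol is an assumed reading error)."""
    pairs = []
    for (a, (gen_a, mc_a)), (b, (gen_b, mc_b)) in combinations(estimates.items(), 2):
        if abs(gen_a - gen_b) <= tol and abs(mc_a - mc_b) <= tol:
            pairs.append((a, b))
    return pairs


tied = visually_tied(ESTIMATES)
print(tied)
```

With these estimates, only the Qwen2.5-3B and SnoLM2-1.7B pair matches on both tasks at once.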

### Interpretation

The bar chart effectively demonstrates the comparative performance of various language models on two distinct types of tasks. The "Generation" task likely involves producing free-form text, while "Multiple-choice" requires selecting the correct answer from a given set.

The data suggests that:

* **Gemini-2.0-Flash is a strong performer**, excelling in both generative and discriminative (multiple-choice) settings. Its high accuracy on both tasks is consistent with robust understanding and reasoning ability, although the chart alone cannot establish this.

* **Every model scores higher on the generation task** than on the multiple-choice task. This could imply that the generation tasks are inherently easier, that they are evaluated with more leniency, or that the models are better optimized for text production than for the precise factual recall or complex reasoning that multiple-choice questions may require.

* **The significant drop in Multiple-choice accuracy for Qwen2.5-3B and SnoLM2-1.7B** highlights a potential weakness in these models for tasks requiring precise answer selection or deeper comprehension. This could be due to their smaller sizes (3B and 1.7B parameters respectively, compared to 8B or 14B for the other models whose names state a size), which might limit their ability to handle the nuances of multiple-choice questions.

* **The identical performance of Qwen2.5-3B and SnoLM2-1.7B is a critical point.** This could indicate several possibilities:

    1. **Shared Architecture/Training Data:** The models might be closely related. For example, SnoLM2-1.7B could be a fine-tuned or distilled version of Qwen2.5-3B, or the two could share a common base that leads to matching performance on this specific benchmark.

    2. **Benchmark Saturation/Floor:** For these particular models and tasks, they might have hit a performance floor or ceiling that results in matching scores.

    3. **Data Presentation Anomaly:** Less likely, but it could be an error in data collection or presentation where the same data points were inadvertently used for two different model labels. Because the values here are visual estimates, confirming whether the scores truly coincide would require the underlying numbers.
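
One way to probe the floor hypothesis is to compare the low Multiple-choice estimate against random guessing. The Python sketch below assumes each question has a single correct answer among `k` equally likely options, so that guessing yields an expected accuracy of 1/k. The chart does not state the number of options, so the values of `k` tried here are hypothetical, as are the names `chance_accuracy` and `OBSERVED_MC`.

```python
def chance_accuracy(num_options):
    """Expected accuracy of uniform random guessing with one correct option."""
    if num_options < 2:
        raise ValueError("a multiple-choice item needs at least two options")
    return 1.0 / num_options


# Estimated Multiple-choice bar height for Qwen2.5-3B and SnoLM2-1.7B.
OBSERVED_MC = 0.19

# Hypothetical option counts; the chart does not say how many there are.
for k in (2, 3, 4, 5):
    baseline = chance_accuracy(k)
    relation = "below" if OBSERVED_MC < baseline else "at or above"
    print(f"{k} options: chance = {baseline:.2f}, observed {OBSERVED_MC:.2f} is {relation} chance")
```

Under these assumptions, an accuracy near 0.19 would not exceed the guessing baseline for any option count up to five, which would be compatible with a floor effect. It does not by itself explain why the Generation scores also match.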

Overall, the chart shows that Generation accuracy exceeds Multiple-choice accuracy for every model, that the gap is widest for the two smallest models, and that Gemini-2.0-Flash leads on both tasks. These patterns point to an interaction between model size and evaluation format, though the chart alone cannot separate the two.