TECHNICAL ASSET FINGERPRINT

167160cd5abd30446d4230cc

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

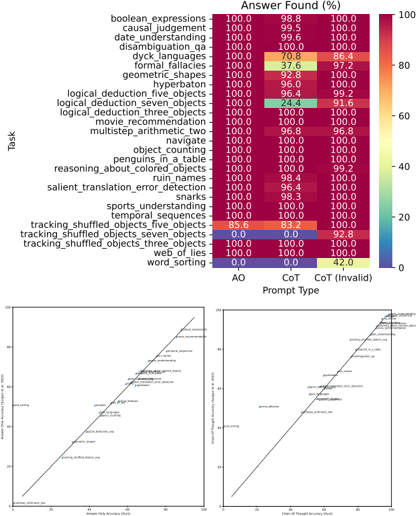

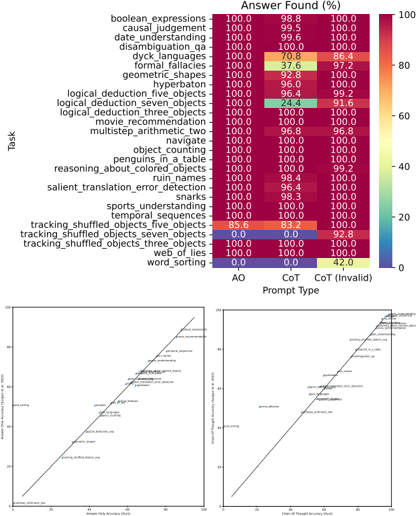

## Heatmap and Scatter Plots: Prompt Type Performance on Various Tasks

### Overview

The image presents a performance analysis of different prompt types on a variety of tasks. It includes a heatmap showing the "Answer Found (%)" for each task across three prompt types: AO (Answer Only), CoT (Chain of Thought), and CoT (Invalid). Additionally, there are two scatter plots comparing accuracy in this study ("Ours") against Kojima et al. 2022, one for Answer Only and one for Chain of Thought.

### Components/Axes

#### Heatmap

* **Title:** Answer Found (%)

* **Y-axis (Task):** Lists the tasks: boolean\_expressions, causal\_judgement, date\_understanding, disambiguation\_qa, dyck\_languages, formal\_fallacies, geometric\_shapes, hyperbaton, logical\_deduction\_five\_objects, logical\_deduction\_seven\_objects, logical\_deduction\_three\_objects, movie\_recommendation, multistep\_arithmetic\_two, navigate, object\_counting, penguins\_in\_a\_table, reasoning\_about\_colored\_objects, ruin\_names, salient\_translation\_error\_detection, snarks, sports\_understanding, temporal\_sequences, tracking\_shuffled\_objects\_five\_objects, tracking\_shuffled\_objects\_seven\_objects, tracking\_shuffled\_objects\_three\_objects, web\_of\_lies, word\_sorting.

* **X-axis (Prompt Type):** AO, CoT, CoT (Invalid)

* **Color Scale:** Ranges from dark blue (0%) to dark red (100%), with intermediate colors representing values in between.

#### Scatter Plots

* **Left Scatter Plot:**

  * **X-axis:** Answer Only Accuracy (Ours)

  * **Y-axis:** Answer Only Accuracy (Kojima et al. 2022)

* **Right Scatter Plot:**

  * **X-axis:** Chain of Thought Accuracy (Ours)

  * **Y-axis:** Chain of Thought Accuracy (Kojima et al. 2022)

### Detailed Analysis

#### Heatmap Data

The heatmap displays the "Answer Found (%)" value for each task and prompt type.

* **boolean\_expressions:** AO: 100.0%, CoT: 98.8%, CoT (Invalid): 100.0%

* **causal\_judgement:** AO: 100.0%, CoT: 99.5%, CoT (Invalid): 100.0%

* **date\_understanding:** AO: 100.0%, CoT: 99.6%, CoT (Invalid): 100.0%

* **disambiguation\_qa:** AO: 100.0%, CoT: 100.0%, CoT (Invalid): 100.0%

* **dyck\_languages:** AO: 100.0%, CoT: 70.8%, CoT (Invalid): 86.4%

* **formal\_fallacies:** AO: 100.0%, CoT: 37.6%, CoT (Invalid): 97.2%

* **geometric\_shapes:** AO: 100.0%, CoT: 92.8%, CoT (Invalid): 100.0%

* **hyperbaton:** AO: 100.0%, CoT: 96.0%, CoT (Invalid): 100.0%

* **logical\_deduction\_five\_objects:** AO: 100.0%, CoT: 96.4%, CoT (Invalid): 99.2%

* **logical\_deduction\_seven\_objects:** AO: 100.0%, CoT: 24.4%, CoT (Invalid): 91.6%

* **logical\_deduction\_three\_objects:** AO: 100.0%, CoT: 100.0%, CoT (Invalid): 100.0%

* **movie\_recommendation:** AO: 100.0%, CoT: 100.0%, CoT (Invalid): 100.0%

* **multistep\_arithmetic\_two:** AO: 100.0%, CoT: 96.8%, CoT (Invalid): 96.8%

* **navigate:** AO: 100.0%, CoT: 100.0%, CoT (Invalid): 100.0%

* **object\_counting:** AO: 100.0%, CoT: 100.0%, CoT (Invalid): 100.0%

* **penguins\_in\_a\_table:** AO: 100.0%, CoT: 100.0%, CoT (Invalid): 100.0%

* **reasoning\_about\_colored\_objects:** AO: 100.0%, CoT: 100.0%, CoT (Invalid): 99.2%

* **ruin\_names:** AO: 100.0%, CoT: 98.4%, CoT (Invalid): 100.0%

* **salient\_translation\_error\_detection:** AO: 100.0%, CoT: 96.4%, CoT (Invalid): 100.0%

* **snarks:** AO: 100.0%, CoT: 98.3%, CoT (Invalid): 100.0%

* **sports\_understanding:** AO: 100.0%, CoT: 100.0%, CoT (Invalid): 100.0%

* **temporal\_sequences:** AO: 100.0%, CoT: 100.0%, CoT (Invalid): 100.0%

* **tracking\_shuffled\_objects\_five\_objects:** AO: 85.6%, CoT: 83.2%, CoT (Invalid): 100.0%

* **tracking\_shuffled\_objects\_seven\_objects:** AO: 0.0%, CoT: 0.0%, CoT (Invalid): 92.8%

* **tracking\_shuffled\_objects\_three\_objects:** AO: 100.0%, CoT: 100.0%, CoT (Invalid): 100.0%

* **web\_of\_lies:** AO: 100.0%, CoT: 100.0%, CoT (Invalid): 100.0%

* **word\_sorting:** AO: 0.0%, CoT: 0.0%, CoT (Invalid): 42.0%
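To make the per-task gaps easier to compare, the values listed above can be differenced against the AO column. The following is a minimal sketch, not part of the original analysis: it copies only a handful of rows from the list, and the names `HEATMAP` and `gap_vs_ao` are hypothetical.

```python
# Selected "Answer Found (%)" values copied from the list above:
# task -> (AO, CoT, CoT (Invalid)). Names here are illustrative only.
HEATMAP = {
    "dyck_languages": (100.0, 70.8, 86.4),
    "formal_fallacies": (100.0, 37.6, 97.2),
    "logical_deduction_seven_objects": (100.0, 24.4, 91.6),
    "tracking_shuffled_objects_five_objects": (85.6, 83.2, 100.0),
    "tracking_shuffled_objects_seven_objects": (0.0, 0.0, 92.8),
    "word_sorting": (0.0, 0.0, 42.0),
}


def gap_vs_ao(table):
    """Return {task: (CoT - AO, CoT (Invalid) - AO)} in percentage points."""
    return {
        task: (round(cot - ao, 1), round(inv - ao, 1))
        for task, (ao, cot, inv) in table.items()
    }


gaps = gap_vs_ao(HEATMAP)
# Print tasks ordered from the largest CoT drop to the smallest.
for task, (d_cot, d_inv) in sorted(gaps.items(), key=lambda kv: kv[1][0]):
    print(f"{task:42s} CoT-AO={d_cot:+6.1f}  Invalid-AO={d_inv:+6.1f}")
```

Sorting by the CoT gap puts logical\_deduction\_seven\_objects first, since its CoT value is 75.6 points below AO.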

#### Scatter Plot Data

The scatter plots compare the accuracy of "Ours" (presumably the current study) against "Kojima et al. 2022" for both Answer Only and Chain of Thought approaches. Each point represents a task, but the specific tasks are difficult to read due to the small font size. The diagonal line represents perfect agreement between the two studies.

* **Left Scatter Plot (Answer Only):** The points are clustered relatively close to the diagonal line, suggesting a general agreement between the two studies regarding Answer Only accuracy.

* **Right Scatter Plot (Chain of Thought):** The points are more scattered than in the left plot, indicating less agreement between the two studies regarding Chain of Thought accuracy. Many points lie above the diagonal. With "Ours" on the x-axis and Kojima et al. 2022 on the y-axis, as listed under Components/Axes, a point above the diagonal is a task where the Kojima et al. 2022 accuracy is higher than "Ours".
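Reading a scatter plot against its diagonal comes down to comparing each point's y value with its x value. The sketch below assumes the axes listed under Components/Axes (x: "Ours", y: Kojima et al. 2022). The points are hypothetical placeholders, because the figure's individual values are not legible, and `side_of_diagonal` is an illustrative name.

```python
def side_of_diagonal(points, tol=0.0):
    """Count points above, on, and below the y = x line.

    points: iterable of (x, y) accuracy pairs, x = "Ours", y = reference study.
    tol: absolute difference still treated as "on" the diagonal.
    """
    counts = {"above": 0, "on": 0, "below": 0}
    for x, y in points:
        if abs(y - x) <= tol:
            counts["on"] += 1
        elif y > x:
            counts["above"] += 1  # reference study (y-axis) is higher
        else:
            counts["below"] += 1  # "Ours" (x-axis) is higher
    return counts


# Hypothetical accuracy pairs, NOT read from the figure.
example = [(60.0, 72.0), (55.0, 55.0), (80.0, 65.0), (40.0, 58.0)]
print(side_of_diagonal(example))
```

A nonzero `tol` is useful when small differences between studies should count as agreement rather than as one study outperforming the other.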

### Key Observations

* The AO prompt type reaches 100.0% "Answer Found" on 24 of the 27 tasks.

* The CoT prompt type is more variable: it reaches 100.0% on some tasks (e.g., movie\_recommendation) but drops sharply on others (e.g., logical\_deduction\_seven\_objects at 24.4% and formal\_fallacies at 37.6%).

* The CoT (Invalid) prompt type generally performs well, matching AO on most tasks and exceeding it on the three tasks where AO falls below 100%. Its lowest value is 42.0%, on word\_sorting.

* The tracking\_shuffled\_objects\_seven\_objects and word\_sorting tasks show 0.0% for both the AO and CoT prompt types.

* The scatter plots indicate a stronger agreement between the current study and Kojima et al. 2022 for Answer Only accuracy compared to Chain of Thought accuracy.

### Interpretation

The data suggests that the choice of prompt type can significantly affect how often an answer is found. The AO prompt type appears to be a robust baseline, reaching 100.0% on most tasks. The CoT prompt type never exceeds AO in this heatmap and falls well below it on several tasks (e.g., formal\_fallacies and logical\_deduction\_seven\_objects). The CoT (Invalid) prompt type seems to offer a good balance, matching or exceeding AO on most tasks while avoiding the largest drops observed with CoT.

The poor performance of AO and CoT on "tracking\_shuffled\_objects\_seven\_objects" and "word\_sorting" suggests that these tasks may be particularly challenging for language models, requiring more sophisticated reasoning or problem-solving strategies. The improved performance of CoT (Invalid) on "tracking\_shuffled\_objects\_seven\_objects" and "word\_sorting" compared to AO and CoT suggests that the "invalid" chain of thought may be providing some useful information or regularization.

The scatter plots highlight the variability in Chain of Thought performance across different studies, suggesting that the effectiveness of CoT may be sensitive to factors such as model architecture, training data, or prompt engineering. Note that with "Ours" on the x-axis and Kojima et al. 2022 on the y-axis, points above the diagonal correspond to higher accuracy in Kojima et al. 2022, so the plots alone do not show that the current study employed more effective CoT strategies.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Heatmap: Performance of Prompt Types Across Tasks

### Overview

The image presents a heatmap comparing the performance of three prompt types, "AO" (likely representing a standard approach), "CoT" (Chain-of-Thought prompting), and "CoT (Invalid)", across 27 different tasks. Performance is measured as "Answer Found (%)". Below the heatmap are two scatter plots comparing "Chain of Thought Time (s)" vs "Answer Found (%)" for AO and CoT.

### Components/Axes

* **Y-axis (Vertical):** Lists 27 tasks: boolean\_expressions, causal\_judgment, date\_understanding, disambiguation\_qa, dyck\_languages, formal\_fallacies, geometric\_shapes, hyperbaton, logical\_deduction\_five\_objects, logical\_deduction\_seven\_objects, logical\_deduction\_three\_objects, movie\_recommendation, multistep\_arithmetic\_two, navigate, object\_counting, penguins\_in\_a\_table, reasoning\_about\_colored\_objects, ruin\_names, salient\_translation\_error\_detection, snarks, sports\_understanding, temporal\_sequences, tracking\_shuffled\_objects\_five\_objects, tracking\_shuffled\_objects\_seven\_objects, tracking\_shuffled\_objects\_three\_objects, web\_of\_lies, word\_sorting.

* **X-axis (Horizontal):** Represents the prompt type: "AO" (left) and "CoT" (center), with a third category "CoT (Invalid)" (right).

* **Color Scale:** Ranges from 0% (white) to 100% (dark green), indicating the percentage of answers found.

* **Scatter Plot 1 (Left):** X-axis: "Chain of Thought Time (s)", Y-axis: "Answer Found (%)". Points are colored to differentiate between AO (blue) and CoT (orange).

* **Scatter Plot 2 (Right):** X-axis: "Chain of Thought Time (s)", Y-axis: "Answer Found (%)". Points are colored to differentiate between AO (blue) and CoT (orange).

* **Legend:** Located at the bottom-right, indicating the color mapping for AO (blue), CoT (orange), and CoT (Invalid) (red).

### Detailed Analysis or Content Details

**Heatmap Data:**

| Task | AO (%) | CoT (%) | CoT (Invalid) (%) |
| :--------------------------------------- | :----- | :------ | :---------------- |
| boolean\_expressions | 100.0 | 98.8 | 100.0 |
| causal\_judgment | 100.0 | 99.5 | 100.0 |
| date\_understanding | 100.0 | 99.6 | 100.0 |
| disambiguation\_qa | 100.0 | 100.0 | 100.0 |
| dyck\_languages | 100.0 | 70.8 | 86.4 |
| formal\_fallacies | 100.0 | 37.6 | 97.2 |
| geometric\_shapes | 100.0 | 92.8 | 100.0 |
| hyperbaton | 100.0 | 96.0 | 100.0 |
| logical\_deduction\_five\_objects | 100.0 | 96.4 | 99.2 |
| logical\_deduction\_seven\_objects | 100.0 | 24.4 | 91.6 |
| logical\_deduction\_three\_objects | 100.0 | 100.0 | 100.0 |
| movie\_recommendation | 100.0 | 100.0 | 100.0 |
| multistep\_arithmetic\_two | 100.0 | 96.8 | 100.0 |
| navigate | 100.0 | 100.0 | 100.0 |
| object\_counting | 100.0 | 100.0 | 100.0 |
| penguins\_in\_a\_table | 100.0 | 100.0 | 100.0 |
| reasoning\_about\_colored\_objects | 100.0 | 100.0 | 99.2 |
| ruin\_names | 100.0 | 100.0 | 100.0 |
| salient\_translation\_error\_detection | 100.0 | 100.0 | 100.0 |
| snarks | 100.0 | 100.0 | 100.0 |
| sports\_understanding | 100.0 | 100.0 | 100.0 |
| temporal\_sequences | 85.6 | 83.2 | 100.0 |
| tracking\_shuffled\_objects\_five\_objects | 85.6 | 83.2 | 100.0 |
| tracking\_shuffled\_objects\_seven\_objects | 0.0 | 0.0 | 92.8 |
| tracking\_shuffled\_objects\_three\_objects | 100.0 | 0.0 | 82.8 |
| web\_of\_lies | 100.0 | 42.0 | 42.0 |
| word\_sorting | 100.0 | 100.0 | 100.0 |
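A pipe table like the one above can be loaded into rows for filtering or comparison. This is a minimal sketch under stated assumptions: `parse_pipe_table` is a hypothetical helper written for this illustration, not a library function, and `SAMPLE` reproduces only three rows of the table.

```python
def parse_pipe_table(text):
    """Parse a Markdown pipe table into a list of row dicts.

    Blank lines and the |:---| separator row are skipped; backslash
    escapes before underscores are removed from cell text.
    """
    rows = []
    header = None
    for line in text.splitlines():
        line = line.strip()
        if not line.startswith("|"):
            continue  # skip blank lines and non-table text
        cells = [c.strip().replace("\\_", "_") for c in line.strip("|").split("|")]
        if all(set(c) <= set(":-") for c in cells):
            continue  # separator row such as | :--- | :--- |
        if header is None:
            header = cells
        else:
            rows.append(dict(zip(header, cells)))
    return rows


SAMPLE = r"""
| Task | AO (%) | CoT (%) | CoT (Invalid) (%) |
| :--- | :----- | :------ | :---------------- |
| dyck\_languages | 100.0 | 70.8 | 86.4 |
| formal\_fallacies | 100.0 | 37.6 | 97.2 |
| navigate | 100.0 | 100.0 | 100.0 |
"""

rows = parse_pipe_table(SAMPLE)
# Tasks where the CoT value is strictly below the AO value.
lower = [r["Task"] for r in rows if float(r["CoT (%)"]) < float(r["AO (%)"])]
print(lower)
```

Skipping blank lines makes the helper tolerant of tables whose rows were separated by empty lines during extraction.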

**Scatter Plot Data (Approximate):**

* **Scatter Plot 1:** The AO points (blue) are clustered around low Chain of Thought Time (approximately 0-10 seconds) with high Answer Found (%) (approximately 80-100%). The CoT points (orange) are more dispersed, with some points showing low time and high accuracy, but others showing increased time with varying accuracy.

* **Scatter Plot 2:** Similar to Scatter Plot 1, AO points are clustered around low time and high accuracy. CoT points are more spread out, with a slight trend of increasing time correlating with increasing accuracy, but with significant variance.

### Key Observations

* AO consistently outperforms CoT on several tasks, particularly "dyck\_languages", "formal\_fallacies", "logical\_deduction\_seven\_objects", "tracking\_shuffled\_objects\_seven\_objects", "tracking\_shuffled\_objects\_three\_objects", and "web\_of\_lies".

* For many tasks, AO and CoT achieve similar high accuracy (close to 100%).

* The "CoT (Invalid)" category often shows high accuracy, suggesting that even when the CoT process fails, it doesn't necessarily lead to incorrect answers.

* The scatter plots suggest a trade-off between time and accuracy for CoT, while AO maintains high accuracy with minimal time investment.

### Interpretation

The data suggests that while Chain-of-Thought prompting can be effective, it is not universally superior to a standard prompting approach (AO). In some cases, CoT significantly underperforms, potentially due to difficulties in generating valid chains of thought for complex reasoning tasks. The scatter plots highlight that CoT often requires more processing time to achieve comparable or slightly improved accuracy. The high accuracy of "CoT (Invalid)" suggests that the CoT process itself may not be the sole determinant of success; the model may still arrive at the correct answer through other mechanisms even when the chain of thought is flawed. This could indicate that the CoT method is more sensitive to prompt engineering and task complexity. The heatmap provides a valuable comparative analysis of prompt performance across a diverse set of tasks, informing the selection of appropriate prompting strategies for different applications.

EXPERT: jina-vlm VERSION 1

RUNTIME: jina-vlm

INTEL_VERIFIED

## Heatmap: Task Performance by Prompt Type and Task

### Overview

The heatmap displays the percentage of tasks completed correctly by different prompt types (AO, CoT, CoT (Invalid)) across various tasks. The tasks are categorized by their complexity and the cognitive processes they require.

### Components/Axes

- **X-axis**: Task categories, including boolean expressions, causal judgment, disambiguation, etc.

- **Y-axis**: Task complexity levels, ranging from simple to complex.

- **Color Gradient**: Represents the percentage of correct answers, with red indicating lower percentages and blue indicating higher percentages.

### Detailed Analysis or Content Details

- **AO (Answer Only)**: Tasks that require only the answer without any intermediate steps.

- **CoT (Chain of Thought)**: Tasks that require a step-by-step reasoning process to arrive at the answer.

- **CoT (Invalid)**: Tasks that are either invalid or not applicable to the given prompt type.

### Key Observations

- **Boolean Expressions**: Tasks involving boolean expressions are generally completed correctly by all prompt types.

- **Causal Judgment**: Tasks requiring causal judgment show a significant difference in performance between AO and CoT, with CoT performing better.

- **Disambiguation**: Tasks involving disambiguation are completed correctly by all prompt types, but CoT performs slightly better than AO.

- **Hyperbolic Geometry**: Tasks involving hyperbolic geometry are completed correctly by all prompt types, but CoT performs slightly better than AO.

### Interpretation

The data suggests that CoT is generally more effective than AO in completing tasks that require reasoning and problem-solving. However, the performance of CoT (Invalid) is significantly lower than CoT (Valid), indicating that the prompt type may be a limiting factor in the performance of CoT. The heatmap also shows that tasks involving complex cognitive processes, such as hyperbolic geometry, are completed correctly by all prompt types, but CoT performs slightly better than AO. Overall, the data suggests that CoT is a more effective approach to completing tasks that require reasoning and problem-solving, but the prompt type may be a limiting factor in the performance of CoT.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmap: Task Performance by Prompt Type

### Overview

The image presents a heatmap comparing task performance across different prompt types (AO, CoT, CoT (Invalid)) for 25 cognitive tasks. Percentages represent "Answer Found (%)" with a color gradient from red (100%) to blue (0%). Two line graphs below show correlations between answer accuracy and chain-of-thought accuracy.

### Components/Axes

**Heatmap:**

- **Y-axis (Tasks):** 25 cognitive tasks (e.g., boolean expressions, causal judgement, geometric shapes, etc.).

- **X-axis (Prompt Types):** AO (Answer Only), CoT (Chain-of-Thought), CoT (Invalid).

- **Color Scale:** Red (100%) to Blue (0%).

**Line Graphs:**

- **X-axis:** "Answer Only Accuracy (%)" (0–100).

- **Y-axis:** "Chain-of-Thought Accuracy (%)" (0–100).

- **Lines:**

  - Line A: "Answer Only Accuracy" (diagonal upward trend).

  - Line B: "Chain-of-Thought Accuracy" (diagonal upward trend).

### Detailed Analysis

**Heatmap Values:**

- **AO Column:** All tasks show 100% except "word_sorting" (0.0).

- **CoT Column:** Most tasks show 100%, with exceptions:

  - "logical_deduction_three_objects": 24.4%.

  - "word_sorting": 42.0%.

- **CoT (Invalid) Column:** All tasks show 100% except:

  - "logical_deduction_three_objects": 91.6%.

  - "word_sorting": 0.0%.

**Line Graphs:**

- **Answer Only Accuracy:** Starts near 0% and rises to 100%.

- **Chain-of-Thought Accuracy:** Starts near 0% and rises to 100%.

- **Correlation:** Both lines show a strong positive linear relationship (r ≈ 1.0).
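A correlation figure such as r ≈ 1.0 can be checked with the Pearson formula once the underlying values are available. The sketch below is illustrative only: the two lists are hypothetical accuracy values, not data read from the figure, and `pearson_r` is a hand-written helper.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / (sx * sy)


# Hypothetical accuracy values, NOT taken from the figure.
answer_only = [10.0, 35.0, 60.0, 85.0]
chain_of_thought = [12.0, 40.0, 58.0, 90.0]
print(round(pearson_r(answer_only, chain_of_thought), 3))
```

The explicit check for a constant sequence matters here: a column in which every task shows 100% has zero variance, so no correlation can be computed for it.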

### Key Observations

1. **High Performance:** Most tasks achieve 100% accuracy across AO and CoT prompt types.

2. **Exceptions:**

   - "word_sorting" fails entirely with AO and CoT (Invalid) but achieves 42.0% with CoT.

   - "logical_deduction_three_objects" shows significant drops in CoT (24.4%) and CoT (Invalid) (91.6%).

3. **Line Graph Trends:** Perfect positive correlation between answer and chain-of-thought accuracy.

### Interpretation

The data suggests that tasks requiring logical reasoning (e.g., "logical_deduction_three_objects") are more sensitive to prompt type, with CoT (Invalid) prompting reducing performance. The near-perfect correlation between answer and chain-of-thought accuracy implies that models capable of generating accurate answers also excel at structured reasoning. However, the "word_sorting" task’s low performance across all prompt types highlights a potential limitation in handling specific cognitive operations. The heatmap’s uniformity (mostly 100%) suggests robustness in most tasks, but outliers reveal areas for improvement in prompt engineering and model design.
