## Process Diagram: Autonomous Vehicle Operation Stages

### Overview

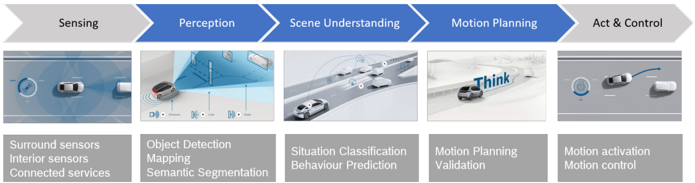

The diagram illustrates a five-stage workflow for autonomous vehicle operation, progressing from data acquisition to physical control. Each stage is drawn as a blue arrow paired with an image and a short text description. Together, the stages show how sensors, perception algorithms, and control systems are integrated.

### Components

1. **Stages (Left to Right)**:

- **Sensing** → **Perception** → **Scene Understanding** → **Motion Planning** → **Act & Control**

2. **Visual Elements**:

- Icons/images depicting cars with sensor arrays, 3D maps, and motion trajectories.

3. **Textual Descriptions**:

- Bullet points under each stage detail subsystems/components.
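The left-to-right ordering of the stages can be captured as a small data structure. The following is a minimal sketch, not part of the diagram: the names `Stage`, `PIPELINE_ORDER`, and `next_stage` are hypothetical and chosen only for illustration.

```python
from enum import Enum
from typing import Optional


class Stage(Enum):
    """The five stages, declared in the left-to-right order of the diagram."""
    SENSING = "Sensing"
    PERCEPTION = "Perception"
    SCENE_UNDERSTANDING = "Scene Understanding"
    MOTION_PLANNING = "Motion Planning"
    ACT_AND_CONTROL = "Act & Control"


# Enum members iterate in definition order, so this list mirrors the diagram.
PIPELINE_ORDER = list(Stage)


def next_stage(stage: Stage) -> Optional[Stage]:
    """Return the stage that follows `stage`, or None for the final stage."""
    idx = PIPELINE_ORDER.index(stage)
    return PIPELINE_ORDER[idx + 1] if idx + 1 < len(PIPELINE_ORDER) else None
```

For example, `next_stage(Stage.SENSING)` evaluates to `Stage.PERCEPTION`, and `next_stage(Stage.ACT_AND_CONTROL)` evaluates to `None`.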

### Detailed Analysis

#### Sensing

- **Components**: Surround sensors, Interior sensors, Connected services

- **Visual**: Car with radar/ultrasonic sensors emitting waves; road with lane markings.

#### Perception

- **Components**: Object Detection, Mapping, Semantic Segmentation

- **Visual**: Car emitting 3D point cloud data; labeled objects (e.g., pedestrians, vehicles).

#### Scene Understanding

- **Components**: Situation Classification, Behaviour Prediction

- **Visual**: Traffic scenario with multiple vehicles; predictive trajectories.

#### Motion Planning

- **Components**: Motion Planning Validation

- **Visual**: Car navigating a curved road with "Think" annotation; decision-making logic.

#### Act & Control

- **Components**: Motion activation, Motion control

- **Visual**: Car executing a lane change with dynamic path planning.

### Key Observations

- **Sequential Flow**: Each stage depends on the output of the prior stage (e.g., perception data feeds into scene understanding).

- **Sensor Integration**: The Sensing stage combines external data (surround sensors) with internal data (interior sensors).

- **Validation Step**: Motion Planning lists validation as a component, which suggests that planned trajectories are checked before they are executed.
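One way to read the validation step is as a gate between planning and actuation: a candidate plan is passed on only if it satisfies some check. The sketch below is an assumption made for illustration. The diagram does not say what is validated or how, so the waypoint representation, the maximum-step criterion, and the names `is_valid` and `select_plan` are all hypothetical.

```python
import math
from typing import List, Optional, Tuple

# Hypothetical plan representation: a list of (x, y) waypoints in metres.
Waypoint = Tuple[float, float]


def is_valid(plan: List[Waypoint], max_step_m: float) -> bool:
    """Hypothetical check: consecutive waypoints are at most max_step_m apart."""
    return all(math.dist(a, b) <= max_step_m for a, b in zip(plan, plan[1:]))


def select_plan(
    candidates: List[List[Waypoint]], max_step_m: float
) -> Optional[List[Waypoint]]:
    """Return the first candidate that passes validation, or None if all fail."""
    for plan in candidates:
        if is_valid(plan, max_step_m):
            return plan
    return None
```

Returning `None` when every candidate fails leaves the fallback behaviour to the caller, since the diagram does not specify one.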

### Interpretation

This diagram presents autonomous driving as a sequential decision-making pipeline. The **Sensing** stage captures environmental data, which **Perception** processes to detect objects and map the surroundings. **Scene Understanding** puts that data in context (e.g., predicting pedestrian behavior), and **Motion Planning** generates trajectories and validates them. Finally, **Act & Control** executes the planned motion through the motion control systems. The inclusion of "Connected services" in Sensing implies that the vehicle also draws on external data (e.g., traffic updates), and the "Think" annotation marks Motion Planning as the decision-making stage. Taken together, the multiple sensor sources and the explicit validation component suggest an emphasis on safety.
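The data flow described above, where each stage consumes the output of the previous one, can be sketched as a chain of functions. This is only an illustration under stated assumptions: the stage bodies are placeholders, and `make_stage`, `run_pipeline`, and the output key names are hypothetical, not taken from the diagram.

```python
from functools import reduce
from typing import Any, Callable, Dict, List

Data = Dict[str, Any]
StageFn = Callable[[Data], Data]


def make_stage(name: str, output_key: str) -> StageFn:
    """Build a placeholder stage that adds one output key and logs its name."""
    def stage(data: Data) -> Data:
        return {
            **data,
            output_key: f"<{name} output>",          # stand-in for real results
            "trace": data.get("trace", []) + [name],  # order in which stages ran
        }
    return stage


# Stage names follow the diagram; the output keys are illustrative only.
STAGES: List[StageFn] = [
    make_stage("Sensing", "measurements"),
    make_stage("Perception", "objects"),
    make_stage("Scene Understanding", "predictions"),
    make_stage("Motion Planning", "trajectory"),
    make_stage("Act & Control", "commands"),
]


def run_pipeline(stages: List[StageFn], initial: Data) -> Data:
    """Feed each stage the accumulated output of all earlier stages."""
    return reduce(lambda data, stage: stage(data), stages, initial)
```

Calling `run_pipeline(STAGES, {})` returns a dictionary whose `"trace"` entry lists the five stage names in diagram order, which makes the sequential dependency explicit.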