## Network Diagram: Multi-Layered Transformation Processes

### Overview

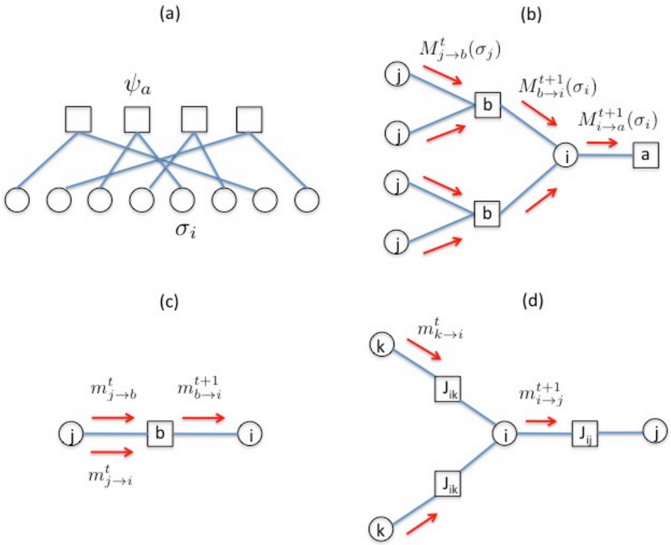

The image contains four diagrams, (a)-(d), showing network structures built from nodes, edges, and labeled mathematical transformations. Each panel appears to depict a distinct layer or process within a larger system, likely related to signal processing, neural networks, or dynamical systems.

---

### Components/Axes

#### Diagram (a)

- **Nodes**:

- Central node labeled **ψₐ** (likely a processing unit or activation function).

- Four square nodes (intermediate layers).

- Six circular nodes labeled **σᵢ** (input/output units).

- **Edges**:

- Blue lines connecting ψₐ to squares, squares to σᵢ.

- No explicit labels on edges.

#### Diagram (b)

- **Nodes**:

- Nodes **i**, **j**, **b**, **a**.

- Squares labeled **b** and **a** (processing units).

- **Edges**:

- Blue lines with red arrows indicating transformations:

- **Mᵗⱼ→ᵇ(σⱼ)**: Transformation from **j** to **b** at time **t**.

- **Mᵗ⁺¹ᵇ→ᵢ(σᵢ)**: Transformation from **b** to **i** at time **t+1**.

- **Mᵗ⁺¹ᵢ→ᵃ(σᵢ)**: Transformation from **i** to **a** at time **t+1**.

#### Diagram (c)

- **Nodes**:

- Nodes **i** and **b**. The message labels below also reference a node **j**.

- **Edges**:

- Bidirectional red arrows with labels:

- **mᵗⱼ→ᵇ**: Message from **j** to **b** at time **t**.

- **mᵗ⁺¹ᵇ→ᵢ**: Message from **b** to **i** at time **t+1**.

- **mᵗⱼ→ᵢ**: Message from **j** to **i** at time **t**.

#### Diagram (d)

- **Nodes**:

- Nodes **k**, **i**, **j**.

- Squares labeled **Jᵢₖ** and **Jᵢⱼ** (interaction matrices).

- **Edges**:

- Red arrows with labels:

- **mᵗₖ→ᵢ**: Message from **k** to **i** at time **t**.

- **mᵗ⁺¹ᵢ→ⱼ**: Message from **i** to **j** at time **t+1**.

- **Jᵢₖ** and **Jᵢⱼ**: Interaction terms between nodes.

---

### Detailed Analysis

#### Diagram (a)

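- Suggests a layered, **feedforward-style structure** centered on ψₐ: the six σᵢ nodes (likely inputs) connect to ψₐ only through the four intermediate square nodes. The edges are unlabeled, so the direction of flow is an inference rather than something the diagram states.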
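The layout described for diagram (a) can be written down as a small adjacency map. This is only an illustrative sketch: the node names (`psi_a`, `sq1`, `sigma1`, and so on) are hypothetical, and the choice of which square touches which σ node is an assumption, since the description gives only the node counts.

```python
# Hypothetical wiring for diagram (a). The description states only that there
# is one central node, four squares, and six sigma nodes; the specific
# square-to-sigma assignment below is assumed for illustration.
edges = {
    "psi_a": ["sq1", "sq2", "sq3", "sq4"],
    "sq1": ["sigma1", "sigma2"],
    "sq2": ["sigma2", "sigma3"],
    "sq3": ["sigma4", "sigma5"],
    "sq4": ["sigma5", "sigma6"],
}


def reachable(start, edges):
    """Return every node reachable from `start` by following the edge map."""
    seen, stack = set(), [start]
    while stack:
        node = stack.pop()
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


# Collect the sigma nodes that psi_a reaches through the square nodes.
leaves = sorted(n for n in reachable("psi_a", edges) if n.startswith("sigma"))
print(leaves)
```

Under this assumed wiring, every σ node is reached from ψₐ only by passing through a square node, which is the two-level structure the description reports.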

#### Diagram (b)

- Illustrates **temporal transformations** between nodes. The M functions (e.g., **Mᵗⱼ→ᵇ**) suggest time-dependent operations, possibly matrix multiplications or activation functions. The flow progresses from **j** → **b** → **i** → **a**, with time steps **t** and **t+1**.
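One possible reading of the **j** → **b** → **i** → **a** chain is a sum-product style update, in which a square node combines an incoming message with a local table and a circular node multiplies the messages it receives. The source does not confirm this rule. The table `psi_b`, the two-state alphabet `(-1, +1)`, and all numeric values below are assumptions chosen only to make the sketch runnable.

```python
STATES = (-1, +1)  # assumed two-state variables; not stated in the source


def normalize(msg):
    """Rescale a message so its entries sum to one."""
    total = sum(msg.values())
    return {s: v / total for s, v in msg.items()}


def square_to_node(psi_b, incoming):
    """M_{b->i}(s_i) = sum over s_j of psi_b(s_i, s_j) * M_{j->b}(s_j)."""
    return normalize({
        s_i: sum(psi_b[(s_i, s_j)] * incoming[s_j] for s_j in STATES)
        for s_i in STATES
    })


def node_to_square(incoming_msgs):
    """M_{i->a}(s_i) = product of the messages i received from other squares."""
    out = {s: 1.0 for s in STATES}
    for msg in incoming_msgs:
        for s in STATES:
            out[s] *= msg[s]
    return normalize(out)


# Hypothetical local table for square b, favoring equal states.
psi_b = {(+1, +1): 2.0, (-1, -1): 2.0, (+1, -1): 1.0, (-1, +1): 1.0}

m_j_to_b_t = {-1: 0.2, +1: 0.8}                   # message at time t
m_b_to_i_t1 = square_to_node(psi_b, m_j_to_b_t)   # message at time t+1
m_i_to_a_t1 = node_to_square([m_b_to_i_t1])       # message at time t+1

print(m_b_to_i_t1, m_i_to_a_t1)
```

Because node **i** has only one other square (**b**) in this chain, the message it forwards to **a** equals the normalized message it received from **b**.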

#### Diagram (c)

- Depicts **bidirectional message passing** between **i** and **b**. The labels **mᵗ** and **mᵗ⁺¹** indicate sequential updates, possibly in a recurrent or iterative system.
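If the two directions in (c) are updated repeatedly, a natural way to run them is to iterate until the messages stop changing. The loop below is a minimal sketch of that idea. The function `toy_update` is a made-up linear rule with no connection to the figure, included only so the loop has something to converge on.

```python
def iterate_messages(update, messages, tol=1e-9, max_iters=1000):
    """Apply `update` synchronously until the largest change falls below tol.

    Returns the final messages and the number of steps taken.
    """
    for step in range(1, max_iters + 1):
        new = update(messages)
        delta = max(abs(new[edge] - messages[edge]) for edge in messages)
        messages = new
        if delta < tol:
            return messages, step
    return messages, max_iters


def toy_update(m):
    """Hypothetical update: each direction reads the opposite one from time t."""
    return {
        ("b", "i"): 0.5 * m[("i", "b")] + 0.2,
        ("i", "b"): 0.5 * m[("b", "i")],
    }


start = {("b", "i"): 0.0, ("i", "b"): 0.0}
fixed, steps = iterate_messages(toy_update, start)
print(fixed, steps)
```

Each new message is computed only from the previous step's values, which matches the **t** and **t+1** labeling on the arrows.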

#### Diagram (d)

- Shows a **multi-node interaction** with **Jᵢₖ** and **Jᵢⱼ** as coupling matrices. The flow from **k** → **i** → **j** involves time-dependent messages, suggesting a dynamic system with layered interactions.
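To make the **k** → **i** → **j** flow concrete, the sketch below assumes the nodes are ±1 spins coupled pairwise and uses the standard belief-propagation update for such models, in which a message from **i** to **j** collects the couplings from all neighbors of **i** except **j**. Nothing in the description confirms that the figure uses this rule. The local fields `h`, the inverse temperature `beta`, and the coupling values are all hypothetical.

```python
import math


def bp_step(messages, J, h, beta=1.0):
    """One synchronous update of every directed message m[(src, dst)].

    Assumed rule (Ising-type spins):
    m_{i->j} = tanh(beta*h_i + sum_{k != j} atanh(tanh(beta*J_ik) * m_{k->i}))
    """
    new = {}
    for (src, dst) in messages:
        field = beta * h[src]
        for (nbr, target) in messages:
            # Use messages arriving at src from every neighbor except dst.
            if target == src and nbr != dst:
                coupling = J[frozenset((src, nbr))]
                field += math.atanh(
                    math.tanh(beta * coupling) * messages[(nbr, src)]
                )
        new[(src, dst)] = math.tanh(field)
    return new


# Hypothetical chain k - i - j with couplings J_ik and J_ij.
J = {frozenset(("i", "k")): 1.0, frozenset(("i", "j")): 0.5}
h = {"k": 0.3, "i": 0.0, "j": 0.0}  # only k has a local field (assumed)
messages = {("k", "i"): 0.0, ("i", "k"): 0.0, ("i", "j"): 0.0, ("j", "i"): 0.0}

history = [messages]
for t in range(2):
    history.append(bp_step(history[-1], J, h))

print(history[1][("i", "j")], history[2][("i", "j")])
```

In this run the field on **k** shows up in the **k** → **i** message after one step but reaches the **i** → **j** message only after the second, which is the one-step delay the **t** and **t+1** labels suggest.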

---

### Key Observations

1. **Temporal Dynamics**: Diagrams (b)-(d) use time indices (**t**, **t+1**), implying sequential processing or iterative updates. Diagram (a) carries no time labels.

2. **Hierarchical Structure**: Diagrams (a) and (b) show layered architectures, while (c) and (d) focus on localized interactions.

3. **Bidirectional Flow**: Diagram (c) explicitly models two-way communication, unlike the one-way chain in (b). The edges in (a) are unlabeled, so no direction can be read from them.

4. **Mathematical Notation**: The use of **M** (transformations) and **J** (interaction matrices) suggests linear algebra or tensor operations.

---

### Interpretation

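These diagrams likely model a **neural network architecture** or **graph neural network (GNN)** with temporal and spatial dependencies. The circle-and-square layout and the directed, time-indexed messages would also be consistent with a factor graph on which an iterative message-passing scheme runs, though the description alone does not settle this. Key insights: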

- **ψₐ** in (a) could represent an output layer aggregating inputs from σᵢ.

- The **M** and **J** functions in (b)-(d) may encode attention mechanisms, message passing, or weight updates.

- The **σᵢ** nodes in (a) and the **mᵗ** messages in (c) and (d) suggest input-driven updates, possibly in a reinforcement learning or dynamical systems context.

- The bidirectional arrows in (c) hint at **recurrent connections**, enabling feedback loops for memory or context retention.

The diagrams collectively emphasize **hierarchical processing**, **temporal evolution**, and **node interactions**, critical for tasks like sequence modeling, graph-based learning, or multi-agent systems.