## Diagram: Speculative Decoding Process Flow for Neural Machine Translation

### Overview

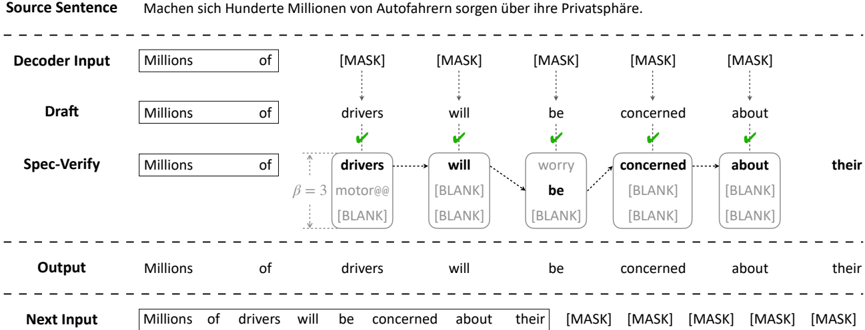

This diagram illustrates a **speculative decoding** process for what appears to be neural machine translation: the source sentence is German and the generated tokens are English. It traces one decoding cycle from the source sentence to the verified output, with separate draft-generation and verification stages. Each horizontal row represents one stage, and arrows between token boxes show progression and relationships.

### Components

The diagram is organized into six distinct horizontal rows, labeled on the left side. From top to bottom:

1. **Source Sentence**: The input text.

2. **Decoder Input**: The initial input to the decoder model.

3. **Draft**: A preliminary generated sequence.

4. **Spec-Verify**: The speculative verification stage, which is the most complex component.

5. **Output**: The final verified sequence.

6. **Next Input**: The sequence used as input for the next decoding step.

**Key Visual Elements:**

* **Boxes**: Contain text tokens (words or sub-words).

* **Dashed Arrows**: Indicate the flow of information or sequence progression.

* **Solid Arrows with Green Checkmarks**: Indicate successful verification or acceptance of a token.

* **Dotted Lines**: Show alignment or correspondence between tokens in different rows.

* **`[MASK]` and `[BLANK]`**: Special tokens indicating positions to be filled or verified.

* **Parameter Notation**: `β = 3` is noted in the Spec-Verify row.

### Detailed Analysis

**1. Source Sentence (Top Row):**

* **Text (German):** `Machen sich Hunderte Millionen von Autofahrern sorgen über ihre Privatsphäre.`

* **English Translation:** "Are hundreds of millions of car drivers worried about their privacy?"

**2. Decoder Input (Second Row):**

* Contains two boxes: `Millions` and `of`.

* Followed by five boxes, each containing the token `[MASK]`.
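The layout of this row can be sketched in a few lines of Python. This is only an illustration of the row as drawn: the function name `build_decoder_input` and its parameters are hypothetical, not taken from the diagram.

```python
MASK = "[MASK]"

def build_decoder_input(prefix, num_masks):
    """Return the already-accepted tokens followed by num_masks placeholders.

    Hypothetical helper: the diagram only shows the resulting row,
    not how it is constructed.
    """
    return list(prefix) + [MASK] * num_masks

# The Decoder Input row: two known tokens, then five slots to be drafted.
decoder_input = build_decoder_input(["Millions", "of"], 5)
print(decoder_input)
```

The five placeholders correspond to the five tokens that the Draft row adds after `Millions of`.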

**3. Draft (Third Row):**

* Contains a sequence of seven boxes: `Millions`, `of`, `drivers`, `will`, `be`, `concerned`, `about`.

* This represents a draft translation hypothesis generated by the model.

**4. Spec-Verify (Fourth Row - Central Component):**

* This row shows the verification process for the draft tokens.

* It starts with `Millions` and `of`, connected by a dashed arrow to the next box.

* The verification for the token `drivers` is shown in detail:

    * A box contains `drivers` at the top.

    * Below it, two alternative tokens are listed: `motorists` and `[BLANK]`.

    * A vertical dotted line connects `drivers` to these alternatives.

    * The parameter `β = 3` is noted to the left, likely indicating the number of candidate tokens considered (`drivers`, `motorists`, `[BLANK]`).

    * A green checkmark and a solid arrow point from this box to the next, indicating `drivers` was verified and accepted.

* The token `will` is in its own box with a green checkmark.

* The token `be` is shown with a correction: the word `worry` is written above it and struck through, with `be` below it. This suggests `be` was selected over `worry`.

* The tokens `concerned` and `about` are each in their own boxes with green checkmarks.

* The final box in this row contains `their`, which is connected via a dashed arrow from the `about` box.
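One plausible reading of this row is a top-β acceptance rule: a drafted token passes if it is among the verifier's β best candidates for that position. The sketch below assumes that reading. The function `spec_verify`, the per-position candidate lists, and the rule of stopping at the first rejection are all assumptions for illustration; only the `drivers` candidate list comes from the diagram, and the other positions are given a single candidate so that no rankings are invented.

```python
def spec_verify(draft, ranked_candidates, next_token, beta=3):
    """Accept drafted tokens left to right while each is in the verifier's top-beta list.

    draft: tokens proposed for the masked positions.
    ranked_candidates: for each position, verifier candidates ordered best-first
        (hypothetical input; a real verifier would compute these in one pass).
    next_token: the verifier's best token for the position after the draft
        (hypothetical input).
    """
    accepted = []
    for token, ranked in zip(draft, ranked_candidates):
        if token in ranked[:beta]:
            accepted.append(token)
        else:
            # Assumed behavior: replace the first rejected token with the
            # verifier's best candidate and discard the rest of the draft.
            accepted.append(ranked[0])
            return accepted
    # Every drafted token passed, so the verifier contributes one extra token.
    accepted.append(next_token)
    return accepted

prefix = ["Millions", "of"]
draft = ["drivers", "will", "be", "concerned", "about"]
ranked = [
    ["drivers", "motorists", "[BLANK]"],  # candidates shown in the diagram
    ["will"], ["be"], ["concerned"], ["about"],  # placeholders, not from the diagram
]
output = prefix + spec_verify(draft, ranked, next_token="their", beta=3)
print(" ".join(output))
```

Under these assumptions the result matches the Output row, including the extra token `their` that follows the last accepted draft token.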

**5. Output (Fifth Row):**

* The final verified sequence: `Millions`, `of`, `drivers`, `will`, `be`, `concerned`, `about`, `their`.

* This matches the accepted tokens from the Spec-Verify stage.

**6. Next Input (Bottom Row):**

* Shows how the output feeds into the next iteration.

* The first eight boxes contain the full output sequence: `Millions of drivers will be concerned about their`.

* This is followed by four boxes containing `[MASK]`, ready for the next decoding step.

### Key Observations

1. **Iterative Process:** The diagram clearly shows an iterative loop where the `Output` becomes the base for the `Next Input`, which will generate a new draft and verification cycle.

2. **Candidate Selection:** The Spec-Verify stage for `drivers` explicitly shows multiple candidates (`drivers`, `motorists`, `[BLANK]`), with `β=3` quantifying this beam or candidate width.

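3. **Rejected Candidate:** The struck-through `worry` next to `be` shows verification choosing between competing tokens at that position. Since the `Draft` row already contains `be`, the diagram as described does not make clear whether `worry` was a drafted token that got corrected or an alternative candidate that was discarded.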

4. **Mask Filling:** The use of `[MASK]` tokens in the Decoder Input and Next Input rows indicates a fill-in-the-blank approach to generation.

5. **Spatial Flow:** The primary flow is left-to-right within each row. Vertical and dotted lines are used to show relationships and verification between rows, particularly between Draft and Spec-Verify.
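The iterative loop in the first observation can be sketched as follows. Everything here is a toy under stated assumptions: `decode`, `toy_draft`, and `toy_verify` are hypothetical names, the `TARGET` sentence (including `privacy` and the `</s>` end marker) is made up for the demonstration, and the number of masks is held constant even though the diagram shows five masks in the first cycle and four in the next.

```python
MASK = "[MASK]"
EOS = "</s>"

# Hypothetical reference output, used only to drive the toy drafter and verifier.
TARGET = "Millions of drivers will be concerned about their privacy </s>".split()

def toy_draft(decoder_input):
    """Fill the masked slots from TARGET (stands in for a real draft model)."""
    start = decoder_input.index(MASK)
    num_masks = len(decoder_input) - start
    return TARGET[start:start + num_masks]

def toy_verify(prefix, draft):
    """Accept matching tokens, fix the first mismatch, else add one extra token."""
    start = len(prefix)
    accepted = []
    for i, token in enumerate(draft):
        if token != TARGET[start + i]:
            accepted.append(TARGET[start + i])
            return accepted
        accepted.append(token)
    nxt = start + len(draft)
    if nxt < len(TARGET):
        accepted.append(TARGET[nxt])
    return accepted

def decode(prefix, draft_fn, verify_fn, num_masks, max_steps=50):
    """Repeat draft-then-verify cycles until the end marker is produced."""
    output = list(prefix)
    steps = 0
    while (not output or output[-1] != EOS) and steps < max_steps:
        decoder_input = output + [MASK] * num_masks  # the "Next Input" row
        draft = draft_fn(decoder_input)              # the "Draft" row
        output = output + verify_fn(output, draft)   # the "Output" row
        steps += 1
    return output, steps

output, steps = decode(["Millions", "of"], toy_draft, toy_verify, num_masks=5)
print(steps, " ".join(output))
```

In this toy run the first cycle reproduces the diagram's Output row, and a second cycle finishes the sentence, so eight new tokens are produced in two cycles.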

### Interpretation

This diagram visually explains the **speculative decoding** technique used to accelerate inference in large language models, particularly for sequence-to-sequence tasks like translation.

* **What it demonstrates:** A fast drafter fills the `[MASK]` positions to produce a candidate sequence (the `Draft` row). This is commonly a smaller model, although the diagram does not say so. A more accurate "verifier" model then checks all drafted tokens in parallel (the `Spec-Verify` row). Tokens that pass verification (green checkmarks) are accepted, while rejected ones are corrected or replaced (the struck-through `worry` next to `be` appears to mark such a decision). This is more efficient than generating every token one at a time with the large model.

* **Relationships:** The `Source Sentence` is the input to be translated, not the target. The `Decoder Input` holds the tokens accepted so far plus `[MASK]` placeholders, and the `Draft` fills those placeholders with a candidate continuation. `Spec-Verify` is the quality-control step. The `Output` is the result of this cycle and seeds the next one (`Next Input`), which makes the process iterative: each cycle extends the accepted prefix by several tokens.

* **Notable Patterns:** The parameter `β = 3` is a key hyperparameter. Consistent with the three candidates shown for `drivers`, it most plausibly sets how many top-ranked candidates the verifier considers at each position. Under that reading, a larger β would let more drafted tokens pass verification, which speeds up decoding at the risk of accepting tokens the verifier ranks lower. The presence of `[BLANK]` as a candidate suggests the model can also decide not to generate a token at a position, or that it is used to handle alignment. The entire flow emphasizes **parallel verification of multiple tokens** as the core efficiency gain over standard autoregressive decoding.
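The efficiency gain can be put in rough numbers. As a back-of-the-envelope estimate, assume each cycle costs one verifier pass and ignore the cost of drafting (both are simplifying assumptions, not claims from the diagram). If an output has $N$ tokens and a cycle adds $\bar{a}$ tokens on average, then

$$
\text{verifier passes} \approx \left\lceil \frac{N}{\bar{a}} \right\rceil
\quad \text{versus} \quad N \text{ passes for token-by-token decoding.}
$$

In the cycle shown, the output grows from 2 tokens (`Millions of`) to 8, so $\bar{a} = 6$ for this cycle: one verifier pass stands in for six sequential decoding steps.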