## Translation Process Diagram: Masked Language Model

### Overview

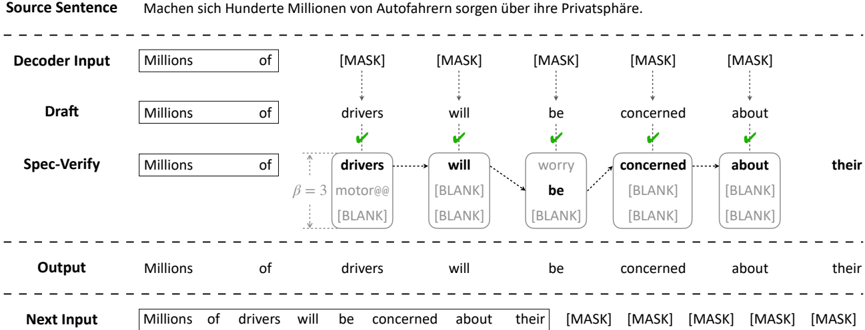

The image illustrates one iteration of a draft-then-verify translation process built around a masked language model. It shows the decoder input, the draft translation, the Spec-Verify step, the resulting output, and the decoder input for the next iteration.

### Components/Axes

* **Source Sentence:** The original sentence to be translated.

    * German: "Machen sich Hunderte Millionen von Autofahrern sorgen über ihre Privatsphäre."

    * English Translation: "Hundreds of millions of motorists are concerned about their privacy."

* **Decoder Input:** The input to the decoder, which includes the initial words and masked tokens.

* **Draft:** The initial translation generated by the model.

* **Spec-Verify:** A verification step that checks each drafted token against alternative candidate tokens for its position and decides which tokens to keep.

* **Output:** The final translated sentence.

* **Next Input:** The input for the next iteration of the translation process.

* **[MASK]:** Represents a masked token, where the model needs to predict the missing word.

* **β = 3:** A parameter of the Spec-Verify step, likely the number of top-ranked candidate tokens considered at each position.
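To make the Decoder Input, [MASK], and Draft entries above concrete, here is a minimal Python sketch. The helper names `build_decoder_input` and `fill_masks` are hypothetical and not taken from the figure; the token values are the ones the figure shows. A real model would predict the masked tokens itself, whereas here they are supplied by hand.

```python
MASK = "[MASK]"

def build_decoder_input(prefix, num_masks):
    """Return the tokens produced so far followed by num_masks placeholders."""
    return list(prefix) + [MASK] * num_masks

def fill_masks(decoder_input, predictions):
    """Replace each [MASK], left to right, with the next predicted token."""
    remaining = iter(predictions)
    return [next(remaining) if tok == MASK else tok for tok in decoder_input]

# Values read off the figure: a two-token prefix and five masked slots.
decoder_input = build_decoder_input(["Millions", "of"], 5)

# Stand-in for the model's predictions at the five masked positions.
draft = fill_masks(decoder_input, ["drivers", "will", "be", "concerned", "about"])

print(decoder_input)
print(draft)
```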

### Detailed Analysis

1. **Source Sentence:**
    * German: "Machen sich Hunderte Millionen von Autofahrern sorgen über ihre Privatsphäre."
    * English Translation: "Hundreds of millions of motorists are concerned about their privacy."

2. **Decoder Input:**
    * "Millions"
    * "of"
    * "[MASK]" (5 times)

3. **Draft:**
    * "Millions"
    * "of"
    * "drivers"
    * "will"
    * "be"
    * "concerned"
    * "about"

4. **Spec-Verify:**
    * β = 3: a parameter of this step, likely the number of top-ranked candidates considered at each position.
    * "Millions"
    * "of"
    * "drivers" (alternatives: "motor@@", "[BLANK]")
    * "will" (alternatives: "[BLANK]", "[BLANK]")
    * "worry" (alternatives: "be", "[BLANK]", "[BLANK]"). The drafted token "be" appears here as an alternative, not as the first candidate.
    * "concerned" (alternatives: "[BLANK]", "[BLANK]")
    * "about" (alternatives: "[BLANK]", "[BLANK]")
    * "their"

5. **Output:**
    * "Millions"
    * "of"
    * "drivers"
    * "will"
    * "be"
    * "concerned"
    * "about"
    * "their"

6. **Next Input:**
    * "Millions"
    * "of"
    * "drivers"
    * "will"
    * "be"
    * "concerned"
    * "about"
    * "their"
    * "[MASK]" (5 times)
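The step from Draft through Spec-Verify to Output and Next Input can be sketched as follows. This is an illustration under stated assumptions, not the method behind the figure: it assumes that a drafted token is kept when it is among the first β candidates at its position, that the listed alternatives are in rank order, and that the first token failing the check is replaced by the top candidate. The function name `spec_verify` is hypothetical; the token data is copied from the lists above.

```python
MASK = "[MASK]"
BLANK = "[BLANK]"  # candidate slots the figure leaves unlabeled

def spec_verify(drafted, ranked_candidates, beta):
    """Check drafted tokens left to right against ranked candidates.

    ranked_candidates holds one list per drafted position plus one extra
    list for the position right after the draft.
    Assumption: a drafted token is kept if it is among the first `beta`
    candidates; the first token that fails is replaced by the top candidate
    and the rest of the draft is dropped.
    """
    kept = []
    for token, ranked in zip(drafted, ranked_candidates):
        if token not in ranked[:beta]:
            kept.append(ranked[0])
            return kept
        kept.append(token)
    # Whole draft kept: the extra position contributes one more token.
    kept.append(ranked_candidates[len(drafted)][0])
    return kept

prefix = ["Millions", "of"]
drafted = ["drivers", "will", "be", "concerned", "about"]
ranked_candidates = [
    ["drivers", "motor@@", BLANK],
    ["will", BLANK, BLANK],
    ["worry", "be", BLANK, BLANK],
    ["concerned", BLANK, BLANK],
    ["about", BLANK, BLANK],
    ["their"],
]

output = prefix + spec_verify(drafted, ranked_candidates, beta=3)
next_input = output + [MASK] * 5

print(" ".join(output))  # Millions of drivers will be concerned about their
```

Under the same assumptions, calling `spec_verify(drafted, ranked_candidates, beta=1)` would stop at the third drafted position and substitute "worry" for "be", which suggests why a β larger than 1 lets more of the draft survive.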

### Key Observations

* The diagram illustrates an iterative translation process using a masked language model.

* The "Spec-Verify" step checks each drafted token against a ranked set of candidate tokens for its position.

* The "[MASK]" tokens mark the positions the model must predict in the input sequence.

* Green checkmarks appear to mark the drafted tokens that pass the "Spec-Verify" step.

* Dotted arrows indicate the flow of information and dependencies between the steps.

### Interpretation

The diagram shows one iteration of a draft-then-verify decoding loop for translation. The decoder input consists of the tokens produced so far ("Millions of") followed by five "[MASK]" placeholders, and the model fills these in to produce a draft ("drivers will be concerned about"). The "Spec-Verify" step then checks each drafted token against ranked candidates for its position. In this example every drafted token is kept, including "be", which is listed as an alternative to "worry" and is not the first candidate. The output is also one token longer than the draft, because "their" is added during verification.

The parameter β = 3 likely sets how many top-ranked candidates are considered at each position, so a drafted token need not be the single best candidate to be kept. The output followed by five new "[MASK]" tokens becomes the next decoder input. In this example one iteration extends the translation from two tokens to eight, so the process advances several tokens per step.
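The repeated draft-and-verify cycle can be sketched as a loop. Everything here is a toy stand-in under stated assumptions: `draft_verify_decode`, `toy_draft`, `toy_rank`, the `</s>` end marker, and the lookup tables (including the wrong guess "data" and the alternative "security") are hypothetical and exist only to make the loop runnable. A real verifier would score all drafted positions in one parallel pass, whereas this sketch queries candidates position by position.

```python
EOS = "</s>"  # hypothetical end-of-sentence marker

def draft_verify_decode(draft, rank, prefix, k, beta, max_len=50):
    """Repeat: draft up to k tokens, keep those within the top-beta candidates,
    then append the verifier's top candidate at the next position."""
    out = list(prefix)
    iterations = 0
    while len(out) < max_len:
        iterations += 1
        for token in draft(out, k):
            if token not in rank(out)[:beta]:
                break  # first rejected token ends this draft
            out.append(token)
            if token == EOS:
                return out, iterations
        # Either replaces the rejected token or adds one token past the draft.
        top = rank(out)[0]
        out.append(top)
        if top == EOS:
            return out, iterations
    return out, iterations

# Toy drafter: a fixed guess with one deliberate error ("data").
DRAFTER_GUESS = "Millions of drivers will be concerned about their data </s>".split()
# Toy verifier: ranked candidates per position, best first.
RANKED = [["Millions"], ["of"], ["drivers", "motor@@"], ["will"], ["worry", "be"],
          ["concerned"], ["about"], ["their"], ["privacy", "security"], [EOS]]

def toy_draft(prefix, k):
    start = len(prefix)
    return DRAFTER_GUESS[start:start + k]

def toy_rank(prefix):
    pos = len(prefix)
    return RANKED[pos] if pos < len(RANKED) else [EOS]

translation, iterations = draft_verify_decode(
    toy_draft, toy_rank, ["Millions", "of"], k=5, beta=3)
print(" ".join(translation), "| iterations:", iterations)
```

In this toy run the first iteration reproduces the figure's output, the second rejects the drafter's "data" and substitutes the verifier's top candidate, and the third accepts the end marker.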