## Diagram: Signal Processing Pipeline for Sound Localization

### Overview

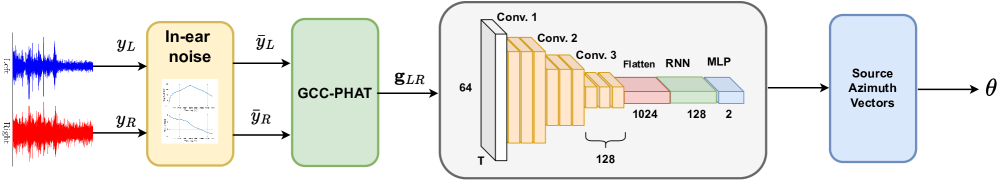

The diagram illustrates a multi-stage pipeline that processes a two-channel audio signal and estimates source azimuth vectors. Raw left (blue) and right (red) audio signals pass through noise reduction, GCC-PHAT cross-correlation, and a stack of convolutional, recurrent, and fully connected layers, which output the directional vectors.

### Components/Axes

1. **Input Signals**:

   - **Left Channel**: Blue waveform labeled `y_L` (top-left).

   - **Right Channel**: Red waveform labeled `y_R` (bottom-left).

2. **Processing Blocks**:

   - **In-ear Noise**: Yellow block containing HRTF (Head-Related Transfer Function) plots that show frequency response curves for the left and right ears.

   - **GCC-PHAT**: Green block computing the generalized cross-correlation with phase transform (`g_LR`) between the processed left and right signals.

3. **Neural Network**:

   - **Conv. 1**: 64 filters (orange).

   - **Conv. 2**: 128 filters (orange).

   - **Conv. 3**: 1024 filters (orange).

   - **Flatten**: Reshapes the 3D convolutional output into a 1D vector (pink).

   - **RNN**: 128 units (green).

   - **MLP**: 2-unit output layer (blue).

4. **Output**: Blue block labeled "Source Azimuth Vectors" (θ).
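To make the GCC-PHAT block concrete, the following is a minimal NumPy sketch of the textbook GCC-PHAT computation. It is not taken from the diagram: the function name `gcc_phat`, the parameters `fs` and `max_tau`, the zero-padding length, and the small stabilizing constant are all assumptions made for illustration.

```python
import numpy as np

def gcc_phat(y_l, y_r, fs, max_tau=None):
    """Sketch of GCC-PHAT between two channels (hypothetical helper).

    Returns the correlation g_lr over integer lags, the lag axis in samples,
    and the delay estimate in seconds. A positive delay means y_l lags y_r.
    """
    n = len(y_l) + len(y_r)              # zero-pad to avoid circular wrap-around
    Y_l = np.fft.rfft(y_l, n=n)
    Y_r = np.fft.rfft(y_r, n=n)
    cross = Y_l * np.conj(Y_r)           # cross-power spectrum
    cross /= np.abs(cross) + 1e-12       # PHAT weighting: keep phase, drop magnitude
    cc = np.fft.irfft(cross, n=n)        # back to the lag domain

    max_shift = (n - 1) // 2
    if max_tau is not None:              # optionally restrict to plausible delays
        max_shift = min(int(fs * max_tau), max_shift)

    # Reorder so the lags run from -max_shift to +max_shift.
    g_lr = np.roll(cc, max_shift)[: 2 * max_shift + 1]
    lags = np.arange(-max_shift, max_shift + 1)
    tau = lags[np.argmax(g_lr)] / fs
    return g_lr, lags, tau
```

In a pipeline like the one pictured, the vector `g_lr` (rather than the single peak `tau`) would presumably be what the convolutional layers receive, although the diagram does not state the input shape.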

### Detailed Analysis

- **Signal Flow**:

  - Raw audio (`y_L`, `y_R`) → Noise reduction (`ȳ_L`, `ȳ_R`) → GCC-PHAT cross-correlation (`g_LR`) → Convolutional layers → Flatten → RNN → MLP → Azimuth vectors (θ).

- **Layer Dimensions**:

  - Conv. 1: Input → 64 filters.

  - Conv. 2: 64 → 128 filters.

  - Conv. 3: 128 → 1024 filters.

  - Flatten: Conv. 3 output (1024 filters) → 1D feature vector. Flattening only reshapes the data; the size of the resulting vector is not given in the diagram.

  - RNN: Flattened features → 128 units.

  - MLP: 128 → 2 units (θ).
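The layer sequence above can be traced with a small NumPy forward pass. This is only a sketch under stated assumptions: the diagram gives filter and unit counts but not kernel sizes, padding, activations, the RNN cell type, or the input shape, so 1D convolutions with kernel size 3 and "same" padding, ReLU activations, a plain tanh RNN cell, random untrained weights without biases, and one GCC-PHAT vector per time frame are all hypothetical choices.

```python
import numpy as np

def init_params(n_lags, kernel=3, seed=0):
    """Random weights for Conv(64) -> Conv(128) -> Conv(1024) -> RNN(128) -> MLP(2)."""
    rng = np.random.default_rng(seed)
    channels = [1, 64, 128, 1024]                 # filter counts from the diagram
    convs = [
        rng.standard_normal((c_out, c_in, kernel)) / np.sqrt(c_in * kernel)
        for c_in, c_out in zip(channels[:-1], channels[1:])
    ]
    flat = channels[-1] * n_lags                  # size after flattening (assumed layout)
    return {
        "convs": convs,
        "W_xh": rng.standard_normal((128, flat)) / np.sqrt(flat),
        "W_hh": rng.standard_normal((128, 128)) / np.sqrt(128),
        "W_out": rng.standard_normal((2, 128)) / np.sqrt(128),
    }

def conv1d_same(x, w):
    """x: (c_in, n_lags), w: (c_out, c_in, k) with odd k -> (c_out, n_lags), then ReLU."""
    k = w.shape[2]
    xp = np.pad(x, ((0, 0), (k // 2, k // 2)))
    windows = np.lib.stride_tricks.sliding_window_view(xp, k, axis=1)  # (c_in, n_lags, k)
    return np.maximum(np.einsum("oik,ink->on", w, windows), 0.0)

def forward(g_frames, params):
    """g_frames: (n_frames, n_lags) GCC-PHAT vectors -> (n_frames, 2) outputs."""
    h = np.zeros(params["W_hh"].shape[0])         # RNN state, 128 units
    outputs = []
    for g in g_frames:
        x = g[None, :]                            # one input channel
        for w in params["convs"]:
            x = conv1d_same(x, w)                 # channels: 64, then 128, then 1024
        flat = x.reshape(-1)                      # flatten: reshape only
        h = np.tanh(params["W_xh"] @ flat + params["W_hh"] @ h)
        outputs.append(params["W_out"] @ h)       # 2-unit output per frame
    return np.stack(outputs)
```

Calling `forward` on an array of shape `(n_frames, n_lags)` with parameters from `init_params(n_lags)` yields one 2-unit output per frame, which matches the 128 → 2 step listed for the MLP.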

### Key Observations

- **Color Consistency**: The left channel is drawn in blue and the right channel in red, matching the `y_L` and `y_R` labels.

- **Architecture Depth**: Three convolutional layers widen the channel depth (64 → 128 → 1024, a 2× and then an 8× increase), followed by flattening into a 1D vector and sequential processing in the RNN.

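- **Output Specificity**: The final layer produces 2-dimensional vectors. These could represent azimuth (horizontal) and elevation (vertical) angles, but because the output block is labeled only "Source Azimuth Vectors", the two units may instead encode azimuth alone as a 2D direction vector.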
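One possible reading of a 2-unit output labeled "Source Azimuth Vectors" is a 2D direction vector whose angle is the azimuth. The sketch below shows that encoding and its inverse. It is an assumption for illustration only: the diagram does not confirm this convention, and the function names and the choice of `(cos θ, sin θ)` ordering are hypothetical.

```python
import math

def azimuth_to_vector(theta_deg):
    """Encode an azimuth in degrees as a unit vector (cos θ, sin θ). Assumed convention."""
    theta = math.radians(theta_deg)
    return (math.cos(theta), math.sin(theta))

def vector_to_azimuth_deg(v):
    """Decode a 2D vector back to an azimuth in (-180, 180] degrees.

    atan2 depends only on direction, so the vector does not need unit length.
    """
    x, y = v
    return math.degrees(math.atan2(y, x))
```

A vector encoding like this avoids the discontinuity at ±180° that a single angle output would have, which is one reason a 2-unit layer might be used even when only azimuth is predicted.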

### Interpretation

This architecture combines traditional signal processing (GCC-PHAT for time-delay estimation) with deep learning to localize sound sources. The HRTF plots in the front-end block suggest that the processing accounts for how the head and ears filter incoming sound, while the CNN-RNN-MLP pipeline extracts spatial features from the processed audio. The 2D output could mean the model predicts both azimuth and elevation, but the diagram labels only azimuth, so elevation is not confirmed. The diagram gives no batch size or temporal resolution, so real-time performance constraints cannot be assessed from it.
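For intuition on why a time delay carries directional information, the sketch below converts a delay between two sensors into an azimuth using the standard far-field, free-field relation τ = d·sin(θ)/c. The pictured pipeline learns this mapping with a network rather than using the formula, and the relation ignores the head-related filtering that the HRTF plots point to. The function name, the 0.18 m sensor spacing, and the sign convention are hypothetical.

```python
import math

def tdoa_to_azimuth_deg(tau, sensor_distance=0.18, speed_of_sound=343.0):
    """Far-field azimuth in degrees from a time delay tau in seconds.

    sensor_distance is in metres (assumed value) and speed_of_sound in m/s.
    Which side counts as positive depends on the sign convention of tau.
    """
    s = speed_of_sound * tau / sensor_distance
    s = max(-1.0, min(1.0, s))          # clip delays beyond the physical maximum
    return math.degrees(math.asin(s))
```

With these assumed values the largest physically meaningful delay is about 0.52 ms (0.18 m / 343 m/s), which corresponds to a source directly to one side.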