
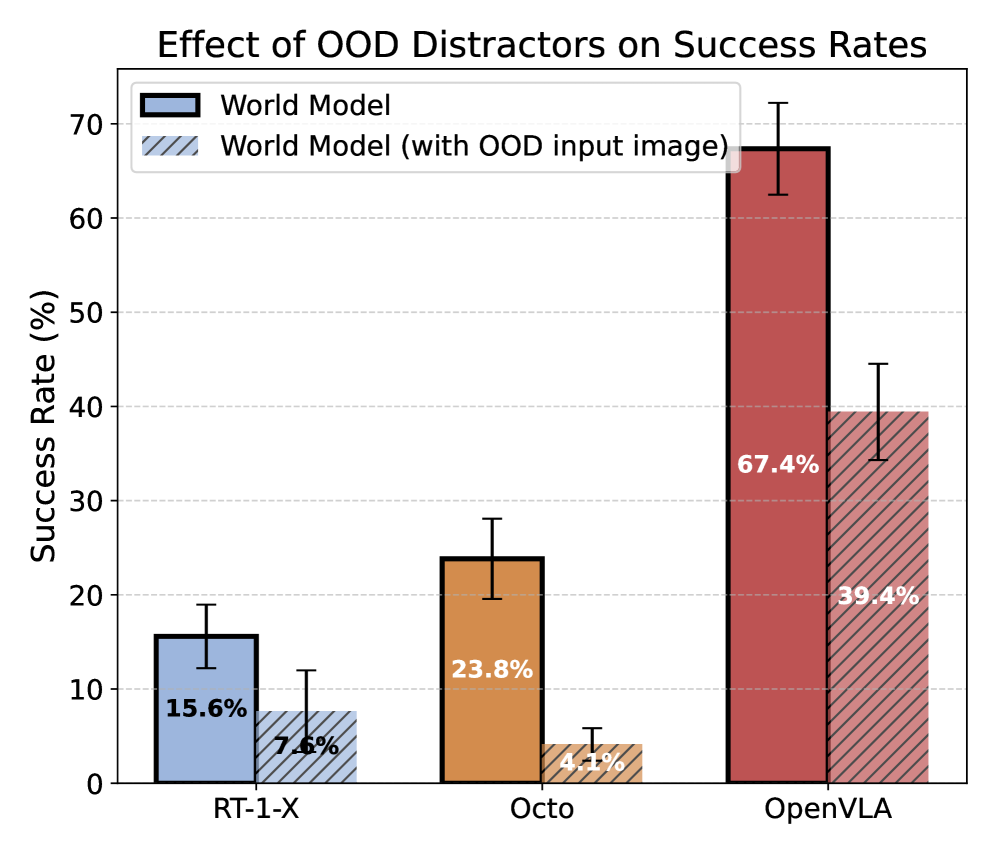

## Grouped Bar Chart: Effect of OOD Distractors on Success Rates

### Overview

This is a grouped bar chart comparing the performance of three different models (RT-1-X, Octo, OpenVLA) on a task, measured by success rate percentage. The chart specifically illustrates the negative impact of introducing Out-Of-Distribution (OOD) distractor images into the input. For each model, there are two bars: one representing the baseline "World Model" performance and another representing performance "with OOD input image."

### Components/Axes

* **Chart Title:** "Effect of OOD Distractors on Success Rates" (centered at the top).

* **Y-Axis:** Labeled "Success Rate (%)". The scale runs from 0 to 70, with major gridlines at intervals of 10 (0, 10, 20, 30, 40, 50, 60, 70).

* **X-Axis:** Lists three model categories: "RT-1-X", "Octo", and "OpenVLA".

* **Legend:** Positioned in the top-left corner of the plot area.

    * A solid, light blue rectangle corresponds to "World Model".

    * A light blue rectangle with diagonal hatching (///) corresponds to "World Model (with OOD input image)".

* **Data Series:** There are two data series, represented by paired bars for each model on the x-axis.

    * **Series 1 (World Model):** Solid-colored bars. Colors are model-specific: light blue for RT-1-X, orange for Octo, and dark red for OpenVLA.

    * **Series 2 (with OOD input image):** Hatched bars with the same base color as their solid counterpart for each model.

* **Error Bars:** Each bar has a black, vertical error bar extending above and below the top of the bar, indicating variability or confidence intervals.

### Detailed Analysis

The chart presents the following specific data points (values are labeled directly on the bars):

**1. Model: RT-1-X (Leftmost group)**

* **World Model (Solid Light Blue Bar):** Success Rate = **15.6%**. The error bar extends from approximately 12% to 19%.

* **With OOD input image (Hatched Light Blue Bar):** Success Rate = **7.6%**. The error bar extends from approximately 4% to 12%.

* **Trend:** Performance drops by approximately 8 percentage points (a ~51% relative decrease) when OOD distractors are introduced.

**2. Model: Octo (Center group)**

* **World Model (Solid Orange Bar):** Success Rate = **23.8%**. The error bar extends from approximately 20% to 28%.

* **With OOD input image (Hatched Orange Bar):** Success Rate = **4.1%**. The error bar extends from approximately 2% to 6%.

* **Trend:** Performance drops by approximately 19.7 percentage points (an ~83% relative decrease) with OOD distractors. This is the largest relative drop among the three models; the largest absolute drop belongs to OpenVLA (28 points).

**3. Model: OpenVLA (Rightmost group)**

* **World Model (Solid Dark Red Bar):** Success Rate = **67.4%**. The error bar extends from approximately 62% to 72%.

* **With OOD input image (Hatched Dark Red Bar):** Success Rate = **39.4%**. The error bar extends from approximately 34% to 45%.

* **Trend:** Performance drops by approximately 28 percentage points (a ~42% relative decrease) with OOD distractors. Despite the drop, OpenVLA maintains the highest success rate in both conditions.
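
The arithmetic behind the three trend lines can be checked with a few lines of Python. This is a minimal sketch that uses only the values labeled on the bars; the names `rates`, `drops`, and `summary` are illustrative and not part of any source material.

```python
# Success rates (%) as labeled on the bars: (World Model, with OOD input image).
rates = {
    "RT-1-X": (15.6, 7.6),
    "Octo": (23.8, 4.1),
    "OpenVLA": (67.4, 39.4),
}


def drops(baseline, ood):
    """Return (absolute drop in percentage points, relative drop in percent)."""
    absolute = baseline - ood
    relative = 100.0 * absolute / baseline
    return absolute, relative


summary = {model: drops(*pair) for model, pair in rates.items()}

for model, (absolute, relative) in summary.items():
    print(f"{model}: -{absolute:.1f} points, -{relative:.1f}% relative")

# Which model loses the most in absolute terms, and which in relative terms?
largest_absolute = max(summary, key=lambda m: summary[m][0])
largest_relative = max(summary, key=lambda m: summary[m][1])
print("largest absolute drop:", largest_absolute)
print("largest relative drop:", largest_relative)
```

Keeping the absolute and relative drops separate matters here because they single out different models: the absolute drop is largest for OpenVLA, while the relative drop is largest for Octo.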

### Key Observations

1. **Universal Negative Impact:** All three models show a substantial decrease in success rate when tested with OOD input images compared to the standard World Model condition, ranging from 8 to 28 percentage points.

2. **Performance Hierarchy:** The baseline performance ranking (World Model) is OpenVLA (67.4%) > Octo (23.8%) > RT-1-X (15.6%). This hierarchy is only partly preserved under the OOD condition: OpenVLA (39.4%) > RT-1-X (7.6%) > Octo (4.1%). OpenVLA stays first, but Octo falls from second to last place.

3. **Varying Robustness:** The models show different levels of robustness to OOD distractors.

    * **Octo** is the most severely affected, losing over 80% of its baseline performance.

    * **RT-1-X** and **OpenVLA** show more comparable relative degradation (~51% and ~42% loss, respectively), though OpenVLA's absolute drop is larger.

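4. **Error Bar Overlap:** For Octo and OpenVLA, the error bars for the two conditions are clearly separated (by roughly 14 and 17 points, respectively), which suggests the drop is unlikely to be due to chance. For RT-1-X, the approximate ranges meet at about 12% (the baseline lower bound and the OOD upper bound), so the separation is marginal. The chart does not state what the error bars represent (for example, standard error or a confidence interval), so non-overlap alone should not be read as a formal significance test.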
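
The ranking and error-bar observations can be reproduced with a short sketch. It assumes the approximate error-bar ranges read off the chart in the Detailed Analysis section; the names `intervals`, `gap`, and `ranking` are illustrative, and the gap between bars is a visual heuristic, not a statistical test.

```python
# Success rates (%) as labeled on the bars: (World Model, with OOD input image).
rates = {
    "RT-1-X": (15.6, 7.6),
    "Octo": (23.8, 4.1),
    "OpenVLA": (67.4, 39.4),
}

# Approximate error-bar ranges (%) read off the chart: (low, high).
intervals = {
    "RT-1-X": {"baseline": (12, 19), "ood": (4, 12)},
    "Octo": {"baseline": (20, 28), "ood": (2, 6)},
    "OpenVLA": {"baseline": (62, 72), "ood": (34, 45)},
}


def ranking(index):
    """Models sorted from highest to lowest success rate for one condition."""
    return sorted(rates, key=lambda m: rates[m][index], reverse=True)


def gap(baseline, ood):
    """Baseline lower bound minus OOD upper bound.

    Positive: the bars are separated; zero: they touch; negative: they overlap.
    """
    return baseline[0] - ood[1]


baseline_rank = ranking(0)
ood_rank = ranking(1)
gaps = {m: gap(v["baseline"], v["ood"]) for m, v in intervals.items()}

print("baseline ranking:", " > ".join(baseline_rank))
print("OOD ranking:     ", " > ".join(ood_rank))
for model, g in gaps.items():
    print(f"{model}: gap between error bars = {g} points")
```

Because the ranges are eyeballed from the figure, a gap of zero for RT-1-X means only that the bars appear to touch; the underlying data would be needed to decide whether that difference is significant.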

### Interpretation

This chart demonstrates a critical vulnerability in the evaluated world models: their performance is highly sensitive to the presence of out-of-distribution visual distractors. The data suggests that:

* **OOD Distractors are a Major Failure Mode:** The introduction of irrelevant, unfamiliar visual elements (OOD distractors) severely degrades the models' ability to successfully complete their intended tasks. This indicates a lack of robustness and a potential over-reliance on specific, in-distribution visual cues.

* **Model Architecture/Training Matters:** The stark difference in impact between Octo and the others implies that the underlying design or training data of a model significantly influences its resilience to visual noise. OpenVLA's superior baseline and relative robustness might point to advantages in its architecture or training regimen.

* **Practical Implications:** For real-world deployment, where environments are uncontrolled and contain unexpected objects, this vulnerability is a serious concern. A model like Octo, which performs reasonably well in clean conditions, could fail catastrophically in a cluttered or novel setting. The results argue for the necessity of testing AI models, especially those for robotics or vision, under OOD conditions to assess their true reliability.

* **The "World Model" Concept is Fragile:** The legend labels the baseline condition "World Model," yet performance under that label breaks down when the input contains unfamiliar visual content. This challenges the robustness of the learned world representations and highlights a gap between performance in curated benchmarks and potential real-world utility.