
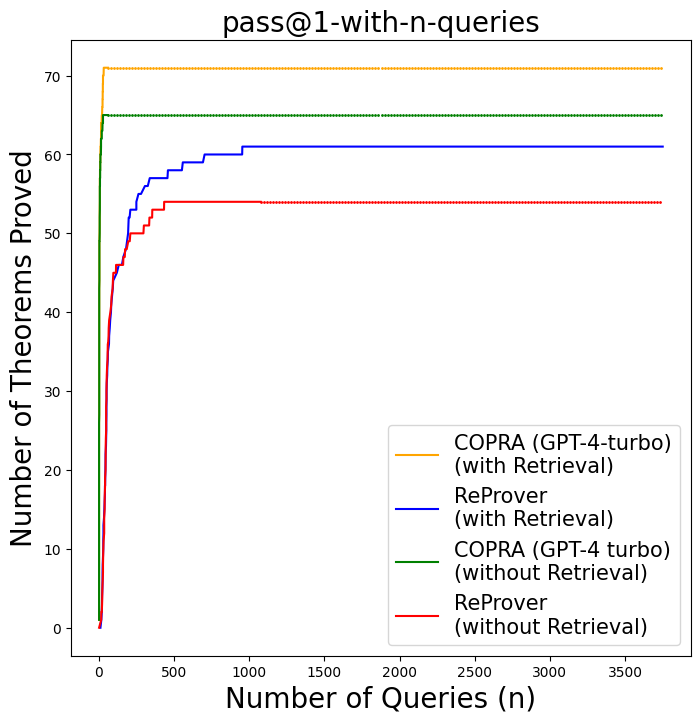

## Line Chart: pass@1-with-n-queries

### Overview

This line chart plots the number of theorems proved against the number of queries (n) for four configurations: COPRA (GPT-4-turbo) with and without retrieval, and ReProver with and without retrieval. It compares how quickly, and how far, each configuration's proof count grows as the number of queries increases.

### Components/Axes

* **Title:** pass@1-with-n-queries (positioned at the top-center)

* **X-axis:** Number of Queries (n) - Scale ranges from 0 to 3500, with tick marks at intervals of 500.

* **Y-axis:** Number of Theorems Proved - Scale ranges from 0 to 70, with tick marks at intervals of 10.

* **Legend:** Located in the top-right corner, listing each data series with its color:

    * COPRA (GPT-4-turbo) (with Retrieval) - Orange

    * ReProver (with Retrieval) - Blue

    * COPRA (GPT-4-turbo) (without Retrieval) - Green

    * ReProver (without Retrieval) - Red

### Detailed Analysis

* **COPRA (GPT-4-turbo) (with Retrieval) - Orange:** The line starts at approximately 0 theorems proved at 0 queries. It rapidly increases to around 65 theorems proved by approximately 400 queries, then plateaus around 68 theorems proved for the remainder of the query range.

* **ReProver (with Retrieval) - Blue:** The line begins at 0 theorems proved at 0 queries. It increases steadily, reaching approximately 58 theorems proved by 800 queries, and continues to increase, reaching around 62 theorems proved at 3500 queries.

* **COPRA (GPT-4-turbo) (without Retrieval) - Green:** The line starts at 0 theorems proved at 0 queries. It rises quickly to approximately 68 theorems proved by 200 queries, and then remains relatively flat, hovering around 68 theorems proved for the rest of the query range.

* **ReProver (without Retrieval) - Red:** The line starts at 0 theorems proved at 0 queries. It increases gradually, reaching approximately 52 theorems proved by 1000 queries, and then plateaus around 55 theorems proved for the remainder of the query range.
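The readings above can be collected into a small table to make the comparison explicit. The following is a minimal sketch, not the chart's underlying data: every number is an approximate value taken from the descriptions above, the final point at 3500 queries assumes each plateau holds to the end of the x-axis, and the names `READINGS`, `final_count`, and `retrieval_gain` are hypothetical.

```python
# Approximate (queries, theorems proved) readings taken from the text above.
# These are eyeballed values, not exact data from the chart.
READINGS = {
    "COPRA (GPT-4-turbo) (with Retrieval)": [(0, 0), (400, 65), (3500, 68)],
    "ReProver (with Retrieval)": [(0, 0), (800, 58), (3500, 62)],
    "COPRA (GPT-4-turbo) (without Retrieval)": [(0, 0), (200, 68), (3500, 68)],
    "ReProver (without Retrieval)": [(0, 0), (1000, 52), (3500, 55)],
}


def final_count(series: str) -> int:
    """Return the approximate number of theorems proved at the last reading."""
    return READINGS[series][-1][1]


def retrieval_gain(model: str) -> int:
    """Difference in final count between the with- and without-retrieval series."""
    return final_count(f"{model} (with Retrieval)") - final_count(
        f"{model} (without Retrieval)"
    )


for model in ("COPRA (GPT-4-turbo)", "ReProver"):
    print(f"{model}: retrieval gain of about {retrieval_gain(model)} theorems")
```

With these approximate readings, the gain from retrieval is about 7 theorems for ReProver and about 0 for COPRA. A difference this small for COPRA is within the error of reading values off a chart.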

### Key Observations

* Both COPRA (GPT-4-turbo) series, with and without retrieval, rise fastest, reaching roughly 65 to 68 theorems proved within the first few hundred queries.

* ReProver with retrieval rises more slowly but keeps improving across the query range, from about 58 theorems at 800 queries to about 62 at 3500.

* ReProver without retrieval proves the fewest theorems of the four series, levelling off near 55.

* COPRA (GPT-4-turbo) plateaus early, by roughly 200 to 400 queries, suggesting diminishing returns from additional queries.

### Interpretation

The readings suggest that COPRA (GPT-4-turbo) proves theorems with far fewer queries than ReProver, with or without retrieval. In the approximate values given under Detailed Analysis, both COPRA series plateau near 68 theorems, so any benefit of retrieval for COPRA is too small to resolve at this reading precision. For ReProver, retrieval raises the final count from about 55 to about 62, which still remains below COPRA's.

COPRA's early plateau indicates that, beyond a few hundred queries, additional queries add few new proofs, possibly because the remaining theorems are beyond the model's reach. ReProver with retrieval keeps gaining slowly across the query range, which suggests it continues to find proofs at higher query counts, although it does not catch up with COPRA within the plotted 3500 queries. The chart thus contrasts COPRA's early saturation with ReProver's slower, sustained gains.

The "pass@1" in the title suggests that each theorem is given a single proof attempt, and "with-n-queries" suggests that the x-axis is the number of queries allowed within that attempt, so the chart shows how the number of proved theorems scales with that query budget.
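One plausible reading of the metric in the chart title can be sketched in code. This sketch assumes that each theorem gets exactly one proof attempt and that n caps the number of queries used in that attempt; the chart itself does not confirm this definition. The function name `proved_within_budget` and the sample data are hypothetical and for illustration only.

```python
from bisect import bisect_right
from typing import Optional, Sequence


def proved_within_budget(
    queries_used: Sequence[Optional[int]], budgets: Sequence[int]
) -> list[int]:
    """Count theorems proved within each query budget.

    queries_used holds, per theorem, the number of queries the single
    attempt needed to find a proof, or None if no proof was found.
    """
    solved = sorted(q for q in queries_used if q is not None)
    # bisect_right gives the number of solved theorems that needed <= n queries.
    return [bisect_right(solved, n) for n in budgets]


# Hypothetical per-theorem query counts (None means the attempt failed).
example = [12, 40, None, 350, 7, None, 1200]
print(proved_within_budget(example, [0, 50, 500, 3500]))
```

Under this reading, each curve in the chart is non-decreasing by construction, and a plateau means that no remaining theorem was proved within the larger budgets.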