
## Chart: Test Accuracy vs. Lambda

### Overview

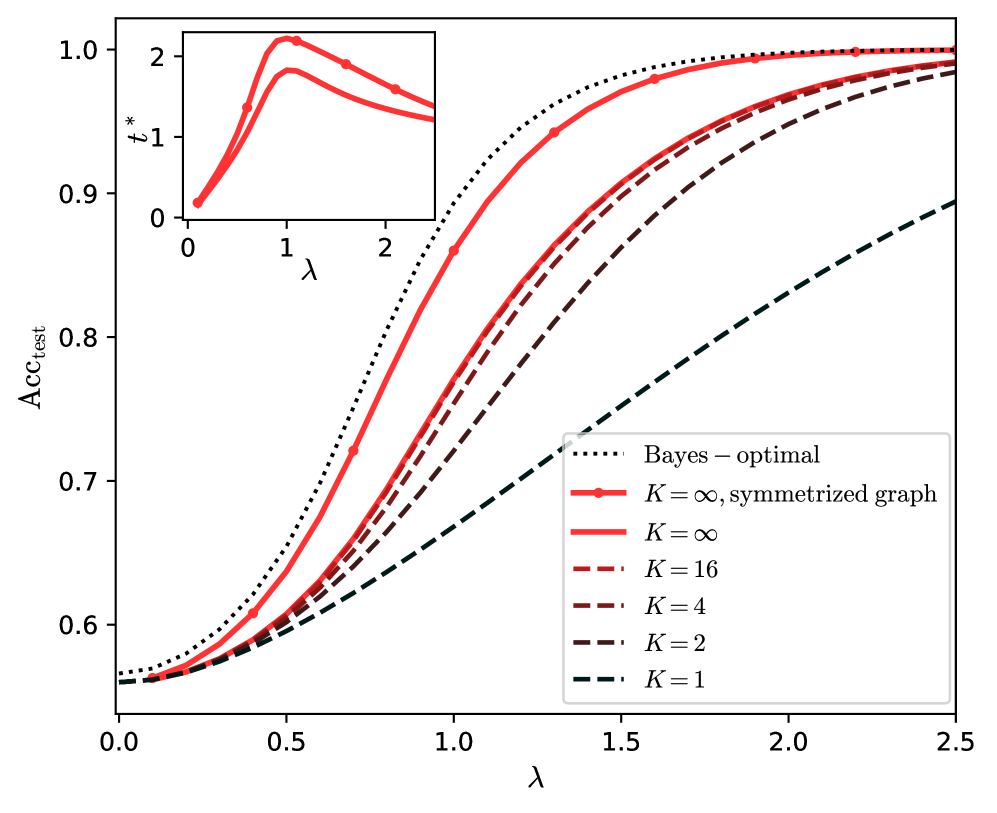

The image presents a line chart of test accuracy (Acc<sub>test</sub>) as a function of a parameter lambda (λ), with one line for each of several values of K. A smaller inset chart plots t* against λ. The chart appears to evaluate how a model or algorithm performs as λ and K vary.

### Components/Axes

* **X-axis:** Lambda (λ), ranging from 0.0 to 2.5.

* **Y-axis:** Test Accuracy (Acc<sub>test</sub>), ranging from 0.55 to 1.05.

* **Inset Chart X-axis:** Lambda (λ), ranging from 0.0 to 2.5.

* **Inset Chart Y-axis:** t*, ranging from 0.0 to 2.5.

* **Legend:** Located in the top-right corner, listing the different lines:

  * Bayes – optimal (solid blue line)

  * K = ∞, symmetrized graph (dotted red line)

  * K = ∞ (solid red line)

  * K = 16 (dashed brown line)

  * K = 4 (dashed dark red line)

  * K = 2 (dashed black line)

  * K = 1 (dashed very dark black line)

### Detailed Analysis

The main chart displays the "Bayes – optimal" reference curve together with one line for each value of K.

* **Bayes – optimal (solid blue line):** This line starts at approximately 0.62 at λ = 0.0, rises rapidly to approximately 0.95 at λ = 0.7, and then plateaus, remaining around 0.98-1.0 for λ > 1.0.

* **K = ∞, symmetrized graph (dotted red line):** This line begins at approximately 0.62 at λ = 0.0, increases steadily to approximately 0.95 at λ = 1.0, and then plateaus around 0.98-1.0 for λ > 1.0.

* **K = ∞ (solid red line):** This line starts at approximately 0.62 at λ = 0.0, rises steadily to approximately 0.95 at λ = 1.0, and then plateaus around 0.98-1.0 for λ > 1.0.

* **K = 16 (dashed brown line):** This line starts at approximately 0.62 at λ = 0.0, increases more slowly than the previous lines, reaching approximately 0.85 at λ = 1.0, and then continues to rise, approaching 0.95 at λ = 2.5.

* **K = 4 (dashed dark red line):** This line starts at approximately 0.62 at λ = 0.0, increases slowly, reaching approximately 0.75 at λ = 1.0, and then continues to rise, approaching 0.90 at λ = 2.5.

* **K = 2 (dashed black line):** This line starts at approximately 0.62 at λ = 0.0, increases very slowly, reaching approximately 0.68 at λ = 1.0, and then continues to rise, approaching 0.80 at λ = 2.5.

* **K = 1 (dashed very dark black line):** This line starts at approximately 0.62 at λ = 0.0, increases extremely slowly, reaching approximately 0.65 at λ = 1.0, and then continues to rise, approaching 0.75 at λ = 2.5.
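To compare the finite-K curves at values of λ between the stated readings, one can interpolate linearly between them. The sketch below is a minimal illustration, assuming the approximate values listed above (read by eye from the chart, not the underlying data) and straight-line behaviour between readings; the names `READINGS` and `interpolate` are hypothetical.

```python
# Approximate (lambda, Acc_test) readings from the descriptions above.
# These are eyeballed chart values, NOT the underlying data, and the
# "approaching" values at lambda = 2.5 are treated as endpoints (an assumption).
READINGS = {
    "K=16": [(0.0, 0.62), (1.0, 0.85), (2.5, 0.95)],
    "K=4": [(0.0, 0.62), (1.0, 0.75), (2.5, 0.90)],
    "K=2": [(0.0, 0.62), (1.0, 0.68), (2.5, 0.80)],
    "K=1": [(0.0, 0.62), (1.0, 0.65), (2.5, 0.75)],
}


def interpolate(points, lam):
    """Piecewise-linear estimate of Acc_test at `lam` from sorted (lambda, acc) points.

    Hypothetical helper: assumes the curve is a straight line between readings.
    """
    if len(points) < 2:
        raise ValueError("need at least two readings")
    if not points[0][0] <= lam <= points[-1][0]:
        raise ValueError(f"lambda={lam} is outside the read range")
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if x0 <= lam <= x1:
            return y0 + (y1 - y0) * (lam - x0) / (x1 - x0)
    raise AssertionError("unreachable for sorted input")


if __name__ == "__main__":
    # Estimate each finite-K curve halfway between the lambda = 1.0 and 2.5 readings.
    for name in ("K=16", "K=4", "K=2", "K=1"):
        print(name, round(interpolate(READINGS[name], 1.75), 3))
```

At λ = 1.75 this gives roughly 0.90, 0.825, 0.74 and 0.70 for K = 16, 4, 2 and 1, preserving the ordering by K. Because the readings are sparse, these in-between values are only as good as the linearity assumption.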

The inset chart shows a curve for t* vs. λ. The curve peaks at approximately t* = 2.1 at λ = 0.8, and then decreases to approximately t* = 1.0 at λ = 2.5.
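For a rough sense of how quickly t* falls after its peak, the average (secant) slope between the two readings above can be computed. This treats the decline as if it were linear, which the chart does not establish, so it is only a summary figure:

```latex
\frac{\Delta t^*}{\Delta \lambda}
  \approx \frac{1.0 - 2.1}{2.5 - 0.8}
  = \frac{-1.1}{1.7}
  \approx -0.65
```

That is, between λ ≈ 0.8 and λ = 2.5, t* drops by roughly 0.65 per unit of λ on average.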

### Key Observations

* The "Bayes – optimal" line and both "K = ∞" lines (with and without the symmetrized graph) achieve the highest test accuracy, plateauing around 0.98-1.0 for λ > 1.0.

* At any fixed λ > 0, test accuracy is lower for smaller K, and accuracy rises more slowly with λ as K decreases.

* The inset chart shows a non-monotonic relationship between t* and λ, with a peak around λ = 0.8.

* All lines start at the same accuracy level (approximately 0.62) when λ = 0.
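The observations about ordering by K, slower growth for smaller K, and a common starting point can be checked mechanically against the approximate readings from the Detailed Analysis. The following is a minimal sketch, assuming those eyeballed values; the dictionaries and the helpers `strictly_decreasing` and `check_observations` are hypothetical names introduced only for this illustration.

```python
# Approximate readings from the Detailed Analysis (eyeballed, not exact data).
START = {"Bayes-optimal": 0.62, "K=inf": 0.62, "K=16": 0.62,
         "K=4": 0.62, "K=2": 0.62, "K=1": 0.62}
ACC_AT_1 = {"K=inf": 0.95, "K=16": 0.85, "K=4": 0.75, "K=2": 0.68, "K=1": 0.65}
ACC_AT_2_5 = {"K=16": 0.95, "K=4": 0.90, "K=2": 0.80, "K=1": 0.75}

# Curves ordered from largest to smallest K.
ORDER = ["K=inf", "K=16", "K=4", "K=2", "K=1"]


def strictly_decreasing(values):
    """True if each value is larger than the next one."""
    return all(a > b for a, b in zip(values, values[1:]))


def check_observations():
    # All curves share the same starting accuracy at lambda = 0.
    same_start = len(set(START.values())) == 1
    # At a fixed lambda, accuracy drops as K decreases.
    ordered_at_1 = strictly_decreasing([ACC_AT_1[k] for k in ORDER])
    ordered_at_2_5 = strictly_decreasing([ACC_AT_2_5[k] for k in ORDER if k in ACC_AT_2_5])
    # Average rate of increase over lambda in [0, 1] also drops as K decreases.
    rates = [(ACC_AT_1[k] - START[k]) / 1.0 for k in ORDER]
    slower_growth = strictly_decreasing(rates)
    return same_start, ordered_at_1, ordered_at_2_5, slower_growth


print(check_observations())  # (True, True, True, True)
```

The average rates over [0, 1] come out at about 0.33, 0.23, 0.13, 0.06 and 0.03 per unit of λ from K = ∞ down to K = 1, which is the "slower rise for smaller K" pattern in numeric form.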

### Interpretation

The chart shows how the parameter K affects the test accuracy of a model as a function of lambda (λ). The "Bayes – optimal" line represents the theoretical upper bound on performance. The lines for larger K (K = ∞ and K = 16) come closer to this bound than those for smaller K (K = 4, K = 2, and K = 1). This suggests that increasing K improves the model's ability to generalize to unseen data, with the K = ∞ curves coming closest to, but not quite matching, the Bayes-optimal curve: the latter reaches about 0.95 already at λ ≈ 0.7, whereas K = ∞ reaches it at λ ≈ 1.0.
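One way to make "closer to the bound" concrete is to ask how large λ must be before each curve reaches a given accuracy. The sketch below inverts a piecewise-linear fit through the approximate readings from the Detailed Analysis; the 0.85 target, the linear-between-readings assumption and the function name `lambda_to_reach` are all illustrative choices, not part of the original figure.

```python
# Approximate (lambda, Acc_test) readings from the Detailed Analysis; eyeballed.
READINGS = {
    "Bayes-optimal": [(0.0, 0.62), (0.7, 0.95)],
    "K=inf": [(0.0, 0.62), (1.0, 0.95)],
    "K=16": [(0.0, 0.62), (1.0, 0.85), (2.5, 0.95)],
    "K=4": [(0.0, 0.62), (1.0, 0.75), (2.5, 0.90)],
    "K=2": [(0.0, 0.62), (1.0, 0.68), (2.5, 0.80)],
    "K=1": [(0.0, 0.62), (1.0, 0.65), (2.5, 0.75)],
}


def lambda_to_reach(points, target):
    """Smallest lambda at which a piecewise-linear curve through `points`
    reaches `target`, or None if it never does within the read range.

    Assumes accuracy is non-decreasing in lambda, as in the chart.
    """
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if y0 <= target <= y1 and y1 > y0:
            return x0 + (x1 - x0) * (target - y0) / (y1 - y0)
    return None


if __name__ == "__main__":
    for name, pts in READINGS.items():
        lam = lambda_to_reach(pts, 0.85)
        print(name, "not reached within the read range" if lam is None else round(lam, 2))
```

With a 0.85 target this gives roughly λ ≈ 0.49 for Bayes – optimal, 0.70 for K = ∞, 1.0 for K = 16 and 2.0 for K = 4, while K = 2 and K = 1 do not reach 0.85 by λ = 2.5: the same ordering the interpretation describes.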

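The inset chart shows how a quantity t* varies with λ: it reaches a maximum of about 2.1 near λ ≈ 0.8 and then falls to about 1.0 at λ = 2.5. This means t* is maximized at λ ≈ 0.8; it does not mean that λ ≈ 0.8 is optimal for accuracy, since test accuracy in the main chart keeps increasing with λ. The chart does not define t*, so its exact role is unclear, but its non-monotonic shape indicates that t* responds to λ differently below and above the peak.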

The data suggest that a larger K is preferable for high test accuracy, and that t* peaks at a specific value of λ (about 0.8). To the extent that K and λ are under the practitioner's control, they should be chosen based on the desired trade-off between accuracy and other factors, such as computational cost or model complexity.