## Neural Network Architecture Diagram: Input-Output Transformation

### Overview

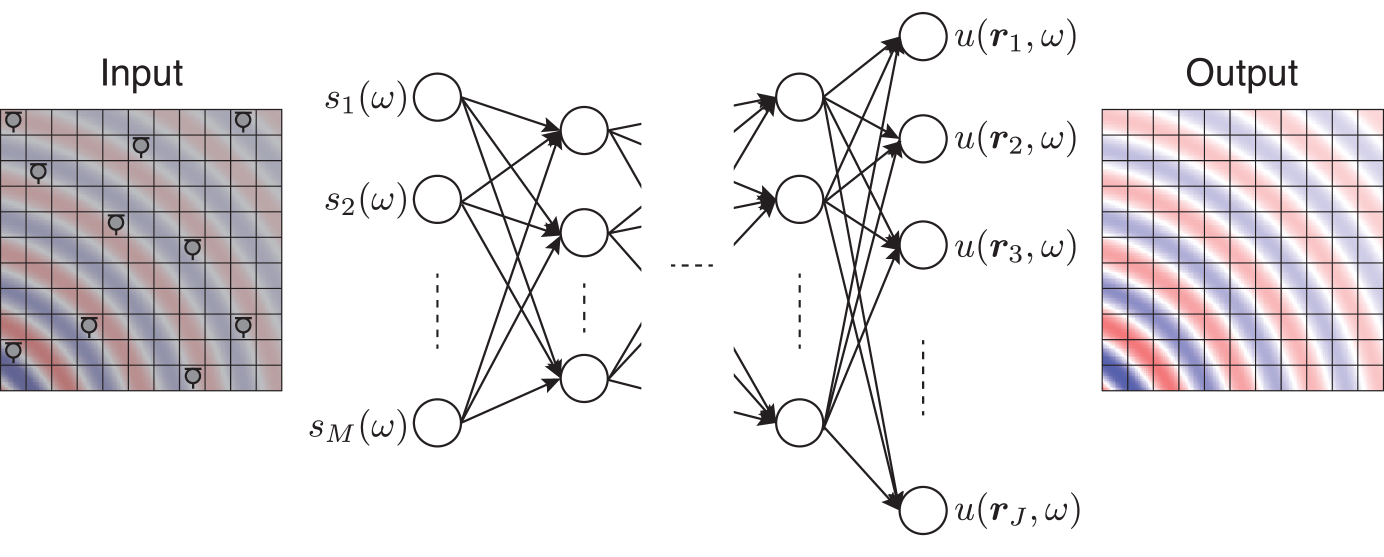

The image depicts what appears to be a feedforward neural network that maps an input grid through a single hidden layer to an output grid. The architecture includes:

- Input grid with spatial data points

- Hidden-layer nodes whose states depend on a parameter ω

- Output grid whose activations vary smoothly (shown as a color gradient)

- Full connectivity between layers

### Components/Axes

**Input Section (Left):**

- Grid: 5x5 matrix with gray circular markers

- Color gradient: Red-to-blue transition from bottom-left to top-right

- No explicit axis labels, but spatial coordinates implied

**Hidden Layer (Center):**

- Nodes labeled: s₁(ω), s₂(ω), ..., sₘ(ω)

- Black circular nodes with bidirectional arrows

- Parameter ω appears in all hidden layer states

**Output Section (Right):**

- Grid: 5x5 matrix with red/blue gradient

- Color gradient: Red-to-blue transition from top-left to bottom-right

- Output nodes labeled: u(r₁,ω), u(r₂,ω), ..., u(rⱼ,ω)

**Legend/Annotations:**

- No explicit legend present

- ω symbol consistently used across all node labels

- Dashed lines indicate hidden layer connections

### Detailed Analysis

**Input Grid:**

- 25 data points arranged in 5x5 matrix

- Gray circular markers suggest discrete input features

- The smooth color gradient suggests that adjacent cells take similar values (spatial correlation)
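To make the input description concrete, the grid can be encoded numerically. The figure gives no numeric scale, so the mapping red = 0.0, blue = 1.0, the linear ramp along the diagonal, and the helper names `input_grid` and `flatten` are all assumptions for illustration.

```python
N = 5  # the figure shows a 5x5 input grid

def input_grid(n=N):
    """Hypothetical encoding: row 0 is the TOP row; values rise linearly
    from red (0.0) at bottom-left to blue (1.0) at top-right."""
    grid = []
    for row in range(n):
        rows_from_bottom = n - 1 - row
        grid.append([(rows_from_bottom + col) / (2 * (n - 1)) for col in range(n)])
    return grid

def flatten(grid):
    # Row-major flattening into the 25-value vector a fully connected layer consumes
    return [v for row in grid for v in row]

g = input_grid()
x = flatten(g)
print(len(x))  # 25
```

Neighboring cells differ by at most 0.125 in this encoding, which is one way to express the "adjacent cells are similar" reading of the gradient.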

**Hidden Layer:**

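- m nodes (labeled s₁(ω) through sₘ(ω); the exact count is unspecified) between input and output

- Because every state is written as a function of ω, the nodes may share a common parameter (for example, a shared weight or bias)

- Bidirectional arrows may indicate recurrent connections within the layer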

**Output Grid:**

- 25 activation units (one per cell) with a continuous color gradient

- Red areas (top-left) transition to blue (bottom-right)

- Output labels u(rⱼ,ω) also carry ω, so the outputs depend on the same parameter as the hidden states

**Architectural Features:**

- Fully connected architecture (all-to-all connections)

- No skip connections or residual pathways shown

- Parameter ω appears to be global across the network
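A minimal forward pass consistent with these features: 25 inputs, m fully connected hidden units s₁(ω), ..., sₘ(ω), and 25 outputs u(r₁,ω), ..., u(r₂₅,ω). It assumes ω is one global scalar that multiplies every hidden pre-activation and that the activation is tanh; the figure specifies neither, and `dense`, `init_layer`, and `forward` are illustrative names, not an API from the source.

```python
import math
import random

def dense(weights, bias, v):
    # Fully connected: each output unit sees every input (one weight row per unit)
    return [sum(w * vi for w, vi in zip(row, v)) + b for row, b in zip(weights, bias)]

def init_layer(n_out, n_in, rng):
    # Small random weights scaled by 1/sqrt(fan-in); zero biases
    w = [[rng.gauss(0.0, 1.0 / math.sqrt(n_in)) for _ in range(n_in)] for _ in range(n_out)]
    b = [0.0] * n_out
    return w, b

def forward(x, omega, params):
    (w1, b1), (w2, b2) = params
    # Hidden states s_1(omega) ... s_m(omega): the global omega scales every pre-activation
    s = [math.tanh(omega * z) for z in dense(w1, b1, x)]
    # Outputs u(r_1, omega) ... u(r_25, omega): one per output grid cell
    u = dense(w2, b2, s)
    return s, u

rng = random.Random(0)
m = 8  # hypothetical hidden width; the figure leaves m unspecified
params = (init_layer(m, 25, rng), init_layer(25, m, rng))
x = [i / 24 for i in range(25)]  # placeholder 25-value input
s, u = forward(x, omega=0.5, params=params)
print(len(s), len(u))  # 8 25
```

Setting `omega=0.0` drives every hidden state to tanh(0) = 0, which shows how a single global ω could gate the whole layer under this interpretation.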

### Key Observations

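1. Input and output grids share identical spatial dimensions (5x5)

2. The input and output color gradients run along different diagonals (bottom-left to top-right vs. top-left to bottom-right), suggesting a mirrored spatial relationship

3. Hidden layer uses parameter ω consistently across all nodes

4. The output gradient is a vertical mirror image of the input gradient (red moves from bottom-left to top-left, blue from top-right to bottom-right), not a full reversal of its direction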

5. No explicit activation function labels present
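Encoding both gradients from the Components/Axes section numerically (red = 0.0, blue = 1.0, a linear ramp along the diagonal; both are assumptions, since the figure has no scale) makes it possible to check which grid transformation relates them. With row 0 as the top row, a vertical flip maps the input onto the output exactly, while a 180° rotation (a true reversal of direction) does not.

```python
N = 5

def input_grid(n=N):
    # red (0.0) at bottom-left -> blue (1.0) at top-right; row 0 is the top row
    return [[(n - 1 - r + c) / (2 * (n - 1)) for c in range(n)] for r in range(n)]

def output_grid(n=N):
    # red (0.0) at top-left -> blue (1.0) at bottom-right
    return [[(r + c) / (2 * (n - 1)) for c in range(n)] for r in range(n)]

def flip_vertical(g):
    # Reverse the order of rows (mirror across the horizontal midline)
    return g[::-1]

def rotate_180(g):
    # Reverse rows and columns: the direction of the gradient is fully reversed
    return [row[::-1] for row in g[::-1]]

print(flip_vertical(input_grid()) == output_grid())  # True
print(rotate_180(input_grid()) == output_grid())     # False
```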

### Interpretation

This diagram appears to illustrate a basic feedforward neural network (although the bidirectional arrows in the hidden layer hint at recurrence) with:

- Spatial input processing (5x5 grid)

- Parameterized hidden layer (m nodes, each depending on ω)

- An output grid with a smoothly varying (color-graded) pattern

The consistent use of ω across all nodes suggests shared parameterization, potentially representing:

- Global weight matrix

- Batch normalization parameter

- Regularization factor

The mirrored color gradient between input and output suggests the network learns to:

1. Detect spatial patterns in input data

2. Transform these patterns through hidden representations

3. Produce output whose spatial pattern is vertically mirrored relative to the input

The bidirectional arrows in the hidden layer indicate potential for:

- Recurrent processing within the layer

- Temporal dynamics (if ω represents time steps)

- Feature re-evaluation during forward pass
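If the bidirectional arrows do denote recurrence, one simple reading is a hidden state that is updated several times for a single input. This sketch assumes that a bidirectional arrow between nodes i and j means one shared weight (a symmetric recurrent matrix with no self-loops), that the update uses tanh, and that the `steps` argument stands in for the time-step interpretation of ω; `recurrent_hidden` and `symmetric_matrix` are hypothetical names.

```python
import math
import random

def recurrent_hidden(x, w_in, w_rec, steps):
    m = len(w_in)
    s = [0.0] * m
    # Input drive is fixed; only the hidden state evolves over the steps
    drive = [sum(w * xi for w, xi in zip(row, x)) for row in w_in]
    for _ in range(steps):
        # Synchronous update: every node reads the previous state of all nodes
        s = [math.tanh(drive[i] + sum(w_rec[i][j] * s[j] for j in range(m)))
             for i in range(m)]
    return s

def symmetric_matrix(m, rng, scale=0.3):
    # w[i][j] == w[j][i] models a single bidirectional link; zero diagonal = no self-loops
    w = [[0.0] * m for _ in range(m)]
    for i in range(m):
        for j in range(i + 1, m):
            w[i][j] = w[j][i] = rng.uniform(-scale, scale)
    return w

rng = random.Random(1)
m, n_in = 4, 25
w_in = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(m)]
w_rec = symmetric_matrix(m, rng)
x = [1.0] * n_in
print(recurrent_hidden(x, w_in, w_rec, steps=0))  # [0.0, 0.0, 0.0, 0.0]
print(recurrent_hidden(x, w_in, w_rec, steps=10))
```

With `steps=1` this reduces to an ordinary feedforward hidden layer, so the same diagram is compatible with both readings.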

The absence of explicit activation functions suggests this is one of the following:

- A conceptual diagram focusing on architecture

- A simplified representation omitting implementation details

- A visualization emphasizing parameter flow rather than computation

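The matching grid dimensions between input and output suggest:

- No net change in dimensionality from input to output (the hidden width m may still differ from 25)

- Possibly a direct 1:1 correspondence between input cells and output units, although full connectivity does not require one

- Potential for identity mapping or spatial transformation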
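Matching dimensions allow, but do not force, a one-to-one mapping. As an illustration, a vertical flip of the grid (which turns a bottom-left-to-top-right gradient into a top-left-to-bottom-right one) can be written as a 25x25 fully connected weight matrix in which every output cell receives weight 1.0 from exactly one input cell, i.e. a permutation matrix. This is a hypothetical construction, not weights read from the figure, and the row-major flattening is an assumption.

```python
N = 5

def mirror_permutation(n=N):
    size = n * n
    p = [[0.0] * size for _ in range(size)]
    for r in range(n):
        for c in range(n):
            # Output cell (r, c) copies input cell (n - 1 - r, c): a vertical flip
            p[r * n + c][(n - 1 - r) * n + c] = 1.0
    return p

def apply_layer(p, x):
    # Plain matrix-vector product, i.e. a fully connected layer without bias
    return [sum(w * xi for w, xi in zip(row, x)) for row in p]

P = mirror_permutation()
x = [float(i) for i in range(25)]  # label each input cell by its flat index
y = apply_layer(P, x)
print(y[:5])  # [20.0, 21.0, 22.0, 23.0, 24.0]
```

Every row and every column of `P` contains a single 1.0, which is exactly the 1:1 correspondence case; a general fully connected layer of the same shape would mix many inputs into each output.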

This architecture could be applied to tasks requiring:

- Spatial pattern recognition

- Feature transformation with parameter sharing

- Generation of smoothly varying spatial outputs