
## System Architecture Diagram: Reinforcement Learning Training Pipeline

### Overview

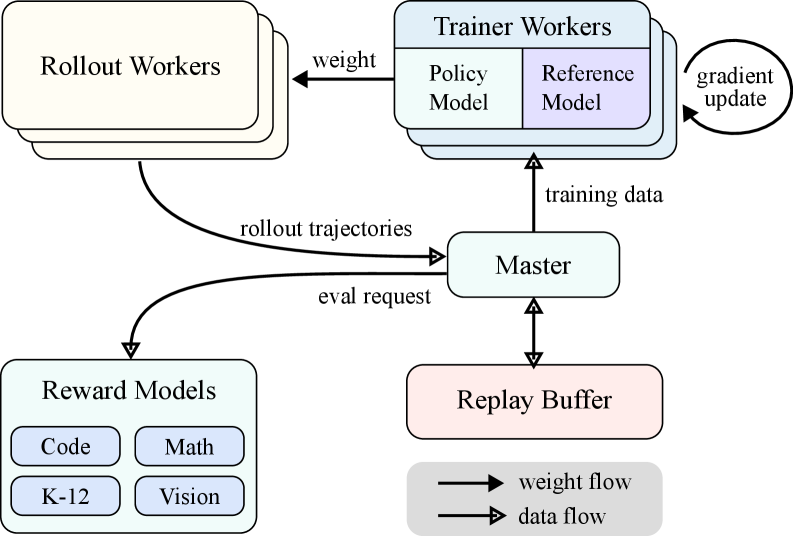

The image displays a technical system architecture diagram illustrating a distributed reinforcement learning (RL) training pipeline. The diagram uses labeled boxes to represent system components and arrows to indicate the flow of data and model weights between them. The overall flow suggests an iterative training process where a policy model is improved using feedback from specialized reward models.

### Components

The diagram consists of five primary component boxes and a legend, connected by directional arrows.

**Primary Components (Boxes):**

1. **Rollout Workers** (Top-left, cream-colored box): A stack of boxes, indicating multiple instances.

2. **Trainer Workers** (Top-right, light blue box): A stack of boxes containing two sub-components:

* **Policy Model** (Left sub-box, light blue)

* **Reference Model** (Right sub-box, light purple)

3. **Master** (Center, light green box): The central coordinating component.

4. **Reward Models** (Bottom-left, light blue box): Contains four specialized sub-models:

* **Code** (Top-left sub-box)

* **Math** (Top-right sub-box)

* **K-12** (Bottom-left sub-box)

* **Vision** (Bottom-right sub-box)

5. **Replay Buffer** (Bottom-right, pink box): A storage component.

**Legend (Bottom-right corner):**

* **Solid Arrow (→):** Labeled "weight flow"

* **Dashed Arrow (⇢):** Labeled "data flow"

### Detailed Analysis

**Flow and Connections (Traced from Legend and Labels):**

1. **Weight Flow (Solid Arrows):**

* From **Trainer Workers** to **Rollout Workers**: Labeled "weight". This indicates the current policy model weights are sent to the rollout workers for action generation.

* Within **Trainer Workers**: A circular arrow labeled "gradient update" points from the "Policy Model" back to itself, indicating the model parameters are updated via gradient descent during training.

2. **Data Flow (Dashed Arrows):**

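* From **Rollout Workers** to **Master**: Labeled "rollout trajectories". The workers send generated experience data to the master. Because rewards are assigned downstream by the Reward Models, these trajectories presumably carry state-action sequences (for example, prompts and sampled responses) rather than final rewards.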

* From **Master** to **Reward Models**: Labeled "eval request". The master sends data to be evaluated by the specialized reward models.

* From **Master** to **Trainer Workers**: Labeled "training data". The master sends processed data (likely trajectories paired with rewards) to the trainers for policy updates.

* Between **Master** and **Replay Buffer**: A bidirectional dashed arrow (no explicit label). This indicates the master can both store new experiences in and retrieve old experiences from the replay buffer.
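The connections listed above can be captured as a small typed edge list, which makes it easy to query which component feeds which. This is a minimal sketch for illustration only: the `Flow`, `Edge`, `EDGES`, and `outgoing` names are hypothetical, and modeling the unlabeled bidirectional arrow as two edges with empty labels is an assumption.

```python
from dataclasses import dataclass
from enum import Enum


class Flow(Enum):
    WEIGHT = "weight flow"  # solid arrows in the legend
    DATA = "data flow"      # dashed arrows in the legend


@dataclass(frozen=True)
class Edge:
    src: str
    dst: str
    label: str
    flow: Flow


# Edges transcribed from the flow description. The unlabeled bidirectional
# Master <-> Replay Buffer arrow is modeled as two edges with empty labels,
# and the "gradient update" loop is kept as a self-edge on the Policy Model.
EDGES = [
    Edge("Trainer Workers", "Rollout Workers", "weight", Flow.WEIGHT),
    Edge("Policy Model", "Policy Model", "gradient update", Flow.WEIGHT),
    Edge("Rollout Workers", "Master", "rollout trajectories", Flow.DATA),
    Edge("Master", "Reward Models", "eval request", Flow.DATA),
    Edge("Master", "Trainer Workers", "training data", Flow.DATA),
    Edge("Master", "Replay Buffer", "", Flow.DATA),
    Edge("Replay Buffer", "Master", "", Flow.DATA),
]


def outgoing(node, flow=None):
    """Return destinations reachable from `node`, optionally filtered by flow type."""
    return [
        e.dst
        for e in EDGES
        if e.src == node and (flow is None or e.flow == flow)
    ]
```

Calling `outgoing("Master")` lists the three components the Master sends data to, while `outgoing("Trainer Workers", Flow.WEIGHT)` returns only the Rollout Workers, matching the single labeled weight arrow between components.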

**Spatial Grounding:**

* The **Legend** is positioned in the bottom-right corner of the diagram.

* The **Master** component is centrally located, acting as the hub for all data flows.

* The **Reward Models** are positioned in the bottom-left, receiving evaluation requests from the central Master.

* The **Replay Buffer** is positioned in the bottom-right, adjacent to the legend.

### Key Observations

* **Modular Reward System:** The "Reward Models" component is explicitly segmented into four distinct domains (Code, Math, K-12, Vision), suggesting the system is designed to train a generalist model or evaluate performance across diverse, specialized tasks.

* **Centralized Coordination:** The "Master" node is critical, managing the flow of trajectories, evaluation requests, training data, and interaction with the replay buffer. It decouples the rollout, reward evaluation, and training processes.

* **Standard RL Components:** The architecture includes classic RL elements: Rollout Workers (for environment interaction), a Replay Buffer (for experience storage), Trainer Workers (for policy optimization), and Reward Models (for providing feedback).

* **Dual-Model Training:** The "Trainer Workers" contain both a "Policy Model" (being trained) and a "Reference Model." This pairing is common in RLHF (Reinforcement Learning from Human Feedback) pipelines, for example PPO (Proximal Policy Optimization) with a KL penalty, where a reference model (typically frozen) anchors the policy so that it does not drift too far from its starting behavior.
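The diagram does not state how the Reference Model enters the objective. One widely used option, shown here purely as an assumption, is to subtract a per-token KL estimate (policy log-probability minus reference log-probability) from the reward. The function name `kl_shaped_rewards` and the coefficient `beta` are hypothetical.

```python
def kl_shaped_rewards(rewards, policy_logprobs, ref_logprobs, beta=0.1):
    """Subtract a per-token KL estimate from each reward.

    The estimate for a sampled token is log pi(a|s) - log pi_ref(a|s).
    A larger `beta` penalizes drift from the reference model more strongly.
    """
    if not (len(rewards) == len(policy_logprobs) == len(ref_logprobs)):
        raise ValueError("all inputs must have the same length")
    return [
        r - beta * (lp - lr)
        for r, lp, lr in zip(rewards, policy_logprobs, ref_logprobs)
    ]
```

When the policy assigns a sampled token a higher log-probability than the reference does, the shaped reward for that token falls below the raw reward; when the two models agree, the reward passes through unchanged.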

### Interpretation

This diagram outlines a scalable, distributed reinforcement learning system, likely for training large language models or multi-modal agents. The architecture is designed for efficiency and specialization.

* **What it demonstrates:** The system separates the computationally intensive tasks of generating experience (Rollout Workers), evaluating that experience (Reward Models), and updating the model (Trainer Workers). The Master orchestrates this pipeline, while the Replay Buffer enables learning from past experiences, improving sample efficiency.

* **Relationships:** The flow is cyclical and iterative: Weights go out -> Trajectories come in -> Rewards are evaluated -> Training data is prepared -> The model is updated -> New weights go out. The specialized Reward Models imply the trained agent is intended to perform well across a broad set of intellectual and perceptual tasks (code, math, education, vision).

* **Notable Implications:** The presence of a "Reference Model" suggests a regularized objective of the kind used in RLHF-style training (often PPO with a KL penalty against the reference model). Such a penalty helps keep the policy close to its starting behavior and can limit, though not eliminate, reward hacking. The multi-domain reward structure indicates an ambition to create a robust, general-purpose model rather than a narrow specialist. The architecture is built for parallelism, allowing each component to scale independently based on computational demand.
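The cycle described under "Relationships" can be sketched as a toy, single-process loop. Everything here is a hypothetical stand-in: the reward functions are trivial heuristics rather than learned models, the "policy" is just a version counter, and names such as `Master`, `rollout`, `train_step`, and `run` are illustrative, not taken from the diagram's underlying system.

```python
from collections import deque

# Stand-ins for the four reward models; real ones would be learned models or verifiers.
REWARD_MODELS = {
    "code": lambda response: 1.0 if "def " in response else 0.0,
    "math": lambda response: 1.0 if response.strip().isdigit() else 0.0,
    "k12": lambda response: min(len(response) / 20.0, 1.0),
    "vision": lambda response: 0.5,
}


class Master:
    """Hypothetical coordinator: scores trajectories and feeds the trainer."""

    def __init__(self, buffer_size=100):
        self.replay_buffer = deque(maxlen=buffer_size)

    def score(self, trajectories):
        # "eval request": route each trajectory to the reward model for its domain.
        scored = [
            {**t, "reward": REWARD_MODELS[t["domain"]](t["response"])}
            for t in trajectories
        ]
        self.replay_buffer.extend(scored)  # store new experience
        return scored

    def training_batch(self, size):
        # "training data": the most recent `size` items from the buffer.
        return list(self.replay_buffer)[-size:]


def rollout(weights_version, prompts):
    """Toy rollout worker: tags each canned response with the weights version used."""
    return [
        {"domain": d, "response": r, "weights_version": weights_version}
        for d, r in prompts
    ]


def train_step(weights_version, batch):
    """Toy trainer: 'updates' the policy by bumping a version counter."""
    mean_reward = sum(t["reward"] for t in batch) / len(batch)
    return weights_version + 1, mean_reward


def run(iterations, prompts):
    master, version, mean_reward = Master(), 0, 0.0
    for _ in range(iterations):
        trajectories = rollout(version, prompts)           # weights out, trajectories in
        master.score(trajectories)                         # rewards evaluated
        batch = master.training_batch(len(prompts))        # training data prepared
        version, mean_reward = train_step(version, batch)  # model updated
    return version, mean_reward, len(master.replay_buffer)
```

In a real deployment each of these roles would run as separate, independently scaled processes; the sketch only mirrors the order of the steps and the per-domain routing of evaluation requests.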