
## Heatmap: Attention Weight Modification

### Overview

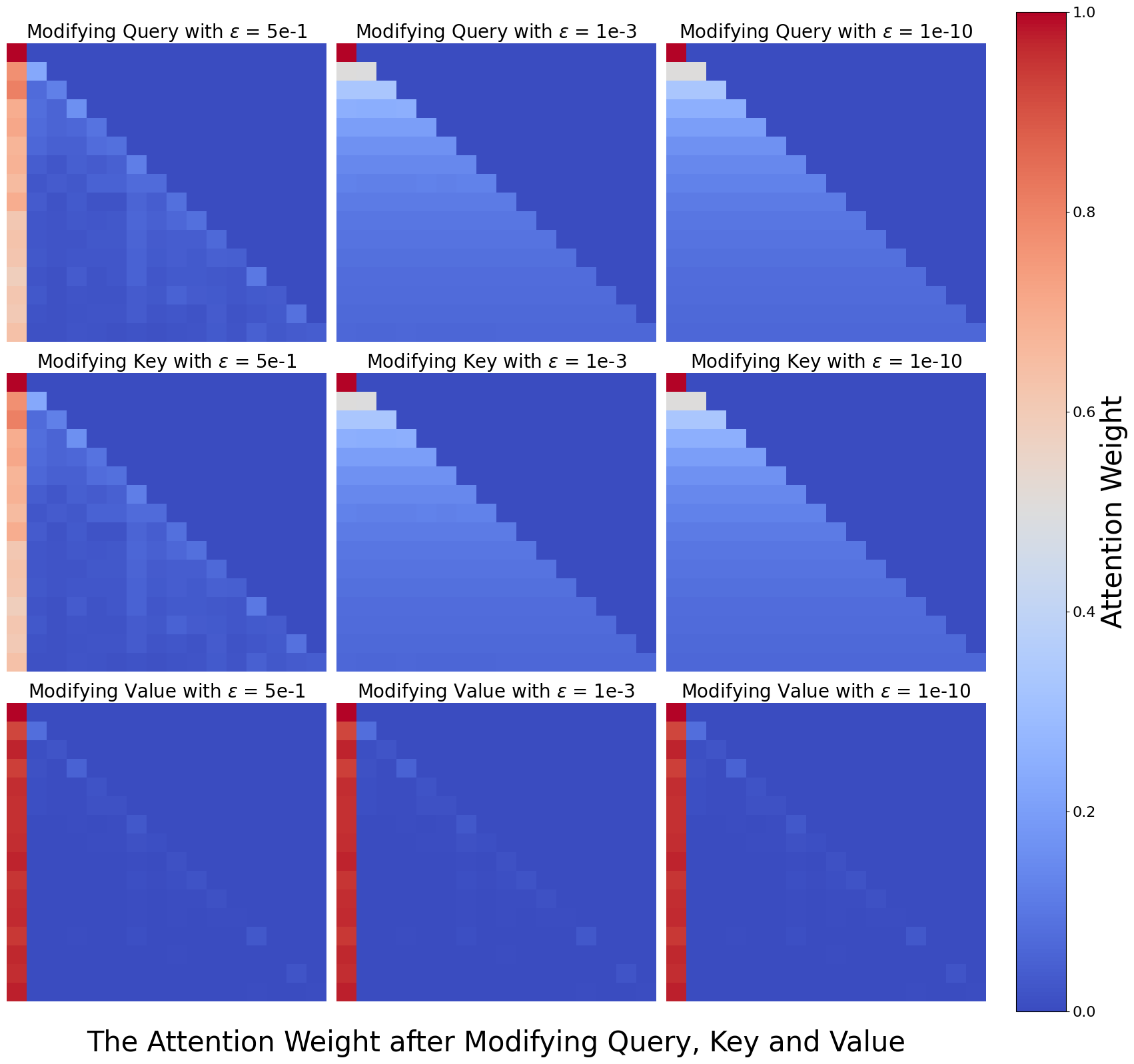

This image presents a 3x3 grid of heatmaps showing attention weights after the Query, Key, or Value component is modified with one of three epsilon (ε) values: 5e-1, 1e-3, and 1e-10. Each heatmap displays attention weights on a scale from 0.0 to 1.0, represented by a color gradient from blue to red.

### Components/Axes

The image consists of nine individual heatmaps arranged in a 3x3 grid.

- **Rows:** Represent the modification applied: "Modifying Query", "Modifying Key", "Modifying Value".

- **Columns:** Represent the epsilon (ε) value used for modification: "ε = 5e-1", "ε = 1e-3", "ε = 1e-10".

- **Color Scale (Right Side):** Represents the "Attention Weight", ranging from 0.0 (blue) to 1.0 (red). The scale is linear.

- **Heatmap Cells:** Each cell represents the attention weight between two elements. The axes of the heatmaps are not explicitly labeled, but appear to represent indices or positions within a sequence.

### Detailed Analysis

Each heatmap is a square grid, approximately 10x10 cells. The attention weights are visualized using color intensity.

**1. Modifying Query:**

- **ε = 5e-1:** The heatmap shows a strong diagonal pattern with high attention weights (red) along the main diagonal. Attention weights decrease as you move away from the diagonal. Approximate values: Diagonal ~ 0.9-1.0, Upper/Lower off-diagonal ~ 0.2-0.4.

- **ε = 1e-3:** The diagonal pattern is still present, but less pronounced. Diagonal weights are lower than with ε = 5e-1, while off-diagonal weights are slightly higher. Approximate values: Diagonal ~ 0.7-0.9, Upper/Lower off-diagonal ~ 0.3-0.5.

- **ε = 1e-10:** The diagonal pattern is significantly weakened. Attention weights are more evenly distributed, with a general value around 0.5-0.6. Approximate values: Diagonal ~ 0.6-0.7, Upper/Lower off-diagonal ~ 0.4-0.6.

**2. Modifying Key:**

- **ε = 5e-1:** Similar to modifying the query with ε = 5e-1, a strong diagonal pattern is observed. Approximate values: Diagonal ~ 0.8-1.0, Upper/Lower off-diagonal ~ 0.2-0.4.

- **ε = 1e-3:** The diagonal pattern is less pronounced, with lower diagonal weights and slightly higher off-diagonal weights. Approximate values: Diagonal ~ 0.6-0.8, Upper/Lower off-diagonal ~ 0.3-0.5.

- **ε = 1e-10:** The diagonal pattern is significantly weakened, with attention weights more evenly distributed. Approximate values: Diagonal ~ 0.5-0.6, Upper/Lower off-diagonal ~ 0.4-0.6.

**3. Modifying Value:**

- **ε = 5e-1:** A strong diagonal pattern is visible, similar to the query and key modifications with ε = 5e-1. Approximate values: Diagonal ~ 0.8-1.0, Upper/Lower off-diagonal ~ 0.2-0.4.

- **ε = 1e-3:** The diagonal pattern is less pronounced, with lower diagonal weights and slightly higher off-diagonal weights. Approximate values: Diagonal ~ 0.6-0.8, Upper/Lower off-diagonal ~ 0.3-0.5.

- **ε = 1e-10:** The diagonal pattern is significantly weakened, with attention weights more evenly distributed. Approximate values: Diagonal ~ 0.5-0.6, Upper/Lower off-diagonal ~ 0.4-0.6.

### Key Observations

- As epsilon (ε) decreases, the strength of the diagonal pattern in the heatmaps diminishes. This suggests that smaller perturbations to the Query, Key, or Value components lead to a more diffuse attention distribution.

- The diagonal pattern indicates that each position attends most strongly to itself, that is, to the element at the same index.

- The color scale shows that diagonal attention weights are highest when the Query, Key, or Value is modified with the largest epsilon value (5e-1), while off-diagonal weights are lowest there.
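
The "strength of the diagonal pattern" in these observations can be made concrete by comparing the mean diagonal weight with the mean off-diagonal weight. The following is a minimal sketch of that comparison. The function name `diagonal_contrast` and both sample matrices are hypothetical: the matrices are small synthetic examples chosen to resemble the approximate ranges above, not values read from the figure.

```python
def diagonal_contrast(weights):
    """Return (mean diagonal weight, mean off-diagonal weight) of a square matrix."""
    n = len(weights)
    if n < 2 or any(len(row) != n for row in weights):
        raise ValueError("expected a square matrix with at least 2 rows")
    diag = sum(weights[i][i] for i in range(n)) / n
    off = sum(
        weights[i][j] for i in range(n) for j in range(n) if i != j
    ) / (n * (n - 1))
    return diag, off


# Synthetic 3x3 examples (assumption: illustrative only, NOT figure data).
sharp = [
    [0.9, 0.3, 0.3],
    [0.3, 0.9, 0.3],
    [0.3, 0.3, 0.9],
]
diffuse = [
    [0.6, 0.5, 0.5],
    [0.5, 0.6, 0.5],
    [0.5, 0.5, 0.6],
]

for name, matrix in (("sharp", sharp), ("diffuse", diffuse)):
    diag, off = diagonal_contrast(matrix)
    print(f"{name}: diagonal={diag:.2f} off-diagonal={off:.2f} gap={diag - off:.2f}")
```

A larger gap between the two means corresponds to a more pronounced diagonal. Applied to the real weight matrices behind each panel, the same measure would allow the nine heatmaps to be compared numerically rather than by eye.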

### Interpretation

The heatmaps suggest that the epsilon value used to modify the Query, Key, or Value components changes how sharply attention is concentrated. The largest epsilon value (5e-1) preserves a strong diagonal pattern, where each position primarily attends to itself. The smallest epsilon value (1e-10) disrupts this pattern, leading to a more uniform attention distribution. This could indicate that the model becomes less focused and spreads its attention more evenly across all elements in the sequence when the components are modified with smaller values.

The weakening of the diagonal pattern with decreasing epsilon suggests a possible trade-off between stability and sensitivity. A strong diagonal pattern indicates a stable, concentrated attention distribution, while a more diffuse pattern suggests greater sensitivity to input variations. The choice of epsilon value could therefore influence the model's robustness and generalization ability. Overall, the image shows how epsilon-scaled modifications to the Query, Key, and Value vectors change the attention weights.
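
To make the mechanics concrete, the sketch below computes scaled dot-product attention weights, softmax(QKᵀ/√d), before and after modifying the queries. Several things here are assumptions, since the figure does not state them: the modification is modeled as additive Gaussian noise scaled by ε (the hypothetical helper `perturb`), the toy sizes `n` and `d` are arbitrary, and the queries are set equal to the keys only so that the baseline tends to favor the diagonal.

```python
import math
import random


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(queries, keys):
    """Row-wise softmax of Q K^T / sqrt(d). The values V are not involved here."""
    scale = math.sqrt(len(queries[0]))
    return [
        softmax([sum(a * b for a, b in zip(q, k)) / scale for k in keys])
        for q in queries
    ]


def perturb(vectors, epsilon, rng):
    """Assumed modification rule: add epsilon-scaled Gaussian noise to each entry."""
    return [[x + epsilon * rng.gauss(0.0, 1.0) for x in vec] for vec in vectors]


rng = random.Random(0)  # fixed seed so repeated runs match
n, d = 4, 8             # toy sequence length and head dimension (assumptions)
keys = [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(n)]
queries = [list(k) for k in keys]  # toy choice: queries equal keys

baseline = attention_weights(queries, keys)
for epsilon in (5e-1, 1e-3, 1e-10):
    modified = attention_weights(perturb(queries, epsilon, rng), keys)
    max_change = max(
        abs(a - b)
        for row_a, row_b in zip(modified, baseline)
        for a, b in zip(row_a, row_b)
    )
    print(f"epsilon={epsilon:g}: largest change in any weight = {max_change:.3e}")
```

Passing perturbed keys instead of perturbed queries gives the "Modifying Key" analogue. In this single-layer formula the weights depend only on Q and K, so modifying V alone would leave them unchanged; the "Modifying Value" row therefore presumably comes from a setup this sketch does not model. Whether the toy setup reproduces the trend described above depends on how ε actually enters the modification, which the figure does not specify.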