## Heatmap Grid: Attention Weight after Modifying Query, Key and Value

### Overview

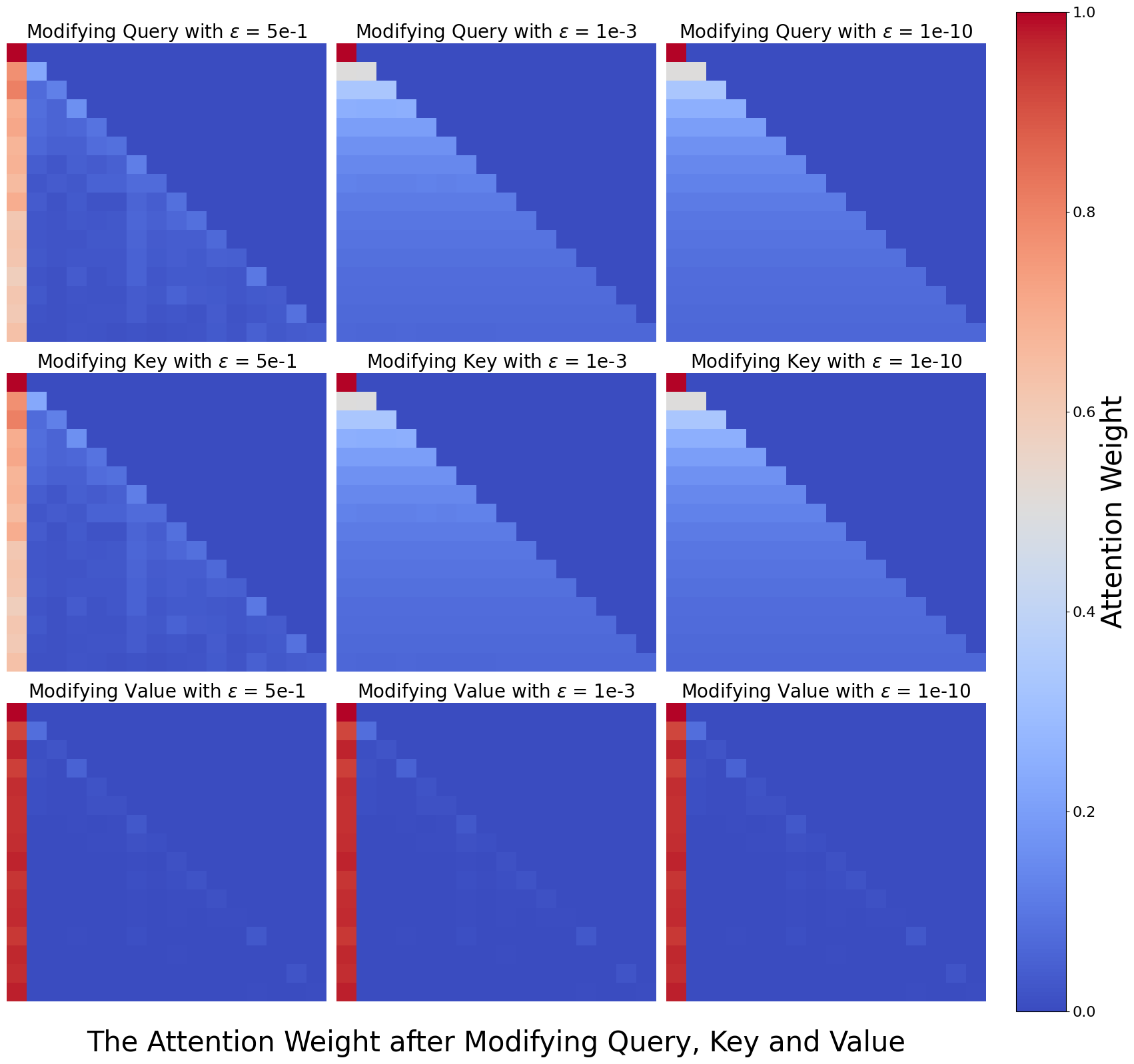

The image displays a 3x3 grid of square heatmaps, each visualizing an attention weight matrix. The overall title at the bottom reads: "The Attention Weight after Modifying Query, Key and Value". A vertical color bar on the right side of the grid serves as a legend for the "Attention Weight" scale.

### Components/Axes

* **Grid Structure:** 3 rows by 3 columns of individual heatmap plots.

* **Row Labels (Modification Type):**

    * Top Row: "Modifying Query"

    * Middle Row: "Modifying Key"

    * Bottom Row: "Modifying Value"

* **Column Labels (Epsilon Value):**

    * Left Column: `ε = 5e-1` (0.5)

    * Middle Column: `ε = 1e-3` (0.001)

    * Right Column: `ε = 1e-10` (0.0000000001)

* **Color Bar/Legend:** Located on the far right, spanning the full height of the grid.

    * **Title:** "Attention Weight"

    * **Scale:** Continuous gradient from 0.0 (bottom) to 1.0 (top).

    * **Color Mapping:**

        * 0.0: Dark Blue

        * ~0.2: Medium Blue

        * ~0.4: Light Blue / Grayish-Blue

        * ~0.6: Light Orange / Peach

        * ~0.8: Orange

        * 1.0: Dark Red

* **Heatmap Axes:** The individual heatmaps do not have labeled x or y axes. They represent a matrix where both dimensions likely correspond to sequence positions (e.g., token indices in a self-attention mechanism). The pattern is a lower-triangular matrix, indicating a causal or autoregressive attention mask where a position can only attend to itself and previous positions.
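The causal mask described in the last item can be illustrated with a minimal sketch. It assumes standard row-wise softmax attention; the function name `causal_attention_weights` and the 4x4 score values are hypothetical and are not read from the figure.

```python
import math

def causal_attention_weights(scores):
    """Row-wise softmax over a square score matrix with a causal mask.

    scores[i][j] is the raw (pre-softmax) score of query position i
    against key position j. Entries with j > i are masked out.
    """
    n = len(scores)
    weights = []
    for i in range(n):
        visible = scores[i][: i + 1]          # key positions 0..i only
        peak = max(visible)                   # subtract the max for stability
        exps = [math.exp(s - peak) for s in visible]
        total = sum(exps)
        # Masked (future) positions receive exactly zero weight.
        weights.append([e / total for e in exps] + [0.0] * (n - i - 1))
    return weights

# Hypothetical scores, chosen only for illustration.
scores = [[0.0, 1.0, 2.0, 3.0],
          [1.0, 0.0, 1.0, 2.0],
          [2.0, 1.0, 0.0, 1.0],
          [3.0, 2.0, 1.0, 0.0]]
weights = causal_attention_weights(scores)
for row in weights:
    print(" ".join(f"{w:.2f}" for w in row))
```

Every row sums to 1 over its visible positions, and everything above the diagonal is zero, which is the lower-triangular shape seen in all nine panels.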

### Detailed Analysis

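Each heatmap is a lower-triangular matrix. Because the axes are unlabeled, the reading below assumes the usual convention: each row is a "query" position (y-axis), each column is a "key" position (x-axis), and the color of a cell is the attention weight from that query to that key.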

**Row 1: Modifying Query**

* **ε = 5e-1 (Top-Left):** Shows a strong, sharp diagonal of high attention weights (red/orange) running from the top-left corner to the bottom-right corner. The first column also shows moderately high weights (light orange) in every row. The rest of the lower triangle is a gradient of blue, with weights decreasing with distance from both the diagonal and the first column.

* **ε = 1e-3 (Top-Middle):** The sharp diagonal persists but is slightly less intense. The high-weight region expands into a broader band along the diagonal. The first column remains prominent. The overall pattern is smoother than the ε=5e-1 case.

* **ε = 1e-10 (Top-Right):** Very similar to the ε=1e-3 plot. The diagonal band is well-defined and smooth. The distinction between this and the middle plot is minimal, suggesting a saturation effect for very small epsilon.

**Row 2: Modifying Key**

* **ε = 5e-1 (Middle-Left):** Pattern is strikingly similar to "Modifying Query, ε=5e-1". A sharp diagonal and a prominent first column are visible.

* **ε = 1e-3 (Middle-Middle):** Similar to its counterpart in the Query row. A smooth, broad diagonal band of higher attention weights.

* **ε = 1e-10 (Middle-Right):** Again, nearly identical to the Query row's ε=1e-10 plot. A well-defined diagonal band.

**Row 3: Modifying Value**

* **ε = 5e-1 (Bottom-Left):** **This pattern is fundamentally different.** The entire first column is a solid, dark red band (attention weight ≈ 1.0). The rest of the lower triangle is almost entirely dark blue (weight ≈ 0.0), with only a very faint, sparse diagonal of slightly lighter blue cells.

* **ε = 1e-3 (Bottom-Middle):** Identical pattern to the ε=5e-1 case for Value modification. Solid red first column, near-zero weights elsewhere.

* **ε = 1e-10 (Bottom-Right):** Identical pattern to the other Value modification plots. No visible change with decreasing epsilon.

### Key Observations

1. **Two Distinct Patterns:** The grid reveals two primary attention patterns. Modifications to **Query** and **Key** produce a **diagonal-band pattern**, where attention is focused on recent tokens (the diagonal) and, to a lesser extent, the very first token. Modifications to **Value** produce a **first-column pattern**, where nearly all attention is concentrated on the first token in the sequence and only a faint trace remains on the diagonal.

2. **Effect of Epsilon (ε):** For Query and Key modifications, decreasing epsilon from 5e-1 to 1e-3 makes the diagonal slightly less intense and spreads it into a broader, smoother band. A further decrease to 1e-10 shows negligible change, indicating the effect plateaus. For Value modifications, epsilon has no visible effect on the resulting pattern.

3. **Causal Mask:** All heatmaps are lower-triangular with the diagonal included, which is consistent with a causal (autoregressive) attention mask: each position attends only to itself and earlier positions, so no information flows from future positions.

4. **First Token Bias:** Even in the diagonal patterns (Query/Key mods), the first column (attention to the first token) shows consistently higher weights than other non-diagonal positions.
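The two patterns in these observations can be told apart with two simple summary statistics. The sketch below is illustrative only: the helper names `first_column_mass` and `diagonal_mass` are hypothetical, and the two 4x4 matrices are made-up examples that mimic the described patterns rather than values extracted from the figure.

```python
def first_column_mass(weights):
    """Mean attention that each query row gives to key position 0.

    Row 0 is excluded: under a causal mask it can only attend to itself.
    """
    rows = weights[1:]
    return sum(row[0] for row in rows) / len(rows)

def diagonal_mass(weights):
    """Mean attention that each query row gives to its own position (row 0 excluded)."""
    n = len(weights)
    return sum(weights[i][i] for i in range(1, n)) / (n - 1)

# Hypothetical matrix mimicking the diagonal-band pattern (Query/Key rows).
diagonal_band = [[1.0, 0.0, 0.0, 0.0],
                 [0.3, 0.7, 0.0, 0.0],
                 [0.2, 0.2, 0.6, 0.0],
                 [0.2, 0.1, 0.2, 0.5]]

# Hypothetical matrix mimicking the first-column pattern (Value row).
first_column = [[1.0, 0.0, 0.0, 0.0],
                [0.96, 0.04, 0.0, 0.0],
                [0.96, 0.0, 0.04, 0.0],
                [0.96, 0.0, 0.0, 0.04]]

for name, m in [("diagonal_band", diagonal_band), ("first_column", first_column)]:
    print(name, round(first_column_mass(m), 3), round(diagonal_mass(m), 3))
```

A matrix of the first kind has most of its mass on the diagonal with a secondary share in column 0, while a matrix of the second kind has almost all of its mass in column 0.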

### Interpretation

This visualization demonstrates the sensitivity of a transformer's self-attention mechanism to targeted modifications of its core components (Query, Key, Value projections). The results suggest:

* **Query/Key Modifications Control "Where to Look":** Altering the Query or Key vectors primarily influences the *distribution* of attention across the sequence. The diagonal pattern indicates that these modifications preserve or enhance the model's tendency for local, sequential processing (attending to the most recent token). The persistence of the first-column bias suggests the first token (often a beginning-of-sequence token in causally masked models) holds inherent importance.

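* **Value Modifications Control "What is Attended To":** Modifying the Value vectors has a drastic and categorical effect in these plots. The attention distribution collapses so that the model attends almost exclusively to the first token, regardless of the query. In standard scaled dot-product attention the weights of a single layer are computed from the queries and keys alone, so a change of this kind presumably arises indirectly, for example through later layers that consume the altered outputs; the figure itself does not show the mechanism. Either way, the result implies that the Value projection is critical for determining the *content* that is aggregated, and that disrupting it can lead to a degenerate attention state where only a single, fixed position is used.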

* **Robustness and Saturation:** The system shows robustness to very small perturbations (ε=1e-3 vs. 1e-10), as the patterns stabilize. The stark difference between the Value modification results and the others highlights a potential asymmetry in how these components contribute to the attention output. This could be relevant for research in model editing, interpretability, or adversarial attacks on transformers.
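The asymmetry between the components can be made concrete for a single layer. The sketch below assumes standard single-head scaled dot-product attention with a causal mask and a hypothetical modification that adds `eps` to every entry of a matrix; the figure does not state how its modification is applied, so `perturb`, the toy `Q`, `K`, `V` values, and `eps = 0.5` are all assumptions.

```python
import math

def softmax(xs):
    peak = max(xs)                            # subtract the max for stability
    exps = [math.exp(x - peak) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def causal_attention(Q, K, V):
    """Single-head causal attention; returns (weights, outputs)."""
    n, d = len(Q), len(Q[0])
    weights, outputs = [], []
    for i in range(n):
        # Scores use Q and K only; key positions after i are masked out.
        scores = [sum(q * k for q, k in zip(Q[i], K[j])) / math.sqrt(d)
                  for j in range(i + 1)]
        w = softmax(scores) + [0.0] * (n - i - 1)
        weights.append(w)
        # The output is the weighted sum of value vectors.
        outputs.append([sum(w[j] * V[j][c] for j in range(n))
                        for c in range(len(V[0]))])
    return weights, outputs

def perturb(M, eps):
    """Hypothetical modification: add eps to every entry of M."""
    return [[x + eps for x in row] for row in M]

# Toy inputs, chosen only for illustration.
Q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
K = [[1.0, 0.0], [0.0, 2.0], [1.0, -1.0]]
V = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
eps = 0.5

base_w, base_out = causal_attention(Q, K, V)
w_v, out_v = causal_attention(Q, K, perturb(V, eps))
w_q, out_q = causal_attention(perturb(Q, eps), K, V)

print("weights change when V is perturbed:", w_v != base_w)
print("outputs change when V is perturbed:", out_v != base_out)
print("weights change when Q is perturbed:", w_q != base_w)
```

Within this one layer, perturbing `V` changes the outputs but leaves the weights untouched, whereas perturbing `Q` changes the weights directly. In a multi-layer model the altered outputs feed the queries and keys of later layers, which is one way a Value modification could reshape a downstream attention map.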