## Chart Type: Line Chart with Confidence Intervals: Accuracy Over Iterations for Different Task Types

### Overview

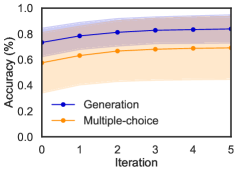

The image is a 2D line chart of "Accuracy (%)" (y-axis) against "Iteration" (x-axis). Two data series, "Generation" and "Multiple-choice," are plotted, each as a line of mean accuracy with a shaded band indicating a confidence interval or other measure of variability around that mean. The chart shows how accuracy on the two task types changes over six iterations (0 through 5).

### Components/Axes

* **X-axis Label**: "Iteration"

* **X-axis Range**: From 0 to 5.

* **X-axis Major Ticks**: 0, 1, 2, 3, 4, 5.

* **Y-axis Label**: "Accuracy (%)"

* **Y-axis Range**: From 0.0 to 1.0. The tick values are fractions, even though the axis label carries a "%" sign.

* **Y-axis Major Ticks**: 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

* **Legend**: Located in the bottom-center of the plot area.

  * **Blue line with circular markers**: Labeled "Generation".

  * **Orange line with circular markers**: Labeled "Multiple-choice".

### Detailed Analysis

The chart presents two data series, each showing an increasing trend in accuracy with iterations, eventually plateauing.

1. **Generation (Blue Line with Circular Markers)**:

   * **Visual Trend**: This line starts at a relatively high accuracy and increases steadily, then appears to plateau at a higher accuracy level. The shaded region around it is light purple/blue.

   * **Approximate Data Points**:

     * Iteration 0: Accuracy is approximately 0.75.

     * Iteration 1: Accuracy is approximately 0.78.

     * Iteration 2: Accuracy is approximately 0.82.

     * Iteration 3: Accuracy is approximately 0.84.

     * Iteration 4: Accuracy is approximately 0.84.

     * Iteration 5: Accuracy is approximately 0.84.

   * **Confidence Interval (Light Purple/Blue Shaded Area)**: This region starts roughly from 0.70 to 0.80 at Iteration 0, widens slightly to cover about 0.75 to 0.88 around Iteration 2, and then narrows to approximately 0.78 to 0.90 at Iteration 5.

2. **Multiple-choice (Orange Line with Circular Markers)**:

   * **Visual Trend**: This line starts at a lower accuracy than "Generation" and shows a consistent increase, also plateauing, but at a lower overall accuracy level. The shaded region around it is light orange.

   * **Approximate Data Points**:

     * Iteration 0: Accuracy is approximately 0.58.

     * Iteration 1: Accuracy is approximately 0.63.

     * Iteration 2: Accuracy is approximately 0.67.

     * Iteration 3: Accuracy is approximately 0.68.

     * Iteration 4: Accuracy is approximately 0.69.

     * Iteration 5: Accuracy is approximately 0.69.

   * **Confidence Interval (Light Orange Shaded Area)**: This region spans roughly 0.35 to 0.75 at Iteration 0, narrows to about 0.55 to 0.78 around Iteration 2, and narrows further to approximately 0.60 to 0.75 at Iteration 5.
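The approximate values above can be turned back into a rough reconstruction of the chart. The following is a minimal sketch, assuming matplotlib is installed. The variable names and the `build_chart` helper are illustrative, the colors are matplotlib's stock blue and orange rather than the figure's exact shades, and the shaded bands are drawn only through the three iterations (0, 2, 5) for which band edges are reported, so their shape between those points is a straight-line assumption.

```python
import matplotlib
matplotlib.use("Agg")  # headless backend so the sketch runs without a display
import matplotlib.pyplot as plt

# Approximate mean accuracies read off the chart (visual estimates).
iterations = [0, 1, 2, 3, 4, 5]
generation = [0.75, 0.78, 0.82, 0.84, 0.84, 0.84]
multiple_choice = [0.58, 0.63, 0.67, 0.68, 0.69, 0.69]

# Band edges are only reported at iterations 0, 2, and 5.
band_iterations = [0, 2, 5]
generation_low, generation_high = [0.70, 0.75, 0.78], [0.80, 0.88, 0.90]
multiple_choice_low, multiple_choice_high = [0.35, 0.55, 0.60], [0.75, 0.78, 0.75]


def build_chart():
    """Draw both series with markers and shaded bands; return (fig, ax)."""
    fig, ax = plt.subplots()
    ax.plot(iterations, generation, marker="o", color="tab:blue", label="Generation")
    ax.plot(iterations, multiple_choice, marker="o", color="tab:orange",
            label="Multiple-choice")
    # Straight segments between the three reported band positions (assumption).
    ax.fill_between(band_iterations, generation_low, generation_high,
                    color="tab:blue", alpha=0.2)
    ax.fill_between(band_iterations, multiple_choice_low, multiple_choice_high,
                    color="tab:orange", alpha=0.2)
    ax.set_xlabel("Iteration")
    ax.set_ylabel("Accuracy (%)")
    ax.set_xlim(0, 5)
    ax.set_ylim(0.0, 1.0)
    ax.set_xticks(iterations)
    ax.set_yticks([0.0, 0.2, 0.4, 0.6, 0.8, 1.0])
    ax.legend(loc="lower center")
    return fig, ax


fig, ax = build_chart()
```

Calling `fig.savefig("accuracy_over_iterations.png")` afterwards would write the reconstruction to disk; the file name is arbitrary.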

### Key Observations

* The "Generation" task consistently achieves higher accuracy than the "Multiple-choice" task across all iterations shown.

* Both task types demonstrate an improvement in accuracy as the number of iterations increases.

* The largest gains in accuracy for both tasks occur within the first three iterations. After Iteration 3, "Generation" stays at about 0.84 and "Multiple-choice" rises only from about 0.68 to 0.69, indicating diminishing returns from further iterations.

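* The mean lines stay roughly 0.15 to 0.17 apart at every iteration. The shaded bands overlap slightly at early iterations (about 0.70 to 0.75 at Iteration 0 and 0.75 to 0.78 at Iteration 2) and are fully separated by Iteration 5, which is consistent with a real performance difference between the two task types.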

* The "Generation" method starts with a higher baseline accuracy (approx. 0.75) compared to "Multiple-choice" (approx. 0.58).

* The peak accuracy for "Generation" is around 0.84, while for "Multiple-choice" it is around 0.69.
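The plateau and the gap between the two series can be checked with simple arithmetic on the approximate means listed in the Detailed Analysis. This is a minimal sketch with illustrative names (`step_gains`, `gaps`); results are rounded to two decimals because the inputs are themselves visual estimates.

```python
# Approximate mean accuracies per iteration (0 through 5), read off the chart.
generation = [0.75, 0.78, 0.82, 0.84, 0.84, 0.84]
multiple_choice = [0.58, 0.63, 0.67, 0.68, 0.69, 0.69]


def step_gains(values):
    """Return the change in accuracy between consecutive iterations."""
    return [round(b - a, 2) for a, b in zip(values, values[1:])]


gen_gains = step_gains(generation)
mc_gains = step_gains(multiple_choice)

# Gap between the two series at each iteration.
gaps = [round(g - m, 2) for g, m in zip(generation, multiple_choice)]

print("Generation gains:     ", gen_gains)
print("Multiple-choice gains:", mc_gains)
print("Gap per iteration:    ", gaps)
```

With these estimates, `gen_gains` is `[0.03, 0.04, 0.02, 0.0, 0.0]` and `mc_gains` is `[0.05, 0.04, 0.01, 0.01, 0.0]`, so nearly all of the improvement falls within the first three steps, and the gap between the series stays between 0.15 and 0.17.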

### Interpretation

The data suggests that the system or model being evaluated performs markedly better on "Generation" tasks than on "Multiple-choice" tasks, by roughly 0.15 to 0.17 in mean accuracy. This advantage is present from the initial iteration and is maintained throughout the training or evaluation process. Both task types benefit from additional iterations, showing a learning curve where accuracy improves over time. The improvement is not indefinite, however: both curves flatten out, indicating that the system reaches a performance ceiling after a few iterations.

The separation of the mean accuracy lines, together with shaded bands that overlap only slightly at early iterations and not at all by Iteration 5, suggests that the performance difference between "Generation" and "Multiple-choice" is robust. The chart does not state what the shaded regions represent, however, so it does not by itself establish statistical significance. The gap could mean that the underlying model architecture, training data, or task formulation is inherently better suited to "Generation" tasks. Alternatively, "Generation" tasks might be less ambiguous or provide clearer signals for learning in this specific context. The plateau suggests that beyond 3 iterations the system has largely converged under the given conditions for both task types. Further research might investigate why "Generation" tasks yield higher accuracy and whether "Multiple-choice" performance can be improved through different optimization strategies or model architectures.
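Whether the shaded bands actually separate can be checked against the approximate band edges given in the Detailed Analysis. The sketch below is illustrative only: the `overlap` helper and the dictionary names are assumptions, and the band edges are visual estimates available at just three iterations.

```python
# Approximate (lower, upper) band edges at the iterations where they are reported.
generation_band = {0: (0.70, 0.80), 2: (0.75, 0.88), 5: (0.78, 0.90)}
multiple_choice_band = {0: (0.35, 0.75), 2: (0.55, 0.78), 5: (0.60, 0.75)}


def overlap(a, b):
    """Return the length of the overlap of two closed intervals (0.0 if disjoint)."""
    low = max(a[0], b[0])
    high = min(a[1], b[1])
    return round(max(0.0, high - low), 2)


overlaps = {
    iteration: overlap(generation_band[iteration], multiple_choice_band[iteration])
    for iteration in generation_band
}
print(overlaps)
```

With these estimates the bands overlap by about 0.05 at Iteration 0 and 0.03 at Iteration 2, and are disjoint at Iteration 5. Band overlap is only a visual heuristic, not a formal significance test.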