
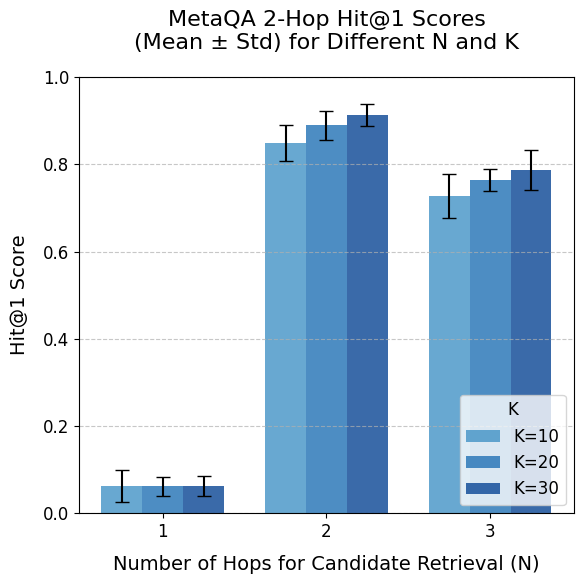

## Bar Chart: MetaQA 2-Hop Hit@1 Scores (Mean ± Std) for Different N and K

### Overview

This grouped bar chart shows the performance of a system (likely a question-answering model) on MetaQA 2-hop questions. The metric is the "Hit@1 Score," the fraction of questions for which the top-ranked answer is correct. The chart compares performance across the number of hops used for candidate retrieval (N) and across values of a parameter K. Each bar shows a mean score, with an error bar representing the standard deviation.
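As a rough illustration of how one bar and its error bar could be produced, the sketch below computes Hit@1 for several runs and summarizes them as mean and standard deviation. The toy questions, the run names, and the choice of the sample standard deviation are all assumptions; the chart does not say how many runs were used or which estimator was applied.

```python
from statistics import mean, stdev


def hit_at_1(ranked_predictions, gold_answers):
    """Fraction of questions whose top-ranked prediction is a gold answer."""
    hits = 0
    for ranked, gold in zip(ranked_predictions, gold_answers):
        # A question counts as a hit only if its first candidate is correct.
        if ranked and ranked[0] in gold:
            hits += 1
    return hits / len(gold_answers)


# Hypothetical toy data: two runs over the same three questions.
gold = [{"Tom Hanks"}, {"1994"}, {"Drama", "Romance"}]
run_a = [["Tom Hanks", "Tim Allen"], ["1995", "1994"], ["Drama"]]
run_b = [["Tom Hanks"], ["1994"], ["Romance", "Comedy"]]

scores = [hit_at_1(run_a, gold), hit_at_1(run_b, gold)]

# Bar height and error-bar length for one (N, K) configuration.
# stdev() is the sample standard deviation; this is an assumption.
bar_mean, bar_std = mean(scores), stdev(scores)
```

Here `run_a` misses the second question because the correct year is ranked second, which is exactly the case Hit@1 penalizes.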

### Components/Axes

* **Chart Title:** "MetaQA 2-Hop Hit@1 Scores (Mean ± Std) for Different N and K" (Position: Top center).

* **Y-Axis:**

    * **Label:** "Hit@1 Score" (Position: Left side, rotated vertically).

    * **Scale:** Linear scale from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:**

    * **Label:** "Number of Hops for Candidate Retrieval (N)" (Position: Bottom center).

    * **Categories:** Three discrete values: 1, 2, and 3.

* **Legend:**

    * **Title:** "K" (Position: Inside the plot area, bottom-right corner).

    * **Categories & Colors:**

        * `K=10`: Light blue bar.

        * `K=20`: Medium blue bar.

        * `K=30`: Dark blue bar.

* **Data Representation:** For each value of N (1, 2, 3), there is a group of three bars, one for each K value (10, 20, 30), ordered from left to right as K=10, K=20, K=30 within each group. Each bar has a black error bar extending vertically from its top.
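A chart with this layout could be redrawn along the following lines. This is only a sketch: the means and standard deviations are the approximate readings listed under Detailed Analysis, the color names and bar width are guesses, and `grouped_bar_positions` and `plot_chart` are hypothetical helper names. It assumes matplotlib is available for the drawing step.

```python
# Approximate readings per K, ordered by N = 1, 2, 3 (read off the chart by eye).
MEANS = {10: [0.06, 0.85, 0.73], 20: [0.06, 0.89, 0.77], 30: [0.06, 0.91, 0.79]}
STDS = {10: [0.04, 0.04, 0.05], 20: [0.03, 0.03, 0.03], 30: [0.03, 0.03, 0.04]}


def grouped_bar_positions(n_groups, n_bars, bar_width=0.25):
    """Return x positions indexed [bar][group], with group g centred on x = g."""
    positions = []
    for bar in range(n_bars):
        # Shift each bar left or right of the group centre.
        offset = (bar - (n_bars - 1) / 2) * bar_width
        positions.append([group + offset for group in range(n_groups)])
    return positions


def plot_chart(means=MEANS, stds=STDS, n_values=(1, 2, 3), bar_width=0.25):
    """Draw the grouped bar chart and return the matplotlib figure."""
    import matplotlib.pyplot as plt  # imported lazily; only needed for drawing

    k_values = sorted(means)
    colors = ["lightskyblue", "royalblue", "navy"]  # assumed shades of blue
    xs = grouped_bar_positions(len(n_values), len(k_values), bar_width)

    fig, ax = plt.subplots()
    for i, k in enumerate(k_values):
        ax.bar(xs[i], means[k], bar_width, yerr=stds[k], capsize=3,
               color=colors[i], label=f"K={k}")
    ax.set_xticks(range(len(n_values)))
    ax.set_xticklabels([str(n) for n in n_values])
    ax.set_ylim(0.0, 1.0)
    ax.set_xlabel("Number of Hops for Candidate Retrieval (N)")
    ax.set_ylabel("Hit@1 Score")
    ax.set_title("MetaQA 2-Hop Hit@1 Scores (Mean ± Std) for Different N and K")
    ax.legend(title="K", loc="lower right")
    return fig
```

Calling `plot_chart().savefig("chart.png")` would write the figure to disk; the file name is arbitrary.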

### Detailed Analysis

**Data Points (Approximate Mean Values with Visual Uncertainty from Error Bars):**

* **For N = 1:**

    * **Trend:** All scores are very low, clustered near the bottom of the chart.

    * **K=10 (Light Blue):** Mean ≈ 0.06. Error bar spans approximately 0.02 to 0.10.

    * **K=20 (Medium Blue):** Mean ≈ 0.06. Error bar spans approximately 0.03 to 0.09.

    * **K=30 (Dark Blue):** Mean ≈ 0.06. Error bar spans approximately 0.03 to 0.09.

    * **Observation:** Performance is uniformly poor and nearly identical across all K values for a single hop.

* **For N = 2:**

    * **Trend:** A dramatic increase in performance compared to N=1. Scores are the highest on the chart. There is a slight positive trend with increasing K.

    * **K=10 (Light Blue):** Mean ≈ 0.85. Error bar spans approximately 0.81 to 0.89.

    * **K=20 (Medium Blue):** Mean ≈ 0.89. Error bar spans approximately 0.86 to 0.92.

    * **K=30 (Dark Blue):** Mean ≈ 0.91. Error bar spans approximately 0.88 to 0.94.

    * **Observation:** This is the peak performance configuration. The mean score increases monotonically with K, and the error bars are relatively small, indicating consistent high performance.

* **For N = 3:**

    * **Trend:** Performance decreases compared to N=2 but remains significantly higher than N=1. The positive trend with increasing K persists.

    * **K=10 (Light Blue):** Mean ≈ 0.73. Error bar spans approximately 0.68 to 0.78.

    * **K=20 (Medium Blue):** Mean ≈ 0.77. Error bar spans approximately 0.74 to 0.80.

    * **K=30 (Dark Blue):** Mean ≈ 0.79. Error bar spans approximately 0.75 to 0.83.

    * **Observation:** Performance drops from the N=2 peak but is still robust. The standard deviation (error bar length) appears slightly larger for K=10 compared to K=20 and K=30 at this N.
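The readings above can be transcribed into a small table to recover the standard deviation implied by each error bar. The sketch assumes each error bar spans the mean plus and minus one standard deviation, so the implied value is half the span. All numbers are visual estimates, and `implied_std` and `relative_std` are illustrative helper names.

```python
# (N, K) -> (mean, error-bar low, error-bar high), read off the chart by eye.
READINGS = {
    (1, 10): (0.06, 0.02, 0.10),
    (1, 20): (0.06, 0.03, 0.09),
    (1, 30): (0.06, 0.03, 0.09),
    (2, 10): (0.85, 0.81, 0.89),
    (2, 20): (0.89, 0.86, 0.92),
    (2, 30): (0.91, 0.88, 0.94),
    (3, 10): (0.73, 0.68, 0.78),
    (3, 20): (0.77, 0.74, 0.80),
    (3, 30): (0.79, 0.75, 0.83),
}


def implied_std(low, high):
    """Half the error-bar span, i.e. the std if the bar is mean ± 1 std."""
    return round((high - low) / 2, 3)


def relative_std(mean, low, high):
    """Implied std as a fraction of the mean."""
    return round(implied_std(low, high) / mean, 2)


# (N, K) -> (absolute std, std relative to the mean)
SPREAD = {
    key: (implied_std(low, high), relative_std(mean, low, high))
    for key, (mean, low, high) in READINGS.items()
}
```

Under these readings the implied standard deviations all fall between 0.03 and 0.05, while the spread relative to the mean differs widely between N=1 and the other two settings.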

### Key Observations

1. **Dominant Effect of N:** The number of hops (N) has by far the largest impact on performance. Mean Hit@1 jumps from about 0.06 at N=1 to between 0.85 and 0.91 at N=2, then falls by roughly 0.12 to between 0.73 and 0.79 at N=3.

2. **Secondary Effect of K:** At N=2 and N=3, raising K from 10 to 30 lifts the mean Hit@1 score by about 0.06, a consistent but much smaller effect than that of N. At N=1, K makes no visible difference.

3. **Optimal Configuration:** The highest mean score (≈0.91) is achieved with N=2 and K=30.

4. **Low Performance at N=1:** The system performs very poorly (scores < 0.1) when only one hop is used for candidate retrieval, regardless of the K value.

5. **Error Bar Consistency:** The error bars are similar in absolute size across all configurations, with half-widths of roughly 0.03 to 0.05. Relative to the mean, however, the spread is large at N=1 (about half to two-thirds of the score) and small at N=2 and N=3, so the high-scoring configurations are also the most stable in relative terms.
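The relative size of the N and K effects and the best configuration can be checked directly against the approximate means. The following is a minimal sketch using the values read off the chart; the helper names are made up for illustration.

```python
# Approximate mean Hit@1 per N, then per K (read off the chart by eye).
MEANS = {
    1: {10: 0.06, 20: 0.06, 30: 0.06},
    2: {10: 0.85, 20: 0.89, 30: 0.91},
    3: {10: 0.73, 20: 0.77, 30: 0.79},
}


def best_config(means):
    """Return the (N, K) pair with the highest mean score."""
    pairs = [(n, k) for n in means for k in means[n]]
    return max(pairs, key=lambda nk: means[nk[0]][nk[1]])


def range_over_n(means, k):
    """Spread of the mean across N values at a fixed K."""
    values = [means[n][k] for n in means]
    return round(max(values) - min(values), 2)


def range_over_k(means, n):
    """Spread of the mean across K values at a fixed N."""
    values = list(means[n].values())
    return round(max(values) - min(values), 2)


best = best_config(MEANS)                        # highest-scoring (N, K)
effect_of_n = range_over_n(MEANS, 30)            # varying N at K=30
effect_of_k = {n: range_over_k(MEANS, n) for n in MEANS}  # varying K per N
```

With these readings, changing N moves the mean by about 0.85 at K=30, while changing K moves it by at most about 0.06 at any N.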

### Interpretation

The data suggests a clear narrative about the retrieval mechanism in this 2-hop QA task:

* **The Critical Role of Multi-Hop Retrieval:** The near-total failure at N=1 indicates that single-hop candidate retrieval is fundamentally insufficient for this task. The system likely needs at least two hops (N=2) to reach the entities required to answer 2-hop questions. The drop of roughly 0.12 at N=3 might indicate that extending retrieval to a third hop adds noise or irrelevant candidates, degrading performance relative to the two-hop setting.

* **The Value of a Larger Candidate Pool (K):** Increasing K (presumably the number of candidates kept during retrieval; the chart does not define it) raises the mean score at both N=2 and N=3. This suggests that casting a wider net during retrieval increases the likelihood of capturing the correct answer. The gains show diminishing returns (about +0.04 from K=10 to K=20 versus +0.02 from K=20 to K=30), although these differences are comparable in size to the error bars and should be read with caution.

* **System Behavior:** The system appears well suited to the matched setting (N=2), where it achieves high and stable accuracy. The performance profile is logical: too little retrieval (N=1) fails, retrieval matched to the question depth (N=2) succeeds, and excessive retrieval (N=3) costs about 0.12 in Hit@1. The consistent benefit of larger K suggests the retrieval module's recall is a key performance driver.
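The effect of K can be examined more closely by computing the step-by-step gains in the mean and checking whether the error bars of neighbouring K values overlap. This is a sketch on the approximate readings only; overlapping one-standard-deviation bars are a rough visual cue, not a significance test, and the helper names are illustrative.

```python
# N -> K -> (mean, error-bar low, error-bar high), read off the chart by eye.
BARS = {
    2: {10: (0.85, 0.81, 0.89), 20: (0.89, 0.86, 0.92), 30: (0.91, 0.88, 0.94)},
    3: {10: (0.73, 0.68, 0.78), 20: (0.77, 0.74, 0.80), 30: (0.79, 0.75, 0.83)},
}


def marginal_gains(n):
    """Gain in mean Hit@1 for each step up in K at a fixed N."""
    ks = sorted(BARS[n])
    return [round(BARS[n][b][0] - BARS[n][a][0], 2) for a, b in zip(ks, ks[1:])]


def intervals_overlap(a, b):
    """True if two (low, high) ranges share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


def adjacent_bars_overlap(n):
    """True if every pair of neighbouring K values has overlapping error bars."""
    ks = sorted(BARS[n])
    return all(
        intervals_overlap(BARS[n][a][1:], BARS[n][b][1:])
        for a, b in zip(ks, ks[1:])
    )


gains = {n: marginal_gains(n) for n in BARS}
overlap = {n: adjacent_bars_overlap(n) for n in BARS}
```

On these readings the second step in K yields half the gain of the first at both N=2 and N=3, and the error bars of neighbouring K values overlap in every case, so the K trend is suggestive rather than conclusive.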

**In summary, the chart shows that for MetaQA 2-hop questions, the best of the tested configurations uses two hops (N=2) with the largest candidate pool tested (K=30), yielding a mean Hit@1 score of approximately 0.91.**