## Diagram: LLM Response Generation Flowchart

### Overview

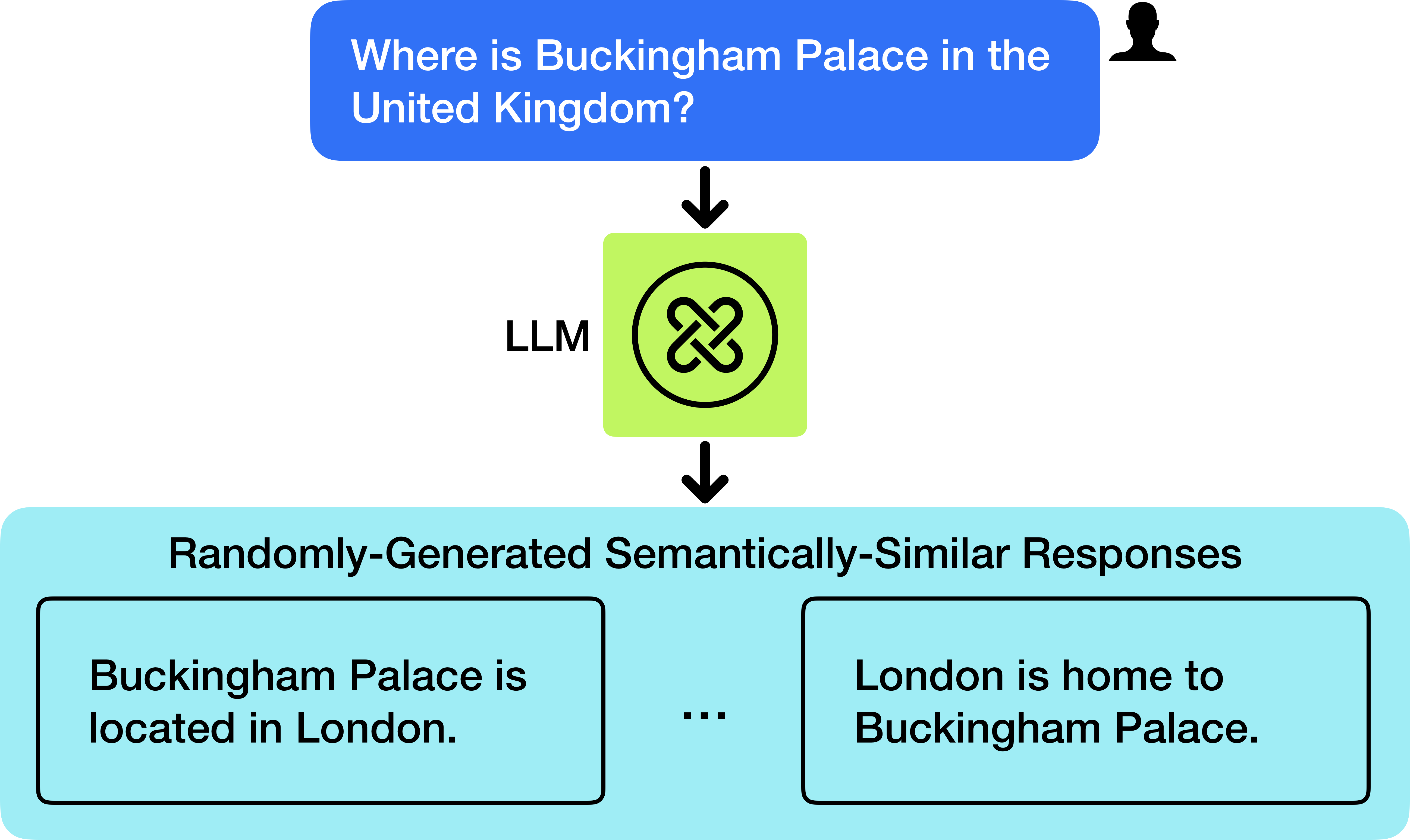

This image is a flowchart illustrating the process of a Large Language Model (LLM) generating multiple semantically similar responses to a single user query. The diagram uses a top-down flow with distinct colored blocks and connecting arrows to represent the stages of input, processing, and output.

### Components/Axes

The diagram is structured into three primary horizontal sections, connected by downward-pointing black arrows.

1. **Top Section (User Input):**

   * **Component:** A blue, rounded rectangular speech bubble.

   * **Position:** Top-center of the image.

   * **Content:** Contains the text: "Where is Buckingham Palace in the United Kingdom?"

   * **Associated Icon:** A black silhouette of a person's head and shoulders is positioned to the right of the speech bubble.

2. **Middle Section (Processing):**

   * **Component:** A light green square.

   * **Position:** Center of the image, below the user input bubble.

   * **Label:** The text "LLM" is placed to the left of the square.

   * **Icon:** Inside the green square is a black circular icon containing a stylized, interlocking knot or loop symbol.

3. **Bottom Section (Output):**

   * **Component:** A large, light cyan rectangular container.

   * **Position:** Bottom of the image, spanning most of the width.

   * **Title:** The text "Randomly-Generated Semantically-Similar Responses" is centered at the top of this container.

   * **Content:** Inside the container are two visible black-bordered rectangular boxes, separated by an ellipsis ("..."), indicating additional, unseen responses.

     * **Left Response Box:** Contains the text: "Buckingham Palace is located in London."

     * **Right Response Box:** Contains the text: "London is home to Buckingham Palace."

### Detailed Analysis

The flowchart depicts a linear, three-step process:

1. **Input:** A specific factual question is posed by a user.

2. **Processing:** The question is fed into an LLM, represented by a generic processing icon.

3. **Output:** The LLM produces a set of responses. The diagram explicitly shows two example outputs and implies more exist via the ellipsis. Both examples are factually correct and convey the same core information (Buckingham Palace is in London) but use different syntactic structures and sentence subjects.
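The distinction in step 3 between identical meaning and different wording can be made concrete with a crude check on the two strings from the figure. This is a minimal sketch: the entity list `KEY_ENTITIES` and the helper names are hypothetical, and substring matching is only a lexical stand-in for a real semantic-equivalence test such as an embedding comparison or a natural-language-inference model.

```python
# The two example responses shown in the figure.
responses = [
    "Buckingham Palace is located in London.",
    "London is home to Buckingham Palace.",
]

# Hypothetical "core facts"; a real check would compare meaning, not substrings.
KEY_ENTITIES = ("Buckingham Palace", "London")


def first_word(sentence: str) -> str:
    """Return the first whitespace-separated token (a rough proxy for the subject)."""
    return sentence.split()[0]


def mentions_all(sentence: str, entities: tuple) -> bool:
    """True if every entity string appears verbatim in the sentence."""
    return all(entity in sentence for entity in entities)


identical = responses[0] == responses[1]
subjects = [first_word(r) for r in responses]
same_facts = all(mentions_all(r, KEY_ENTITIES) for r in responses)

print(f"identical strings: {identical}")   # identical strings: False
print(f"leading words: {subjects}")         # leading words: ['Buckingham', 'London']
print(f"same key entities: {same_facts}")   # same key entities: True
```

Substring matching would also accept "Buckingham Palace is not in London.", which is why this check can only illustrate the idea, not verify paraphrases.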

### Key Observations

* **Semantic Similarity, Not Identity:** The two responses shown are paraphrases: they are different strings that share the same meaning.

* **Random Generation:** The title explicitly describes the responses as "Randomly-Generated," indicating that the LLM's output is sampled rather than deterministic, so the same input can produce different responses on different runs.

* **Visual Hierarchy:** The use of distinct colors (blue for input, green for processor, cyan for output) and clear directional arrows creates an easy-to-follow visual narrative of the data flow.

* **Ellipsis as a Symbol:** The "..." between the response boxes is a critical component, representing an open-ended set of possible outputs beyond the two examples provided.
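The "Randomly-Generated" label usually reflects sampling during decoding, and a temperature parameter is a common way to control how much the output varies. The sketch below is a minimal illustration, not any particular model's decoder: it treats the two paraphrases as whole candidate outputs with made-up scores (`logits`), whereas real models sample one token at a time. At temperature 0 the choice is greedy and repeats every time; at temperature 1 repeated calls can return either paraphrase.

```python
import math
import random


def softmax_with_temperature(logits: list, temperature: float) -> list:
    """Convert raw scores to probabilities; lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def pick(options: list, logits: list, temperature: float, rng: random.Random) -> str:
    """Greedy choice when temperature == 0, otherwise sample from the softmax."""
    if temperature == 0:
        return options[max(range(len(options)), key=lambda i: logits[i])]
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(options, weights=probs, k=1)[0]


# Hypothetical scores for the two paraphrases from the figure.
options = [
    "Buckingham Palace is located in London.",
    "London is home to Buckingham Palace.",
]
logits = [1.2, 1.0]

rng = random.Random(0)
greedy = {pick(options, logits, 0.0, rng) for _ in range(20)}
sampled = {pick(options, logits, 1.0, rng) for _ in range(20)}
print(len(greedy))   # 1: greedy decoding always returns the top-scoring option
print(len(sampled))  # sampling can return either paraphrase
```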

### Interpretation

This diagram serves as a conceptual model for understanding a fundamental behavior of Large Language Models. It demonstrates that, for a given prompt, an LLM does not retrieve a single, fixed answer from a database. Instead, it *generates* a response, choosing each successive token from a probability distribution learned during training. This generative process introduces variability into the output: the model can produce multiple valid, semantically equivalent answers that differ only in phrasing.
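Token-by-token generation can be sketched with a toy next-token table. This is a hypothetical illustration: the "model" is a lookup built from the figure's two sentences (split into word-level tokens plus an `<end>` marker), and `generate` is an invented helper. Unlike a real LLM, which computes a probability for every token in its vocabulary with a neural network, this table can only reproduce its two source sentences; it shows the sampling loop, not how a model generalizes.

```python
import random
from collections import defaultdict

# Hypothetical "training data": the two paraphrases from the figure,
# split into word-level tokens with an explicit end marker.
EXAMPLES = [
    "Buckingham Palace is located in London .".split() + ["<end>"],
    "London is home to Buckingham Palace .".split() + ["<end>"],
]

# Toy next-token table: for every prefix seen in the examples, count which
# token followed it. The counts act as unnormalized probabilities.
next_counts = defaultdict(lambda: defaultdict(int))
for tokens in EXAMPLES:
    for i, token in enumerate(tokens):
        next_counts[tuple(tokens[:i])][token] += 1


def generate(rng: random.Random, max_tokens: int = 20) -> str:
    """Autoregressive loop: sample one token at a time, conditioned on the prefix."""
    prefix = []
    while len(prefix) < max_tokens:
        counts = next_counts[tuple(prefix)]
        candidates = list(counts)
        weights = list(counts.values())
        token = rng.choices(candidates, weights=weights, k=1)[0]
        if token == "<end>":
            break
        prefix.append(token)
    # Re-attach the full stop that was split off as its own token.
    return " ".join(prefix).replace(" .", ".")


rng = random.Random(7)
for _ in range(4):
    print(generate(rng))
```

The only random choice here is the first token ("Buckingham" or "London"); every later step has a single continuation, which is why each run yields one of the two complete paraphrases.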

The choice of a simple, factual query ("Where is Buckingham Palace...") highlights that this variability occurs even for straightforward questions with clear answers. The diagram conveys the core idea that interacting with an LLM involves a one-to-many relationship: a single input maps to a distribution of possible outputs. This is a key distinction from traditional search engines and other deterministic software.
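The one-to-many relationship can be contrasted with one-to-one behavior in a short sketch. Everything here is hypothetical: `LOOKUP` stands in for deterministic software, `DISTRIBUTIONS` holds invented probabilities, and the third paraphrase is a made-up stand-in for the responses hidden behind the figure's ellipsis. Repeated sampling with `collections.Counter` estimates the output distribution.

```python
import random
from collections import Counter

QUERY = "Where is Buckingham Palace in the United Kingdom?"

# One-to-one: a deterministic lookup returns the same string every time.
LOOKUP = {QUERY: "Buckingham Palace is located in London."}

# One-to-many: hypothetical output distribution for the same query. The first
# two strings come from the figure; the third is invented, as are all weights.
DISTRIBUTIONS = {
    QUERY: {
        "Buckingham Palace is located in London.": 0.5,
        "London is home to Buckingham Palace.": 0.3,
        "Buckingham Palace is in London.": 0.2,
    }
}


def lookup_answer(query: str) -> str:
    """Deterministic: identical input always yields identical output."""
    return LOOKUP[query]


def sample_answer(query: str, rng: random.Random) -> str:
    """Stochastic: draw one response according to the query's distribution."""
    dist = DISTRIBUTIONS[query]
    return rng.choices(list(dist), weights=list(dist.values()), k=1)[0]


n = 1000
rng = random.Random(42)
lookup_counts = Counter(lookup_answer(QUERY) for _ in range(n))
sample_counts = Counter(sample_answer(QUERY, rng) for _ in range(n))

print(len(lookup_counts))  # 1: one input, one output
for answer, count in sample_counts.most_common():
    # Empirical frequencies approximate the (invented) weights above.
    print(f"{count / n:.2f}  {answer}")
```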