## Diagram: LLM Question and Response Generation

### Overview

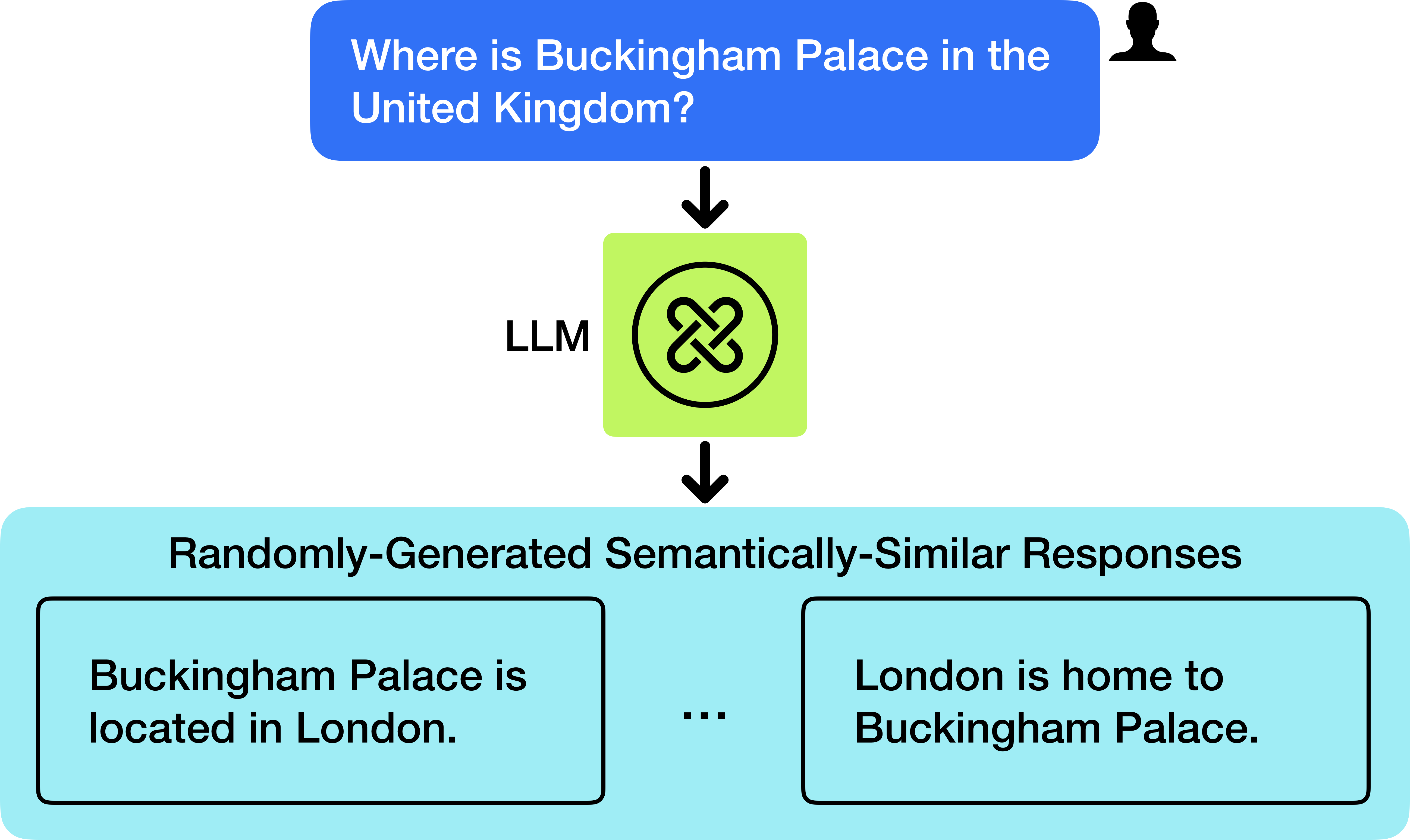

The image is a diagram illustrating the process of a Large Language Model (LLM) generating semantically similar responses to a question. It shows a user query, the LLM processing the query, and the resulting generated responses.

### Components/Axes

* **Top:** A blue rounded rectangle containing the question: "Where is Buckingham Palace in the United Kingdom?". A black silhouette of a person's head is located to the right of the question box.

* **Middle:** A green square containing a symbol resembling intertwined links, labeled "LLM" to the left of the square. An arrow points downwards from the question box to this square.

* **Bottom:** A light blue rounded rectangle labeled "Randomly-Generated Semantically-Similar Responses". An arrow points downwards from the green square to this rectangle. Inside this rectangle are two black-bordered boxes. The left box contains the text "Buckingham Palace is located in London." The right box contains the text "London is home to Buckingham Palace." Between the two boxes is an ellipsis (...).

### Content Details

* **Question:** "Where is Buckingham Palace in the United Kingdom?"

* **LLM Processing:** The LLM processes the question. The symbol inside the green square is an abstract representation of this processing.

* **Responses:**

  * "Buckingham Palace is located in London."

  * "London is home to Buckingham Palace."

  * The ellipsis between the two boxes indicates that further responses may be generated.

### Key Observations

* The diagram illustrates a question-answering process using an LLM.

* The LLM generates multiple semantically similar responses to the same question.

* Both responses shown are factually correct and relevant to the question.

### Interpretation

The diagram shows an LLM taking a user's question and producing several answers that differ in wording but convey the same information. The label "Randomly-Generated Semantically-Similar Responses" indicates that the responses are sampled stochastically, so repeated queries can yield different phrasings. In this example, both phrasings preserve the same fact: Buckingham Palace is in London.
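
The flow in the diagram (question, LLM, several sampled responses) can be sketched in a few lines of Python. This is a minimal illustration, not the method behind the figure: `sample_responses` is a hypothetical stand-in that picks at random from the two responses shown in the diagram instead of calling a real model, and `semantically_similar` is an assumed, deliberately crude proxy that compares sets of content words. A real system would sample from an actual LLM and use a stronger similarity measure.

```python
import random
import re

# The two responses shown in the diagram. A real LLM would generate these;
# here they are hard-coded so the sketch runs without any model.
PARAPHRASES = [
    "Buckingham Palace is located in London.",
    "London is home to Buckingham Palace.",
]

# Hypothetical stopword list, chosen only to make this toy example work.
STOPWORDS = {"is", "in", "to", "the", "located", "home"}


def sample_responses(question, n, seed=0):
    """Draw n responses from the stub 'model'.

    The question is ignored by this stub; a real implementation would
    send it to an LLM and sample with randomness enabled.
    """
    rng = random.Random(seed)
    return [rng.choice(PARAPHRASES) for _ in range(n)]


def content_words(text):
    """Lowercase the text and keep only the non-stopword tokens."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return frozenset(t for t in tokens if t not in STOPWORDS)


def semantically_similar(a, b):
    """Crude proxy: two sentences match if their content words are equal."""
    return content_words(a) == content_words(b)


question = "Where is Buckingham Palace in the United Kingdom?"
responses = sample_responses(question, n=5)
for r in responses:
    print(r)
```

Under this proxy, every pair of sampled responses compares as similar because both phrasings reduce to the same content words (`buckingham`, `palace`, `london`), while an unrelated sentence does not.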