## Flowchart: LLM Response Generation Process

### Overview

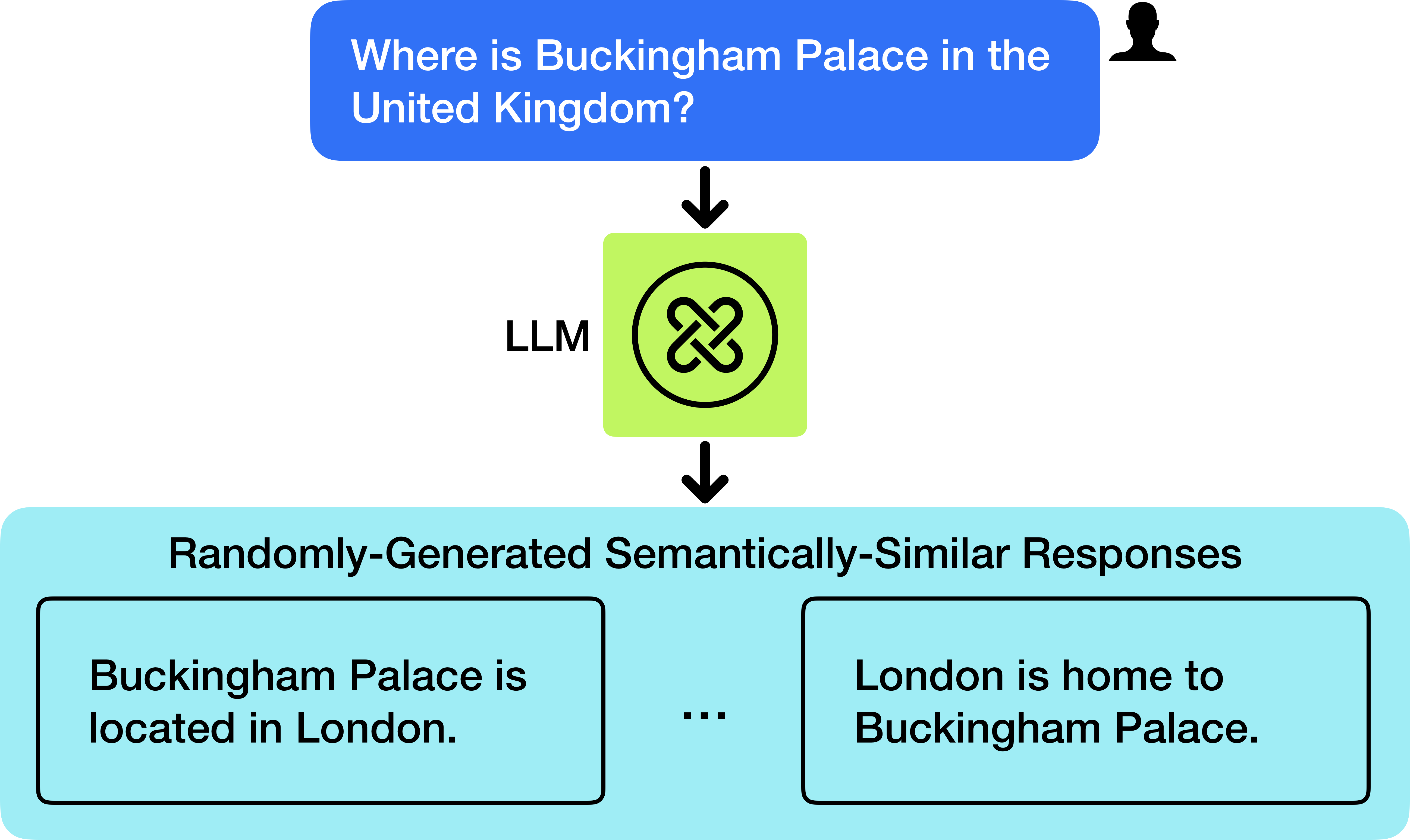

The image depicts a flowchart illustrating how a Large Language Model (LLM) processes a user query about Buckingham Palace's location and generates semantically similar responses. The diagram uses color-coded boxes, arrows, and symbols to represent the flow of information.

### Components/Axes

1. **Input Query**:

- A blue text box with white text: *"Where is Buckingham Palace in the United Kingdom?"*

- Positioned at the top-right, adjacent to a black silhouette icon (likely representing a user).

2. **LLM Processing Unit**:

- A green square with a black circular logo containing a knot-like symbol (resembling a Celtic knot or infinity loop).

- Labeled *"LLM"* in black text to the left of the square.

- Arrows connect the input query to the LLM box, indicating processing.

3. **Output Responses**:

- A light blue rectangular section titled *"Randomly-Generated Semantically-Similar Responses"* in black text.

- Contains two example responses in black text within bordered rectangles:

- *"Buckingham Palace is located in London."*

- *"London is home to Buckingham Palace."*

- Three ellipses (...) between the responses suggest variability in output.

### Detailed Analysis

- **Color Coding**:

- Blue: User input (question).

- Green: LLM processing unit (symbolizes computational logic).

- Light Blue: Output responses (semantic similarity).

- **Flow Direction**:

- Top-to-bottom vertical flow: Query → LLM → Responses.

- No branching or feedback loops; linear progression.

### Key Observations

1. The LLM generates responses that retain the core factual information (Buckingham Palace’s location in London) while varying phrasing.

2. The knot symbol in the LLM box may symbolize complexity, interconnectedness, or iterative processing.

3. Responses are factually identical but syntactically distinct, demonstrating semantic similarity.

### Interpretation

This diagram abstracts the LLM’s ability to rephrase information while preserving meaning. The use of a knot symbol for the LLM could imply:

- **Complexity**: The model’s intricate decision-making process.

- **Cyclical Nature**: Iterative refinement of responses.

- **Uniqueness**: Generating non-redundant outputs.

The flowchart emphasizes the LLM’s role in transforming rigid queries into flexible, contextually appropriate answers, highlighting its utility in natural language understanding and generation. No numerical data or trends are present, as the focus is on conceptual relationships rather than quantitative analysis.