TECHNICAL ASSET FINGERPRINT

1872c5edfb1886b0d542132c

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

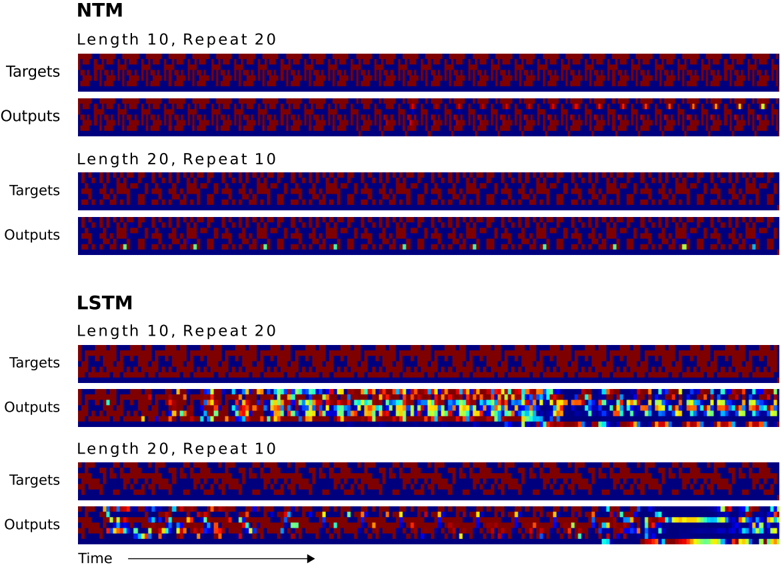

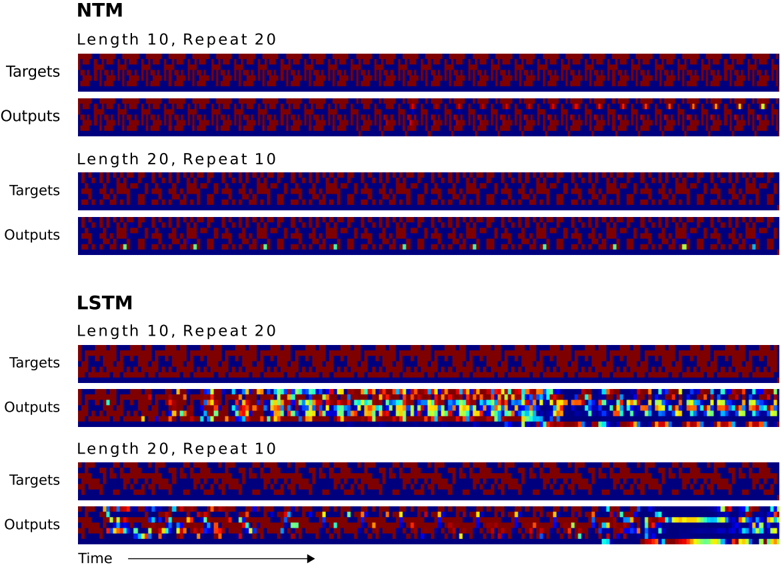

## Heatmap: NTM vs LSTM Performance

### Overview

The image presents a comparison of the performance of two neural network models, NTM (Neural Turing Machine) and LSTM (Long Short-Term Memory), on a sequence learning task. The performance is visualized as heatmaps, where each row represents either the target sequence or the model's output sequence. Two different sequence configurations are tested: "Length 10, Repeat 20" and "Length 20, Repeat 10". The heatmaps use color to indicate the activation level or the presence of a specific element in the sequence.

### Components/Axes

* **Models:** NTM (top) and LSTM (bottom)

* **Sequence Configurations:**

    * Length 10, Repeat 20

    * Length 20, Repeat 10

* **Rows:**

    * Targets: The desired output sequence.

    * Outputs: The sequence generated by the model.

* **X-axis:** Represents "Time" (indicated by an arrow pointing right).

* **Color Scale:** No explicit legend is shown. Blue likely represents low activation or the absence of an element, red likely represents high activation or presence, and intermediate colors such as cyan and yellow likely represent values in between.
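To make the two configurations concrete, here is a minimal sketch of how a target for a repeat-style sequence task could be built: one random binary pattern of a given length, tiled a given number of times along the time axis. The function name `make_repeat_target`, the vector width of 8 bits, and the seed are illustrative assumptions; none of them is read from the figure.

```python
import random


def make_repeat_target(length, repeats, width=8, seed=0):
    """Return a list of time steps; each step is a list of `width` bits.

    Assumption: the target is one random binary pattern of `length` steps
    repeated `repeats` times. The width of 8 is hypothetical.
    """
    rng = random.Random(seed)
    pattern = [[rng.randint(0, 1) for _ in range(width)] for _ in range(length)]
    # Tile the pattern along the time axis, copying each step.
    return [list(step) for _ in range(repeats) for step in pattern]


short_many = make_repeat_target(length=10, repeats=20)
long_few = make_repeat_target(length=20, repeats=10)
print(len(short_many), len(long_few))  # both configurations span 200 time steps
```

Under this assumption both configurations cover the same number of time steps, so any difference between the panels comes from the pattern length and the repetition count, not from the total sequence duration.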

### Detailed Analysis

**NTM:**

* **Length 10, Repeat 20:**

    * **Targets:** The target sequence shows a repeating pattern of length 10. The pattern consists of alternating blocks of red and blue.

    * **Outputs:** The output sequence closely matches the target sequence, with a similar repeating pattern of red and blue blocks. There are some minor deviations, indicated by slightly different color intensities.

* **Length 20, Repeat 10:**

    * **Targets:** The target sequence shows a repeating pattern of length 20, with alternating blocks of red and blue.

    * **Outputs:** The output sequence mostly matches the target sequence, but there are some errors. There are a few isolated pixels of a different color (likely green or yellow) scattered throughout the output.

**LSTM:**

* **Length 10, Repeat 20:**

    * **Targets:** The target sequence shows a repeating pattern of length 10, with alternating blocks of red and blue.

    * **Outputs:** The output sequence is significantly different from the target sequence. It shows a more complex pattern with a wider range of colors, including blue, cyan, yellow, and red. The repeating pattern is not as clear as in the target sequence.

* **Length 20, Repeat 10:**

    * **Targets:** The target sequence shows a repeating pattern of length 20, with alternating blocks of red and blue.

    * **Outputs:** The output sequence shows some resemblance to the target sequence, but it also contains significant errors. The repeating pattern is less clear, and there are regions with different colors, including blue, cyan, yellow, and red. Towards the end of the sequence, the output seems to align better with the target.

### Key Observations

* NTM performs better than LSTM on this sequence learning task, especially for the "Length 10, Repeat 20" configuration.

* LSTM struggles to accurately reproduce the target sequences, particularly for the "Length 10, Repeat 20" configuration.

* Both models show some errors in the "Length 20, Repeat 10" configuration, but NTM's errors are less pronounced.

* The color variations in the LSTM outputs suggest that many of its predicted values fall between the low and high extremes, meaning the model is uncertain about the correct value at those positions.

### Interpretation

The heatmaps provide a visual representation of the performance of NTM and LSTM on a sequence learning task. The results suggest that NTM is better suited than LSTM to reproducing simple repeating patterns. The LSTM's difficulty is unlikely to stem from architectural complexity, since an NTM adds an external memory to a neural network controller and is the more complex of the two; a more plausible explanation is that the LSTM has to hold the whole pattern in its recurrent state. The errors in both models' outputs for the "Length 20, Repeat 10" configuration suggest that longer sequences are more challenging to learn. The intermediate colors in the LSTM outputs indicate values between the two extremes, that is, less certain predictions, which could reflect a less stable internal state or a failure to capture the underlying structure of the sequence.

EXPERT: gemma-3-27b-it-free VERSION 2

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Heatmaps: NTM and LSTM Performance Comparison

### Overview

The image presents four heatmaps visualizing the performance of two neural network models, NTM (Neural Turing Machine) and LSTM (Long Short-Term Memory), under different conditions. Each heatmap represents a model's "Targets" (desired outputs) versus its "Outputs" (actual predictions) for a specific combination of sequence length and repetition count. The heatmaps use a color gradient to represent the intensity of activation or similarity, with darker colors (approaching black) indicating lower values and brighter colors (red/yellow) indicating higher values.

### Components/Axes

The image is divided into two main sections, one for NTM and one for LSTM. Each section contains two heatmaps, defined by:

* **Model:** NTM or LSTM

* **Length:** 10 or 20 (representing the sequence length)

* **Repeat:** 20 or 10 (representing the number of repetitions)

* **Rows:** "Targets" and "Outputs"

* **Horizontal Axis:** Represents "Time" as indicated by the arrow at the bottom-right. The axis is not numerically labeled, but represents progression through the sequence.

* **Color Scale:** A gradient from dark blue (low activation) to red/yellow (high activation).

### Detailed Analysis

**NTM - Length 10, Repeat 20:**

* **Targets:** The heatmap shows a relatively uniform distribution of dark blue and red pixels, indicating a consistent but not highly concentrated activation pattern. There are some areas of brighter red, but they are interspersed with darker blue.

* **Outputs:** Similar to "Targets", the "Outputs" heatmap displays a mix of dark blue and red pixels. There appears to be a slightly more structured pattern, with some vertical bands of red.

**NTM - Length 20, Repeat 10:**

* **Targets:** The "Targets" heatmap exhibits a more pronounced pattern of alternating dark blue and red bands. The red bands are more frequent and wider than in the previous heatmap.

* **Outputs:** The "Outputs" heatmap shows a similar pattern to "Targets", with alternating bands of dark blue and red. The red bands appear to be slightly less frequent and less defined than in the "Targets" heatmap.

**LSTM - Length 10, Repeat 20:**

* **Targets:** The "Targets" heatmap displays a very distinct and regular pattern of alternating dark blue and bright red pixels. This creates a clear, striped appearance.

* **Outputs:** The "Outputs" heatmap shows a more complex pattern. While there are still alternating bands, they are less regular and more fragmented than in the "Targets" heatmap. There are also areas of yellow and green, indicating intermediate activation levels.

**LSTM - Length 20, Repeat 10:**

* **Targets:** The "Targets" heatmap shows a similar pattern to the LSTM - Length 10, Repeat 20, with alternating dark blue and bright red bands.

* **Outputs:** The "Outputs" heatmap displays a highly dynamic pattern. The color distribution is more varied, with a gradient from blue to green to yellow, and finally to red. There is a clear progression of color from left to right, with the rightmost side being predominantly red.

### Key Observations

* **NTM:** The NTM heatmaps show a more diffuse activation pattern compared to the LSTM heatmaps. The patterns are less structured and less consistent.

* **LSTM:** The LSTM heatmaps exhibit a more distinct and regular activation pattern, particularly in the "Targets" heatmaps. The "Outputs" heatmaps show a more complex and dynamic pattern, with a clear progression of activation over time.

* **Length & Repeat:** Increasing the sequence length (from 10 to 20) appears to result in more pronounced patterns in both models. Decreasing the repetition count (from 20 to 10) seems to affect the complexity of the output patterns.

* **Targets vs. Outputs:** In general, the "Outputs" heatmaps are more complex and less structured than the "Targets" heatmaps, indicating that the models are not perfectly replicating the desired outputs.

### Interpretation

The heatmaps are read here as suggesting that the LSTM model reproduces the target patterns better than the NTM model, especially for the shorter sequence length (10). This reading should be treated with caution: the "Targets" rows are ground truth rather than model output, so their clear, striped patterns reflect the task and not the LSTM's ability to capture the structure of the data. The fragmented bands and the intermediate yellow and green values in the LSTM "Outputs" heatmaps indicate deviations from the targets rather than evidence of successful learning.

The NTM model, on the other hand, exhibits a more diffuse activation pattern, indicating that it may be struggling to capture the underlying structure of the data. The less structured patterns in the NTM "Outputs" heatmaps suggest that the model is generating less coherent and less predictable responses.

The changes in patterns with different sequence lengths and repetition counts suggest that the models' performance is sensitive to these parameters. The progression of color in the LSTM - Length 20, Repeat 10 "Outputs" heatmap may indicate that the model is learning to adapt its responses over time.

The image provides a visual comparison of each model's outputs against its targets, offering insights into their strengths and weaknesses. On this reading LSTM appears more effective at this task, but both models exhibit complex behavior that warrants further investigation.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Heatmap Comparison: NTM vs. LSTM Sequence Replication Performance

### Overview

The image displays a comparative visualization of sequence replication performance between two neural network architectures: a Neural Turing Machine (NTM) and a Long Short-Term Memory (LSTM) network. The comparison is presented as a series of horizontal heatmaps, showing the model's "Outputs" against the ground truth "Targets" for two different sequence tasks. The visualization demonstrates a clear performance disparity between the two models.

### Components/Axes

* **Main Sections:** The image is divided into two primary sections stacked vertically, labeled at the top left:

    1. **NTM** (Top section)

    2. **LSTM** (Bottom section)

* **Task Conditions:** Each model section contains two sub-conditions, labeled above their respective heatmap pairs:

    * `Length 10, Repeat 20`

    * `Length 20, Repeat 10`

* **Row Labels:** For each condition, two rows are presented, labeled on the left:

    * `Targets` (The ground truth sequence to be replicated)

    * `Outputs` (The sequence generated by the model)

* **X-Axis:** Represents **Time** or sequence steps, indicated by a right-pointing arrow labeled `Time` at the very bottom of the image.

* **Color Scale (Implicit Legend):** The heatmaps use a color scale to represent values.

    * **Deep Blue:** Represents low values (likely 0).

    * **Dark Red:** Represents high values (likely 1).

    * **Yellow/Green/Cyan:** Appear only in the LSTM `Outputs` rows, indicating intermediate values where the model's prediction deviates from the target.
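The implicit legend can be read as a rule of thumb: values near 0 render blue, values near 1 render red, and anything in between shows up as cyan, green, or yellow. The sketch below expresses that reading, assuming outputs lie in the range 0 to 1. The cut-offs of 0.1 and 0.9 and the names `color_band` and `intermediate_fraction` are hypothetical, since the figure gives no numeric scale.

```python
def color_band(value, low=0.1, high=0.9):
    """Map a value in [0, 1] to a coarse color band.

    The cut-offs are illustrative assumptions, not taken from the figure.
    """
    if value <= low:
        return "blue"
    if value >= high:
        return "red"
    return "intermediate"  # would render as cyan, green, or yellow


def intermediate_fraction(row, low=0.1, high=0.9):
    """Fraction of values in `row` that are neither clearly low nor clearly high."""
    bands = [color_band(v, low, high) for v in row]
    return bands.count("intermediate") / len(bands)


clean = [0.02, 0.97, 0.99, 0.01]  # resembles a crisp red/blue row
noisy = [0.02, 0.55, 0.40, 0.97]  # resembles a row with yellow/green pixels
print(intermediate_fraction(clean), intermediate_fraction(noisy))  # 0.0 0.5
```

A row with a fraction near zero would look like the clean red and blue rows described above, while a larger fraction corresponds to the visibly mixed colors reported for the LSTM `Outputs` rows.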

### Detailed Analysis

**1. NTM Section (Top Half):**

* **Condition: Length 10, Repeat 20**

    * **Targets:** Shows a repeating pattern of a short sequence (length 10). The pattern consists of distinct vertical bands of red (high) and blue (low) pixels, repeated approximately 20 times across the time axis.

    * **Outputs:** Visually nearly identical to the `Targets` row. The pattern of red and blue bands is replicated with high fidelity across the entire sequence. No visible yellow/green error pixels are present.

* **Condition: Length 20, Repeat 10**

    * **Targets:** Shows a repeating pattern of a longer sequence (length 20). The pattern is more complex than the first condition but still consists of clear red/blue bands, repeated approximately 10 times.

    * **Outputs:** Again, visually nearly identical to the `Targets` row. The longer, more complex pattern is replicated accurately across all repetitions.

**2. LSTM Section (Bottom Half):**

* **Condition: Length 10, Repeat 20**

    * **Targets:** Identical pattern to the NTM's `Length 10, Repeat 20` target.

    * **Outputs:** Shows significant degradation. The initial repetitions (left side) are somewhat recognizable but noisy. As time progresses to the right, the output becomes increasingly chaotic, filled with yellow, green, and cyan pixels, indicating severe prediction errors. The original repeating pattern is largely lost in the latter half.

* **Condition: Length 20, Repeat 10**

    * **Targets:** Identical pattern to the NTM's `Length 20, Repeat 10` target.

    * **Outputs:** Performance is poor from the outset. The output is a noisy, fragmented version of the target pattern. A prominent horizontal yellow bar appears in the latter third of the sequence, indicating a sustained, significant error. The model fails to maintain the correct sequence structure.

### Key Observations

1. **Near-Perfect vs. Degraded Performance:** The NTM demonstrates near-perfect replication for both sequence lengths and repetition counts. The LSTM's performance degrades severely, especially as the sequence progresses.

2. **Error Propagation in LSTM:** The LSTM's errors are not random; they show a clear trend of degradation over time. The `Length 10, Repeat 20` output starts relatively well and deteriorates. The `Length 20, Repeat 10` output is poor throughout.

3. **Task Difficulty:** The `Length 20, Repeat 10` task appears more challenging for the LSTM than the `Length 10, Repeat 20` task, as its failure is more immediate and severe.

4. **Visual Signature of Error:** The introduction of yellow, green, and cyan colors in the LSTM outputs is a direct visual marker of prediction error, contrasting sharply with the clean red/blue of the targets and NTM outputs.
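The degradation over time described in these observations could be quantified by counting mismatched bits in each repetition of the pattern. The following is a minimal sketch on toy data, assuming outputs are values in the range 0 to 1 that are thresholded at 0.5. The function name `errors_per_repetition` and the toy numbers are hypothetical and are not measurements from the figure.

```python
def errors_per_repetition(targets, outputs, length, threshold=0.5):
    """Count mismatched bits in each repetition block of `length` time steps.

    `targets` holds 0/1 bits and `outputs` holds values in [0, 1];
    both are lists of time steps, each step a list of equal width.
    """
    counts = []
    for start in range(0, len(targets), length):
        block_errors = 0
        target_block = targets[start:start + length]
        output_block = outputs[start:start + length]
        for t_step, o_step in zip(target_block, output_block):
            for t_bit, o_val in zip(t_step, o_step):
                predicted = 1 if o_val >= threshold else 0
                block_errors += int(predicted != t_bit)
        counts.append(block_errors)
    return counts


# Toy data: a pattern of 2 steps x 2 bits, repeated 3 times.
targets = [[1, 0], [0, 1]] * 3
outputs = [
    [0.9, 0.1], [0.1, 0.9],  # first repetition: all bits correct
    [0.9, 0.1], [0.6, 0.9],  # second repetition: one wrong bit
    [0.4, 0.6], [0.6, 0.4],  # third repetition: all four bits wrong
]
print(errors_per_repetition(targets, outputs, length=2))  # [0, 1, 4]
```

A count that rises from left to right would match the drift described for the LSTM's `Length 10, Repeat 20` output, while counts that stay near zero would match the NTM rows.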

### Interpretation

This visualization is consistent with the core claim of the Neural Turing Machine paper: that augmenting a neural network with an external memory and attention mechanisms gives it a greater capacity for algorithmic tasks involving precise sequence storage and recall.

* **What the data suggests:** The NTM behaves like a reliable storage system. It can "write" a sequence to its memory and "read" it back almost perfectly, regardless of the sequence length (within the tested bounds) or the number of repetitions required. The LSTM, a standard recurrent network, struggles with this precise, long-term replication task. Its internal memory appears insufficient or too "lossy" to retain and reproduce the sequence over many time steps, leading to error accumulation.

* **Relationship between elements:** The side-by-side comparison uses identical target sequences under the same conditions, which makes the model architecture the main visible variable. The stark contrast in output quality is therefore most plausibly attributable to the NTM's memory architecture versus the LSTM's purely recurrent architecture.

* **Notable implications:** This is not merely a chart of "better" vs. "worse" performance. It illustrates a qualitative difference in capability. The NTM demonstrates **algorithmic generalization**: it appears to have learned the *rule* of copying, while the LSTM appears to be performing a much less robust form of sequence prediction that fails under the stress of exact, long-term replication. The yellow regions in the LSTM output mark where it fails to maintain the algorithmic state.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmap Comparison: NTM vs LSTM Model Performance

### Overview

The image presents a comparative analysis of two neural network architectures (NTM and LSTM) using heatmaps to visualize their performance across different sequence configurations. The data is organized into four primary sections:

1. **NTM (Neural Turing Machine)**

    - Length 10, Repeat 20

    - Length 20, Repeat 10

2. **LSTM (Long Short-Term Memory)**

    - Length 10, Repeat 20

    - Length 20, Repeat 10

Each section compares **Targets** (desired output patterns) and **Outputs** (model predictions) across time steps, with color gradients representing value intensities.

---

### Components/Axes

- **X-axis**: Labeled "Time" with a rightward arrow, indicating temporal progression.

- **Y-axis**: Categorized into "Targets" (desired outputs) and "Outputs" (model predictions).

- **Legend**: Implied through color coding (no explicit legend box). Colors include:

    - **Red**: High intensity/value

    - **Blue**: Low intensity/value

    - **Yellow/Cyan**: Intermediate values (transition zones).

---

### Detailed Analysis

#### NTM Section

1. **Length 10, Repeat 20**

    - **Targets**: Uniform red-blue striped pattern, suggesting periodic input.

    - **Outputs**: Nearly identical to Targets, with minor yellow/cyan deviations at the end, indicating high accuracy.

2. **Length 20, Repeat 10**

    - **Targets**: More fragmented red-blue pattern, reflecting increased sequence complexity.

    - **Outputs**: Slightly noisier than Targets, with scattered yellow/cyan pixels, showing reduced precision in longer sequences.

#### LSTM Section

1. **Length 10, Repeat 20**

    - **Targets**: Similar striped pattern to NTM but with irregular red patches.

    - **Outputs**: Highly variable, with red/yellow/cyan clusters, suggesting overfitting or instability.

2. **Length 20, Repeat 10**

    - **Targets**: Dense red-blue noise, indicating complex input patterns.

    - **Outputs**: Gradient from red (left) to blue (right), with yellow/cyan streaks, implying gradual degradation in performance over time.

---

### Key Observations

1. **NTM Consistency**: Maintains near-perfect output matching Targets in shorter sequences (Length 10). Performance degrades slightly in longer sequences but remains stable.

2. **LSTM Variability**: Outputs exhibit significant color dispersion, especially in longer sequences, suggesting difficulty in capturing long-term dependencies.

3. **Color Gradient Trends**:

    - NTM Outputs: Minimal deviation from Targets (red/blue dominance).

    - LSTM Outputs: Increasing blue dominance (lower values) in longer sequences, indicating potential underperformance.

---

### Interpretation

The data demonstrates that **NTM outperforms LSTM** in tasks requiring precise sequence repetition, particularly in longer configurations. The LSTM’s outputs show a clear trend of degradation (red → blue gradient) in the "Length 20, Repeat 10" case, likely due to vanishing gradients or memory limitations. NTM’s architecture, designed for external memory, better preserves input patterns over time.

**Notable Anomalies**:

- LSTM’s "Length 10, Repeat 20" Outputs have unexpected red patches, possibly indicating transient overfitting.

- NTM’s "Length 20, Repeat 10" Outputs retain Target structure despite increased complexity, highlighting robustness.

This analysis underscores the importance of architectural design in handling sequence length and repetition tasks, with NTM’s external memory mechanism providing a critical advantage over LSTM’s internal state management.
