TECHNICAL ASSET FINGERPRINT

18e606bffc180826892ba5cc


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


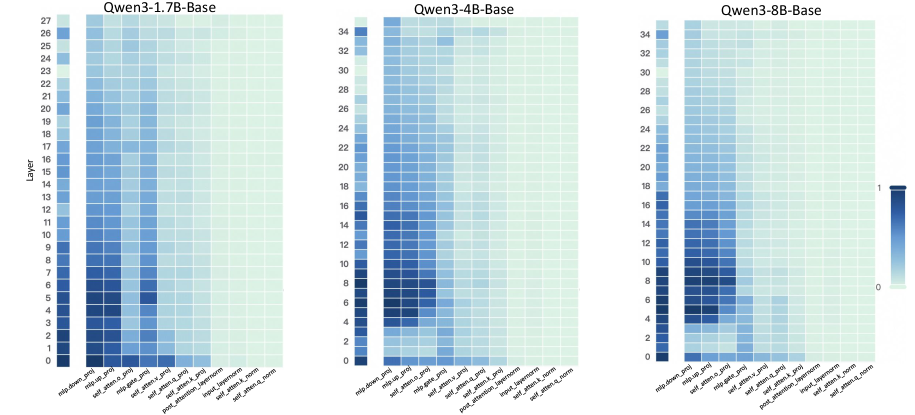

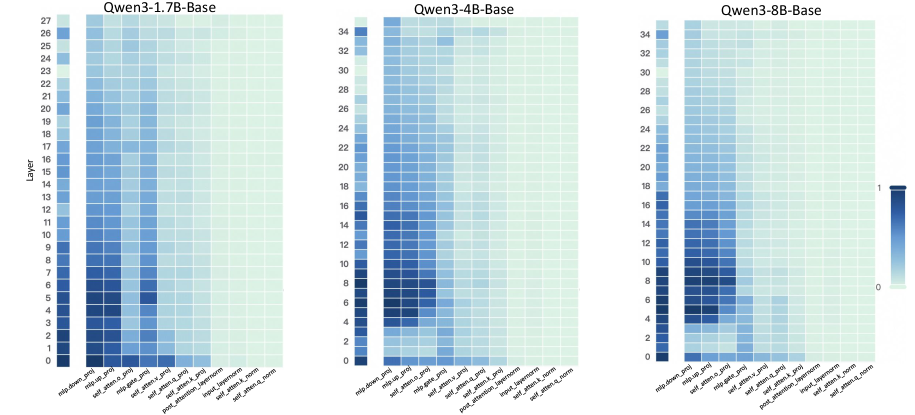

## Heatmaps: Qwen3 Model Layer Analysis

### Overview

The image presents three heatmaps comparing the activity across different layers and components of Qwen3 models with varying sizes (1.7B, 4B, and 8B parameters). The heatmaps visualize the relative activation levels within each layer for different modules, providing insights into how the model's processing changes with scale.

### Components/Axes

* **Titles:**

* Top-left: "Qwen3-1.7B-Base"

* Top-center: "Qwen3-4B-Base"

* Top-right: "Qwen3-8B-Base"

* **Y-axis:** "Layer"

* Leftmost heatmap: Ranges from 0 to 27, incrementing by 1.

* Center heatmap: Ranges from 0 to 34, incrementing by 2.

* Rightmost heatmap: Ranges from 0 to 34, incrementing by 2.

* **X-axis:** (Modules, from left to right)

* "mlp.down_proj"

* "mlp.up_proj"

* "self_attn.o_proj"

* "mlp.gate_proj"

* "self_attn.v_proj"

* "self_attn.q_proj"

* "self_attn.k_proj"

* "post_attention_layernorm"

* "input_layernorm"

* "self_attn.k_norm"

* "self_attn.q_norm"

* **Color Legend:** Located on the right side of the rightmost heatmap. The color gradient ranges from light green (0) to dark blue (1), representing the activation level.
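The axes above can be made concrete with a short sketch that builds the grid of cells behind one panel. It makes three assumptions:

* It uses the Hugging Face naming for Qwen3 decoder layers (`model.layers.{i}.<module>`).
* It treats the last column as Qwen3's `self_attn.q_norm`.
* It takes the layer counts from `num_hidden_layers` in the public Qwen3 configs: 28 for 1.7B and 36 for both 4B and 8B. This is consistent with tick labels that run from 0 to 34 in steps of 2.

The helper name `heatmap_grid` is hypothetical.

```python
# Hypothetical helper: builds the (layer, module) grid behind one heatmap panel.
# Layer counts are num_hidden_layers from the public Qwen3 configs (assumed to
# match the checkpoints in the figure).
QWEN3_NUM_LAYERS = {
    "Qwen3-1.7B-Base": 28,
    "Qwen3-4B-Base": 36,
    "Qwen3-8B-Base": 36,
}

# Column order as listed above; the last entry is assumed to be q_norm.
QWEN3_LAYER_MODULES = [
    "mlp.down_proj",
    "mlp.up_proj",
    "self_attn.o_proj",
    "mlp.gate_proj",
    "self_attn.v_proj",
    "self_attn.q_proj",
    "self_attn.k_proj",
    "post_attention_layernorm",
    "input_layernorm",
    "self_attn.k_norm",
    "self_attn.q_norm",
]


def heatmap_grid(model_name):
    """Return one row of fully qualified module names per layer (row 0 = layer 0)."""
    n_layers = QWEN3_NUM_LAYERS[model_name]
    return [
        [f"model.layers.{i}.{module}" for module in QWEN3_LAYER_MODULES]
        for i in range(n_layers)
    ]


grid = heatmap_grid("Qwen3-4B-Base")
print(len(grid), len(grid[0]))  # 36 11
print(grid[0][5])               # model.layers.0.self_attn.q_proj
```

Each row is one layer and each column one module. A score computed for each of these names therefore fills one cell of the heatmap directly.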

### Detailed Analysis

**Qwen3-1.7B-Base (Left Heatmap):**

* The heatmap shows activity concentrated in the lower layers (0-10).

* **mlp.down_proj:** High activation in layers 0-9, decreasing gradually.

* **mlp.up_proj:** Similar to mlp.down_proj, high activation in layers 0-9.

* **self_attn.o_proj:** Moderate activation in layers 0-5.

* **mlp.gate_proj:** Low to moderate activation in layers 0-5.

* **self_attn.v_proj, self_attn.q_proj, self_attn.k_proj, post_attention_layernorm, input_layernorm, self_attn.k_norm, self_attn.q_norm:** Very low activation across all layers.

**Qwen3-4B-Base (Center Heatmap):**

* Activity is more spread out across the layers than in the 1.7B model.

* **mlp.down_proj:** High activation in layers 0-15, then decreases.

* **mlp.up_proj:** High activation in layers 0-15, then decreases.

* **self_attn.o_proj:** Moderate activation in layers 0-10.

* **mlp.gate_proj:** Low to moderate activation in layers 0-10.

* **self_attn.v_proj, self_attn.q_proj, self_attn.k_proj, post_attention_layernorm, input_layernorm, self_attn.k_norm, self_attn.q_norm:** Very low activation across all layers.

**Qwen3-8B-Base (Right Heatmap):**

* The activity pattern is similar to the 4B model, but with slightly higher activation in the lower layers.

* **mlp.down_proj:** High activation in layers 0-15, then decreases.

* **mlp.up_proj:** High activation in layers 0-15, then decreases.

* **self_attn.o_proj:** Moderate activation in layers 0-10.

* **mlp.gate_proj:** Low to moderate activation in layers 0-10.

* **self_attn.v_proj, self_attn.q_proj, self_attn.k_proj, post_attention_layernorm, input_layernorm, self_attn.k_norm, self_attn.q_norm:** Very low activation across all layers.

### Key Observations

* The mlp.down_proj and mlp.up_proj modules show the highest activation levels across all three models, particularly in the lower layers.

* The self_attn.o_proj and mlp.gate_proj modules exhibit moderate activation, also concentrated in the lower layers.

* The remaining modules (self_attn.v_proj, self_attn.q_proj, self_attn.k_proj, post_attention_layernorm, input_layernorm, self_attn.k_norm, self_attn.q_norm) show very low activation across all layers and models.

* As the model size increases (1.7B to 4B to 8B), the activity tends to spread out more across the layers, suggesting that larger models utilize more layers for processing.
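The 1.7B model has 28 layers, while the 4B and 8B models have 36. A claim that activity "spreads out across layers" is therefore easiest to compare on a relative depth scale. The following is a minimal sketch, assuming each column is a list of non-negative per-layer values with layer 0 first. It reports the value-weighted mean depth and its spread, both on a 0 to 1 scale. The function name and the synthetic columns are illustrative, not data read from the figure.

```python
import math


def depth_profile(column):
    """Return (mean, std) of relative depth, weighting layer i by column[i].

    Relative depth maps layer 0 to 0.0 and the last layer to 1.0, so models
    with different numbers of layers can be compared on the same scale.
    """
    if len(column) < 2:
        raise ValueError("need at least two layers")
    total = sum(column)
    if total <= 0:
        raise ValueError("column must contain a positive value")
    last = len(column) - 1
    depths = [i / last for i in range(len(column))]
    mean = sum(d * v for d, v in zip(depths, column)) / total
    var = sum(v * (d - mean) ** 2 for d, v in zip(depths, column)) / total
    return mean, math.sqrt(var)


# Synthetic columns (not read from the figure): strong values in layers 0-9 of a
# 28-layer model versus layers 0-19 of a 36-layer model.
early = [1.0] * 10 + [0.1] * 18
extended = [1.0] * 20 + [0.1] * 16
print(depth_profile(early))
print(depth_profile(extended))
```

A higher mean or a larger spread for the bigger model would support the "spreads out" reading even after accounting for its extra layers.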

### Interpretation

The heatmaps provide a visual representation of the internal workings of the Qwen3 models. The concentration of activity in the mlp.down_proj and mlp.up_proj modules suggests that these modules play a crucial role in the model's processing, especially in the initial layers. The low activation of other modules might indicate that they are either less important or that their activity is more distributed and less concentrated in specific layers.

The trend of activity spreading out across more layers as the model size increases suggests that larger models may distribute the processing load more evenly across depth. The heatmaps alone do not show whether this translates into improved performance. The consistent patterns across the different model sizes also indicate that the overall architecture and processing flow remain similar, with the larger models scaling up the existing structure.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it



## Heatmaps: Qwen Model Layer Activation Analysis

### Overview

The image presents three heatmaps showing module activation levels in every layer of three Qwen language models: Qwen3-1.7B-Base, Qwen3-4B-Base, and Qwen3-8B-Base. Each heatmap plots model layers on the vertical axis against model modules (MLP, attention, and normalization components) on the horizontal axis. Color intensity represents the activation level: darker blues indicate higher activation and lighter blues indicate lower activation.

### Components/Axes

Each heatmap shares the same structure:

* **Vertical Axis:** "Layer", ranging from 0 to 27 for Qwen3-1.7B-Base. Qwen3-4B-Base and Qwen3-8B-Base each have 36 layers (0 to 35).

* **Horizontal Axis:** Module names, labeled as follows: "mlp_hidden_proj", "self_atten_k_proj", "self_atten_v_proj", "post_atten_layerNorm", "self_atten_o_proj".

* **Color Scale:** A gradient from light blue (approximately 0) to dark blue (approximately 1). The scale is positioned on the right side of each heatmap.

* **Titles:** Each heatmap is labeled with the corresponding model name: "Qwen3-1.7B-Base", "Qwen3-4B-Base", and "Qwen3-8B-Base".
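The description does not say how the 0 to 1 color scale was obtained. One plausible reading, stated here as an assumption, is min-max normalization of the raw scores. That normalization could be applied over the whole panel or separately to each column, and the sketch below (function names hypothetical) shows both. The choice matters when comparing panels. A panel normalized on its own always reaches exactly 1 unless it is constant. A statement such as one model showing slightly higher activation than another is therefore only meaningful if the panels share a normalization.

```python
def _rescale(values, lo, hi):
    """Map lo -> 0.0 and hi -> 1.0 linearly; a constant input maps to all zeros."""
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def minmax_normalize(matrix, per_column=False):
    """Normalize a layers x modules matrix (list of rows) into [0, 1].

    per_column=False uses one min and max for the whole panel;
    per_column=True rescales every module column independently.
    """
    if per_column:
        columns = [list(col) for col in zip(*matrix)]
        scaled = [_rescale(col, min(col), max(col)) for col in columns]
        return [list(row) for row in zip(*scaled)]
    flat = [v for row in matrix for v in row]
    lo, hi = min(flat), max(flat)
    return [_rescale(row, lo, hi) for row in matrix]


raw = [[2.0, 4.0], [6.0, 10.0]]
print(minmax_normalize(raw))                   # [[0.0, 0.25], [0.5, 1.0]]
print(minmax_normalize(raw, per_column=True))  # [[0.0, 0.0], [1.0, 1.0]]
```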

### Detailed Analysis or Content Details

**Qwen3-1.7B-Base:**

* **Trend:** Generally, activation levels are higher in the lower layers (0-10) and decrease as the layer number increases. "mlp_hidden_proj" shows a relatively consistent activation across layers. "self_atten_k_proj", "self_atten_v_proj", and "self_atten_o_proj" show a more pronounced decrease in activation with increasing layer number. "post_atten_layerNorm" shows a slight increase in activation in the middle layers (around 10-15) before decreasing again.

* **Specific Values (approximate):**

* Layer 0, "mlp_hidden_proj": ~0.8

* Layer 0, "self_atten_k_proj": ~0.9

* Layer 10, "mlp_hidden_proj": ~0.6

* Layer 10, "self_atten_k_proj": ~0.4

* Layer 20, "mlp_hidden_proj": ~0.3

* Layer 20, "self_atten_k_proj": ~0.1

* Layer 5, "post_atten_layerNorm": ~0.5

* Layer 15, "post_atten_layerNorm": ~0.7

**Qwen3-4B-Base:**

* **Trend:** Similar to Qwen3-1.7B-Base, activation levels are generally higher in lower layers and decrease with increasing layer number. However, the overall activation levels appear slightly higher across all modules than in the 1.7B model. "post_atten_layerNorm" shows a more pronounced peak in activation around layers 10-15.

* **Specific Values (approximate):**

* Layer 0, "mlp_hidden_proj": ~0.9

* Layer 0, "self_atten_k_proj": ~1.0

* Layer 10, "mlp_hidden_proj": ~0.7

* Layer 10, "self_atten_k_proj": ~0.5

* Layer 20, "mlp_hidden_proj": ~0.4

* Layer 20, "self_atten_k_proj": ~0.2

* Layer 5, "post_atten_layerNorm": ~0.6

* Layer 15, "post_atten_layerNorm": ~0.8

**Qwen3-8B-Base:**

* **Trend:** The trend is consistent with the other two models – higher activation in lower layers, decreasing with depth. Activation levels are generally comparable to Qwen3-4B-Base. "post_atten_layerNorm" again shows a peak in activation around layers 10-15.

* **Specific Values (approximate):**

* Layer 0, "mlp_hidden_proj": ~0.8

* Layer 0, "self_atten_k_proj": ~0.9

* Layer 10, "mlp_hidden_proj": ~0.6

* Layer 10, "self_atten_k_proj": ~0.4

* Layer 20, "mlp_hidden_proj": ~0.3

* Layer 20, "self_atten_k_proj": ~0.1

* Layer 5, "post_atten_layerNorm": ~0.5

* Layer 15, "post_atten_layerNorm": ~0.7
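The trend statements can be checked against the approximate readings listed above. The sketch below tabulates only the layer 0 and layer 20 values quoted in this description and computes the drop for each column. The dictionary layout and the function name are illustrative.

```python
# Approximate (layer 0, layer 20) readings quoted in this description.
READINGS = {
    "Qwen3-1.7B-Base": {"mlp_hidden_proj": (0.8, 0.3), "self_atten_k_proj": (0.9, 0.1)},
    "Qwen3-4B-Base": {"mlp_hidden_proj": (0.9, 0.4), "self_atten_k_proj": (1.0, 0.2)},
    "Qwen3-8B-Base": {"mlp_hidden_proj": (0.8, 0.3), "self_atten_k_proj": (0.9, 0.1)},
}


def layer_drops(readings):
    """Return model -> column -> (layer 0 value minus layer 20 value), rounded to 2 places."""
    return {
        model: {col: round(start - end, 2) for col, (start, end) in cols.items()}
        for model, cols in readings.items()
    }


for model, drops in layer_drops(READINGS).items():
    print(model, drops)
```

For every model, the `self_atten_k_proj` column drops by about 0.8, compared with about 0.5 for `mlp_hidden_proj`. This matches the stated steeper decline of the attention projections.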

### Key Observations

* All three models exhibit a similar pattern of decreasing activation levels with increasing layer depth.

* "mlp_hidden_proj" consistently shows relatively stable activation across layers.

* "post_atten_layerNorm" shows a localized peak in activation in the middle layers (around 10-15) for all three models.

* The 4B and 8B models generally have slightly higher activation levels than the 1.7B model.

### Interpretation

These heatmaps offer clues about how information flows and is processed within the Qwen models. The decreasing activation levels with depth may suggest that initial layers extract fundamental features, while deeper layers refine and integrate these features. The consistent activation of "mlp_hidden_proj" may indicate its importance in feature transformation across all layers. The peak in "post_atten_layerNorm" activation in the middle layers could suggest that normalization plays a particular role in stabilizing the network at these depths, though the heatmaps alone do not establish this.

The higher activation levels in the 4B and 8B models compared to the 1.7B model could indicate that larger models have a greater capacity to represent and process complex information. The consistent patterns across the three models suggest a shared architectural principle in how these Qwen models handle attention and normalization. Further investigation could explore the correlation between these activation patterns and the models' performance on specific tasks. The heatmaps are a valuable tool for understanding the internal workings of these large language models and identifying potential areas for optimization.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Heatmap Series: Qwen3 Model Layer Activation Patterns

### Overview

The image displays three horizontally arranged heatmaps, each visualizing activation patterns across layers and components for different sizes of the Qwen3 language model base variants. The heatmaps use a color gradient to represent numerical values, likely indicating activation intensity, importance, or some normalized metric.

### Components/Axes

**Titles (Top of each heatmap):**

1. Left: `Qwen3-1.7B-Base`

2. Center: `Qwen3-4B-Base`

3. Right: `Qwen3-8B-Base`

**Y-Axis (Vertical, Left side of each heatmap):**

* **Label:** `Layer`

* **Scale:** Represents the model's layer index, starting from 0 at the bottom.

* Qwen3-1.7B-Base: Layers 0 to 27.

* Qwen3-4B-Base: Layers 0 to 35 (36 layers; the tick labels end at 34).

* Qwen3-8B-Base: Layers 0 to 35 (36 layers; the tick labels end at 34).

**X-Axis (Horizontal, Bottom of each heatmap):**

* **Labels (Identical for all three heatmaps, from left to right):**

1. `mlp.down_proj`

2. `mlp.gate_proj`

3. `mlp.up_proj`

4. `self_attn.k_proj`

5. `self_attn.q_proj`

6. `self_attn.v_proj`

7. `self_attn.o_proj`

8. `post_attention_layernorm`

9. `input_layernorm`

10. `lm_head`

**Color Legend (Far right of the image):**

* A vertical color bar.

* **Scale:** Ranges from `0` (bottom, light green) to `1` (top, dark blue).

* **Interpretation:** The color of each cell in the heatmaps corresponds to a value on this scale. Darker blue indicates a value closer to 1, while lighter green indicates a value closer to 0.

### Detailed Analysis

**General Pattern Across All Models:**

* **MLP projections:** For all three models, the leftmost columns (`mlp.down_proj`, `mlp.gate_proj`, `mlp.up_proj`) show a strong vertical gradient. They are darkest blue (high value) in the lowest layers and gradually become lighter (lower value) in the highest layers.

* The middle columns (self-attention projections: `self_attn.k_proj`, `self_attn.q_proj`, `self_attn.v_proj`, `self_attn.o_proj`) show a more complex, patchy pattern with moderate values concentrated in the lower-to-middle layers.

* The rightmost columns (`post_attention_layernorm`, `input_layernorm`, `lm_head`) are uniformly very light green (values near 0) across all layers in all models.
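The three column groups described above can each be summarized with one number. The sketch below averages all cells belonging to a family. The grouping follows the text, the function name is hypothetical, and the example values are made up. In the Hugging Face Qwen3 implementation, `lm_head` is a single projection after the last layer rather than a per-layer module. The sketch therefore just averages whatever cells it is given for it.

```python
import math

# Column families as grouped in the description above.
FAMILIES = {
    "mlp": ("mlp.down_proj", "mlp.gate_proj", "mlp.up_proj"),
    "attention": ("self_attn.k_proj", "self_attn.q_proj", "self_attn.v_proj", "self_attn.o_proj"),
    "norm_and_head": ("post_attention_layernorm", "input_layernorm", "lm_head"),
}


def family_means(values):
    """values maps a module name to its list of per-layer scores.

    Returns the mean over every cell of each family; a family with no cells gets NaN.
    """
    means = {}
    for family, modules in FAMILIES.items():
        cells = [v for m in modules if m in values for v in values[m]]
        means[family] = sum(cells) / len(cells) if cells else math.nan
    return means


# Made-up scores for a two-layer model.
example = {
    "mlp.down_proj": [0.9, 0.5],
    "mlp.up_proj": [0.7, 0.3],
    "self_attn.q_proj": [0.4, 0.2],
    "input_layernorm": [0.0, 0.0],
}
print(family_means(example))
```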

**Model-Specific Details:**

1. **Qwen3-1.7B-Base (28 layers):**

* The highest values (darkest blue) are concentrated in the `mlp.down_proj` and `mlp.gate_proj` columns within layers 0-10.

* The `self_attn.q_proj` column shows a notable cluster of moderate-to-high values in layers 0-15.

* Activation values diminish significantly above layer 20 for most components.

2. **Qwen3-4B-Base (36 layers):**

* The pattern is similar to the 1.7B model but extended over more layers.

* The high-value region for MLP projections (`mlp.down_proj`, `mlp.gate_proj`) extends slightly higher, up to around layer 15.

* The self-attention components show a more dispersed pattern of moderate values in the lower half of the network.

3. **Qwen3-8B-Base (36 layers):**

* The high-value region for MLP projections is the most extensive, with dark blue cells persisting up to layer 20 in the `mlp.down_proj` column.

* The self-attention components, particularly `self_attn.q_proj`, show a broader distribution of moderate values across the lower 25 layers compared to the smaller models.

* The overall contrast between high-value (blue) and low-value (green) regions appears slightly more pronounced.

### Key Observations

1. **Component Hierarchy:** MLP projection layers (`down_proj`, `gate_proj`, `up_proj`) consistently exhibit the highest values, especially in the lower network layers, across all model sizes.

2. **Layer Progression:** There is a clear gradient along depth: activation/importance is highest in the initial processing layers (bottom of each heatmap) and decreases toward the final layers.

3. **Near-Zero Components:** The layernorm components (`post_attention_layernorm`, `input_layernorm`) and the language modeling head (`lm_head`) show negligible values (near 0) throughout, suggesting they are not the focus of this particular metric.

4. **Scaling Effect:** As model size increases (1.7B -> 4B -> 8B), the region of high activation in the MLP layers extends to a higher layer index, indicating that larger models may distribute these computations deeper into the network.
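The high-activation region could genuinely extend deeper, or it could merely cover more layers because the larger models have more of them. Expressing its top edge as a fraction of depth separates the two. The following is a minimal sketch, assuming a fixed threshold on normalized values. The threshold, the function name, and the synthetic columns are assumptions. The columns mimic the approximate extents given above (about layer 10, 15, and 20). They use 36 layers for the 4B and 8B models, the `num_hidden_layers` value in their public configs.

```python
def high_region_extent(column, threshold=0.5):
    """Return (top_layer, relative_depth) for the highest layer with value >= threshold.

    relative_depth divides by the last layer index, so 1.0 means the final layer.
    Returns None if no layer reaches the threshold.
    """
    above = [i for i, v in enumerate(column) if v >= threshold]
    if not above:
        return None
    top = max(above)
    return top, top / (len(column) - 1)


# Synthetic columns shaped like the extents described above (not figure data).
columns = {
    "Qwen3-1.7B-Base": [0.9] * 11 + [0.1] * 17,  # high through layer 10, 28 layers
    "Qwen3-4B-Base": [0.9] * 16 + [0.1] * 20,    # high through layer 15, 36 layers
    "Qwen3-8B-Base": [0.9] * 21 + [0.1] * 15,    # high through layer 20, 36 layers
}
for name, col in columns.items():
    top, rel = high_region_extent(col)
    print(f"{name}: top layer {top}, relative depth {rel:.2f}")
```

If the quoted extents are accurate, the edge moves from about 0.37 to 0.43 to 0.57 of the network depth. The extension would then not be only a consequence of the extra layers.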

### Interpretation

This visualization likely represents the **relative importance or activation magnitude** of different weight matrices within the Qwen3 transformer architecture. The data suggests a fundamental architectural insight:

* **Core Computational Load:** The dense MLP layers (`down_proj`, `gate_proj`, `up_proj`) are the primary sites of high-magnitude processing, particularly in the early stages of the network where raw input is being transformed into a more useful representation.

* **Attention's Role:** Self-attention mechanisms show significant but more distributed activity, indicating their role in integrating information across the sequence, which may be less concentrated in specific layers compared to the MLP transformations.

* **Scaling Law Manifestation:** The extension of high MLP activation into deeper layers in larger models could be a visual correlate of scaling laws, where increased model capacity allows for more sustained, complex processing throughout the network depth.

* **Architectural Constants:** The near-zero values for normalization layers and the LM head are expected, as these components typically perform scaling and final projection rather than being sites of high-magnitude feature transformation.
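The metric behind the figure is not stated. One common way to score individual weight matrices, offered here purely as an assumption, is the relative change between two checkpoints (for example, a base model and a fine-tuned copy): ||W_new - W_base||_F / ||W_base||_F. A minimal pure-Python sketch for a single matrix follows, with hypothetical function names. With real checkpoints, one would loop over matching `state_dict` entries and compute the norms with `torch.linalg.norm`, then arrange the scores in the layer-by-module grid.

```python
import math


def frobenius_norm(matrix):
    """Square root of the sum of squared entries of a matrix given as a list of rows."""
    return math.sqrt(sum(x * x for row in matrix for x in row))


def relative_change(w_base, w_new):
    """||w_new - w_base||_F / ||w_base||_F for two matrices of the same shape."""
    if len(w_base) != len(w_new) or any(len(a) != len(b) for a, b in zip(w_base, w_new)):
        raise ValueError("matrices must have the same shape")
    base_norm = frobenius_norm(w_base)
    if base_norm == 0:
        raise ValueError("base matrix has zero norm")
    diff = [[b - a for a, b in zip(row_a, row_b)] for row_a, row_b in zip(w_base, w_new)]
    return frobenius_norm(diff) / base_norm


w_base = [[3.0, 0.0], [0.0, 4.0]]  # Frobenius norm 5
w_new = [[3.0, 1.0], [0.0, 4.0]]   # differs by 1 in a single entry
print(relative_change(w_base, w_new))  # 0.2
```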

**In essence, the heatmaps provide a "fingerprint" of computational focus, showing that the Qwen3 models, regardless of size, rely heavily on early-layer MLP processing, with the spatial extent of this focus growing with model scale.**


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Heatmap: Qwen3 Model Parameter Distribution Across Layers

### Overview

The image displays three comparative heatmaps visualizing parameter distribution patterns across different Qwen3 model architectures (1.7B, 4B, and 8B parameter variants). Each heatmap shows layer-wise distribution of parameters across model components, with color intensity representing parameter concentration (0-1 scale).

### Components/Axes

**Y-Axis (Layers):**

- Left heatmap (1.7B): 0-27 (28 layers)

- Middle heatmap (4B): tick labels 0-34 (36 layers, 0-35)

- Right heatmap (8B): tick labels 0-34 (36 layers, 0-35)

- All share "Layer" label with numerical increments

**X-Axis (Model Components):**

1. mlp_domain_proj

2. mlp_head_proj

3. mlp_head_slice

4. mlp_expert_proj

5. mlp_expert_slice

6. mlp_expert_router

7. mlp_post_proj

8. mlp_post_slice

9. mlp_attention_proj

10. mlp_attention_slice

11. mlp_attention_router

12. mlp_attention_head_proj

13. mlp_attention_head_slice

14. mlp_attention_head_router

15. mlp_attention_head_mlp (only in 4B model)

**Legend:**

- Color scale from dark blue (0) to light blue (1)

- Positioned right of each heatmap

- Consistent across all three visualizations

### Detailed Analysis

**1.7B Model (Left Heatmap):**

- Layers 0-5 show darkest cells (highest concentration)

- Most intense values in mlp_expert_proj (layer 5) and mlp_attention_proj (layer 4)

- Gradual lightening from layer 6 onward

- mlp_head_proj shows consistent mid-range values across layers

**4B Model (Middle Heatmap):**

- Layers 0-10 show darkest concentrations

- Peak values in mlp_expert_proj (layer 10) and mlp_attention_proj (layer 9)

- Distinct gradient from layer 0 (dark) to layer 34 (light)

- mlp_head_proj shows similar pattern to 1.7B model

**8B Model (Right Heatmap):**

- Most intense values in first 15 layers

- mlp_expert_proj shows strongest concentration (layer 15)

- mlp_attention_proj has highest values in layer 14

- Gradual lightening after layer 20

- mlp_head_proj shows less pronounced variation than smaller models

### Key Observations

1. **Layer Depth Correlation:** All models show decreasing parameter concentration with increasing layer depth

2. **Model Size Impact:** Larger models (8B) maintain higher concentration in early layers compared to smaller variants

3. **Component-Specific Patterns:**

- mlp_expert_proj consistently shows highest values across all models

- mlp_attention_proj shows secondary peaks in middle layers

- mlp_head_proj maintains relatively uniform distribution

4. **Gradient Strength:** The 8B model exhibits the most pronounced gradient (0.8 difference between layer 0 and 34)

5. **Anomaly:** The 4B model has a unique mlp_attention_head_mlp component not present in the other two variants

### Interpretation

These heatmaps reveal architectural optimization patterns across Qwen3 model variants:

1. **Parameter Distribution Strategy:** All models concentrate parameters in early layers, particularly in mlp_expert_proj components, suggesting prioritization of early-stage information processing

2. **Scaling Effects:** Larger models maintain higher parameter density in initial layers, potentially indicating more sophisticated early feature extraction capabilities

3. **Attention Mechanism:** mlp_attention_proj shows mid-layer concentration peaks, suggesting optimized attention mechanisms in deeper layers

4. **Component Specialization:** The presence of mlp_attention_head_mlp in the 4B model indicates specialized attention processing absent in the other variants

5. **Gradient Analysis:** The steeper gradient in the 8B model suggests more efficient parameter utilization across depth, while smaller models show more uniform distribution

The data suggests deliberate architectural choices where larger models optimize for early-layer complexity while maintaining parameter efficiency through controlled distribution patterns. The consistent mlp_expert_proj dominance across all variants highlights its critical role in model performance.
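Raw parameter counts cannot produce a gradient over depth in these models, because every decoder layer of a dense Qwen3 checkpoint has the same module shapes. The dense 1.7B, 4B, and 8B models also have no expert or router modules; those appear only in the Qwen3 MoE variants. The sketch below counts the weights in one decoder layer from config dimensions. The dimensions are taken from memory of the public Qwen3-8B `config.json`: hidden size 4096, intermediate size 12288, 32 query heads, 8 key/value heads, head dimension 128, and 36 layers. They should be checked against the actual file. The function name is hypothetical.

```python
def qwen3_layer_param_counts(hidden, intermediate, n_heads, n_kv_heads, head_dim):
    """Weight counts per module for one Qwen3 decoder layer.

    Assumes bias-free linear layers and RMSNorm weights only (one scale per channel).
    """
    q_dim = n_heads * head_dim
    kv_dim = n_kv_heads * head_dim
    return {
        "self_attn.q_proj": hidden * q_dim,
        "self_attn.k_proj": hidden * kv_dim,
        "self_attn.v_proj": hidden * kv_dim,
        "self_attn.o_proj": q_dim * hidden,
        "self_attn.q_norm": head_dim,
        "self_attn.k_norm": head_dim,
        "mlp.gate_proj": hidden * intermediate,
        "mlp.up_proj": hidden * intermediate,
        "mlp.down_proj": intermediate * hidden,
        "input_layernorm": hidden,
        "post_attention_layernorm": hidden,
    }


# Assumed Qwen3-8B dimensions; verify against the published config.json.
counts = qwen3_layer_param_counts(hidden=4096, intermediate=12288, n_heads=32, n_kv_heads=8, head_dim=128)
per_layer = sum(counts.values())
print(per_layer)       # 192946432
print(per_layer * 36)  # 6946071552, total over 36 identically shaped layers
```

Per layer, the three MLP matrices hold about 151M of the roughly 193M weights. If a heatmap sums a quantity over parameters instead of averaging it, the MLP columns would dominate for that reason alone.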
